The Great GPU Shake-Up: How Cost-Effective Hardware is Fueling the Next AI Boom

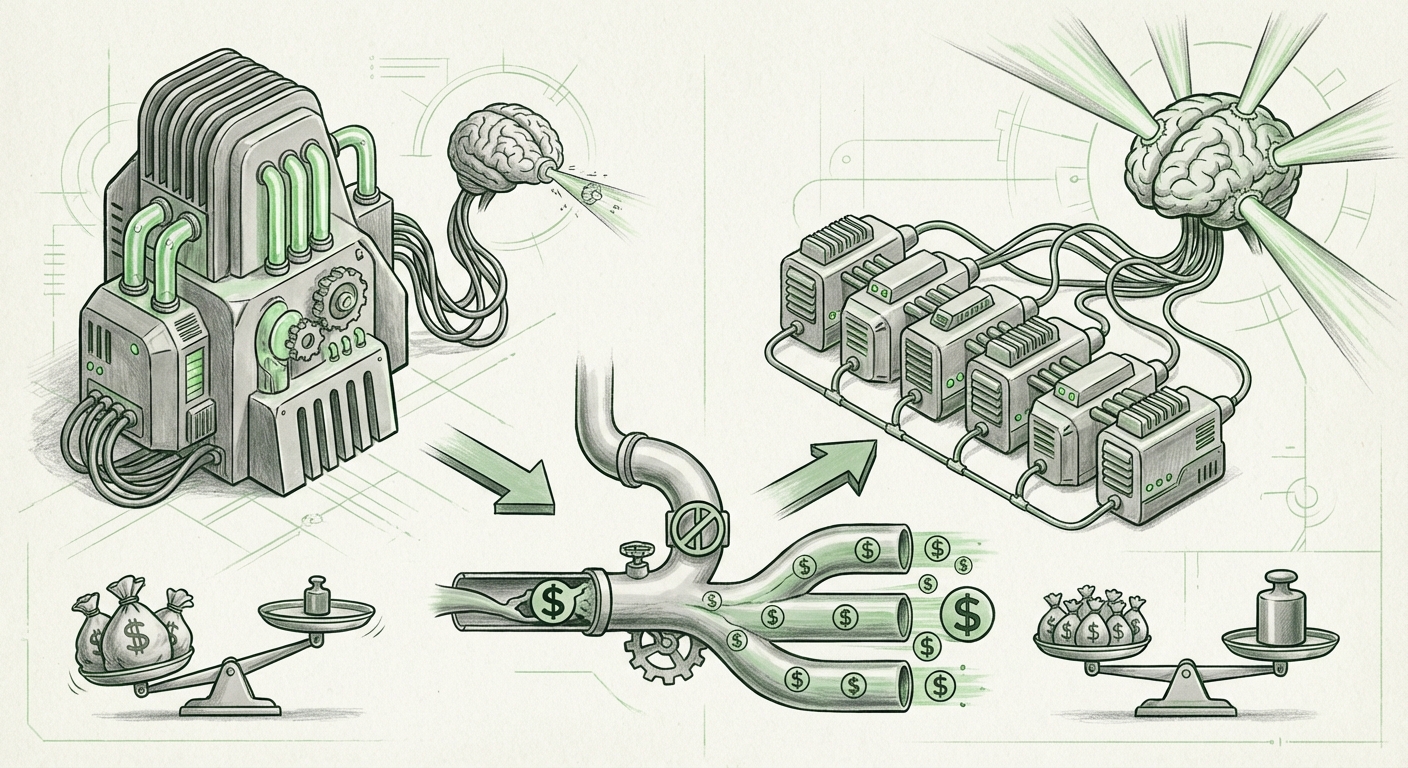

The landscape of Artificial Intelligence infrastructure is undergoing a seismic shift. For years, the narrative around training and deploying massive AI models, particularly Large Language Models (LLMs), has been dominated by a single vendor, NVIDIA. However, the economics of scaling AI, where costs can quickly spiral out of control, are forcing organizations to look elsewhere. The recent focus on enterprise-ready accelerators like the AMD MI355X is not just a blip; it signals a fundamental re-evaluation of compute strategy across the industry.

As AI moves from the research lab to mainstream business application, the primary constraint is rapidly shifting from *can we build it* to *can we afford to run it*. This article synthesizes several converging trends—cost optimization, competitive hardware dynamics, and the critical importance of inference—to paint a picture of what the next five years of AI deployment will look like.

The FinOps Era: Taming the Exploding Cloud Bill

Building an LLM might require millions in upfront capital for training, but serving that model to millions of daily customer queries can cost far more over its lifetime, because inference spending recurs for as long as the model stays in production. This realization has pushed **FinOps (Financial Operations)** to the forefront of every AI strategy meeting. It’s no longer enough to just buy the fastest chip; teams must prove the return on investment for every clock cycle.
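To see why serving costs overtake training costs, a back-of-the-envelope sketch helps. The Python below estimates the first month in which cumulative inference spend exceeds a one-time training bill, assuming a fixed serving fleet running around the clock at a flat hourly price. The function name and every figure in the example are hypothetical placeholders, not measured prices.

```python
import math


def months_until_inference_exceeds_training(
    training_cost_usd: float,
    gpu_hourly_usd: float,
    serving_gpus: int,
    hours_per_month: float = 730.0,  # 8,760 hours per year / 12
) -> int:
    """First whole month in which cumulative serving spend exceeds the training bill.

    Assumes a fixed serving fleet running 24/7 at a flat hourly price.
    """
    monthly_serving_usd = gpu_hourly_usd * serving_gpus * hours_per_month
    if monthly_serving_usd <= 0:
        raise ValueError("monthly serving cost must be positive")
    # Cumulative spend after m months is m * monthly_serving_usd; return the
    # smallest integer m for which that strictly exceeds the training cost.
    return math.floor(training_cost_usd / monthly_serving_usd) + 1


# Hypothetical: $5M training run, 500 accelerators at $3.00/hour for serving.
print(months_until_inference_exceeds_training(5_000_000, 3.00, 500))
```

Under these made-up numbers, serving costs about $1.1M a month and passes the training bill in month 5; every month after that is spend that cheaper inference hardware can reduce.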

The move toward cheaper cloud GPUs, exemplified by the discussion around the AMD MI355X, is a direct response to this pressure. Enterprises are realizing that defaulting to the most expensive, readily available hardware means building a business model that is inherently unsustainable for high-volume tasks.

What this means for the future: We are entering an age of compute budgeting where infrastructure choice is viewed through a financial lens first. Organizations will adopt multi-cloud and multi-hardware strategies, using specialized, cost-optimized silicon (like the MI355X for inference) for steady-state workloads, while reserving premium hardware for critical, short-burst training runs.

For the CTO and the FinOps practitioner, this means prioritizing agility. They need platforms that allow easy swapping between different hardware providers (like AWS, Azure, GCP, or specialized GPU clouds) based on real-time pricing and availability. This hardware flexibility enables a disciplined approach to cost management, ensuring that scaling up AI doesn't mean unchecked expenditure.
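A minimal sketch of price-aware placement, assuming you already have a feed of current offers (from provider pricing APIs or an internal catalog; here they are hard-coded). The `GpuOffer` type, provider names, accelerator names, and prices are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class GpuOffer:
    provider: str
    accelerator: str
    memory_gb: int      # on-device memory per accelerator
    hourly_usd: float   # current price per accelerator-hour
    available: bool = True


def cheapest_offer(offers: Iterable[GpuOffer], min_memory_gb: int) -> Optional[GpuOffer]:
    """Return the cheapest available offer with enough memory, or None if nothing fits."""
    candidates = [o for o in offers if o.available and o.memory_gb >= min_memory_gb]
    return min(candidates, key=lambda o: o.hourly_usd, default=None)


offers = [
    GpuOffer("cloud-a", "accel-x", memory_gb=80, hourly_usd=4.00),
    GpuOffer("cloud-b", "accel-y", memory_gb=192, hourly_usd=3.50),
    GpuOffer("cloud-c", "accel-y", memory_gb=192, hourly_usd=2.90, available=False),
]
choice = cheapest_offer(offers, min_memory_gb=150)
print(choice)
```

Filtering on availability and memory before sorting by price keeps the selector from picking a cheap instance that cannot actually hold the model or cannot be provisioned right now.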

The Accelerators Face Off: Beyond Vendor Lock-In

For nearly a decade, the AI ecosystem has been deeply entwined with NVIDIA’s CUDA platform. This created a powerful, high-performance standard, but it also raised a substantial barrier to entry for competitors and gave NVIDIA considerable control over pricing. The adoption of alternatives, such as AMD’s offerings, directly challenges this status quo.

Recent analyses tracking Cloud GPU market share confirm that while NVIDIA still commands the majority, the appetite for viable alternatives is growing rapidly. This is particularly true for companies wary of supply chain risks or willing to absorb moderate integration challenges for significant cost benefits.

The MI355X specifically appeals because it offers competitive specifications tailored for large models, notably its large high-bandwidth memory (HBM) capacity, which is crucial for holding the weights of multi-billion-parameter models on fewer devices. That competition forces the market leader to innovate on price and features even faster.
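Why memory capacity matters can be shown with simple arithmetic: model weights alone occupy roughly parameter count × bytes per parameter. The sketch below covers weights only (activations, KV cache, and runtime overhead come on top), and the dtype table is a simplification of real storage formats.

```python
# Approximate storage per parameter for common weight formats.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Memory for model weights alone, in GB (1 GB = 1e9 bytes).

    Excludes activations, KV cache, and framework overhead, which add to the total.
    """
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


for dtype in ("fp16", "int8", "int4"):
    print(f"70B params @ {dtype}: {weight_memory_gb(70e9, dtype):.0f} GB")
```

A 70-billion-parameter model in 16-bit precision needs about 140 GB just for its weights, more than a single 80 GB accelerator can hold, so it must be sharded across devices or quantized; more on-device memory means fewer devices per model replica.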

The Trade-Off: Performance vs. Ecosystem Maturity. The critical question for infrastructure architects revolves around the software stack. Is the price-performance gain worth the potential headache of compatibility issues? This is where validating the software ecosystem becomes key.

The Inference Economy: Where the Real Money Is Spent

If training is the *R&D phase* of an AI application, inference is the product delivery phase. During development, a model is exercised by a relatively small team of researchers and engineers; once deployed, it might be queried thousands of times per second by end-users around the world.

As sources tracking the shifting focus of AI compute indicate, total operational expenditure (OpEx) for a widely deployed model is typically dominated by inference costs. This is where hardware efficiency translates most directly into profit margins.

The MI355X guide emphasizes its role in inference, suggesting it offers a superior cost-per-query ratio compared to bleeding-edge training chips. This strategy—using a slightly less powerful, but significantly cheaper, chip for the heavy lifting of serving user requests—is the cornerstone of scalable AI business models.
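The cost-per-query argument comes down to arithmetic: divide the hourly price by the tokens served per hour. The sketch compares two hypothetical accelerators; their prices and throughputs are invented to illustrate the shape of the trade-off, not benchmark results for the MI355X or any other product.

```python
def cost_per_million_tokens(hourly_usd: float, tokens_per_second: float) -> float:
    """Serving cost per 1M generated tokens for one accelerator at steady throughput."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_usd / tokens_per_hour * 1_000_000


# Hypothetical figures: a faster premium chip vs. a slower, cheaper one.
premium = cost_per_million_tokens(hourly_usd=6.00, tokens_per_second=3000)
budget = cost_per_million_tokens(hourly_usd=3.50, tokens_per_second=2200)
print(f"premium: ${premium:.3f}/M tokens, budget: ${budget:.3f}/M tokens")
```

With these numbers the cheaper chip delivers about 27% less throughput yet costs roughly 20% less per million tokens, which is the trade-off the inference-first strategy relies on. In practice, the throughput figure should come from your own serving benchmark at realistic batch sizes.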

Practical Implications for MLOps: MLOps teams must now engineer not just for model accuracy, but for "cost-per-token." This requires deep integration with hardware capabilities, potentially involving techniques like:

- Quantization: Shrinking the precision of the model weights (e.g., from 16-bit to 8-bit) to use less memory and run faster on efficient hardware.

- Batching: Grouping multiple user requests together so the GPU can process them efficiently in parallel.
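Both techniques can be illustrated in a few lines of plain Python. The sketch below shows symmetric per-tensor int8 quantization (one scale factor for the whole tensor) and simple fixed-size batching. Production stacks use more refined variants, such as per-channel scales and continuous batching, so treat the function names and logic as an illustration of the idea rather than a serving implementation.

```python
def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization.

    Maps floats to integers in [-127, 127] with a single scale factor, so each
    weight needs 1 byte instead of 2 (fp16/bf16) or 4 (fp32).
    """
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q, scale):
    """Recover approximate float weights; rounding error is at most about scale / 2."""
    return [v * scale for v in q]


def make_batches(requests, max_batch_size):
    """Static batching: group queued requests into chunks the GPU processes together."""
    return [requests[i:i + max_batch_size] for i in range(0, len(requests), max_batch_size)]


weights = [0.82, -1.27, 0.05, 0.4]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
batches = make_batches([f"request-{i}" for i in range(10)], max_batch_size=4)
print(q, restored, len(batches))
```

The single scale keeps the example short; per-channel scales generally preserve accuracy better when weight magnitudes vary widely across rows.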

The hardware choice dictates the limits of these optimizations. A card with ample, fast memory—a hallmark of modern enterprise accelerators—allows these efficiency techniques to be pushed further.
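One concrete way memory caps batching is the key-value (KV) cache that transformer inference keeps for every active sequence. A common estimate is 2 × layers × KV heads × head dimension × bytes per element, per token (one key and one value vector per layer and head). The sketch uses that estimate with a hypothetical 70B-class configuration (80 layers, 8 KV heads, head dimension 128, 16-bit cache), int8 weights of about 70 GB, and hypothetical device sizes; the optional `overhead_gb` stands in for activations and fragmentation, which real servers must also reserve.

```python
def kv_cache_bytes_per_token(num_layers: int, num_kv_heads: int, head_dim: int, bytes_per_elem: int) -> int:
    # Factor of 2: each layer stores one key and one value vector per KV head.
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_elem


def max_concurrent_sequences(device_mem_gb: float, weights_gb: float, seq_len: int,
                             kv_bytes_per_token: int, overhead_gb: float = 0.0) -> int:
    """How many full-length sequences fit in the memory left after weights and overhead."""
    free_bytes = (device_mem_gb - weights_gb - overhead_gb) * 1e9
    if free_bytes <= 0:
        return 0
    return int(free_bytes // (seq_len * kv_bytes_per_token))


# Hypothetical 70B-class config with a 16-bit KV cache.
kv = kv_cache_bytes_per_token(num_layers=80, num_kv_heads=8, head_dim=128, bytes_per_elem=2)
for device_gb in (80, 192):
    n = max_concurrent_sequences(device_gb, weights_gb=70, seq_len=4096, kv_bytes_per_token=kv)
    print(f"{device_gb} GB device: ~{n} concurrent 4k-token sequences")
```

Under these assumptions the 80 GB device fits about 7 concurrent 4,096-token sequences while the 192 GB device fits about 90, so extra memory translates directly into a larger effective batch and a lower cost per token.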

Cracking the CUDA Wall: The Rise of ROCm

No discussion about adopting non-NVIDIA hardware is complete without addressing the elephant in the room: software compatibility. CUDA, NVIDIA’s programming model, is deeply embedded in nearly every major AI framework and research codebase. Migrating workloads requires faith in the competition’s software stack.

ROCm is AMD’s open-source software stack for GPU computing and its answer to CUDA, which is why research into ROCm adoption challenges and successes is so crucial. As these ecosystems mature, with new versions (like ROCm 6.0) promising better PyTorch integration and stability, the risk premium associated with adopting AMD hardware decreases.

Actionable Insight for Developers: Deep learning engineers must proactively benchmark their critical workloads (not just standard benchmarks, but their own production models) on ROCm-enabled systems. While community support is growing, developers need to confirm that their specific tensor operations and custom kernels are well supported to avoid manual porting efforts.
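A minimal harness for those workload-specific benchmarks might look like the sketch below. It relies on documented PyTorch behavior: ROCm builds reuse the `torch.cuda` API (so `torch.cuda.is_available()` is also true on AMD GPUs), and `torch.version.hip` is set only on ROCm builds. The function names are hypothetical, and the callable you pass in should be your own production model step, not a synthetic kernel.

```python
import statistics
import time
from typing import Callable, Optional


def detect_backend() -> str:
    """Best-effort label for the GPU stack the installed PyTorch targets."""
    try:
        import torch
    except ImportError:
        return "no-torch"
    if not torch.cuda.is_available():
        return "cpu"
    # ROCm builds expose AMD GPUs through torch.cuda; torch.version.hip is only set there.
    return "rocm" if getattr(torch.version, "hip", None) else "cuda"


def benchmark(
    fn: Callable[[], object],
    warmup: int = 3,
    iters: int = 20,
    sync: Optional[Callable[[], None]] = None,
) -> float:
    """Median wall-clock seconds per call of fn, after warm-up runs.

    Pass sync (e.g. torch.cuda.synchronize) when fn launches GPU work, so the
    timer stops only after queued kernels have finished.
    """
    for _ in range(warmup):
        fn()
    if sync is not None:
        sync()
    timings = []
    for _ in range(iters):
        start = time.perf_counter()
        fn()
        if sync is not None:
            sync()
        timings.append(time.perf_counter() - start)
    return statistics.median(timings)


print(detect_backend(), benchmark(lambda: sum(range(100_000))))
```

For a GPU run you would pass `sync=torch.cuda.synchronize`, because GPU kernels launch asynchronously and the timer would otherwise stop before the work finishes; the same call works unchanged on ROCm builds, which keeps the harness identical across vendors.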

The long-term implication is a healthier, more competitive market. If ROCm achieves true "CUDA-parity" in major cloud deployments, enterprises gain crucial negotiating leverage and hardware choice, democratizing access to cutting-edge AI capabilities.

What This Means for the Future of AI and How It Will Be Used

The current emphasis on cost-effective compute signals a maturation of the AI industry. We are moving beyond the "hype cycle" stage where raw capability was the only metric, into a stage where efficiency and deployment economics determine winners and losers.

Future Implications for Business

- Democratization of Advanced AI: When the cost to serve an LLM drops significantly, smaller companies, startups, and even sophisticated open-source projects can deploy capabilities that were once restricted to tech giants. This lowers the barrier to entry for personalized AI services.

- Ubiquitous, Low-Latency AI: Cheaper inference means AI can be embedded into real-time applications where latency and cost were previously prohibitive—think instantaneous translation, rapid content moderation at massive scale, or deeply personalized interactive virtual assistants.

- Infrastructure Diversification: Organizations will adopt "AI Hardware Agnostic" strategies. Cloud contracts will demand flexibility, and specialized, smaller hardware providers (like those focused solely on inference ASICs or specialized GPUs) will find viable niches, creating a mosaic of compute options rather than a single monolith.

Societal Shift: Moving Beyond Scale

From a societal view, this cost efficiency is vital. If the cost of AI only drops slowly, access to powerful general intelligence remains concentrated. If hardware competition drives costs down faster, we see a faster diffusion of transformative technology into everyday tools, education, and public services. The MI355X discussion is a small data point reflecting a large systemic push toward making powerful AI affordable for everyone who wants to build with it.

Actionable Insights for Technology Leaders

To capitalize on this shift, technology leaders must act decisively:

- Mandate Multi-Vendor Benchmarking: Stop accepting performance reports based solely on one vendor’s reference architecture. Require MLOps teams to run performance-per-dollar comparisons for both training *and* inference across AMD, NVIDIA, and Intel offerings.

- Invest in Software Abstraction: Prioritize investment in abstraction layers (like containerization, ONNX Runtime integration, or advanced orchestration tools) that minimize the code changes required to switch between ROCm and CUDA environments. This reduces the risk of adopting new, cheaper hardware.

- Re-evaluate the Inference Pipeline: Perform an immediate audit of your current inference architecture. Identify the top 20% of queries driving 80% of your compute bill and prioritize deploying those specific models onto cost-optimized hardware like the MI355X as soon as vendor support is validated.
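The multi-vendor benchmarking mandate is easiest to enforce when results are normalized to work per dollar and ranked separately for training and inference. The sketch below assumes you have already measured throughput on your own jobs; the `BenchResult` type, system names, prices, and throughputs are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class BenchResult:
    name: str
    hourly_usd: float           # price per accelerator-hour you would actually pay
    train_samples_per_s: float  # measured on your own training job
    infer_tokens_per_s: float   # measured on your own serving workload


def rank_per_dollar(results: List[BenchResult]) -> Tuple[List[str], List[str]]:
    """Rank systems by work per dollar, separately for training and inference."""

    def train_per_dollar(r: BenchResult) -> float:
        return r.train_samples_per_s * 3600 / r.hourly_usd  # samples per dollar

    def infer_per_dollar(r: BenchResult) -> float:
        return r.infer_tokens_per_s * 3600 / r.hourly_usd  # tokens per dollar

    train_rank = [r.name for r in sorted(results, key=train_per_dollar, reverse=True)]
    infer_rank = [r.name for r in sorted(results, key=infer_per_dollar, reverse=True)]
    return train_rank, infer_rank


results = [
    BenchResult("system-a", hourly_usd=6.00, train_samples_per_s=1200, infer_tokens_per_s=3000),
    BenchResult("system-b", hourly_usd=3.50, train_samples_per_s=600, infer_tokens_per_s=2200),
]
train_rank, infer_rank = rank_per_dollar(results)
print("training:", train_rank, "inference:", infer_rank)
```

With these invented numbers, system A wins on training throughput per dollar while system B wins on inference, which is why the comparison should be run for both workloads rather than inferred from one.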
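The 80/20 inference audit can start as a simple greedy pass over per-model spend: sort models by cost and keep taking the most expensive ones until the chosen share of the bill is covered. Per-model cost data is assumed to come from your own billing or tagging pipeline; the function name and the figures below are hypothetical.

```python
from typing import Dict, List


def pareto_models(cost_by_model: Dict[str, float], share: float = 0.8) -> List[str]:
    """Fewest models (taken in descending cost order) that together reach `share` of spend."""
    total = sum(cost_by_model.values())
    selected: List[str] = []
    running = 0.0
    for model, cost in sorted(cost_by_model.items(), key=lambda kv: kv[1], reverse=True):
        if running >= share * total:
            break
        selected.append(model)
        running += cost
    return selected


# Hypothetical monthly inference spend per model, e.g. from tagged billing exports.
monthly_cost = {"chat": 52_000, "search": 30_000, "summarize": 10_000, "tagging": 5_000, "misc": 3_000}
print(pareto_models(monthly_cost))
```

Taking models in descending cost order yields the smallest set that reaches the target share, so those are the first candidates to migrate once hardware support is validated.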

The narrative is changing: high performance is no longer enough. In the new era of scaled AI, efficient performance—performance measured against the dollar—is the ultimate competitive advantage. The emergence of strong contenders like AMD forces the market to optimize, ensuring that the next great wave of AI innovation will be built on a foundation that is both powerful and economically sustainable.