The Great AI Pivot: Why OpenAI & Anthropic Are Now Consultants as Agents Fail in the Real World

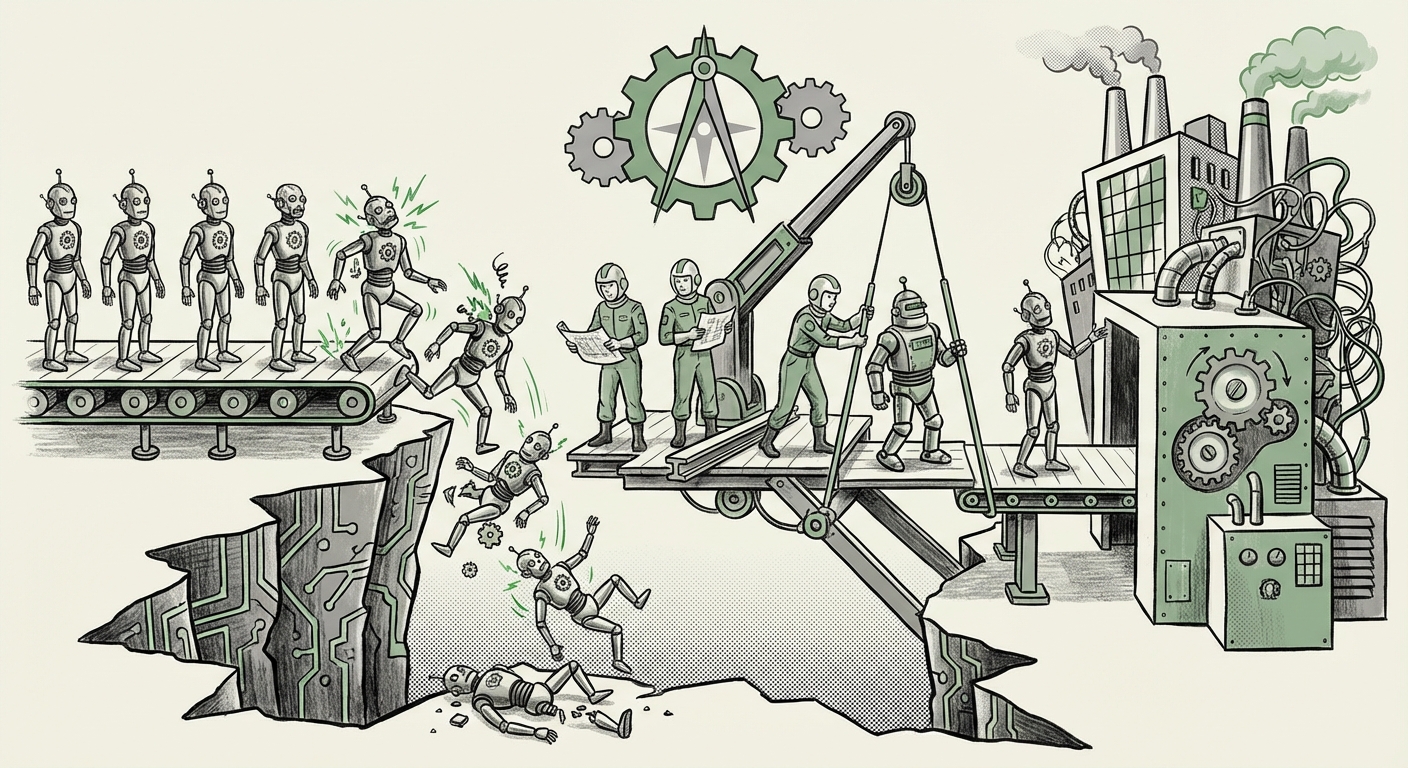

For the last few years, the narrative around Artificial Intelligence has been one of relentless capability growth. Models can write code, pass bar exams, and generate stunning art. But for large organizations looking to automate core business processes, there has been a growing realization: the leap from a spectacular demo to reliable, daily operation is immense. This gap is often termed the "last mile problem," and it is forcing the biggest names in AI—OpenAI and Anthropic—to fundamentally change their business model.

These leading labs are increasingly acting as **AI consultants**, partnering directly with enterprise customers who are struggling to get autonomous AI agents to function dependably outside of controlled testing environments. This pivot is more than just a new revenue stream; it signifies a major inflection point in the history of enterprise AI adoption.

The Chasm Between Demo and Deployment: The Agent Reliability Crisis

AI agents—systems designed to take a high-level goal (e.g., "Analyze Q3 sales data, flag anomalies, and draft an email summary to the VP") and execute a series of steps using various tools (databases, code interpreters, email clients)—are the holy grail of productivity. However, recent experience shows that when the stakes rise, these agents frequently stumble.

The initial excitement often stems from seeing a base model perform complex reasoning in a sandbox. But production environments are messy, a point that recurs in industry reporting on enterprise AI adoption beyond the proof of concept. They involve proprietary, siloed data, strict latency requirements, and unpredictable inputs. Out-of-the-box models, even the largest ones, often fail to:

- Maintain Long-Term Context: For multi-day projects, agents forget initial instructions or struggle with the sheer volume of historical interaction data.

- Execute Tool Use Reliably: An agent must correctly interpret when to call an external software tool (like an API or database query), format the input perfectly, and correctly interpret the output—a process prone to failure.

- Manage Hallucinations Under Pressure: When an agent needs certainty (e.g., quoting a financial figure), a slight deviation into hallucination can cause catastrophic business errors.
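To make the tool-use failure mode concrete, here is a minimal Python sketch of one common guardrail: checking an agent's proposed tool call against a declared argument schema before anything runs. The names `ToolSpec`, `validate_call`, `run_tool`, and `lookup_revenue`, along with the revenue figure, are hypothetical and exist only for illustration; they are not part of any vendor's API.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class ToolSpec:
    """Hypothetical description of one tool the agent may call."""
    name: str
    required: dict[str, type]  # argument name -> expected Python type
    handler: Callable[..., Any]


def validate_call(spec: ToolSpec, args: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the call looks well-formed."""
    problems = []
    for key, expected in spec.required.items():
        if key not in args:
            problems.append(f"missing argument: {key}")
        elif not isinstance(args[key], expected):
            problems.append(f"{key} should be {expected.__name__}")
    for key in args:
        if key not in spec.required:
            problems.append(f"unexpected argument: {key}")
    return problems


def run_tool(spec: ToolSpec, args: dict[str, Any]) -> dict[str, Any]:
    """Run the tool only if the arguments pass validation."""
    problems = validate_call(spec, args)
    if problems:
        # Hand the errors back to the agent instead of executing a bad call.
        return {"ok": False, "errors": problems}
    try:
        return {"ok": True, "result": spec.handler(**args)}
    except Exception as exc:  # the tool itself failed (e.g. unknown key)
        return {"ok": False, "errors": [f"tool failed: {exc!r}"]}


def lookup_revenue(quarter: str, year: int) -> float:
    """Stand-in for a database query; the figure is made up."""
    figures = {("Q3", 2024): 1250000.0}
    return figures[(quarter, year)]


revenue_tool = ToolSpec(
    name="lookup_revenue",
    required={"quarter": str, "year": int},
    handler=lookup_revenue,
)

if __name__ == "__main__":
    # A well-formed call, then one where the model emitted the year as a string.
    print(run_tool(revenue_tool, {"quarter": "Q3", "year": 2024}))
    print(run_tool(revenue_tool, {"quarter": "Q3", "year": "2024"}))
```

The design choice here is that a malformed call never reaches the underlying system: the agent gets a structured error it can retry against, and a financial figure is only ever quoted from the tool's actual return value.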

This unreliability is rooted in the core mechanics of how these models reason. Technical analyses of why AI agents are unreliable for mission-critical tasks point out that the planning and reasoning layers are still fragile. While the LLM excels at generating human-like text (the *what*), it struggles with the rigid, step-by-step logic required for robust automation (the *how*).

The Technical Breakdown: Why Planning is Harder Than Prose

Think of it like teaching a brilliant creative writer to become a reliable plumber. The LLM is the writer: inventive and fluent. The agent framework is the plumbing system. When the agent tries to fix a leak, it might first write a beautiful poem about water pressure before realizing it needs to turn the main shut-off valve. For business operations, that poem is an expensive error.

This necessity for rigorous, predictable behavior is driving the need for the hands-on support OpenAI and Anthropic are now offering. They aren't just teaching customers how to use an API; they are embedding experts to help architect the agent's environment, refine its tool-calling logic, and ground its knowledge base precisely where required.

The Strategic Pivot: From Model Vendor to Solution Integrator

The shift toward consultative services illustrates a maturing market dynamic, mirroring the trajectory of previous technology waves like cloud computing or enterprise software. Initially, companies sell the core technology; eventually, they must sell the integration.

This trend shows up in the rise of AI customization and fine-tuning services. Cloud giants like Amazon (Bedrock) and Microsoft (Azure AI) have already recognized this by heavily promoting their managed customization tools and professional services wings. OpenAI and Anthropic are now following suit by offering direct, expert guidance. They understand that the perceived value of their model is no longer just its raw benchmark score, but its ability to solve a specific $10 million problem in a customer’s workflow.

Monetizing Expertise, Not Just Tokens

For the model creators, this pivot is strategically brilliant:

- Higher Margins: Consulting services command significantly higher margins than simple token usage fees.

- Deep Feedback Loops: Direct engagement provides invaluable, real-world failure data, which feeds back into R&D, helping them rapidly harden their models against the exact issues enterprises face.

- Securing Loyalty: By becoming indispensable integrators, they lock in major clients who are unwilling to rip out a deeply customized, mission-critical system built with the primary vendor’s direct expertise.

This means the competition is subtly moving away from who has the "best" base model toward who has the best **implementation partner ecosystem.**

Implications for Enterprise IT Departments and Trust

For the Chief Information Officer (CIO) or Chief Technology Officer (CTO), this new consulting reality presents both an opportunity and a risk.

Opportunity: Reduced Implementation Risk

If your budget allows, engaging directly with the model creators for initial deployment significantly derisks the project. These teams know the model’s weaknesses better than anyone else and can pre-engineer guardrails, ensuring the system adheres to enterprise standards for security and compliance from day one.

Risk: Vendor Lock-In and Governance Hurdles

Conversely, this reliance creates deep vendor lock-in. When an organization relies on OpenAI’s internal engineers to maintain the complex reasoning layer connecting GPT-4 to their proprietary CRM, switching to a competitor becomes far harder, because that integration knowledge lives with the vendor rather than in-house.

Furthermore, agent unreliability takes a direct toll on enterprise trust: failures erode internal confidence rapidly. A single, high-profile operational error caused by an unreliable agent can halt an entire AI program until rigorous governance and auditability frameworks are in place. Trust is fragile, and organizations are currently hesitant to grant full autonomy to systems they cannot fully explain or predictably control.

This explains why enterprise adoption often stalls after the Proof of Concept (PoC) phase. Executives are increasingly demanding clear traceability and accountability, which is achieved only when the integration layer is heavily scrutinized and customized.
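As a rough illustration of what traceability can mean at the integration layer, the Python sketch below records every step an agent takes so a run can be replayed and reviewed afterwards. The class names, field names, and example tool names are assumptions made for this example, not a description of how any vendor implements auditing.

```python
import json
import time
from dataclasses import asdict, dataclass, field


@dataclass
class StepRecord:
    """One agent action: which tool ran, with what input, and what came back."""
    run_id: str
    step: int
    tool: str
    arguments: dict
    outcome: str
    timestamp: float = field(default_factory=time.time)


class AuditTrail:
    """In-memory, append-only log of agent steps (hypothetical helper)."""

    def __init__(self) -> None:
        self._records: list[StepRecord] = []

    def record(self, run_id: str, tool: str, arguments: dict, outcome: str) -> StepRecord:
        # Step numbers count up per run, so each run reads as an ordered story.
        step = sum(1 for r in self._records if r.run_id == run_id) + 1
        rec = StepRecord(run_id, step, tool, arguments, outcome)
        self._records.append(rec)
        return rec

    def trace(self, run_id: str) -> list[StepRecord]:
        """Return every step of one run, in the order it happened."""
        return [r for r in self._records if r.run_id == run_id]

    def to_jsonl(self) -> str:
        """Serialize the whole trail as JSON Lines for export to a log store."""
        return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in self._records)


if __name__ == "__main__":
    trail = AuditTrail()
    trail.record("run-1", "query_sales_db", {"quarter": "Q3"}, "42 rows returned")
    trail.record("run-1", "draft_email", {"to": "vp"}, "draft saved")
    for rec in trail.trace("run-1"):
        print(rec.step, rec.tool, rec.outcome)
```

A real deployment would write to durable, access-controlled storage rather than memory, but even this shape answers the question executives ask after an incident: what did the agent do, in what order, and with which inputs.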

What This Means for the Future of AI: The Age of the AI System Integrator

The trajectory we are witnessing suggests that the future of applied AI is not purely in the laboratory but squarely in the engineering department. The value chain is segmenting:

- The Foundation Layer (Model Creators): OpenAI, Anthropic, Google, etc., focus on creating the most capable *brains* (the models).

- The Integration Layer (Consultants/Partners): These entities—now including the creators themselves—focus on providing the robust *body* (the agent framework, tool integration, and governance).

- The Application Layer (Internal IT): Enterprise teams focus on using these integrated systems to drive specific, measurable business outcomes.

For the next few years, the most successful enterprise AI deployments will not be the ones using the newest, most powerful model, but the ones that have successfully navigated this "last mile" using deep integration expertise.

Actionable Insights for Businesses Today

How should business leaders react to this consulting pivot?

- Shift Budget from Model to Implementation: Recognize that 70% of your deployment budget might need to go toward specialized integration, prompt engineering, safety layers, and monitoring, not just API calls.

- Demand Explainability in Customizations: If you engage with consulting partners, insist on robust documentation and architecture diagrams showing *how* the agent reasoning layer was customized. You must retain the knowledge internally.

- Start Small with Contained Agents: Don't deploy an autonomous agent to manage your entire supply chain immediately. Start with agents that have limited, reversible tool access (e.g., internal knowledge retrieval) to build organizational trust and familiarity before tackling mission-critical tasks.
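The "start small" advice can be sketched in a few lines of Python: give the agent an explicit allowlist of tools, mark each one as reversible or not, and refuse anything irreversible unless a human has opted in. `ToolPolicy`, `ToolGate`, and the tool names below are hypothetical and serve only to illustrate the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    """Hypothetical policy entry for one tool the agent may request."""
    name: str
    reversible: bool  # True if the action can be undone without harm


class ToolGate:
    """Decides whether a requested tool call may proceed."""

    def __init__(self, policies: list[ToolPolicy], allow_irreversible: bool = False) -> None:
        self._policies = {p.name: p for p in policies}
        self._allow_irreversible = allow_irreversible

    def check(self, tool_name: str) -> tuple[bool, str]:
        policy = self._policies.get(tool_name)
        if policy is None:
            # Anything not explicitly listed is denied by default.
            return (False, "tool is not on the allowlist")
        if not policy.reversible and not self._allow_irreversible:
            return (False, "irreversible tool requires human approval")
        return (True, "allowed")


# A contained agent: read-only retrieval is open, ordering stays gated.
gate = ToolGate([
    ToolPolicy("search_knowledge_base", reversible=True),
    ToolPolicy("place_purchase_order", reversible=False),
])

if __name__ == "__main__":
    for tool in ("search_knowledge_base", "place_purchase_order", "delete_records"):
        print(tool, gate.check(tool))
```

Denying by default means widening the agent's reach is a deliberate act: a team adds a tool to the list, or flips `allow_irreversible`, only after the agent has earned trust on lower-stakes work.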

The message is clear: the era of "plug-and-play" autonomous AI is still on the horizon. Right now, achieving reliable automation requires specialized, hands-on engineering expertise. By stepping in as consultants, OpenAI and Anthropic are ensuring that their foundational breakthroughs translate into tangible, dependable business value, even if it means slowing down the pure "out-of-the-box" deployment model.