The AI Consultant Pivot: Why Reliability, Not Just Raw Power, Is Defining Enterprise LLM Success

The narrative surrounding Generative AI has long been dominated by headline-grabbing capability leaps: faster reasoning, better code generation, and stunning creative output. For a time, the promise was plug-and-play intelligence—simply connect the API and watch business processes transform. However, a crucial signal is emerging from the frontline of enterprise adoption: the world’s leading AI labs, OpenAI and Anthropic, are pivoting into high-touch consulting roles.

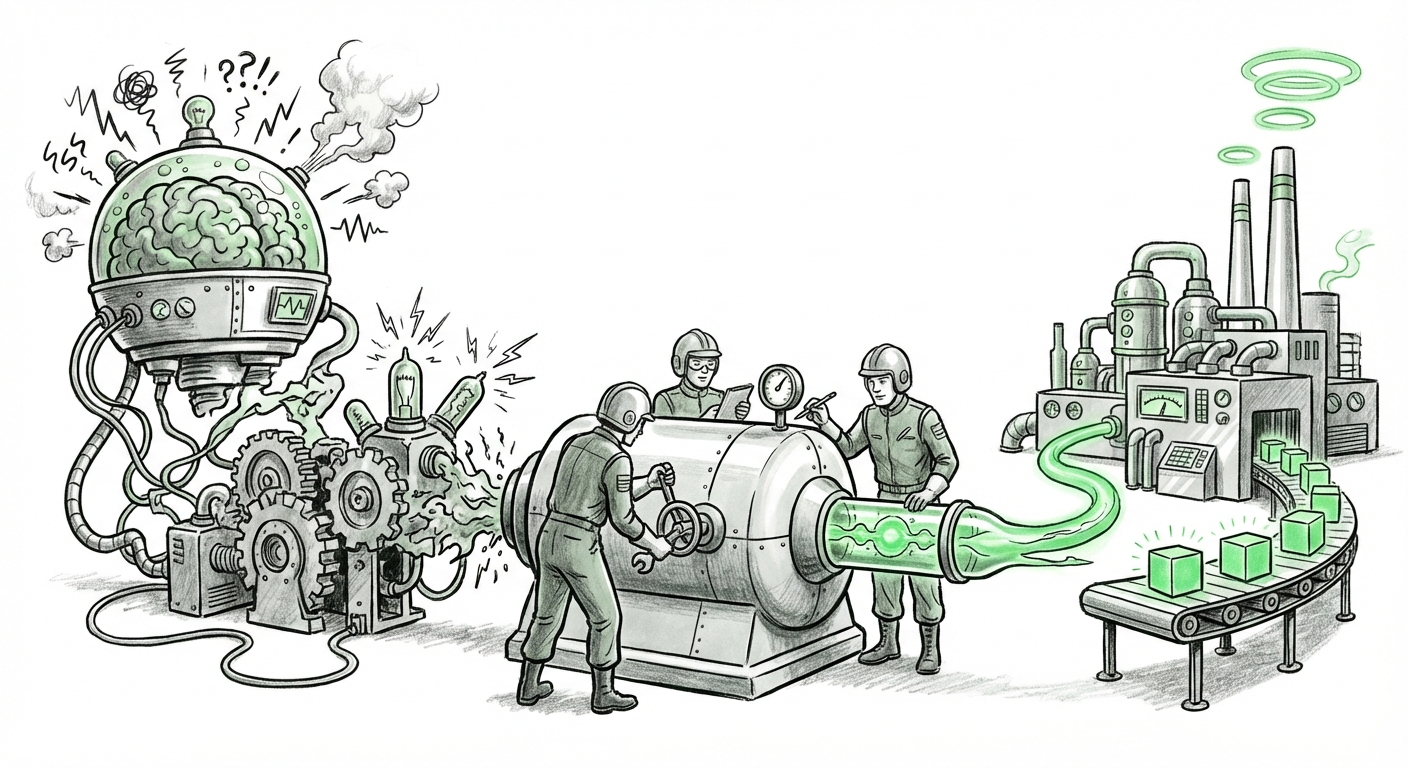

This is not a sign of failure; it is a marker of the technology’s maturation. When a technology moves from the research lab to mission-critical business processes, the required standard shifts from "impressive demo" to "guaranteed reliability." The pivot suggests that the biggest hurdle in AI today is not model size, but integration fidelity.

The Reliability Gap: From Sandbox to Production

In controlled settings, Large Language Models (LLMs) shine. Ask GPT-4 or Claude 3 a general knowledge question, and they perform exceptionally. But when tasked with complex, domain-specific business operations—such as processing sensitive insurance claims or autonomously managing supply chain logistics—the fragility of generalized models becomes painfully apparent.

The core issue, reflected in the demand for outside consulting, is **unpredictability**. Two technical hurdles account for most of it:

1. The Shadow of Hallucination

The primary blocker for enterprise trust remains the model’s tendency to confidently fabricate information—hallucination. For a marketing copy generator, a minor error is acceptable; for an autonomous financial agent, a hallucinated transaction detail is catastrophic. Enterprises are terrified of deploying systems where outputs cannot be deterministically verified. This fear directly drives demand for experts who can build **guardrails** around the base models.

The initial goal was to use the LLM as the brain; in practice, businesses need it to operate under the same compliance and accuracy mandates as an employee in a highly regulated role. That requirement is what creates the demand for bespoke consultation aimed at minimizing these failure modes.
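One common form of guardrail is a deterministic check that sits between the model and any downstream action. The sketch below is a minimal illustration rather than a production design. It assumes the model returns a JSON transaction proposal; the field names (`account_id`, `amount`, `currency`), the `KNOWN_ACCOUNTS` table, the `MAX_AMOUNT` limit, and the `validate_proposal` helper are all hypothetical.

```python
import json
from dataclasses import dataclass

# Hypothetical system of record; in practice this would be a database lookup.
KNOWN_ACCOUNTS = {"ACC-1001": "USD", "ACC-2002": "EUR"}
MAX_AMOUNT = 10_000.00  # assumed per-transaction limit


@dataclass
class Verdict:
    approved: bool
    reasons: list


def validate_proposal(raw_model_output: str) -> Verdict:
    """Deterministically check a model-proposed transaction before acting on it."""
    try:
        proposal = json.loads(raw_model_output)
    except json.JSONDecodeError:
        return Verdict(False, ["output is not valid JSON"])
    if not isinstance(proposal, dict):
        return Verdict(False, ["output is not a JSON object"])

    reasons = []
    account = proposal.get("account_id")
    amount = proposal.get("amount")
    currency = proposal.get("currency")

    # Reject any account the system of record does not know about.
    if not isinstance(account, str) or account not in KNOWN_ACCOUNTS:
        reasons.append(f"unknown account: {account!r}")
    elif currency != KNOWN_ACCOUNTS[account]:
        reasons.append(f"currency {currency!r} does not match account")

    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        reasons.append("amount is not a number")
    elif not 0 < amount <= MAX_AMOUNT:
        reasons.append(f"amount {amount} outside allowed range")

    return Verdict(not reasons, reasons)
```

A proposal that names an account absent from `KNOWN_ACCOUNTS` is rejected with a reason attached, so a fabricated detail is stopped before it reaches the ledger. The check does not make the model more accurate; it only bounds what a wrong answer can do.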

2. The Customization Imperative

Out-of-the-box models are trained on the general internet, not a company’s proprietary knowledge base, internal vernacular, or specific compliance documents. To make these models useful, they must be "grounded" in enterprise reality. This is where the heavy engineering begins, often revolving around **Retrieval-Augmented Generation (RAG)** systems.

Whether a team chooses RAG or fine-tuning, building reliable agents demands sophisticated knowledge management pipelines. Consultants are not just tweaking prompts; they are architecting the information retrieval system, vector databases, and security layers required to connect the LLM to trusted internal data. This complexity exceeds the capacity of many internal IT departments, which helps explain the scramble for OpenAI and Anthropic’s specialized help.
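The retrieval step at the heart of RAG can be sketched in a few lines. The example below is a toy under stated assumptions: word counts stand in for a real embedding model, an in-memory dictionary stands in for a vector database, and the `DOCUMENTS` contents and helper names are hypothetical. It shows only how retrieved text is placed in front of the question; the call to the model itself is omitted.

```python
import math
import re
from collections import Counter

# Hypothetical internal documents; a real system would load and chunk these
# from a governed knowledge base and store embeddings in a vector database.
DOCUMENTS = {
    "claims-policy": "Water damage claims require photos and a plumber report within 30 days.",
    "travel-policy": "Employees must book flights through the internal travel portal.",
    "security-policy": "Customer data may only be stored in approved encrypted databases.",
}


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, k: int = 1) -> list:
    """Return the ids of the k documents most similar to the question."""
    query = embed(question)
    ranked = sorted(
        DOCUMENTS,
        key=lambda doc_id: cosine(query, embed(DOCUMENTS[doc_id])),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question: str, k: int = 1) -> str:
    """Assemble a prompt that restricts the model to the retrieved context."""
    context = "\n".join(
        f"[{doc_id}] {DOCUMENTS[doc_id]}" for doc_id in retrieve(question, k)
    )
    return (
        "Answer using only the context below. If the answer is not there, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )
```

Tagging each passage with its document id lets a reviewer trace an answer back to its source. Most of the engineering effort described above goes into what this sketch leaves out: chunking, access control, and keeping the index current.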

The Business Model Evolution: Product to Partnership

The shift toward consultancy highlights a fundamental evolution in how leading AI firms monetize their technology. They are realizing that selling API tokens alone is insufficient when the client cannot successfully build a reliable application on top of them. This mirrors historical technology adoption cycles:

When mainframes became the standard, hardware vendors sold the boxes, but specialized service companies emerged to write the application logic. When cloud computing matured, AWS and Azure didn't just sell compute; they built massive professional services divisions to help companies migrate complex legacy systems. LLM providers are following this proven path.

This trend, corroborated by the growth in cloud providers' professional services arms, suggests a future where the "AI Platform" is sold in two tiers: the **API/Model Access** (the raw intelligence) and the **Integration Guarantee** (the assurance of reliability through expert deployment).

The Economic Reality of Maintenance

Reliability is not static. As an enterprise’s internal data changes, as new company policies are issued, or as the underlying foundation model receives updates, the deployed agent can drift out of specification. This necessitates constant monitoring, re-testing, and retraining: the hidden costs of ownership.
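One simple way to detect this kind of drift is to re-run a fixed set of reviewed cases whenever the model, the data, or the policies change, then compare the pass rate with the one recorded at release. The sketch below assumes exact-match scoring and a hypothetical `GOLDEN_SET`; `agent_v1` and `agent_v2` are stubs standing in for the deployed agent before and after an update, not calls to any real API.

```python
from typing import Callable

# Hypothetical golden set: inputs paired with answers a reviewer has approved.
GOLDEN_SET = [
    ("Is water damage covered?", "yes"),
    ("Is flood damage covered?", "no"),
    ("Is theft covered?", "yes"),
    ("Is wear and tear covered?", "no"),
]


def pass_rate(agent: Callable[[str], str], cases=GOLDEN_SET) -> float:
    """Fraction of golden cases where the agent's answer matches the approved one."""
    passed = sum(
        1 for question, expected in cases
        if agent(question).strip().lower() == expected
    )
    return passed / len(cases)


def has_drifted(agent: Callable[[str], str], baseline: float, tolerance: float = 0.05) -> bool:
    """Flag drift when the pass rate falls more than `tolerance` below the baseline."""
    return pass_rate(agent) < baseline - tolerance


def agent_v1(question: str) -> str:
    # Stub for the agent as validated at release time.
    return "no" if ("flood" in question or "wear" in question) else "yes"


def agent_v2(question: str) -> str:
    # Stub for the same agent after an upstream update changed one behavior.
    return "no" if "flood" in question else "yes"


baseline = pass_rate(agent_v1)           # recorded once, at release
drifted = has_drifted(agent_v2, baseline)  # re-checked after every change
```

Exact matching is the crudest possible scorer; free-text answers usually need a rubric or a human reviewer. The structure is the point: a baseline, a tolerance, and a check that runs on every change.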

By offering consultancy, OpenAI and Anthropic secure long-term, high-value partnerships. They transition from being a commodity supplier (pay-per-call) to an indispensable technology partner invested in the customer's sustained operational success.

Future Implications: Governance, Standards, and Agentic Specialization

The need for expert guidance forces a re-evaluation of how AI is adopted. Two implications stand out: a push toward governance and verifiable behavior, and a split between commodity and bespoke agentic systems.

The Rise of Verifiable AI and Governance

As agents take on higher-stakes tasks, regulatory scrutiny increases. If foundation model providers are the ones helping implement the solution, they are implicitly setting de facto standards for deployment robustness. The market is demanding verifiable proof that an AI system performs as intended. This drives the adoption of formal testing and validation frameworks.

For CTOs, this means AI projects can no longer be siloed R&D experiments. They must adhere to the same rigorous validation pipelines as any other mission-critical software. The consultancy engagement becomes the bridge between cutting-edge research and necessary regulatory compliance.

Agent Specialization vs. Generalization

The market is segmenting. General-purpose chatbots will remain accessible via simple APIs. However, true, reliable agentic AI—systems capable of executing multi-step workflows with autonomy—will require deep customization. We are seeing the emergence of two distinct AI markets:

- Commodity AI: For tasks like summarization or basic content creation, relying on readily available models.

- Bespoke Agentic AI: For high-value, high-risk automation, requiring intensive customization, security hardening, and continuous management—the domain now requiring specialized consultants.

Actionable Insights for Businesses

For enterprises currently struggling to move beyond pilot programs, this trend provides a clear pathway:

- Acknowledge Complexity: Stop treating LLM integration as a simple software deployment. Treat it as building a new, semi-autonomous business unit that requires dedicated training and supervision.

- Invest in Foundational Engineering: Prioritize robust RAG architecture and data quality above the marginal improvements offered by the absolute newest model version. Reliability rests on the data pipeline, not just the model weights.

- Factor in Long-Term Support: Budget not just for initial implementation, but for ongoing maintenance, monitoring tools, and model recalibration cycles. The consultancy relationship should be viewed as an ongoing operational partnership.

Conclusion: The Era of Applied Intelligence

The movement by OpenAI and Anthropic toward deep enterprise consultancy signifies the end of the "honeymoon phase" for Generative AI. The initial excitement over raw generative power is yielding to the cold, hard reality of operational integration. This transition is healthy, albeit painful, as it forces the industry to build the necessary engineering rigor around a technology that is inherently probabilistic.

The future of successful AI adoption will not belong to those who simply use the biggest models, but to those who can successfully integrate, validate, and maintain reliable, trustworthy autonomous agents. The era of pure product sales is fading; the era of applied, customized, and rigorously managed intelligence has begun. The providers who can deliver this expertise—whether through their own consulting arms or through a strong partner ecosystem—will define the next wave of AI value creation.