The End of Out-of-the-Box AI: Why OpenAI and Anthropic Are Becoming Enterprise Consultants

The initial hype surrounding generative AI agents promised a future where off-the-shelf software could handle complex business tasks with magical precision. We saw the dazzling demos: agents booking travel, drafting intricate code, and summarizing massive corporate documents flawlessly. However, the conversation in enterprise technology circles has recently taken a significant and revealing turn. The developers who built these powerful foundational models—OpenAI and Anthropic—are increasingly acting less like software vendors and more like high-priced consultants.

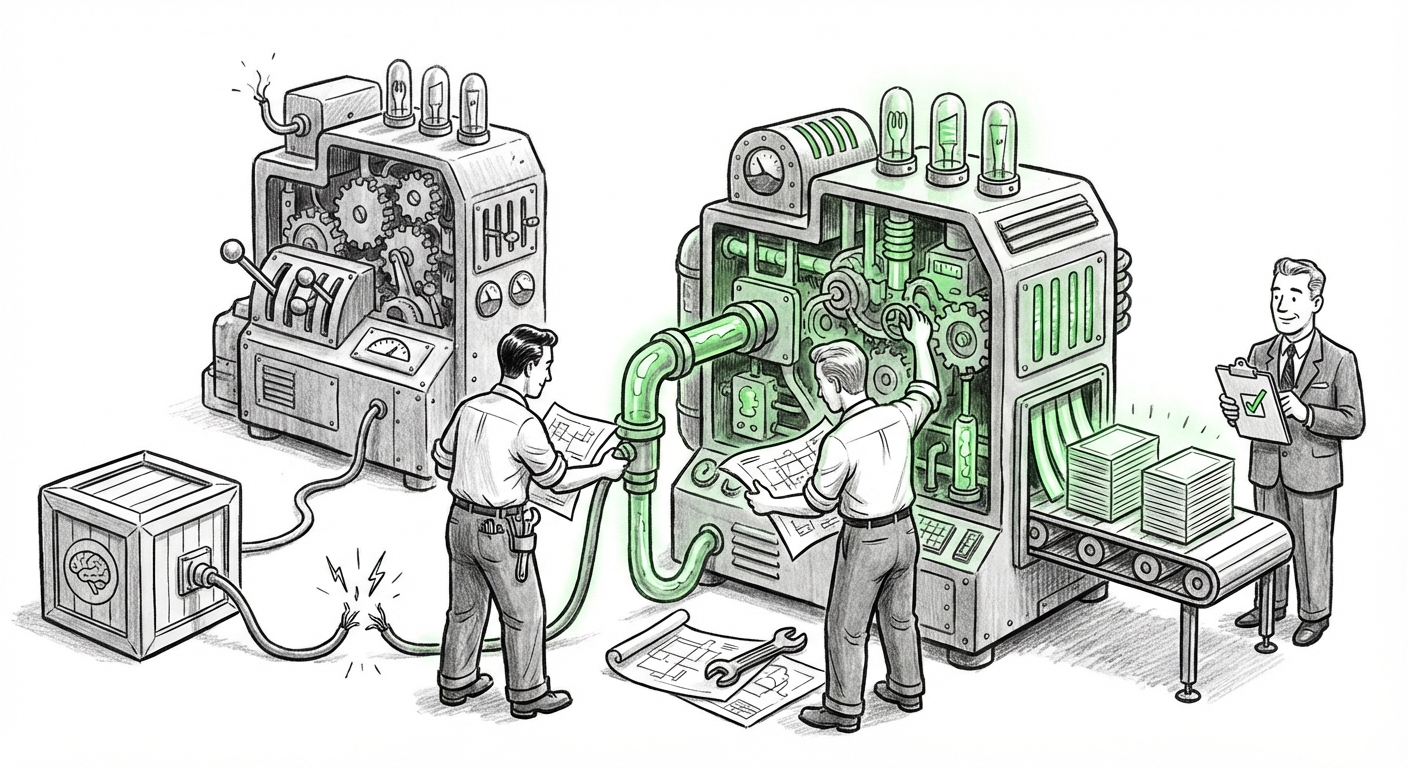

This pivot signals a critical realization: for AI to move beyond novelty and into reliable production environments, the "last mile" of integration is proving to be the hardest. The gap between a model's *potential* (what it does in a clean lab setting) and its *production-ready reality* (what it does reliably inside a complex organization) is forcing vendors to embed themselves deeply within client operations.

The Inevitable Pivot: From API Seller to System Integrator

When LLMs first launched, the business model was straightforward: sell access via API calls. Companies would take the model, point it at their data, and expect results. This approach worked well for simple queries and content generation. But when companies started building autonomous agents (systems designed to execute multi-step workflows that affect customers or core finance), the limitations became starkly apparent.

These agents failed often enough to matter. They might rely on outdated information, misread nuanced internal policies, or ignore critical security parameters altogether. In regulated or high-stakes environments, that inconsistency is unacceptable. As recent reports have highlighted, the result is that leading AI labs are now dedicating significant engineering resources to bespoke customization projects. In effect, they are selling implementation expertise alongside their core product.

Corroborating the Trend: The Technical Hurdles

Why are the creators themselves being called in to make their own models behave? The answer lies in the technical complexity of grounding the models, specifically through techniques like **Retrieval-Augmented Generation (RAG)**. RAG allows an LLM to look up information from a private database (like a company's internal policies) before answering. While powerful, RAG pipelines are fragile.
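The retrieve-then-answer pattern can be sketched in a few lines of Python. This is illustrative only: the word-overlap cosine similarity stands in for the vector embeddings a production RAG system would typically use, the policy snippets are invented, and `retrieve` and `build_prompt` are hypothetical helpers. The resulting prompt would then be sent to whichever model API the organization uses.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercase word tokens; a real system would embed the text instead.
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two bag-of-words vectors.
    dot = sum(count * b[token] for token, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    # Rank documents by similarity to the query and keep the top k.
    query_vec = Counter(tokenize(query))
    ranked = sorted(
        documents.items(),
        key=lambda item: cosine(query_vec, Counter(tokenize(item[1]))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, documents: dict[str, str], k: int = 2) -> str:
    # Ground the model: include only retrieved passages and tell it to stay within them.
    sources = "\n".join(f"[{doc_id}] {text}" for doc_id, text in retrieve(query, documents, k))
    return (
        "Answer using only the sources below and cite them by ID. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )


policies = {
    "hr-leave": "Employees receive 25 vacation days per year.",
    "it-security": "Passwords must be rotated every 90 days.",
    "finance-travel": "Travel expenses require manager approval.",
}
print(build_prompt("How many vacation days do employees get?", policies, k=1))
```

Every fragile step the article lists lives in this loop: what goes into `documents` (ingestion), which passages `retrieve` surfaces (ranking), and whether the model actually stays within them (grounding).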

- Data Ingestion Complexity: Getting corporate data—often messy, siloed, and inconsistent—into a format the RAG system can reliably use requires specialized data engineering that the average enterprise lacks.

- Context Window Management: Ensuring the system retrieves the most relevant snippets from millions of documents, and that the model is not confused by conflicting context inside its limited context window, demands careful tuning of how documents are chunked, embedded, and ranked.

- Hallucination Mitigation: In a demo, a wrong answer is embarrassing. In production, a wrong answer can lead to compliance violations. The grounding rules, evaluation sets, and (where used) fine-tuning needed to keep answers truthful are often highly specific to the client's domain.
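To illustrate why hallucination mitigation tends to be domain-specific engineering rather than a single model setting, here is a hypothetical post-generation guardrail. It assumes answers cite sources as `[doc-id]` and enforces one simple rule: every number in the answer must appear in a cited source. `check_grounding` and the citation format are invented for this sketch; real deployments usually layer several such checks.

```python
import re

CITATION = re.compile(r"\[([\w-]+)\]")   # assumed citation format: [doc-id]
NUMBER = re.compile(r"\d+(?:\.\d+)?")


def check_grounding(answer: str, sources: dict[str, str]) -> list[str]:
    """Return a list of problems; an empty list means the answer passed."""
    problems = []
    cited = CITATION.findall(answer)
    if not cited:
        problems.append("answer cites no sources")
    for doc_id in cited:
        if doc_id not in sources:
            problems.append(f"unknown source: {doc_id}")
    # Numbers are a common hallucination; each must appear in a cited source.
    cited_numbers = set(NUMBER.findall(" ".join(sources.get(d, "") for d in cited)))
    body = CITATION.sub("", answer)  # drop citation tags so IDs aren't read as numbers
    for number in NUMBER.findall(body):
        if number not in cited_numbers:
            problems.append(f"unsupported number: {number}")
    return problems


sources = {"hr-leave": "Employees receive 25 vacation days per year."}
print(check_grounding("Employees get 25 vacation days [hr-leave].", sources))  # []
print(check_grounding("Employees get 30 vacation days [hr-leave].", sources))  # ['unsupported number: 30']
```

A failed check would typically block the response or route it to human review rather than silently pass it to a customer.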

The industry is recognizing that mastering the challenges of grounding and fine-tuning LLMs for enterprise use is no longer optional; it is the price of admission for real-world deployment.

The Proof of Concept vs. Production Chasm

For any new technology, there is a classic gulf between the shining demo (Proof of Concept, or PoC) and the messy reality of deployment (Production). With generative AI, this gap seems wider than anticipated. Executives saw the potential for massive productivity gains, authorized significant initial spending, and are now encountering friction.

As market analysis suggests, organizations are starting to "scale back initial aggressive AI spending projections" because the infrastructure and talent required for reliable scaling are lagging behind model capabilities. This leads directly to the vendor dependency we are observing. When a company commits $10 million to an AI agent initiative, it cannot afford for that initiative to be unreliable. It looks to the source, OpenAI or Anthropic, to guarantee the integration, shifting the vendor's role from selling software licenses to selling guaranteed outcomes.

This dynamic mirrors what major consultancies have observed about the difficulty of sustaining LLM ROI after initial deployment:

"The next frontier for generative AI: From experiment to enterprise value" (McKinsey) emphasizes that value realization requires governance, operational integration, and continuous monitoring. For now, the creators of the core models are arguably best positioned to provide those services reliably.

A Platform Strategy Shift: Customization is the New Core Product

This consulting pivot isn't isolated to the pure-play model developers. Their primary partners are following suit, signaling that customization is becoming the standard feature for premium enterprise tiers.

Consider the ecosystem around the models, such as the Microsoft/OpenAI partnership. Microsoft is not just selling the Copilot interface; it is deepening its offerings within Azure AI Studio and Microsoft Fabric. These platforms are becoming the specialized toolkits needed for the deep customization enterprises demand. This push toward robust governance and customization tooling suggests that Microsoft recognizes out-of-the-box Copilot will satisfy only basic tasks. For complex work in finance or compliance, tailored guardrails and knowledge integration are mandatory.

If the largest distribution channels are prioritizing complex integration services, the market is evidently maturing past simple, general-purpose tools.

The Future Ecosystem: The Rise of the AI Hardening Specialist

If the primary model creators become consultants, where does that leave the traditional technology consulting landscape?

This trend is actively reshaping the market for System Integrators (SIs). We are witnessing the rise of AI implementation partners and specialized consultants. Companies that used to implement CRM systems or cloud migrations are rapidly reskilling their workforces to become "AI hardening" experts. They are tasked with taking the slightly customized model from the vendor and integrating it securely, legally, and efficiently into legacy enterprise architecture.

This specialization suggests a three-tiered AI integration market is forming:

- The Creators (OpenAI, Anthropic): Focus on core model capability and deep, high-level customization/grounding for anchor clients.

- The Platform Providers (Microsoft, Google): Provide the secure cloud infrastructure and specialized developer tools necessary for adaptation.

- The Integrators (Boutique & Large SIs): Focus on the tactical integration, data pipeline construction, security audits, and legacy system connection—the messy, on-the-ground work that ensures reliability.

Reports on the growth of the "AI Services Market" point to the same structure: the fastest-growing segments of service contracts are not simple licensing fees but complex projects involving security hardening and bespoke data pipeline construction.

Implications: What This Means for the Future of AI and Business

This transition from selling models to selling integration is more than a minor business adjustment; it signals the true beginning of enterprise AI adoption. The days of cheap, plug-and-play AI are over for serious applications. This new reality carries profound implications:

1. Increased Cost of Entry, Decreased Barrier to Sophistication

For businesses, reliable, agent-based AI will initially become more expensive. The "consulting tax" paid to OpenAI or Anthropic means the total cost of ownership for a production agent is higher than initially projected. However, this investment buys something invaluable: reliability and governance. Companies are paying experts to prevent costly failures. The same arrangement lowers the barrier to sophistication, because organizations without deep in-house AI expertise can field advanced agents by borrowing the vendor's.
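To make the "consulting tax" concrete, here is a minimal arithmetic sketch. It assumes first-year implementation services are priced as a multiple of the annual license fee and uses the 2x to 3x planning range from the recommendations later in this piece; the $500,000 fee is hypothetical.

```python
def first_year_tco(annual_license_fee: float, services_multiplier: float) -> float:
    """First-year total cost: the license plus implementation services
    priced as a multiple of that license (a simplifying assumption)."""
    if annual_license_fee < 0 or services_multiplier < 0:
        raise ValueError("inputs must be non-negative")
    return annual_license_fee * (1 + services_multiplier)


fee = 500_000  # hypothetical annual license fee in USD
for multiplier in (2, 3):
    print(f"{multiplier}x services: ${first_year_tco(fee, multiplier):,.0f}")
# 2x services: $1,500,000
# 3x services: $2,000,000
```

Under these assumptions the license is only a quarter to a third of first-year spend, which is why budgeting on licensing alone understates the real commitment.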

2. The Talent War Intensifies

If the value lies in the integration layer, the demand for people who understand both deep learning concepts (like RAG structure) and legacy enterprise systems (like SAP or proprietary databases) will skyrocket. Companies that can attract or develop internal talent capable of managing this complex integration layer will pull ahead.

3. A Shift in Vendor Lock-In

When a company pays OpenAI or Anthropic to deeply customize an agent built on that vendor's models for a specific compliance task, it becomes intensely locked into that vendor's service stack. Innovation on the client side may slow as organizations become heavily invested in bespoke integrations built by the model creators themselves. This effectively turns foundation model providers into essential partners that are very difficult to replace.

4. AI Becomes Truly Verticalized

The future of AI is not one large, generalized model running everywhere but hundreds of hyper-specialized, deeply embedded, highly customized agents. The fact that vendors must consult suggests that general-purpose intelligence has to be expertly carved and focused before it can solve specific, valuable business problems.

Actionable Insights for Enterprise Leaders

For leaders looking to deploy generative AI strategically, understanding this pivot means adjusting your procurement and development strategies:

- Budget for Implementation, Not Just Licensing: Plan on the first-year deployment and customization of any core agent costing roughly 2x to 3x the annual licensing fee. Budget for specialized professional services from the start.

- Prioritize Governance Early: Do not wait until the agent is "working" to worry about security and compliance. Demand that any consulting engagement—whether directly from the model provider or an SI—makes governance and reliable data grounding the central pillars of the project scope.

- Build an Internal AI Engineering Core: Relying solely on external consultants is unsustainable. Hire or train engineers focused specifically on MLOps, RAG pipeline maintenance, and prompt engineering best practices. This internal team will be necessary to maintain the customized systems once the initial consulting engagement concludes.

- Demand Measurable Reliability SLAs: When signing contracts for agent deployment, move beyond simply agreeing to use the API. Require Service Level Agreements (SLAs) based on accuracy, latency, and adherence to guardrails. This forces the vendor to take accountability for production quality.
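A reliability SLA is only enforceable if it can be measured. Here is a hypothetical sketch of checking accuracy, latency, and guardrail adherence against logged agent interactions. The thresholds (95% accuracy, 2,000 ms p95 latency, zero guardrail violations) and the `Interaction`/`SLA` record shapes are assumptions for illustration, not terms any vendor offers; accuracy labels would come from human review or an evaluation set.

```python
import math
from dataclasses import dataclass


@dataclass
class Interaction:
    correct: bool              # judged by human review or an evaluation set
    latency_ms: float
    guardrail_violation: bool  # e.g. the response breached a policy rule


@dataclass
class SLA:
    min_accuracy: float = 0.95
    max_p95_latency_ms: float = 2000.0
    max_violation_rate: float = 0.0


def p95(values: list[float]) -> float:
    # Nearest-rank 95th percentile.
    ordered = sorted(values)
    return ordered[math.ceil(0.95 * len(ordered)) - 1]


def evaluate(interactions: list[Interaction], sla: SLA) -> dict[str, tuple[float, bool]]:
    """Map each metric to (measured value, whether it meets the SLA)."""
    if not interactions:
        raise ValueError("no interactions to evaluate")
    n = len(interactions)
    accuracy = sum(i.correct for i in interactions) / n
    latency = p95([i.latency_ms for i in interactions])
    violation_rate = sum(i.guardrail_violation for i in interactions) / n
    return {
        "accuracy": (accuracy, accuracy >= sla.min_accuracy),
        "p95_latency_ms": (latency, latency <= sla.max_p95_latency_ms),
        "guardrail_violation_rate": (violation_rate, violation_rate <= sla.max_violation_rate),
    }


# Ten logged calls: one wrong answer, latencies 100 ms to 1,000 ms, no violations.
log = [Interaction(correct=(k != 0), latency_ms=100.0 * (k + 1), guardrail_violation=False) for k in range(10)]
for metric, (value, ok) in evaluate(log, SLA()).items():
    print(f"{metric}: {value} {'PASS' if ok else 'FAIL'}")
```

In this example the agent is fast and stays within its guardrails but misses the accuracy target (0.9 against 0.95), exactly the kind of shortfall a vague "use the API" contract would never surface.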

The era of "turn it on and watch the magic happen" is definitively ending. The new era of enterprise AI is here: one defined by specialized craftsmanship, deep integration, and the understanding that the most powerful AI systems are those built, not just bought.