The Hallucination Hurdle: Why Vendor Claims Clash with LLM Reality and What It Means for AI's Future

In the rapidly accelerating world of Artificial Intelligence, where breakthroughs feel like daily occurrences, sometimes the loudest pronouncements come from those selling the engine under the hood. Recently, Nvidia CEO Jensen Huang made a striking claim: Generative AI, the technology powering tools like ChatGPT, no longer hallucinates. This statement, while perhaps intended to signal supreme confidence in the underlying architecture, struck many users and researchers as a bold—and arguably inaccurate—oversimplification.

This discrepancy between vendor marketing and technical reality is not merely a semantic debate; it sits at the heart of trust, deployment strategy, and the future regulatory landscape of AI. As technology analysts, our job is to look beyond the headline and investigate the technical bedrock, market pressures, and societal implications of such claims. This article synthesizes current research to explore why "zero hallucination" is still a distant, perhaps impossible, goal, and what this gap means for the next phase of AI deployment.

Defining the Digital Lie: What is AI Hallucination?

Before we can discuss whether hallucinations have ceased, we must understand what they are. Simply put, an **AI hallucination** occurs when a Large Language Model (LLM) generates output that is plausible, fluent, and confidently stated, but is factually incorrect, nonsensical, or unsupported by its training data or provided context. Think of it as a very convincing falsehood, delivered without any intent to deceive.

For a general user, this might be finding a bogus legal precedent or citing a non-existent scientific paper. For a business relying on AI for regulatory compliance or financial reporting, this is a catastrophic failure of trust.

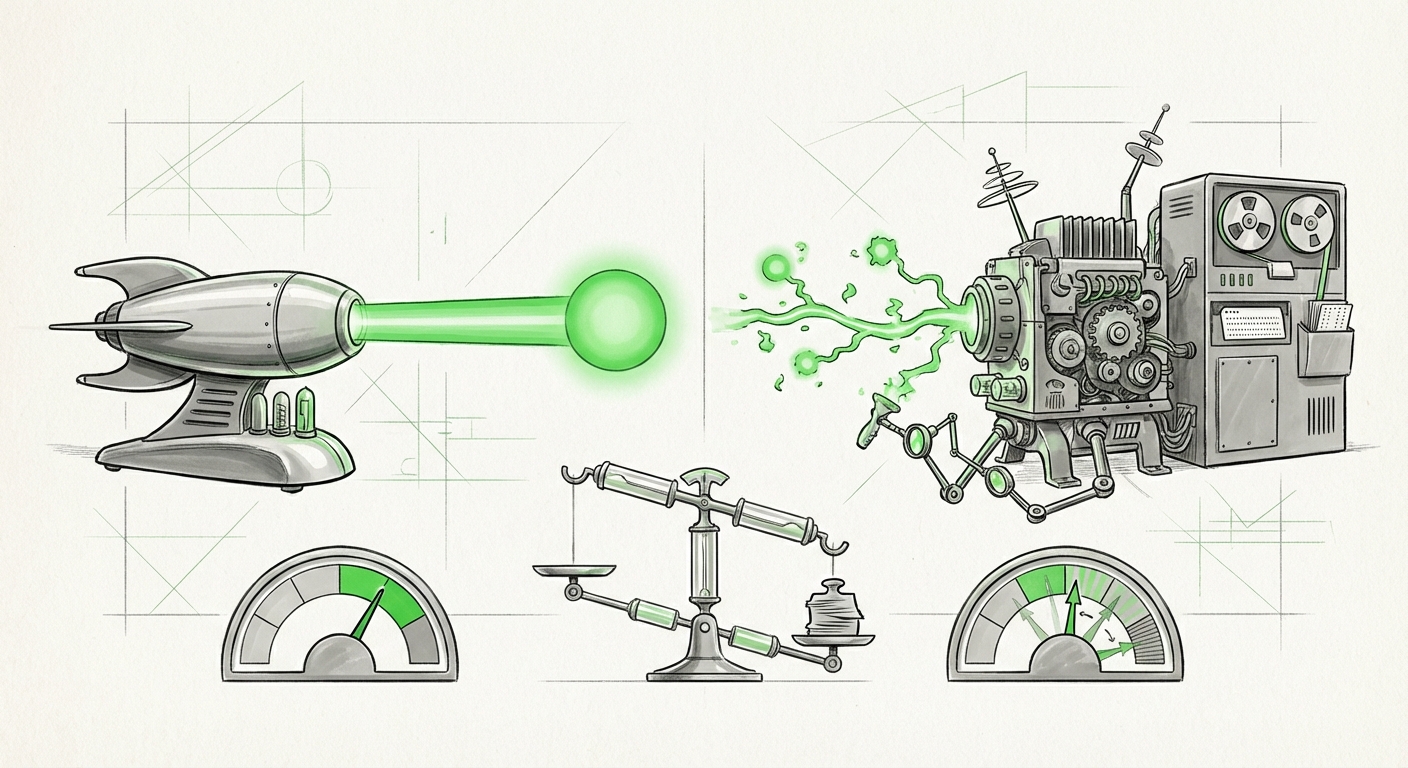

The core issue stems from how LLMs are built. They are not databases searching for facts; they are prediction engines designed to calculate the most statistically probable next token (roughly, a word or word fragment) in a sequence, based on patterns learned from vast amounts of text, much of it from the internet. While incredibly powerful, this process prioritizes fluency over fidelity. Even if 99% of the training data points to a truth, the remaining 1% can still steer the model toward confident misinformation, and because each token is conditioned on the ones before it, one wrong step shapes everything generated afterward.
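A back-of-the-envelope sketch shows why a small error rate matters over long outputs. It assumes, purely for illustration, that each generated token stays on track with an independent probability of 0.99; real models do not behave this neatly, and the function name `prob_clean_output` is hypothetical.

```python
def prob_clean_output(per_token_accuracy: float, num_tokens: int) -> float:
    """Chance that every token stays on track.

    Simplifying assumption: tokens succeed or fail independently,
    each with the same probability. Real LLM errors are correlated.
    """
    return per_token_accuracy ** num_tokens


# Compare a short reply, a paragraph, and a long report.
for n in (10, 100, 500):
    p = prob_clean_output(0.99, n)
    print(f"{n:>4} tokens: {p:.3f} chance of no deviation")
```

Under these toy assumptions, the chance of a fully clean response falls quickly as the output grows, which is one intuition for why fluent long-form answers are where confident errors tend to surface.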

The Technical Reality Check (Corroborating the Skepticism)

To properly analyze Huang’s claim, we must look at the ongoing research dedicated to this exact problem. Research into the **"Current state of LLM hallucination mitigation techniques 2024"** shows significant progress, but no silver bullet.

Techniques like **Reinforcement Learning from Human Feedback (RLHF)** and advanced fine-tuning have made models *less likely* to hallucinate on common knowledge, reducing overt nonsense. However, these improvements can mask underlying vulnerabilities: a model that rarely errs on common knowledge may still fabricate details on obscure or specialized topics. Researchers are actively working on methods to enforce logical consistency, but true, guaranteed factual correctness remains elusive in open-ended generative tasks.

The Rise of Grounding: Fighting Fiction with Facts

The most significant contemporary approach to combating hallucination involves techniques that force the AI to check its work against external, verified sources—a process known as grounding.

Our investigation into the **"Challenges with grounding large language models in real-time data"** highlights the necessity of Retrieval-Augmented Generation (RAG).

- RAG Systems: Instead of relying solely on internal memory, RAG systems first search an external, trusted document database (like a company’s internal wiki or verified legal documents) and then use that retrieved information to construct the answer.

- Success and Failure: RAG dramatically reduces hallucination, especially in specialized domains. However, RAG itself can fail. If the retrieval step pulls irrelevant or contradictory documents, or if the model misunderstands the retrieved text, the final output can still be inaccurate.
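The retrieve-then-generate flow described above can be sketched in a few lines. This is a minimal illustration, not a production design: the document store `DOCS`, the word-overlap scoring, and the function names are all assumptions made for the example, and the final call to an actual LLM is deliberately left out.

```python
import re

# Hypothetical trusted document store (stand-in for a wiki or legal archive).
DOCS = {
    "leave-policy": "Employees accrue 20 days of paid leave per year.",
    "expense-policy": "Expenses above 500 USD require manager approval.",
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, docs: dict[str, str], min_overlap: int = 2):
    """Return (doc_id, text) pairs ranked by how many words they share
    with the question. Real systems typically use embeddings instead."""
    q_words = tokenize(question)
    scored = []
    for doc_id, text in docs.items():
        overlap = len(q_words & tokenize(text))
        if overlap >= min_overlap:
            scored.append((overlap, doc_id, text))
    scored.sort(reverse=True)
    return [(doc_id, text) for _, doc_id, text in scored]


def build_grounded_prompt(question: str, docs: dict[str, str]):
    """Build a prompt that restricts the model to retrieved text.
    Returns None when retrieval finds nothing, so the caller can
    decline to answer rather than let the model improvise."""
    hits = retrieve(question, docs)
    if not hits:
        return None
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in hits)
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"Sources:\n{context}\n"
        f"Question: {question}"
    )


print(build_grounded_prompt("How many days of paid leave do employees accrue?", DOCS))
print(build_grounded_prompt("What is the office wifi password?", DOCS))
```

Both failure modes mentioned above are visible even in this toy version: a weak scoring function can retrieve the wrong document, and nothing here guarantees that the model reads the retrieved text correctly once it receives the prompt.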

The critical takeaway here is that while RAG offers a powerful *mitigation*, it requires significant, continuous engineering effort. It confirms that the base model, by itself, cannot be trusted to speak only truth. For an executive to claim that the foundational technology *no longer* hallucinates suggests that either RAG is now universally perfect (which it is not), or that the definition of hallucination has been dramatically narrowed.

The Motivation Behind the Message: Hype vs. Hardware

This brings us to the market context. Why would the head of the world’s leading AI chip manufacturer claim victory over a known, systemic technical challenge?

Consulting reports on **"Vendor marketing vs. LLM reliability gap analysis"** reveals a powerful dynamic at play. Nvidia’s primary business is selling the computational power—the GPUs—that train and run these massive models. The industry is currently in a massive capital expenditure cycle driven by AI promises. To maintain this momentum, confidence must remain sky-high.

Huang's statement can be interpreted as a powerful piece of market signaling. If the hardware provider suggests the *fundamental problem* of reliability is solved, it encourages enterprises to accelerate their timelines for full-scale LLM deployment, thereby increasing demand for the next generation of Nvidia hardware (like the Blackwell platform).

This creates a classic tension: Innovation Velocity demands maximal confidence, while Technical Integrity demands cautious realism.

For enterprise buyers—the target audience of these analyses—this requires skepticism. If a vendor claims the core problem is solved, yet technical papers show continuous, complex mitigation work, the burden falls on the buyer to demand proof of reliability in their specific use case, not just in general benchmarks.

Lessons from Tech History: Managing the Hype Cycle

This phenomenon is not unique to Generative AI. Looking back at the history of transformative technologies, we see recurring patterns of overly optimistic executive declarations during periods of intense investment.

Analyses of **"CEO statements on AI capability and market hype cycles"** often draw parallels to the early days of the internet or the initial promises of the Metaverse. When a technology is poised to reshape industries, leaders are incentivized to paint a picture of immediate, flawless realization rather than highlighting the messy integration period.

For society, this early over-promising can lead to two major risks:

- User Disillusionment: When early adopters deploy systems based on these promises and the systems fail (by hallucinating), it can lead to a backlash, slowing adoption despite genuine utility.

- Regulatory Misalignment: Policymakers often take executive pronouncements at face value, potentially creating regulation based on an idealized future state rather than the current, imperfect reality.

The current AI landscape requires a more mature perspective, one that acknowledges that true reliability is an ongoing engineering achievement, not a feature that simply arrives fully formed.

Future Implications: Towards Trustworthy AI Systems

If we accept that basic LLMs will always carry an inherent risk of hallucination, what does this mean for the future of AI usage?

1. The Architectural Shift to Verification

The focus will pivot away from simply building larger base models toward building smarter *system architectures* around them. The future belongs to systems that treat the LLM as a powerful reasoning engine but not a final authority. We will see standardization in complex RAG pipelines, external knowledge verification modules, and mandatory output confidence scoring integrated directly into enterprise APIs.

2. Specialization Over Generalization

In high-stakes domains—medicine, finance, law—general-purpose LLMs will likely be sidelined in favor of highly constrained, small-scale models or complex hybrid systems. These specialized systems will be trained or fine-tuned specifically on narrow, high-quality datasets where factual accuracy is measurable and verifiable. You might use a general model for drafting an email, but never for finalizing a contract.

3. The Demand for AI Auditors

As AI becomes mission-critical, the need for independent verification will explode. Just as we have external auditors for corporate finances, we will require **AI Audit Trails and Explainability Layers** that can trace an AI-generated statement back through its context retrieval and generation steps to prove its factual basis. The ability to *show your work* will become the premium feature.
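One way to picture such an audit trail is as a record stored alongside every answer. The sketch below is an assumption about what a minimal record might contain; the class name `AuditRecord` and its fields are hypothetical and not drawn from any existing standard or product.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    """Hypothetical audit entry linking an answer to its retrieved sources."""
    question: str
    retrieved_ids: list[str]   # documents the retrieval step returned
    answer: str
    cited_ids: list[str]       # documents the answer claims to rely on
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def unsupported_citations(self) -> list[str]:
        """Citations that were never retrieved: a red flag for fabrication."""
        return [c for c in self.cited_ids if c not in self.retrieved_ids]

    def to_json(self) -> str:
        """Serialize the record so it can be stored or reviewed later."""
        return json.dumps(asdict(self), indent=2)


record = AuditRecord(
    question="What is the approval limit for expenses?",
    retrieved_ids=["expense-policy"],
    answer="Expenses above 500 USD require manager approval [expense-policy].",
    cited_ids=["expense-policy", "travel-policy"],
)
print(record.unsupported_citations())
print(record.to_json())
```

A record like this does not prove that an answer is correct, but it gives an auditor something concrete to check: which sources were available, which ones the answer cites, and whether the two lists agree.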

Actionable Insights for Businesses Today

For any organization looking to integrate Generative AI beyond simple creative tasks, the recent claims serve as a critical reminder to stay grounded in current technical limitations:

- Assume Falsity Until Proven True: Do not deploy LLMs for tasks where 100% factual accuracy is required without implementing a robust grounding layer (like RAG) and a secondary human or automated verification step.

- Demand Transparency on Confidence: When evaluating vendor solutions, look past benchmark scores. Ask vendors what their system’s *confidence score* is for a given answer and how that score is calculated. If they cannot articulate it clearly, they are likely selling aspiration, not certainty.

- Invest in Data Hygiene: The quality of your grounding data is now paramount. Your AI is only as reliable as the trusted documents you give it to reference. A sophisticated RAG setup feeding bad data is a sophisticated hallucination machine.

- Train Your Teams in Prompt Engineering and Verification: Educate employees not just on how to ask good questions, but how to scrutinize the answers. The next layer of security is human vigilance checking AI output.
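The first two recommendations can be combined into a simple release policy. The sketch below is illustrative only: the `confidence` value stands in for whatever score a vendor or internal system exposes, and the 0.9 threshold, the `Route` names, and the function `route_answer` are assumptions chosen for the example.

```python
from enum import Enum


class Route(Enum):
    AUTO_RELEASE = "auto_release"
    HUMAN_REVIEW = "human_review"
    REJECT = "reject"


def route_answer(
    has_sources: bool,
    confidence: float,
    high_stakes: bool,
    threshold: float = 0.9,
) -> Route:
    """Decide what happens to an AI-generated answer before anyone relies on it."""
    # No grounding documents at all: treat the answer as unverified.
    if not has_sources:
        return Route.REJECT
    # High-stakes tasks and low-confidence answers always get a human check.
    if high_stakes or confidence < threshold:
        return Route.HUMAN_REVIEW
    return Route.AUTO_RELEASE


print(route_answer(has_sources=True, confidence=0.95, high_stakes=False))
print(route_answer(has_sources=True, confidence=0.95, high_stakes=True))
print(route_answer(has_sources=False, confidence=0.99, high_stakes=False))
```

The design choice worth noting is that a high confidence score never overrides missing sources or a high-stakes context; the score is one input to the decision, not the decision itself.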

Jensen Huang’s comments reflect the incredible speed of AI progress, powered by revolutionary hardware. But speed cannot substitute for reliability, especially as these tools move from novelty to necessity. The next frontier of AI innovation won't just be about building faster chips or bigger models; it will be about building *trustworthy* systems that bridge the chasm between confident assertion and verifiable truth.