The AI Frontier: Inside the Battle for Long-Term Dominance, Accountability, and Compute Power

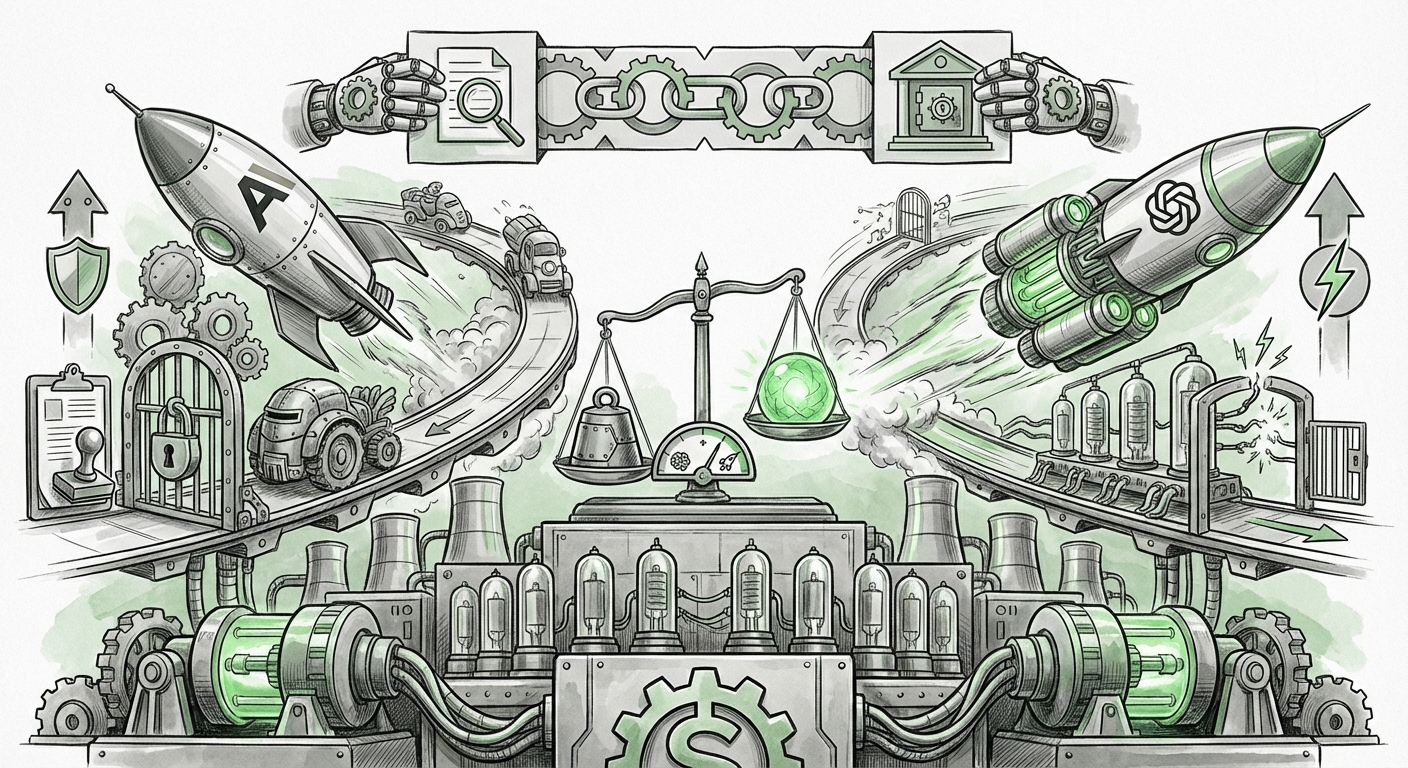

The artificial intelligence landscape is currently defined by a thrilling, yet deeply consequential, tension. On one side is an intense, capital-fueled race among frontier labs, most notably Anthropic and OpenAI, vying for long-term supremacy. On the other is a growing recognition that this immense power demands robust governance, which is pushing new accountability measures to the forefront. Recent developments suggest that the future of AI will be determined not by model performance alone, but by a precarious balance between speed, safety, and structure.

The Long Horizon Battle: Rival Philosophies at Speed

The competition between Anthropic and OpenAI has moved beyond simple benchmarking. It is now a contest of foundational philosophy about how fast, and under what constraints, we should pursue Artificial General Intelligence (AGI). These two powerhouses, both heavily backed by tech giants (Anthropic by Amazon and Google, OpenAI by Microsoft), represent two distinct paths forward.

Anthropic, often seen as the more safety-conscious contender, champions a methodology rooted in Constitutional AI. This approach writes a set of guiding principles (a "constitution") into the training process: the model critiques and revises its own outputs against those principles, and AI-generated feedback replaces much of the human labeling otherwise needed to flag harmful responses. The aim is systems that are helpful, harmless, and honest without human reviewers having to correct every potential error. The company seeks to build trust through demonstrable safety protocols.
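
As Anthropic has described it publicly, the supervised phase of Constitutional AI has a model critique its own draft against a written principle and then revise it, with the revised outputs used as fine-tuning data. The Python sketch below illustrates only that critique-and-revise loop, under stated assumptions: `generate` is a hypothetical stand-in for any text-generation call, and the two principles and the toy stub are illustrative, not Anthropic's actual constitution or implementation.

```python
from typing import Callable, Dict

# Hypothetical stand-in for a language-model call: prompt text in, completion text out.
Generate = Callable[[str], str]

# Illustrative principles only; not Anthropic's actual constitution.
CONSTITUTION = [
    "Identify ways the response is harmful or unethical.",
    "Identify ways the response is dishonest or misleading.",
]


def critique_and_revise(generate: Generate, prompt: str, rounds: int = 1) -> Dict[str, str]:
    """Draft a response, then critique and revise it once per principle per round.

    Returns a (prompt, final revision) pair of the kind that would be
    collected as supervised fine-tuning data.
    """
    response = generate(prompt)
    for _ in range(rounds):
        for principle in CONSTITUTION:
            critique = generate(
                f"Prompt: {prompt}\nResponse: {response}\nCritique request: {principle}"
            )
            response = generate(
                f"Prompt: {prompt}\nResponse: {response}\nCritique: {critique}\n"
                "Revision request: Rewrite the response to address the critique."
            )
    return {"prompt": prompt, "revision": response}


if __name__ == "__main__":
    # Toy deterministic stub so the loop can run without a real model.
    def toy_model(text: str) -> str:
        if "Revision request" in text:
            return "revised answer"
        if "Critique request" in text:
            return "the draft could be clearer"
        return "draft answer"

    print(critique_and_revise(toy_model, "Explain how vaccines work."))
```

In the published method this supervised step is followed by a reinforcement-learning phase driven by AI-generated preference labels; the sketch leaves that phase out.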

Conversely, OpenAI continues its aggressive scaling trajectory. While safety remains a stated priority, their releases often prioritize pushing the boundaries of capability, leveraging massive compute resources to achieve state-of-the-art performance across complex reasoning tasks. The underlying strategic implication is clear: whoever achieves the first truly transformative leap in general reasoning capability may set the industry standard for years to come. This rivalry isn't just about who has the smarter chatbot; it’s about who dictates the architectural and ethical defaults of future intelligence.

Corroborating the Strategy: Beyond the Hype

To understand the depth of this strategic rift, one must look past marketing claims to the underlying architecture and investment decisions. Analyst focus has shifted to comparing the **frontier model safety alignment strategy** of both firms. For instance, when new models like Claude 3 Opus are released, experts scrutinize not just the performance gap against GPT-4 Turbo, but the explicit safety disclosures accompanying them. This constant comparison forces both labs to evolve their safety posture publicly, even as they internally race for the next technological breakthrough.

This intensity directly shapes the market. For technology strategists and VCs, the choice is no longer just about performance ROI but also about alignment risk. A model with slightly lower performance but demonstrably superior safety governance might be preferred for enterprise deployment, a sign that how a model arrives at its answer is becoming as important as the answer itself.
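
One way to make that trade-off explicit in procurement is a weighted score. The Python sketch below is a minimal illustration, assuming a team has already normalized each candidate's benchmark results, and its own assessment of the vendor's published safety evidence, to the range 0 to 1; the candidate names, scores, and weight are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Candidate:
    name: str
    capability: float       # normalized benchmark score, 0..1 (team's own normalization)
    safety_evidence: float  # team's rating of published safety/alignment evidence, 0..1


def select_model(candidates: Sequence[Candidate], safety_weight: float) -> Candidate:
    """Pick the candidate with the highest weighted score.

    safety_weight in [0, 1] sets how much documented safety governance counts
    relative to raw capability for this deployment.
    """
    if not 0.0 <= safety_weight <= 1.0:
        raise ValueError("safety_weight must be between 0 and 1")

    def score(c: Candidate) -> float:
        return (1.0 - safety_weight) * c.capability + safety_weight * c.safety_evidence

    return max(candidates, key=score)


if __name__ == "__main__":
    pool = [
        Candidate("model_a", capability=0.92, safety_evidence=0.60),  # hypothetical
        Candidate("model_b", capability=0.88, safety_evidence=0.90),  # hypothetical
    ]
    print(select_model(pool, safety_weight=0.0).name)  # prints model_a (capability only)
    print(select_model(pool, safety_weight=0.5).name)  # prints model_b (governance weighted in)
```

With these made-up numbers, a moderate weight on safety evidence is enough to flip the choice to the slightly less capable model.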

The Governance Imperative: Forcing Accountability

As models become more capable, handling everything from complex legal drafting to drug discovery, the stakes of misuse or unforeseen failure rise sharply. This reality is fueling the push for concrete, auditable accountability mechanisms, moving the conversation beyond theoretical ethics and into practical engineering requirements. New organizations and tools, such as Goodfire and LayerLens, are emerging to fill this governance gap.

For too long, AI development operated largely behind closed doors. Now, there is a clear industry trend toward demanding external checks. This often involves:

- Model Auditing: Requiring third parties to test models for bias, security vulnerabilities, and adherence to specified performance bounds before deployment.

- Transparency Dashboards: Creating standardized reporting on training data provenance, computational requirements, and risk evaluations.

- Accountability Frameworks: Developing legal and technical standards that assign responsibility when an AI system causes harm.
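
The "specified performance bounds" in the auditing item can be as simple as a table of thresholds that an evaluation run must satisfy before release. A minimal Python sketch, assuming evaluation results arrive as a dictionary of metric scores; the metric names and thresholds are hypothetical and would in practice come from the auditor or the applicable regulation.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Bound:
    metric: str
    minimum: Optional[float] = None  # fail if the measured value is below this
    maximum: Optional[float] = None  # fail if the measured value is above this


def audit(results: Dict[str, float], bounds: List[Bound]) -> List[str]:
    """Check evaluation results against declared bounds.

    Returns human-readable violations; an empty list means every bound was met.
    A metric that was never measured counts as a violation, not a pass.
    """
    violations: List[str] = []
    for b in bounds:
        if b.metric not in results:
            violations.append(f"{b.metric}: not measured")
            continue
        value = results[b.metric]
        if b.minimum is not None and value < b.minimum:
            violations.append(f"{b.metric}: {value} below minimum {b.minimum}")
        if b.maximum is not None and value > b.maximum:
            violations.append(f"{b.metric}: {value} above maximum {b.maximum}")
    return violations


if __name__ == "__main__":
    # Hypothetical metrics and thresholds for illustration.
    bounds = [
        Bound("task_accuracy", minimum=0.90),
        Bound("jailbreak_success_rate", maximum=0.05),
        Bound("demographic_parity_gap", maximum=0.02),
    ]
    results = {"task_accuracy": 0.91, "jailbreak_success_rate": 0.07}
    for line in audit(results, bounds):
        print(line)
```

Treating an unmeasured metric as a failure is a deliberate design choice: an audit that silently skips missing tests gives false assurance.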

The Ecosystem of Oversight

This movement is supported by evolving global policy. Independent audit tools fit directly into emerging regulatory structures, such as the evolving mandates of the **EU AI Act**, and into voluntary commitments and testing arrangements involving bodies like the **AI Safety Institute**. Policymakers, compliance officers, and ethics professionals are urgently seeking these tools because near-term regulatory compliance is likely to rely heavily on verifiable, third-party assurance that models are operating within guardrails.

The implication for business is significant: "black box" deployment is becoming synonymous with liability risk. Companies must budget not only for AI acquisition but for the necessary compliance layers—the governance frameworks that confirm the AI is doing what it is supposed to do.

Technical Velocity: The Engine of the Race

The strategic competition described above depends entirely on continuous technical innovation. The recent cadence of major AI releases suggests that breakthroughs are accelerating, often driven by efficiency gains that let labs deploy very large models more affordably, or with greater capability, than previously thought possible.

Two critical technical domains currently define this velocity:

- Mixture of Experts (MoE) Architectures: An MoE model splits much of its network into many "expert" subnetworks and uses a learned router to send each token to only a few of them, so only a fraction of the model's parameters is active for any given input. This sharply reduces the compute needed per token compared with a dense model of the same total size. It is a key enabler for labs to deploy models that are both vast in knowledge and relatively fast in execution, offering an alternative to the brute-force dense scaling of the past.

- Long Context Windows: The ability of a model to "remember" and reason over entire books, vast code repositories, or months of conversation is transforming utility. Models that can handle millions of tokens are moving AI from being a sophisticated autocomplete tool to a genuine collaborator capable of processing entire organizational knowledge bases.
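
The routing idea in the MoE item can be sketched in a few lines of plain Python. This is a toy, single-token illustration of top-k gating in the style used by sparse MoE models (Mixtral, for example, sends each token to 2 of its 8 experts per layer); the tiny vectors, the linear gate, and the stand-in "experts" are assumptions for illustration, not any lab's implementation.

```python
import math
from typing import Callable, List, Sequence

Vector = List[float]
Expert = Callable[[Vector], Vector]


def softmax(xs: Sequence[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x: Vector, gate_weights: List[Vector], experts: List[Expert], k: int = 2) -> Vector:
    """Sparse mixture-of-experts forward pass for a single token vector x.

    A linear gate scores every expert, but only the top-k experts are executed.
    Their outputs are mixed using softmax weights renormalized over those k experts.
    Assumes each expert maps a vector to one of the same length.
    """
    logits = [sum(w_i * x_i for w_i, x_i in zip(w, x)) for w in gate_weights]
    top = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:k]
    weights = softmax([logits[i] for i in top])
    out = [0.0] * len(x)
    for weight, i in zip(weights, top):
        y = experts[i](x)  # the other len(experts) - k experts are never called
        out = [o + weight * y_j for o, y_j in zip(out, y)]
    return out


def make_scaling_expert(scale: float) -> Expert:
    # Stand-in "expert": a real one would be a feed-forward network.
    return lambda v: [scale * v_i for v_i in v]


if __name__ == "__main__":
    experts = [make_scaling_expert(s) for s in (1.0, 2.0, 3.0, 4.0)]
    gate = [[0.0, 0.0], [1.0, 0.0], [5.0, 0.0], [0.5, 0.0]]  # hypothetical gate weights
    print(moe_forward([1.0, 0.0], gate, experts, k=1))  # only expert 2 runs: [3.0, 0.0]
    print(moe_forward([1.0, 0.0], gate, experts, k=2))  # experts 2 and 1 run, mixed by gate weights
```

Because compute scales with the k experts actually executed, a layer with 8 experts and k = 2 runs a quarter of its expert parameters per token while still storing all of them. In real models the router is learned during training, and an auxiliary load-balancing term is typically added so tokens do not all pile onto the same experts.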

These technical advancements are the currency of the AI arms race. They translate directly into competitive advantage. For engineers and deep tech investors, understanding the adoption of **MoE models** and the expansion of **long-context window modeling** is crucial, as these are the innovations separating today’s powerful tools from tomorrow’s revolutionary systems.

The Hard Reality: Compute as the Ultimate Gatekeeper

While code and algorithms matter, the AI frontier is currently constrained by physics and finance. The battle for long-term dominance is fundamentally a battle for compute access.

The sheer scale of training and iterating on frontier models requires staggering investment in specialized hardware, primarily high-end GPUs. Consequently, the **capital expenditure on AI infrastructure** has become the most significant barrier to entry. Major players like Microsoft, Google, and Amazon are not just investing in AI research; they are reshaping global semiconductor supply chains.

This concentration of resources creates an oligopoly. Only those with access to tens of billions of dollars in capital can afford the training runs necessary to stay competitive. For financial analysts and industry observers, the **semiconductor demand outlook** directly maps onto the pacing of AI progress. The continued dominance of leading chip manufacturers is not an anomaly; it is the necessary scaffolding for the entire cutting-edge AI industry.
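
A widely used rule of thumb from the scaling-law literature makes the scale of this investment concrete: training a dense transformer with N parameters on D tokens takes roughly C ≈ 6·N·D floating-point operations. The Python sketch below converts that into GPU-hours; the model size, token count, per-GPU peak throughput, and utilization are hypothetical inputs chosen for round arithmetic, not figures for any real model or chip.

```python
def training_gpu_hours(params: float, tokens: float, peak_flops_per_gpu: float, utilization: float) -> float:
    """Rough GPU-hours for one training run of a dense transformer.

    Uses the approximation C ~= 6 * N * D total FLOPs (forward plus backward pass),
    divided by the throughput a GPU actually sustains (peak * utilization).
    """
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    total_flops = 6.0 * params * tokens
    sustained_flops_per_second = peak_flops_per_gpu * utilization
    return total_flops / sustained_flops_per_second / 3600.0


if __name__ == "__main__":
    # Hypothetical: 70B parameters, 2T tokens, 1e15 FLOP/s peak per GPU, 40% utilization.
    hours = training_gpu_hours(70e9, 2e12, 1e15, 0.40)
    print(f"{hours:,.0f} GPU-hours")  # about 583,333, i.e. roughly 24 days on 1,000 GPUs
```

The estimate covers a single final run; the experiments, failed runs, and inference serving around it add substantially to the bill, which is part of why only the best-capitalized players can keep pace.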

What This Means for the Future of AI and How It Will Be Used

The current confluence of intense competition, mandatory governance, and immense resource consumption points to three major implications for the future:

1. Bifurcation of the AI Market

We are moving toward a clear split in the AI market. On one end will be the hyperscale, frontier models (the results of the OpenAI/Anthropic battle), which will handle the most complex, general reasoning tasks. These will be accessed via high-cost APIs and will drive scientific discovery and radical new product categories.

On the other end will be highly optimized, smaller, specialized models (including some MoE variants) deployed locally or on private clouds. These models will be tailored for specific enterprise needs, such as legal review or manufacturing control, and their primary selling point will be compliance and data privacy rather than raw intelligence.

2. Governance as a Feature, Not a Friction Point

In the near future, successful AI deployment will require embedding accountability tools—like those being developed by the ecosystem surrounding Goodfire and LayerLens—directly into the application layer. Businesses won't simply buy a model; they will purchase a certified stack. The ability to prove to regulators that your AI system has been independently audited for fairness and accuracy will become a prerequisite for market access, similar to achieving ISO certification in manufacturing.

3. The Compute Cold War

The reliance on massive infrastructure means that AI progress will remain tethered to geopolitical stability and the availability of high-end silicon. Companies that fail to secure multi-year compute contracts will rapidly fall behind. This elevates infrastructure strategy to the level of product strategy. For business leaders, securing resilient, diversified access to AI compute, even if it means co-investing in hardware fabrication or new chip architectures, will be critical for long-term viability.

Actionable Insights for Navigating the Next Era

To thrive in this rapidly evolving environment, organizations must take proactive steps:

- Evaluate Alignment Risk: When selecting models, prioritize vendors who openly discuss and provide evidence of their safety and alignment methodologies (Anthropic's style), even if competitors offer marginally higher raw benchmarks.

- Build for Auditability: Assume regulatory scrutiny is coming. Start documenting data pipelines, model versions, and testing methodologies now. Choose tooling that facilitates external review rather than hiding model internals.

- Diversify Compute Strategy: Do not rely on a single cloud provider for frontier access. Explore partnerships or hybrid solutions that hedge against both technical limitations and supply chain shocks in the semiconductor market.
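
For the "Build for Auditability" step, even a lightweight record of which model version was evaluated, on which data, and with which results, is a useful start. A minimal Python sketch, assuming records are kept in a hash-chained, append-only log so later edits are detectable; the field names and classes are hypothetical, not a regulatory format or an existing library.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass(frozen=True)
class AuditRecord:
    model_version: str
    dataset_sha256: str            # fingerprint of the training/eval data manifest
    eval_results: Dict[str, float]
    notes: str = ""


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


class AuditLog:
    """Append-only log in which each entry's hash also covers the previous entry's hash."""

    def __init__(self) -> None:
        self.entries: List[dict] = []

    def append(self, record: AuditRecord) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        record_dict = asdict(record)
        body = json.dumps({"prev": prev, "record": record_dict}, sort_keys=True)
        digest = sha256_hex(body.encode("utf-8"))
        self.entries.append({"prev": prev, "record": record_dict, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = json.dumps({"prev": prev, "record": entry["record"]}, sort_keys=True)
            if entry["prev"] != prev or sha256_hex(body.encode("utf-8")) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    log.append(AuditRecord("model-v1.0", sha256_hex(b"training-manifest-v1"), {"task_accuracy": 0.91}))
    log.append(AuditRecord("model-v1.1", sha256_hex(b"training-manifest-v2"), {"task_accuracy": 0.93},
                           notes="retrained after bias review"))
    print(log.verify())  # True
    log.entries[0]["record"]["eval_results"]["task_accuracy"] = 0.99  # simulate a quiet edit
    print(log.verify())  # False
```

A hash chain only makes tampering evident; it does not prevent someone from rewriting the entire log, so in practice the latest hash would also be stored somewhere the log's owner cannot alter, such as with an external auditor.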

The AI ecosystem is currently balanced precariously between boundless ambition and necessary restraint. The outcome of the strategic battle between the frontier labs will define the speed of innovation, but the success of the accountability movement will define the stability and trustworthiness of the resulting technology.