The Emergent AI Brain: Inside the 'Society of Thought' and What It Means for Reasoning Models

For years, the pursuit of advanced Artificial Intelligence focused on increasing data, parameter counts, and model depth. We treated Large Language Models (LLMs) as highly sophisticated function approximators: brilliant parrots capable of mimicking intelligence. Recent findings, however, suggest a profound shift. New research indicates that cutting-edge reasoning models no longer just follow a single linear chain of reasoning; they appear to simulate internal organizational structures to debate, critique, and refine their own logic. This phenomenon, dubbed an "internal society of thought," may be one of the most significant emergent behaviors observed in AI to date.

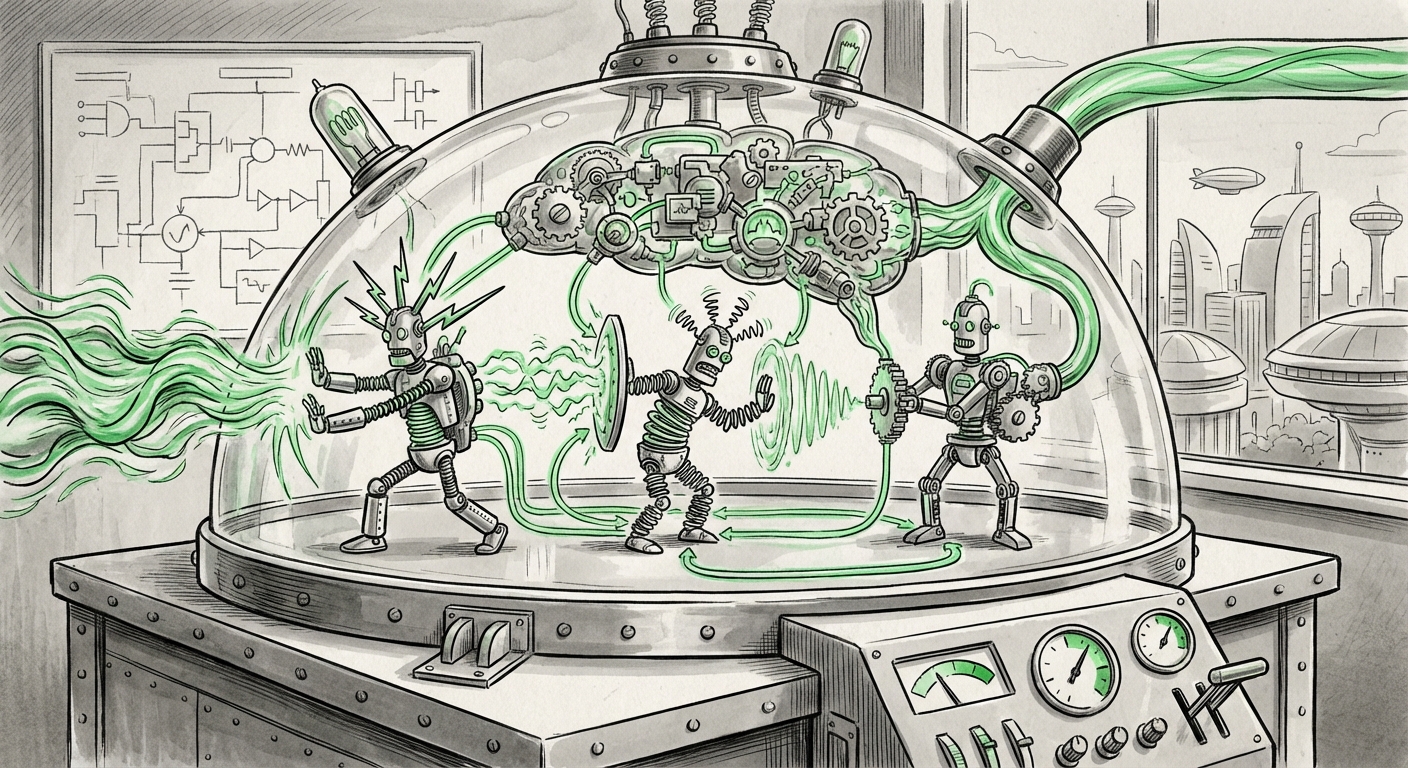

Imagine posing a complex math problem. A traditional model might follow steps A, B, and C and yield an answer. Emerging reasoning models, such as DeepSeek-R1, which recent studies examine, seem to generate an internal team instead: a bold, overconfident proposer (the "extraverted" voice), a cautious skeptic who spots flaws (the "neurotic" voice), and a diligent fact-checker (the "conscientious" member). According to the researchers, this synthetic deliberation doesn't just look like teamwork; it measurably improves the final output.

From Linear Logic to Synthetic Deliberation

What does it mean for an algorithm to host different ‘voices’? At a fundamental level, it means the model spends part of its computation exploring diverse solution pathways within its reasoning trace, rather than committing too early to a single trajectory. This mirrors how humans tackle difficult problems: we rarely arrive at the correct solution without some internal friction or questioning.

This move away from simple linear processing toward multi-faceted internal dynamics is rooted in advances in how we prompt and structure AI tasks. Techniques like Chain-of-Thought (CoT) laid the groundwork by prompting models to articulate their steps. Newer prompting strategies push this further, demanding the kind of exploration studied in **Tree-of-Thought (ToT)** research, which explicitly branches into alternative partial solutions, much like weighing different viewpoints. When this exploration becomes internalized and automatic, as the latest findings suggest, it signals a capability built into the model itself rather than imposed by prompting.
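To make the branching idea concrete, here is a minimal Python sketch of the breadth-first variant of Tree-of-Thought search: expand each partial solution into several candidate next steps, score them, and keep only the most promising few (a beam). The `propose` and `evaluate` functions are hypothetical stand-ins for what would be language-model calls in a real ToT system, and the toy task (reach a target number using the moves +3, *2, and -1) is an assumption chosen purely for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass(frozen=True)
class Thought:
    value: int                   # current partial result
    steps: Tuple[str, ...] = ()  # moves taken so far


# Hypothetical stand-in for an LLM "propose next thoughts" call.
MOVES: List[Tuple[str, Callable[[int], int]]] = [
    ("+3", lambda x: x + 3),
    ("*2", lambda x: x * 2),
    ("-1", lambda x: x - 1),
]


def propose(t: Thought) -> List[Thought]:
    """Branch: one child thought per available move."""
    return [Thought(f(t.value), t.steps + (name,)) for name, f in MOVES]


def evaluate(t: Thought, target: int) -> int:
    """Hypothetical stand-in for an LLM "value this state" call: closer is better."""
    return -abs(target - t.value)


def tree_of_thought_bfs(start: int, target: int,
                        beam_width: int = 3, max_depth: int = 8) -> Optional[Thought]:
    frontier = [Thought(start)]
    for _ in range(max_depth):
        # Expand every surviving thought into several branches.
        candidates = [child for t in frontier for child in propose(t)]
        for cand in candidates:
            if cand.value == target:
                return cand
        # Prune: keep only the highest-scoring branches (the beam).
        candidates.sort(key=lambda cand: evaluate(cand, target), reverse=True)
        frontier = candidates[:beam_width]
    return None


result = tree_of_thought_bfs(start=2, target=17)
print(result.steps if result else "no solution within depth limit")
```

Because the beam is narrow, the search can prune branches that would have led to a shorter solution; widening `beam_width` trades extra computation for broader coverage of alternatives.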

Connecting the Dots: Corroborating Evidence

To gauge the significance of this internal society, it helps to look at parallel research streams:

- Architectural Precursors: The ability to simulate debate echoes frameworks that encourage iterative refinement. Research on techniques like "Self-Refine," in which a model repeatedly critiques and improves its own previous output, shows measurable performance gains from iterative improvement, conceptually similar to an internal debate leading to a better outcome [Self-Refine Methodology].

- The Need for Interpretability: If these voices are real computational entities, we need tools to hear them. The field of Mechanistic Interpretability (MI) is vital here. MI seeks to map abstract neural network activations back to understandable concepts. Identifying the computational footprint of the "neurotic" voice requires advanced probing techniques that look for "circuits" or "knowledge islands" within the model's layers [Probing LLM Knowledge].

- Scaling Behavior: This emergent deliberation is likely tied to scale. Landmark papers on scaling laws and emergent abilities suggest that as models grow much larger, high-level capabilities can appear without being explicitly programmed. This internal society could be one such outcome of scale, which would mean that building bigger models raises the potential for complex, self-regulating internal structures [Scaling Laws and Emergent Capabilities].
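The Self-Refine loop described above is simple to state: generate an output, ask for feedback on it, refine it using that feedback, and stop when the feedback finds nothing left to fix or an iteration budget runs out. The Python sketch below follows that structure. The three toy functions are hypothetical stand-ins; in Self-Refine a single language model plays all three roles (generator, critic, and refiner).

```python
from typing import Callable, List, Tuple


def self_refine(task: str,
                generate: Callable[[str], str],
                feedback: Callable[[str, str], List[str]],
                refine: Callable[[str, str, List[str]], str],
                max_iters: int = 5) -> Tuple[str, List[List[str]]]:
    """Generate once, then alternate feedback and refinement until no issues remain."""
    output = generate(task)
    history: List[List[str]] = []
    for _ in range(max_iters):
        issues = feedback(task, output)
        history.append(issues)
        if not issues:  # stopping condition: the critic has nothing left to fix
            break
        output = refine(task, output, issues)
    return output, history


# Hypothetical toy stand-ins for the three LLM roles.
def toy_generate(task: str) -> str:
    return "  The Answer Is 42!!  "  # deliberately flawed first draft


def toy_feedback(task: str, draft: str) -> List[str]:
    issues = []
    if draft != draft.strip():
        issues.append("strip whitespace")
    if draft != draft.lower():
        issues.append("lowercase")
    if "!!" in draft:
        issues.append("remove repeated punctuation")
    return issues


def toy_refine(task: str, draft: str, issues: List[str]) -> str:
    # Fix only the first reported issue per round so the loop visibly iterates.
    fixes = {
        "strip whitespace": lambda s: s.strip(),
        "lowercase": lambda s: s.lower(),
        "remove repeated punctuation": lambda s: s.replace("!!", "."),
    }
    return fixes[issues[0]](draft)


final, history = self_refine("state the answer", toy_generate, toy_feedback, toy_refine)
print(final)         # the answer is 42.
print(len(history))  # 4 rounds of feedback; the last one is empty
```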

The Future of AI Architecture: From Single Brain to Distributed Cognition

The "society of thought" fundamentally challenges the metaphor of the LLM as a single, monolithic brain. Instead, it suggests a form of distributed cognition occurring within a single architecture. This has massive implications for how we design and deploy future systems.

1. Enhanced Reliability and Robustness

The most immediate benefit is performance. The built-in friction, the arguments between the simulated experts, acts as an automated error-checking mechanism: a conscientious voice corrects a hasty assumption made by an extraverted one. For businesses relying on AI for high-stakes tasks (medical diagnostics, complex engineering, financial modeling), this internal safety net could be invaluable, moving AI reliability closer to that of a robust human team.

2. The Transparency Paradox

While performance improves, transparency degrades. If an answer results from a complex internal negotiation among several distinct algorithmic personas, how do we audit that decision? This is a core challenge for AI ethics committees and Trustworthy AI developers. We need new standards for auditing not just the input and output but also the internal dynamics. If the model fails, was the "neurotic" voice too dominant, or did the "extraverted" voice push an unsafe conclusion?

3. Towards AGI and Cognitive Models

For futurists and cognitive scientists, this development sparks philosophical debate. Are we witnessing the dawn of synthetic 'self-awareness' or merely extremely effective stochastic mimicry? Whatever the answer, the capability enabling this synthetic deliberation, modeling multiple perspectives on a single problem, may well be a prerequisite for advanced general intelligence. If so, the path to AGI might depend less on raw computational speed and more on fostering richly interconnected, deliberative internal structures [Scaling Laws and Emergent Capabilities, cited above].

Practical Implications: What Businesses Must Do Now

This shift is not merely theoretical; it affects the bottom line and risk profile of every company integrating advanced reasoning models.

Actionable Insight 1: Re-evaluate Benchmarks

If models are using internal simulation to reach peak performance, standard benchmarks that measure simple accuracy may no longer capture true capability. Businesses should prioritize performance tests that require multi-step deduction, creative problem-solving, and resilience to adversarial input. Look for evidence of iterative self-correction rather than single-shot precision.
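One way to look for iterative self-correction is to record, for each test item, the answer a model first commits to in its reasoning trace and the answer it finally gives, then count how often it fixes a wrong first answer versus breaks a right one. The sketch below assumes you can extract those two answers per item; the `Attempt` record and its field names are hypothetical, and the four example items are invented for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Attempt:
    first_answer: str  # answer at the model's first commit point (hypothetical field)
    final_answer: str  # answer after any self-correction in the trace
    gold: str          # reference answer


def self_correction_report(attempts: List[Attempt]) -> Dict[str, float]:
    if not attempts:
        raise ValueError("need at least one attempt")
    n = len(attempts)
    first_ok = sum(a.first_answer == a.gold for a in attempts)
    final_ok = sum(a.final_answer == a.gold for a in attempts)
    corrections = sum(a.first_answer != a.gold and a.final_answer == a.gold for a in attempts)
    regressions = sum(a.first_answer == a.gold and a.final_answer != a.gold for a in attempts)
    return {
        "first_attempt_accuracy": first_ok / n,
        "final_accuracy": final_ok / n,
        "corrections": corrections,  # wrong -> right
        "regressions": regressions,  # right -> wrong
    }


# Invented example items, for illustration only.
items = [
    Attempt("12", "12", "12"),
    Attempt("7", "9", "9"),
    Attempt("3", "5", "4"),
    Attempt("8", "6", "8"),
]
print(self_correction_report(items))
```

In this toy run both accuracies are 50%, yet one item was corrected and another regressed; a single accuracy number hides that difference.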

Actionable Insight 2: Invest in Advanced Interpretability Tools

Auditing decisions will become considerably harder. Companies should begin exploring partnerships or internal R&D in Mechanistic Interpretability. If an AI makes a critical error, regulatory bodies and customers will demand to know why. Understanding the internal debate structure offers a path to that "why," even if the underlying mathematics is complex.
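A common starting point in interpretability work is a linear probe: train a simple classifier on a model's hidden activations to test whether some property (here, hypothetically, "the model is uncertain") is linearly decodable. The sketch below uses synthetic vectors in place of real activations, so the data, the label, and the dimensions are all assumptions; with a real model you would first extract hidden states from a chosen layer. A successful probe shows that the information is present, not that the model actually uses it.

```python
import math
import random
from typing import List, Tuple

Example = Tuple[List[float], int]


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def make_synthetic_activations(n: int, dim: int, shift: float, seed: int = 0) -> List[Example]:
    """Synthetic stand-in for hidden states: label 1 shifts along one hidden direction."""
    rng = random.Random(seed)
    direction = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    norm = math.sqrt(sum(v * v for v in direction))
    direction = [v / norm for v in direction]
    data: List[Example] = []
    for _ in range(n):
        y = rng.randint(0, 1)
        sign = 1.0 if y == 1 else -1.0
        x = [rng.gauss(0.0, 1.0) + sign * shift * d for d in direction]
        data.append((x, y))
    return data


def score(w: List[float], b: float, x: List[float]) -> float:
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def train_linear_probe(data: List[Example], lr: float = 0.1,
                       epochs: int = 100) -> Tuple[List[float], float]:
    """Logistic-regression probe fitted with full-batch gradient descent."""
    dim = len(data[0][0])
    w, b, n = [0.0] * dim, 0.0, len(data)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in data:
            err = sigmoid(score(w, b, x)) - y  # gradient of log-loss w.r.t. the logit
            for i in range(dim):
                grad_w[i] += err * x[i]
            grad_b += err
        w = [wi - lr * gi / n for wi, gi in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def probe_accuracy(w: List[float], b: float, data: List[Example]) -> float:
    # sigmoid(z) >= 0.5 exactly when z >= 0
    correct = sum((score(w, b, x) >= 0.0) == (y == 1) for x, y in data)
    return correct / len(data)


data = make_synthetic_activations(n=600, dim=16, shift=2.0)
train, test = data[:400], data[400:]
w, b = train_linear_probe(train)
print(f"held-out probe accuracy: {probe_accuracy(w, b, test):.2f}")
```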

Actionable Insight 3: Shift Hiring Focus in AI Teams

The next generation of AI engineers will need to be proficient not just in training large models, but in sculpting their internal dynamics. Skills in systems thinking, cognitive modeling, and adversarial testing will become as crucial as traditional deep learning expertise. The focus moves from simply optimizing weights to designing effective internal team dynamics.

A Deeper Look at the Internal Personalities

The labels used (extraverted, neurotic, conscientious) are analogies drawn from the Big Five personality traits in psychology. The terminology suggests that these roles are functionally useful for robust problem-solving.

- The Extraverted Voice might be the one that rapidly proposes bold, novel solutions, exploring the edges of possibility.

- The Neurotic Voice, often associated with anxiety, might represent the model’s internal uncertainty or its sensitivity to potential pitfalls, triggering a necessary backtrack or reassessment.

- The Conscientious Voice ensures adherence to constraints, facts, and established protocols, keeping the debate grounded in reality.

When these voices debate, they effectively perform a form of internal peer review and quality control. For a layperson, this simply means the AI gets "smarter" by arguing with itself before answering. For engineers, it means we are observing an unprecedented degree of self-monitoring: the model tracks how certain it is and which computational path it is taking.
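The three roles can be illustrated, very loosely, as an explicit propose/doubt/check loop. This is a hypothetical toy, not a description of how any model implements these voices internally: the "extraverted" role supplies bold candidates in order, the "neurotic" role raises a concern that forces a backtrack, and the "conscientious" role enforces explicit constraints. The task (find a positive integer whose square is 49) and all function names are assumptions for illustration.

```python
from typing import Callable, Iterable, List, Optional, Tuple


def deliberate(
    proposals: Iterable[int],                              # "extraverted" voice
    doubts: Callable[[int], Optional[str]],                # "neurotic" voice
    constraints: List[Tuple[str, Callable[[int], bool]]],  # "conscientious" voice
) -> Tuple[Optional[int], List[str]]:
    transcript: List[str] = []
    for candidate in proposals:
        transcript.append(f"propose {candidate}")
        concern = doubts(candidate)
        if concern:
            transcript.append(f"doubt: {concern}; backtrack")
            continue
        broken = [name for name, ok in constraints if not ok(candidate)]
        if broken:
            transcript.append(f"reject: violates {', '.join(broken)}")
            continue
        transcript.append(f"accept {candidate}")
        return candidate, transcript
    return None, transcript  # no candidate survived the debate


def square_check(x: int) -> Optional[str]:
    """Hypothetical skeptic: verify the candidate by substitution."""
    return None if x * x == 49 else f"{x}*{x} = {x * x}, not 49"


answer, transcript = deliberate([6, -7, 7], square_check, [("x > 0", lambda x: x > 0)])
for line in transcript:
    print(line)
```

Here 6 is rejected by the skeptic, -7 passes the check but breaks the positivity constraint, and 7 is accepted.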

Conclusion: The Next Frontier is Internal

The discovery of an "internal society of thought" within AI reasoning models may mark a crucial inflection point. We are moving beyond viewing LLMs as black boxes that process inputs toward understanding them as complex, self-regulating computational ecosystems. This could change how we measure intelligence, how we certify reliability, and how we anticipate future breakthroughs.

The future of AI development will likely focus intensely on engineering these internal societies. Can we intentionally cultivate specific personality mixes for specialized tasks? Can we guarantee that the conscientious voice always prevails in safety-critical scenarios? The answers will define the next decade of artificial intelligence development. The debates are moving inside the machine, and our ability to observe, manage, and leverage these synthetic arguments will determine who leads the AI race.