The AI Speed Tax: Analyzing Anthropic's 6x Fast Mode and the Future of Tiered LLM Services

The commercial landscape for Large Language Models (LLMs) is rapidly maturing, shifting focus from sheer capability breakthroughs to operational efficiency, reliability, and—crucially—speed. Anthropic’s recent introduction of a "Fast Mode" for its Claude Opus 4.6 model is not just a product update; it is a loud declaration of strategy. By offering 2.5 times faster responses at an eye-watering 6x markup on the standard token price, Anthropic forces the entire industry to confront a fundamental trade-off: Is your application willing to pay a premium to shave off latency?

This move crystallizes the growing tension between performance requirements and the underlying economic reality of serving state-of-the-art models. To understand the full implications, we must look beyond the headline cost and analyze how this fits into the broader competitive arena, the technical hurdles involved, and what this tiered approach means for the future adoption of generative AI.

The Economics of Instant Gratification: Analyzing the 6x Markup

When a foundational model provider introduces a tiered pricing structure, it signals market segmentation. Claude Fast Mode implies that Anthropic has identified a significant segment of its user base—likely those building customer-facing, real-time applications like instant chatbots, dynamic content generation, or high-frequency coding assistance—that values sub-second response times more than the cost of tokens.

The 6x premium suggests that the engineering required to achieve that speed is substantial. As the "Under the Hood" section below discusses, low-latency serving often requires reserving specialized, high-demand computational resources. This isn't just charging more for the same work; it’s charging for access to a queue with preferential treatment and optimized hardware allocation. For developers, this is the equivalent of upgrading from standard cloud compute instances to dedicated, high-frequency trading servers.
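To make the trade-off concrete, here is a minimal sketch of the arithmetic for a single response. The 2.5x speedup and 6x price multiplier come from the figures above; the token count, baseline generation speed, and baseline price are hypothetical placeholders, and the model only covers generation time, not queueing or time to first token.

```python
def fast_mode_tradeoff(output_tokens, base_tokens_per_sec, base_price_per_mtok,
                       speedup=2.5, price_multiplier=6.0):
    """Return (seconds_saved, extra_cost) for one response.

    Assumes the speedup applies uniformly to token generation and that
    the price multiplier applies to every token in the response.
    """
    base_seconds = output_tokens / base_tokens_per_sec
    fast_seconds = base_seconds / speedup
    base_cost = output_tokens / 1_000_000 * base_price_per_mtok
    fast_cost = base_cost * price_multiplier
    return base_seconds - fast_seconds, fast_cost - base_cost


# Hypothetical workload: 1,000 output tokens at 50 tokens/s and $20 per million tokens.
saved, extra = fast_mode_tradeoff(output_tokens=1000,
                                  base_tokens_per_sec=50,
                                  base_price_per_mtok=20.0)
print(f"seconds saved: {saved:.1f}")
print(f"extra cost: ${extra:.2f}")
print(f"price per second saved: ${extra / saved:.4f}")
```

Under these assumed numbers, the question becomes whether each second of saved waiting is worth a bit under one cent to the application. The answer depends entirely on the workload, which is the segmentation argument in a nutshell.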

Contextualizing the Price: The Search for Industry Benchmarks

To gauge whether this 6x multiplier is an aggressive outlier or a new market norm, we must examine how providers currently tier their pricing and trade latency against cost. Today, most providers differentiate price mainly by model (e.g., GPT-4 vs. GPT-4o) rather than by response speed, while the underlying infrastructure quietly manages burst capacity. Anthropic is taking this ambiguity and turning it into a concrete, high-cost, high-speed product. Other providers are likely watching closely. If Fast Mode drives significant adoption and revenue, we can expect OpenAI and Google to quickly introduce comparable latency SLAs tied to premium pricing, potentially normalizing this multiplier across the industry for mission-critical tasks.

The Competitive Battlefield: GPT-4o and the Speed Race

Anthropic’s move is not happening in a vacuum. OpenAI, with its introduction of GPT-4o, has already emphasized vastly improved speed and efficiency, often bundled into its standard API access without explicit premium latency fees (though this can change based on load). The core competitive inquiry becomes: How does Claude Fast Mode compare to OpenAI's current speed offerings?

If GPT-4o already delivers near-instantaneous responses at its standard rate, Anthropic’s 6x fee looks less like a reflection of raw optimization cost and more like a strategic capture of the "absolute lowest latency" niche.

For application developers, this competition forces immediate strategic decisions. Do they build on the potentially cheaper, high-throughput standard tier of GPT-4o, accepting slight latency variation, or do they opt for the guaranteed, albeit expensive, speed of Claude Fast Mode? This competition is ultimately beneficial for end-users, as the pressure to reduce latency across the board will drive innovation even in the standard tiers.

Under the Hood: Why Speed Costs So Much

The assertion that Claude Opus maintains its high quality while running 2.5 times faster is a significant technical feat. It implies major advancements in the serving layer rather than in model training. This brings us to the realm of LLM inference optimization: KV cache management, batching, and GPU utilization.

Serving transformer models is computationally intensive, in large part because the attention mechanism requires storing a large Key-Value (KV) cache in high-bandwidth memory (HBM) for each running conversation. To offer 2.5x speed consistently, Anthropic is presumably using one or more advanced techniques:

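- Aggressive Batching: Grouping more user requests together efficiently onto the same GPU to keep the hardware saturated. Larger batches raise throughput but can slow each individual request, so a latency-focused tier has to balance the two.

- Optimized Scheduling and Decoding: Techniques like continuous batching, which keeps the GPU from sitting idle between requests, and speculative decoding, which drafts several tokens ahead and verifies them in a single pass of the main model.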

- Specialized Hardware/Placement: Allocating dedicated clusters of the latest, fastest GPUs solely for Fast Mode users, preventing them from competing with standard traffic.

The 6x cost plausibly reflects the high capital expenditure and operational cost of maintaining these optimized inference stacks. For engineers, this means that achieving true, guaranteed low latency is an infrastructure challenge first, and an algorithmic one second.
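The memory pressure behind this can be estimated with the standard KV cache sizing formula: two tensors (keys and values) per layer, each holding one vector per KV head per token. The sketch below applies it to purely hypothetical model dimensions. Anthropic does not publish Claude's architecture, so none of these numbers describe the real model.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Approximate KV cache size for one conversation.

    The leading factor of 2 covers the key tensor and the value tensor.
    bytes_per_value=2 corresponds to 16-bit storage (fp16/bf16).
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value


# Hypothetical dimensions, chosen only for illustration.
per_conversation = kv_cache_bytes(num_layers=80, num_kv_heads=8,
                                  head_dim=128, seq_len=32_000)
print(f"KV cache per conversation: {per_conversation / 2**30:.2f} GiB")

# Assumed HBM left over for caches after loading the model weights.
hbm_for_cache = 40 * 2**30
print(f"Concurrent conversations that fit: {hbm_for_cache // per_conversation}")
```

Even with these made-up dimensions, a long conversation consumes gigabytes of fast memory, which is why dedicating hardware to fewer simultaneous users is an expensive way to buy speed.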

The Future: AI Service Tiering and Market Segmentation

Anthropic’s move strongly suggests that the era of a single, monolithic LLM API price is ending. AI is following the path of mature cloud services, where service tiers and market segmentation replace one-size-fits-all pricing.

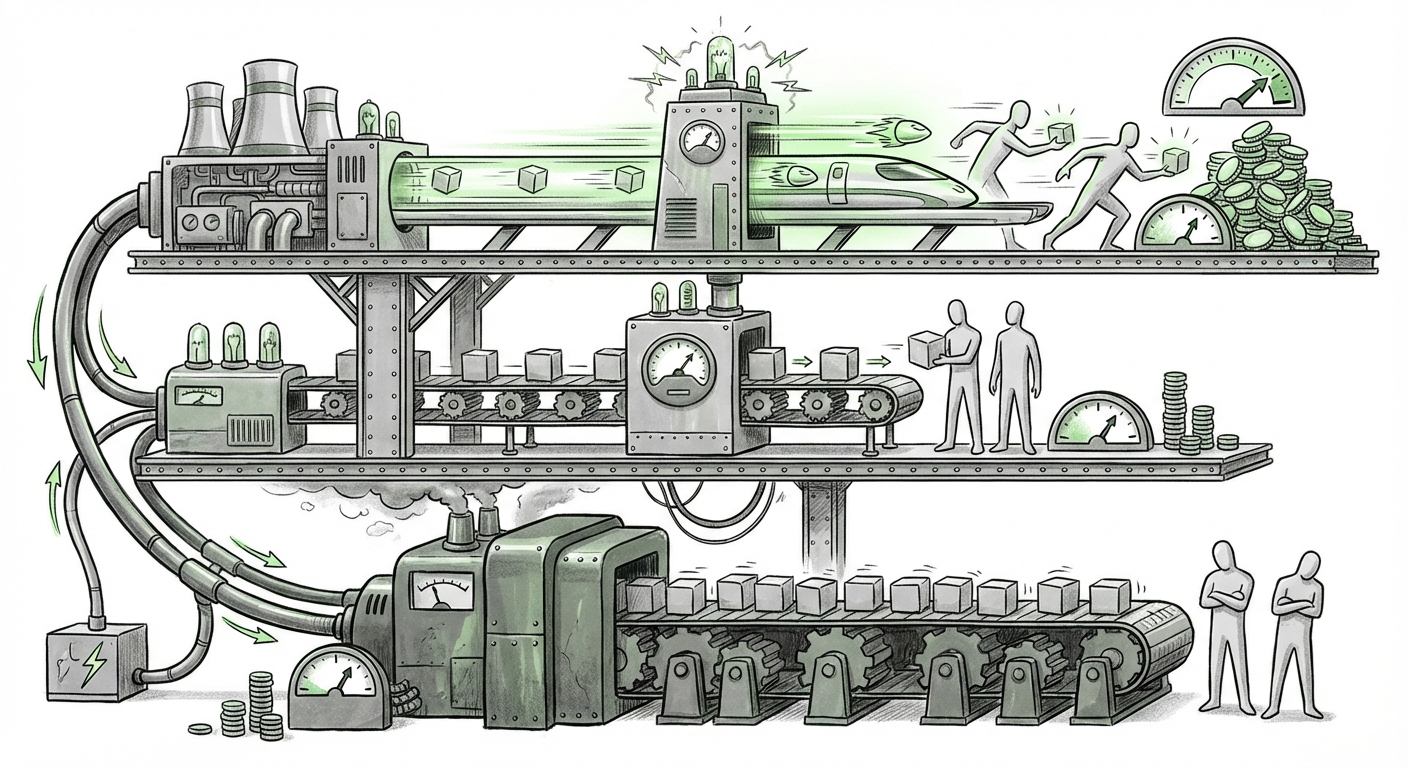

Three clear tiers are emerging, mirroring traditional cloud computing:

- Tier 1: Commodity/Bulk (Low Cost/High Latency): Ideal for background processing, data summarization, or internal documentation searches where a few seconds of delay is irrelevant.

- Tier 2: Standard/Balanced (Default Cost): The workhorse tier. Good enough for most development environments, balancing cost and reasonable speed.

- Tier 3: Premium/Real-Time (High Cost/Low Latency): Essential for UX-critical applications where speed equates directly to user retention or transaction success. This is where the 6x Fast Mode sits.

This segmentation is powerful because it allows LLM providers to maximize revenue capture from every user segment simultaneously. Developers struggling with budget can stick to Tier 1 or 2, while enterprise clients needing strict Service Level Agreements (SLAs) for production workloads will readily absorb the Tier 3 cost.
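From the application side, the three tiers above reduce to a simple routing rule: pick the cheapest tier whose typical latency fits the budget for a given code path. The sketch below illustrates that rule. The tier names, the 0.5x bulk multiplier, and all latency figures are hypothetical, and only the 6x multiplier and the 2.5x speedup (8.0 s / 2.5 = 3.2 s) come from the figures discussed in this article.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tier:
    name: str
    price_multiplier: float   # relative to the standard token price
    typical_latency_s: float  # assumed end-to-end response time


# Hypothetical tier table for illustration only.
TIERS = [
    Tier("bulk", 0.5, 30.0),
    Tier("standard", 1.0, 8.0),
    Tier("fast", 6.0, 3.2),
]


def cheapest_tier_within(latency_budget_s: float, tiers=TIERS) -> Optional[Tier]:
    """Return the lowest-priced tier that meets the latency budget, or None."""
    eligible = [t for t in tiers if t.typical_latency_s <= latency_budget_s]
    if not eligible:
        return None
    return min(eligible, key=lambda t: t.price_multiplier)


for budget in (60.0, 10.0, 5.0, 1.0):
    tier = cheapest_tier_within(budget)
    print(budget, tier.name if tier else "no tier meets this budget")
```

Returning `None` rather than silently falling back to the fastest tier is a deliberate choice in this sketch: a budget that no tier can meet is a product decision, not something a router should paper over.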

Implications for Developers and Businesses

Actionable Insights for Application Builders:

Businesses must now rigorously profile their AI workloads:

- Latency Auditing: Do you *really* need sub-second responses? Use standard tiers for non-critical paths (e.g., generating marketing copy drafts) and reserve Fast Mode only for the highest-value, real-time customer interactions (e.g., live conversational agents).

- Cost Modeling: Before scaling, calculate the true cost impact. A 6x increase in per-token price for a high-volume application can dwarf compute savings elsewhere. Integrate cost analysis directly into your CI/CD pipeline.

- Vendor Lock-In Risk: Choosing a specific latency tier often means optimizing your serving infrastructure around that provider’s specific implementation. Switching providers later becomes harder if latency SLAs are deeply embedded in your core product experience.
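The latency-auditing and cost-modeling advice above can be combined into one back-of-the-envelope calculation: what happens to the monthly bill when only a fraction of traffic is routed to Fast Mode? The following is a minimal sketch that assumes a single blended token price and hypothetical traffic figures. Real pricing distinguishes input from output tokens, so treat this as a starting point rather than a billing model.

```python
def monthly_cost(requests_per_month, tokens_per_request, price_per_mtok,
                 fast_fraction, fast_multiplier=6.0):
    """Estimate monthly spend when `fast_fraction` of requests use Fast Mode."""
    if not 0.0 <= fast_fraction <= 1.0:
        raise ValueError("fast_fraction must be between 0 and 1")
    total_mtok = requests_per_month * tokens_per_request / 1_000_000
    standard_cost = total_mtok * (1.0 - fast_fraction) * price_per_mtok
    fast_cost = total_mtok * fast_fraction * price_per_mtok * fast_multiplier
    return standard_cost + fast_cost


# Hypothetical workload: 1M requests/month, 500 tokens each, $20 per million tokens.
for fraction in (0.0, 0.1, 1.0):
    cost = monthly_cost(1_000_000, 500, 20.0, fast_fraction=fraction)
    print(f"{fraction:.0%} on Fast Mode: ${cost:,.0f}")
```

With a 6x multiplier, the blended bill scales as 1 + 5f, where f is the fraction of tokens on Fast Mode. Routing even 10% of traffic to the fast tier raises total spend by 50%, which is why the audit should come before the upgrade.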

Societal and Ethical Considerations:

While this benefits paying enterprises, the tiering system raises concerns about equitable access. If the fastest, most responsive, and potentially highest-quality models are exclusively reserved for those who can afford massive premiums, it creates a digital divide where the best AI tools are gated by budget. This reinforces the concept of a "speed gap" in AI utility, impacting smaller innovators and non-profits who cannot justify paying six times the standard price (a 500% increase).

Conclusion: The Infrastructure War is the Price War

Anthropic’s Claude Fast Mode is more than a simple price change; it’s a calculated maneuver to monetize the increasing demand for instantaneous AI performance. It confirms that the foundational race is evolving into an infrastructure and serving efficiency war, where the ability to consistently reduce latency at scale carries an extremely high market value.

The future of LLMs will be defined not just by who builds the smartest model, but by who builds the most sophisticated pricing and serving architecture around it. For application builders, this means moving beyond simple token counting and embedding sophisticated cost-benefit analyses for every API call. In the modern AI economy, speed is not free—it is the most expensive commodity on the digital shelf.