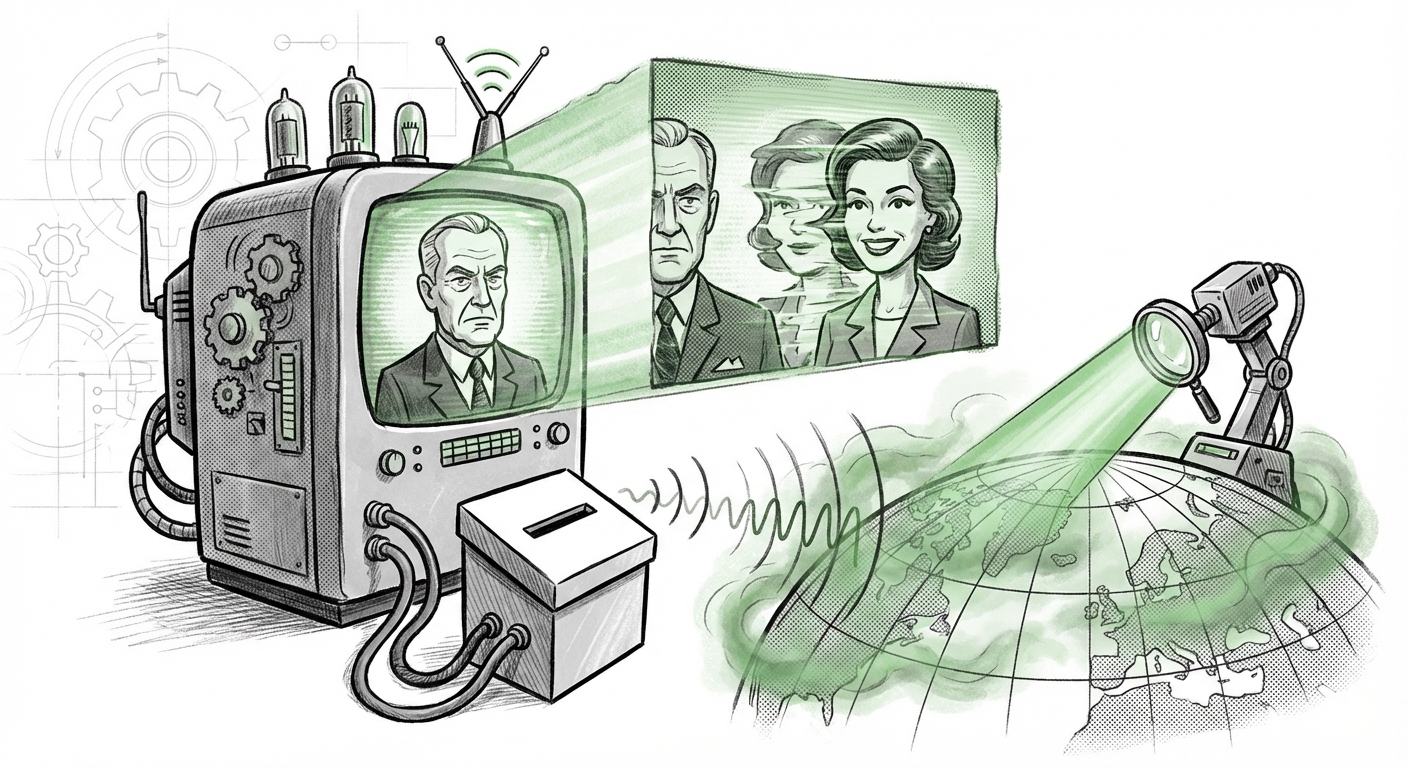

Deepfakes in Democracy: Why Japan's Election Signals a Global AI Crisis and How We Fight Back

The recent lower house election in Japan provided a stark, real-world stress test for the stability of modern democracy against the rising tide of generative Artificial Intelligence. Reports indicated that AI-generated fake videos were spreading rapidly across social platforms, and that more than half of surveyed respondents believed the falsehoods they contained. This isn't just a localized political headache; it is strong evidence that generative AI misinformation is now a mature tool of information warfare, well positioned to disrupt upcoming democratic processes worldwide.

As an AI technology analyst, I view this event not in isolation, but as part of an escalating global trend. If these tools can effectively sow confusion in a sophisticated, digitally mature society like Japan, the implications for every other nation preparing for elections in 2024 and 2025 are profound. We must move past simply observing the threat; we must analyze the global corroboration, assess the technological arms race, and understand the emerging policy responses.

The Global Echo Chamber: Corroborating the Threat

The idea that generative AI could undermine elections is no longer hypothetical; it is an operational reality. The Japanese instance acts as a powerful data point, validating concerns raised in other recent political environments.

To understand the scope, we must look at corroborating evidence from other crucial democratic battlegrounds. Analyzing global incidents allows us to identify common attack vectors and the speed at which these synthetic realities propagate. Searching for similar instances globally, using queries like `"generative AI" misinformation global elections "deepfake" 2024 2025`, turns up recurring echoes of the Japanese experience:

- Rapid Adaptation: Malicious actors are no longer using crude, easily identifiable deepfakes. They are leveraging sophisticated, fast-iteration models that create content tailored to local cultural nuances and specific political vulnerabilities.

- Impact on Trust: Across documented cases, the primary goal is often not just to convince voters of one specific lie, but to induce a state of "reality fatigue," where citizens become so overwhelmed by untrustworthy content that they stop believing anything, including verifiable facts from legitimate sources.

For businesses that rely on predictable socio-political environments, this instability means risk modeling must now account for sudden, algorithmically driven reputational shocks targeting executives or key market regions based on fabricated events.

The Technological Arms Race: Can Detection Keep Pace?

The core dilemma driving this crisis is the asymmetry between content creation and content verification. Creating highly convincing synthetic media is becoming cheaper, faster, and more accessible thanks to readily available generative models for text, images, audio, and video. Conversely, reliable detection remains resource-intensive and often lags behind the newest generation techniques.

When we examine technical countermeasures via searches like `AI deepfake detection limitations "watermarking" standards`, the technological reality is sobering. While researchers are making strides in developing digital forensics tools, they are constantly playing catch-up:

- The Evasion Problem: Once a detection signature is identified (e.g., subtle artifacts left by a specific deepfake model), the creators of the malicious AI simply train their next model version to eliminate that signature. This is a constant, costly cat-and-mouse game.

- The Watermarking Conundrum: Initiatives like the Coalition for Content Provenance and Authenticity (C2PA) define cryptographically signed provenance metadata attached at the point of creation, an approach often paired with digital watermarks. While promising, adoption is voluntary. If bad actors decline to attach verifiable credentials, which they will, the technology is only useful for certifying the "good" content, not debunking the "bad" content.
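
The asymmetry described in the watermarking point can be illustrated with a minimal sketch. It is not the C2PA API: real provenance systems rely on certificate-based public-key signatures, whereas this example substitutes a shared-secret HMAC from Python's standard library purely to stay self-contained. The key and the function names `sign_media` and `check_provenance` are hypothetical.

```python
import hashlib
import hmac
from typing import Optional

# Hypothetical publisher key. Assumption for illustration only: real
# provenance schemes use public-key signatures, not a shared secret.
PUBLISHER_KEY = b"demo-key-not-for-production"


def sign_media(media_bytes: bytes, key: bytes = PUBLISHER_KEY) -> str:
    """Return a hex tag that binds the publisher key to these exact bytes."""
    return hmac.new(key, media_bytes, hashlib.sha256).hexdigest()


def check_provenance(media_bytes: bytes, tag: Optional[str],
                     key: bytes = PUBLISHER_KEY) -> str:
    """Classify media as 'verified', 'invalid', or 'unknown'."""
    if tag is None:
        # No credential attached: nothing can be concluded either way.
        return "unknown"
    expected = sign_media(media_bytes, key)
    # Constant-time comparison avoids leaking how much of the tag matched.
    return "verified" if hmac.compare_digest(expected, tag) else "invalid"


original = b"official campaign video bytes"
tag = sign_media(original)
print(check_provenance(original, tag))            # verified
print(check_provenance(original + b"x", tag))     # invalid
print(check_provenance(b"deepfake bytes", None))  # unknown
```

The third call is the important one: unsigned content comes back as "unknown," not "fake." Provenance can confirm what a publisher did release, but it says nothing about material that was never signed.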

What This Means for the Future of AI: AI development cannot focus solely on capability; it must treat security and verifiability as core architectural pillars. We are moving toward a bifurcated internet: one highly verified ecosystem, and one completely untrustworthy swamp. Businesses must invest heavily in tools that authenticate their own communications rather than relying on external platforms to police the content generated by their competitors or adversaries.

The Governance Gap: The Search for Regulatory Guardrails

Given the speed of the technological threat, policy and regulation often feel far behind. However, some jurisdictions are attempting to get ahead of the curve, which offers a preview of future compliance landscapes. Searching for terms like `"EU AI Act" social media regulation election deepfakes` is a useful starting point for understanding global regulatory trajectories.

The EU AI Act sets transparency requirements for synthetic content, which matter most when that content is used to influence public opinion or elections. If an AI system generates an image or video that purports to show a real person doing or saying something they did not, those deploying it are generally required to disclose that the content has been artificially generated or manipulated.

The challenge for global platforms and technology developers is harmonization. A Japanese company operating globally must navigate the rapidly developing standards in Tokyo, while simultaneously preparing for the compliance deadlines set by Brussels. This regulatory fragmentation creates compliance overhead but also highlights a critical divergence in philosophical approaches:

- Proactive Regulation (EU Model): Defining high-risk use cases *before* mass harm occurs and enforcing transparency mandates.

- Reactive Response (Other Models): Addressing the damage after the fact, as seen in Japan, where misinformation had already taken hold before any response could be mounted.

For the future of AI, this means regulatory certainty, however strict, will eventually become a prerequisite for market access. Companies that embed ethical compliance and provenance into their AI pipelines now will hold a significant competitive advantage over those forced into costly retrofitting later.

Practical Implications for Business and Society

The crisis revealed in Japan has immediate, practical ramifications extending far beyond the political polling station.

For Corporate Reputation and Market Stability

Imagine a video, created hours before the stock market opens, in which the CEO of a major bank appears to announce bankruptcy or a massive layoff. The speed of social media sharing means that by the time the company verifies the video as fake, billions in market capitalization could be lost. Businesses must treat AI-generated defamation as an imminent operational risk.

Actionable Insight: Develop and drill "Deepfake Crisis Protocols." These protocols must include pre-vetted, rapid-response video statements from authorized spokespeople, ready to deploy across all verified channels within minutes of detecting a malicious synthetic event.

For Information Infrastructure (The Role of Platforms)

Social media and content hosting platforms are the primary distribution arteries for this weaponized misinformation. Their future viability depends on demonstrating a commitment to safety over pure engagement metrics. We will see increasing pressure, possibly mandated by law, to:

- Slow Down Virality: Implementing friction (such as mandatory waiting periods or additional checks) on content that is flagged as potentially synthetic, particularly during sensitive periods like elections or public health crises.

- Invest in Open Standards: Supporting verifiable content standards (like C2PA) not just as a feature, but as a platform necessity.
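
As a rough sketch of how the two measures above might interact, the following function decides how long to hold a reshare. Everything in it is an assumption made for illustration: the `Post` fields, the detector score, the thresholds, and the delay values are hypothetical and do not describe any platform's actual policy.

```python
from dataclasses import dataclass


@dataclass
class Post:
    synthetic_score: float  # hypothetical detector output in [0, 1]
    has_provenance: bool    # True if verifiable provenance data is attached


def share_delay_seconds(post: Post, sensitive_period: bool) -> int:
    """Return how many seconds to hold a reshare before it goes out."""
    if post.has_provenance:
        # Content with verifiable provenance is never slowed down.
        return 0
    # Assumed thresholds: stricter during elections or health crises.
    threshold = 0.5 if sensitive_period else 0.8
    if post.synthetic_score < threshold:
        return 0
    # Assumed delays: ten minutes in sensitive periods, one minute otherwise.
    return 600 if sensitive_period else 60


suspicious = Post(synthetic_score=0.6, has_provenance=False)
print(share_delay_seconds(suspicious, sensitive_period=True))   # 600
print(share_delay_seconds(suspicious, sensitive_period=False))  # 0
```

The design choice worth noting is that friction is applied to sharing speed, not to publication itself, and that verified content bypasses it entirely, which gives publishers an incentive to adopt open provenance standards.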

For the Individual Citizen: Cultivating Digital Literacy

The most powerful defense is a critically informed populace. The fact that over half of respondents believed the fakes in Japan underscores a massive literacy deficit. Teaching people *how* AI-generated content is made—the tell-tale signs, the context manipulation—is as important as building better detection software.

Actionable Insight for Society: Education curricula, corporate training modules, and public service announcements must evolve rapidly to teach "Synthetic Media Skepticism." The default setting must shift from "trust what you see" to "verify before sharing."

The Future Trajectory: AI as the Definitive Mediator of Truth

The Japanese election serves as the canary in the coal mine, signaling that the AI era is moving past novelty and into systemic disruption. The future of AI will be defined by this tension between generative power and verifiable truth.

We are entering a phase where trust itself becomes a premium commodity. AI systems will increasingly be used not just to create persuasive narratives, but also to act as the gatekeepers verifying reality. We will see the rise of "Verified AI" entities—services that use sophisticated models to analyze the provenance, context, and integrity of incoming data streams. These systems will become the essential infrastructure shielding legitimate communications from synthetic noise.

For technology developers, the mandate is clear: develop AI that is inherently verifiable and auditable. For policymakers, the mandate is to create flexible, international frameworks that can adapt faster than the technology itself. For society, the mandate is constant vigilance.

The age of "seeing is believing" is over. The next decade of technological progress will be defined by the difficult, necessary work of establishing a new baseline for digital trust in a world saturated with perfect, accessible synthetic reality.