The Digital Wild West: How Japan’s Election Exposed Generative AI’s Threat to Global Democracy

The recent lower house election in Japan was not just a political contest; it was a stress test for democracy in the age of artificial intelligence. According to reports, AI-generated fake videos (deepfakes) spread rapidly across social media, and more than half of surveyed respondents reportedly believed the fabricated news.

This incident should serve as a global alarm bell. Japan is not an anomaly; it is the current proving ground for a technology that is becoming cheaper, faster, and more convincing every quarter. As analysts, we must look beyond the headlines to understand the technical realities, the regulatory patchwork, and the profound geopolitical risks emerging from this new era of synthetic reality.

The Shocking Reality: Proof of Concept for Mass Deception

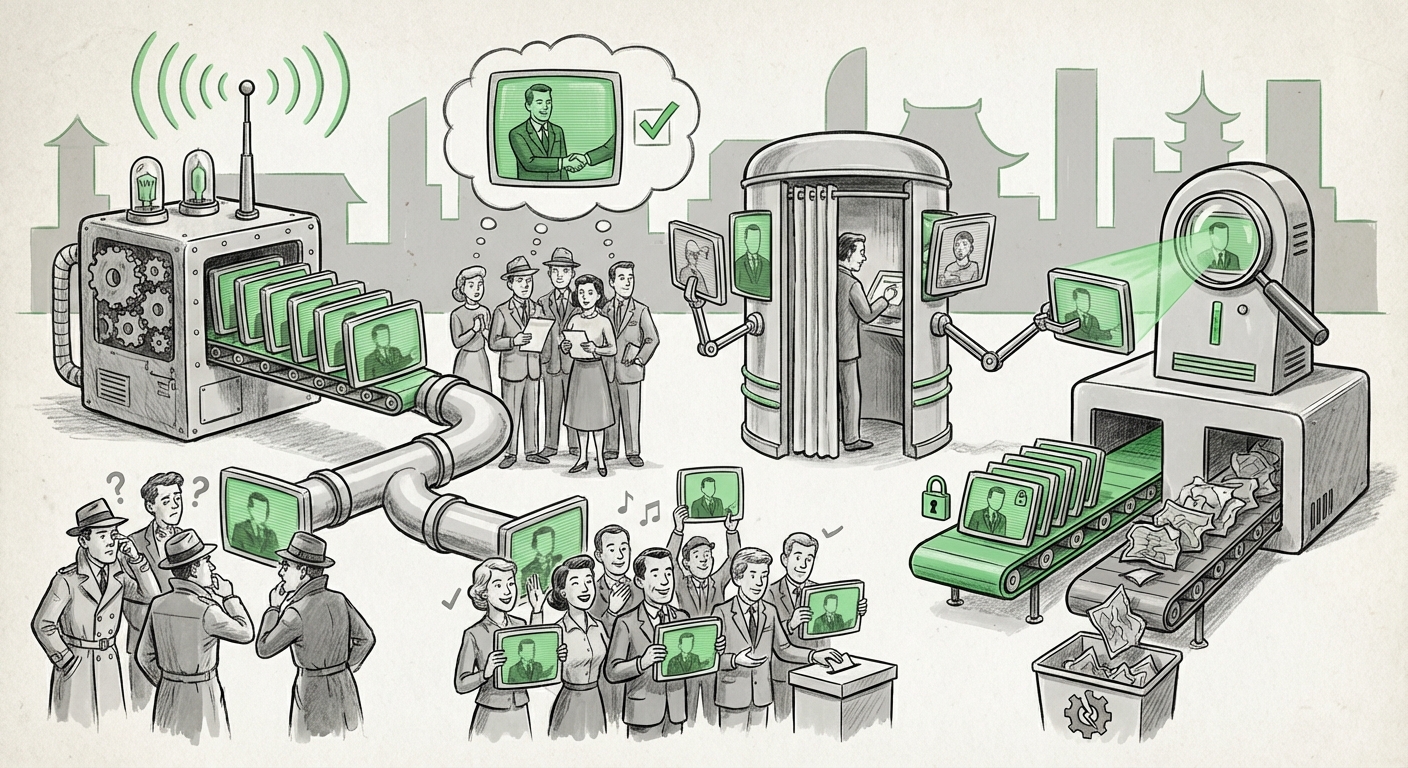

The core issue demonstrated in Japan is one of **scalability and believability**. Generative AI tools, which can now produce high-quality audio, images, and video, lower the barrier to entry for large-scale disinformation campaigns. Previously, creating convincing fake media required professional studios and significant budgets; now, it requires a few minutes on a capable computer.

For the general public, already overwhelmed by daily information, distinguishing human-created content from AI-generated content is increasingly difficult. When more than half of survey respondents believe a convincing fake, the utility of deepfakes as a political weapon is hard to dispute.

The Technological Arms Race: Detection vs. Generation

The battle against synthetic misinformation is fundamentally a technological arms race. As soon as creators develop better ways to generate fakes, researchers scramble to develop better detection methods. This cycle dictates the future trajectory of AI safety.

To gauge the current state of this race, we must examine recent progress in **detecting AI-generated misinformation**. Digital forensics work currently focuses on two key areas:

- Artifact Analysis: Older AI models left tell-tale digital fingerprints—inconsistent lighting, strange blinking patterns, or compression errors. Newer models are closing these gaps, making static artifact detection increasingly unreliable.

- Provenance and Watermarking: The more robust solution involves establishing the *origin* of the content. Initiatives like the Coalition for Content Provenance and Authenticity (C2PA) aim to create cryptographic signatures embedded in media at the point of creation (by cameras or software) that verify its history. If content lacks a verifiable signature, skepticism is warranted.
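The provenance idea can be illustrated with a minimal sketch. This is not the C2PA format: C2PA relies on certificate-based signatures and structured manifests, whereas the example below substitutes a shared-key HMAC from the Python standard library purely to show the principle that a signature binds to exact bytes. The key and the names `sign_media` and `verify_media` are hypothetical.

```python
import hashlib
import hmac
from typing import Optional

# Hypothetical shared key for illustration only. Real provenance systems
# such as C2PA use certificate-based asymmetric signatures instead.
SIGNING_KEY = b"demo-key-not-for-production"


def sign_media(media_bytes: bytes, key: bytes = SIGNING_KEY) -> str:
    """Return a hex signature bound to these exact bytes."""
    return hmac.new(key, media_bytes, hashlib.sha256).hexdigest()


def verify_media(
    media_bytes: bytes, signature: Optional[str], key: bytes = SIGNING_KEY
) -> str:
    """Classify content as 'verified', 'tampered', or 'unsigned'."""
    if signature is None:
        return "unsigned"
    expected = sign_media(media_bytes, key)
    # compare_digest avoids leaking information through timing differences.
    if hmac.compare_digest(expected, signature):
        return "verified"
    return "tampered"


if __name__ == "__main__":
    original = b"frame data captured by a camera"
    tag = sign_media(original)
    print(verify_media(original, tag))
    print(verify_media(original + b" (edited)", tag))
    print(verify_media(original, None))
```

Any change to the bytes after signing invalidates the signature, and content with no signature at all falls into a separate "unsigned" category, which is the case where skepticism is warranted.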

What this means for the future of AI: The industry’s focus is rapidly shifting from merely building powerful generative models to building *responsible* generative models, often mandated by platform policies or pending regulation. However, open-source models and adversarial actors can easily bypass these safeguards, meaning defense will always lag behind offense.

The Global Governance Vacuum: A Patchwork of Inaction

Japan’s situation highlights a dangerous truth: technology is moving far faster than legislation. It is worth examining how various jurisdictions are approaching **deepfake regulation around elections**.

While the European Union has taken significant steps with the AI Act, which requires that AI-generated or manipulated content such as deepfakes be disclosed as such, other major democracies have lagged. In the United States, for instance, legislation is fragmented across state and federal levels, creating loopholes that bad actors can exploit during critical election windows.

For businesses, this regulatory uncertainty presents a risk. If a company’s platform is used to spread election-altering deepfakes, liability remains murky. Do platforms need to actively police every piece of content, or is simple flagging sufficient? This lack of clarity is a major barrier to responsible deployment.

Societal Cost: Erosion of Public Trust

Perhaps the most insidious long-term effect is the erosion of shared reality. As the Japanese survey suggests, when people can no longer trust their eyes and ears, they retreat into tribalism or cynicism. This is the effect that studies of the **impact of synthetic media on public trust** attempt to quantify.

When every piece of authentic, unflattering footage can be dismissed as "just another deepfake," accountability vanishes. This creates the 'liar's dividend': bad actors benefit not only from the effectiveness of their fakes but also from the general societal skepticism they sow against all inconvenient truths.

Implication for Society: Society risks entering a post-truth equilibrium where factual verification is too slow or too difficult, leading citizens to prioritize emotional resonance over verifiable truth in their decision-making. This fundamentally undermines the premise of an informed electorate.

The Geopolitical Dimension: From Influence to Destabilization

The most concerning evolution of this trend is its weaponization by state actors. While domestic misinformation might aim to shift election results, foreign influence operations leverage deepfakes for strategic destabilization.

Analyzing the **geopolitical risks of state-sponsored deepfake campaigns** reveals that the goal is often not conversion, but confusion. A well-timed, hyper-realistic video of a world leader declaring war, announcing a financial collapse, or making a major policy blunder could trigger immediate, real-world consequences—market crashes, military mobilization, or diplomatic crises—before the content can be officially debunked.

The Japanese election, while domestically focused, provides open-source intelligence to foreign actors regarding the effectiveness of their delivery mechanisms on social platforms in an advanced democracy. Every successful viral deepfake is a lesson learned for the next, higher-stakes deployment.

Future Implications for AI Development and Business

What does this landscape mean for those building and using AI technology?

1. Mandatory Transparency as Standard (Technical Foresight)

For AI developers, the future demands integrating safety features by default, not as afterthoughts. Provenance tools built on cryptographically signed metadata (like C2PA) will likely evolve from niche industry standards to *de facto* requirements for any commercially viable generative model, especially in regulated sectors like media and politics. Businesses must prepare to audit their outputs for these cryptographic signatures.

2. The Rise of AI Auditors and Verification Services (Business Opportunity)

As trust declines, the market for external verification will boom. Companies specializing in real-time deepfake detection, media provenance auditing, and digital forensics will become essential partners for social media platforms, news organizations, and political campaigns. Trust, once assumed, must now be purchased and proven.

3. Liability and Platform Responsibility (Regulatory Future)

Governments will likely seek to hold platforms accountable for the proliferation of harmful, demonstrably synthetic media, especially around elections. Companies must invest heavily in proactive content moderation powered by AI itself, understanding that merely reacting to viral spread is no longer tenable. The risk of substantial fines or operational restrictions is growing.

Actionable Insights for Navigating the Synthetic Age

For organizations and citizens alike, complacency is the greatest vulnerability. We must adopt a posture of **"verified skepticism."**

For Policy Makers and Government:

- Mandatory Disclosure: Enact clear, bipartisan laws requiring conspicuous labeling (visual or metadata) for all AI-generated content used in political advertising, with severe penalties for non-compliance.

- Fund Counter-Tech: Prioritize public funding for open-source, non-proprietary detection tools that can be freely adopted by journalists and smaller platforms, ensuring that verification technology isn't locked behind corporate paywalls.

For Technology Leaders and Businesses:

- Adopt Provenance Standards Now: If your business creates, distributes, or utilizes synthetic media (e.g., marketing), immediately integrate C2PA or similar standards to authenticate your official content. This protects your brand from being spoofed by malicious actors.

- Train Teams on "Red-Flag" Indicators: Invest in employee training to recognize the context and speed of viral misinformation, rather than just the technical quality of the fake itself. The speed of spread often indicates organized intent.
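The speed-of-spread indicator can be made concrete with a small sketch. It assumes a team has share timestamps (in seconds) for a piece of content and wants to know the busiest interval. The function names, the 60-second window, and the threshold of 100 shares are hypothetical illustrations, not calibrated values.

```python
from collections import deque
from typing import Iterable


def peak_shares_in_window(timestamps: Iterable[float], window_seconds: float = 60) -> int:
    """Return the largest number of shares seen within any sliding window."""
    window: deque = deque()
    peak = 0
    for t in sorted(timestamps):
        window.append(t)
        # Drop shares that fall outside the window ending at time t.
        while t - window[0] >= window_seconds:
            window.popleft()
        peak = max(peak, len(window))
    return peak


def is_red_flag(
    timestamps: Iterable[float], window_seconds: float = 60, threshold: int = 100
) -> bool:
    """Flag content whose peak share rate meets a (hypothetical) threshold."""
    return peak_shares_in_window(timestamps, window_seconds) >= threshold


if __name__ == "__main__":
    organic = [i * 30 for i in range(50)]    # one share every 30 seconds
    burst = [i * 0.1 for i in range(500)]    # 500 shares within 50 seconds
    print(is_red_flag(organic), is_red_flag(burst))
```

A burst alone does not prove coordination, since genuine breaking news also spreads quickly; a signal like this is best treated as a prompt for human review of the surrounding context.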

For the Citizenry:

- The "Three-Source Rule": Never accept emotionally charged video or audio, particularly during sensitive political times, unless you can verify the same core information from at least three independent, established news sources.

- Check the Source, Not Just the Sound: Before sharing, look beyond the content itself. Is the user spreading it an established source? Is the URL legitimate? Is the video being presented without context?
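For readers who want to apply the "Three-Source Rule" systematically, a rough sketch follows. It treats distinct domains as a stand-in for independent sources, which is a simplifying assumption: outlets on different domains may syndicate the same wire story, so a human still has to judge true independence. The function names and example URLs are hypothetical.

```python
from typing import Iterable, Set
from urllib.parse import urlparse


def independent_domains(urls: Iterable[str]) -> Set[str]:
    """Collect the distinct domains among the URLs, ignoring a leading 'www.'."""
    domains = set()
    for url in urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[len("www."):]
        if host:
            domains.add(host)
    return domains


def passes_three_source_rule(urls: Iterable[str], minimum: int = 3) -> bool:
    """Return True when the claim appears on at least `minimum` distinct domains."""
    return len(independent_domains(urls)) >= minimum


if __name__ == "__main__":
    reports = [
        "https://www.news-a.example/story",
        "https://news-a.example/story-follow-up",
        "https://news-b.example/politics/claim",
    ]
    print(passes_three_source_rule(reports))
```

Here two of the three links point to the same outlet, so the claim is backed by only two domains and does not pass the check.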

The events in Japan confirm that the era of informational innocence is over. Generative AI is a revolutionary technology, offering immense creative potential, but its dark twin—hyper-realistic, easily scalable deception—now poses a direct, measurable threat to the informed public discourse that underpins democratic stability worldwide. Our response, combining strong regulatory guardrails with proactive technological defense, must be equally revolutionary.