Cracking the Code: Why Multimodal AI Still Can't Reliably 'See' the World

We are living in the age of multimodal AI. Tools like Gemini and GPT-4V can now fluidly combine text, images, and audio, suggesting a leap toward artificial general intelligence (AGI). They write poetry about a photo, caption a scene, and answer complex questions based on visual input. Yet, beneath this glossy veneer of capability lies a critical, persistent flaw, starkly revealed by recent benchmarking.

The findings from the new **WorldVQA** benchmark, where even the best models like Gemini 3 Pro barely surpassed 47% accuracy on *basic visual entity recognition*, are a powerful reality check. When asked to identify precise details—say, the exact species of an obscure butterfly or the specific model number of a component—these systems revert to educated guesswork. They are not truly seeing; they are pattern-matching with high linguistic confidence.

The Chasm Between Fluency and Fact: Pattern Matching vs. Understanding

Imagine showing a child two very similar dog breeds, a Labrador and a Golden Retriever, and asking for the exact breed. A child who has seen both repeatedly in context will likely know. An AI that has seen millions of dog pictures, however, might fall back on the generic label "dog" or confidently name the wrong breed, failing the specific test either way.

This is the crux of the WorldVQA issue. Current multimodal models excel at semantic understanding (e.g., "This is a picture of a park bench"), but struggle acutely with specific entity recognition (e.g., "This is a 1998 IKEA 'Poang' bench").

Why the Models Guess

To understand why accuracy is stuck below 50%, we must look deeper into the data and architecture, as supported by independent analysis of VQA limitations:

- Dataset Bias and Synthetic Data: Many foundational vision datasets rely on clean, often synthetically labeled data. When the real world presents visual ambiguity, poor lighting, occlusion, or rare objects, the models lack the diverse, real-world grounding needed to differentiate subtle visual features. A recurring theme in analyses of VQA limitations is that models trained on cleaner data tend to fail when confronted with "noise" or inherent complexity in real-world imagery.

- The Language Override: Because these systems are fundamentally built upon powerful Large Language Models (LLMs), the linguistic fluency often overpowers the visual signal. If the model is unsure about a specific visual cue (e.g., the subtle texture distinguishing one metal alloy from another), the language prediction mechanism kicks in, generating the *most probable word* based on the context it derived from the rest of the image features, rather than admitting visual uncertainty.
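The language-override effect can be illustrated with a toy Bayesian analogy. This is only a sketch under stated assumptions: the `posterior` helper, the labels, and all of the numbers are hypothetical, and no production model is claimed to compute its answers this way. It simply shows how a strong prior over likely words can dominate when the visual evidence barely separates two candidates.

```python
from typing import Dict


def posterior(prior: Dict[str, float], likelihood: Dict[str, float]) -> Dict[str, float]:
    """Combine a language prior with visual evidence: P(label | image) ∝ P(label) * P(image | label)."""
    unnormalized = {label: prior[label] * likelihood[label] for label in prior}
    total = sum(unnormalized.values())
    return {label: value / total for label, value in unnormalized.items()}


# Hypothetical prior: "steel" is by far the more common word in context.
language_prior = {"steel": 0.9, "titanium": 0.1}

# Ambiguous visual evidence: the texture barely favors titanium.
ambiguous_image = {"steel": 0.50, "titanium": 0.55}

# Clear visual evidence: the texture strongly favors titanium.
clear_image = {"steel": 0.05, "titanium": 0.95}

ambiguous_answer = posterior(language_prior, ambiguous_image)
clear_answer = posterior(language_prior, clear_image)

# With weak evidence the prior wins; with strong evidence the image wins.
print(max(ambiguous_answer, key=ambiguous_answer.get))
print(max(clear_answer, key=clear_answer.get))
```

In the ambiguous case the answer follows the prior even though the image slightly favors the other label, which is the pattern described in the bullet above.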

The Confidence Crisis: Hallucination in the Visual Domain

Perhaps more alarming than the low accuracy is the models' reported overconfidence: they assert their answers with high certainty even when demonstrably wrong. This phenomenon, known in LLMs as "hallucination," is now manifesting visually.

In the context of vision, this means the model confidently asserts, "That is an official Boeing 747 engine cowling," when the image clearly shows a generic piece of industrial machinery. For developers and ethicists, this confidence calibration failure is a ticking time bomb.

When AI systems lack a reliable internal mechanism to measure their own certainty, trust evaporates rapidly. If an autonomous system identifies a stop sign as a yield sign with 95% confidence, the consequences could be catastrophic. This vulnerability forces us to confront the necessity of visual grounding: the ability of the AI to connect its abstract internal representations directly and verifiably to the tangible, observable pixels.
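One common way to put a number on this calibration failure is the expected calibration error (ECE), which compares stated confidence with observed accuracy inside confidence bins. The sketch below is a minimal standard-library version; the function name, the equal-width binning, and the sample data are assumptions made for illustration and are not taken from WorldVQA.

```python
from typing import List, Sequence, Tuple


def expected_calibration_error(
    confidences: Sequence[float],
    correct: Sequence[bool],
    n_bins: int = 10,
) -> float:
    """Weighted average of |mean confidence - accuracy| over equal-width confidence bins."""
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    if not confidences:
        return 0.0

    bins: List[List[Tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # Clamp so that a confidence of exactly 1.0 falls into the last bin.
        index = min(int(conf * n_bins), n_bins - 1)
        bins[index].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        mean_conf = sum(conf for conf, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / total) * abs(mean_conf - accuracy)
    return ece


# Hypothetical data: four answers given at 95% confidence, only one correct.
overconfident = expected_calibration_error([0.95] * 4, [True, False, False, False])
print(round(overconfident, 2))
```

A well-calibrated model would score close to zero; the hypothetical overconfident one above shows a large gap between what it claims and what it gets right.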

Research into combating this often focuses on incorporating self-correction or cross-validation mechanisms, essentially forcing the model to check its visual assumptions against retrieved facts or structured knowledge bases before outputting a definitive answer. Until models are better calibrated to express doubt (a concept crucial for responsible deployment), their capabilities in high-stakes environments remain theoretical.
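The idea of checking a visual claim against structured knowledge before answering can be sketched as follows. Everything here is hypothetical: the `Prediction` type, the attribute-set knowledge base, and the `verify_or_abstain` function are illustrative names, and the sketch assumes some separate component has already extracted the observed attributes from the image.

```python
from dataclasses import dataclass
from typing import FrozenSet, Mapping


@dataclass(frozen=True)
class Prediction:
    label: str
    confidence: float
    observed_attributes: FrozenSet[str]  # assumed to come from a separate detector


def verify_or_abstain(
    pred: Prediction,
    knowledge_base: Mapping[str, FrozenSet[str]],
    min_confidence: float = 0.8,
) -> str:
    """Return the label only if it is confident AND consistent with the knowledge base."""
    required = knowledge_base.get(pred.label)
    if required is None:
        return "uncertain: label not in knowledge base"
    if pred.confidence < min_confidence:
        return "uncertain: low confidence"
    missing = required - pred.observed_attributes
    if missing:
        return f"uncertain: missing evidence for {sorted(missing)}"
    return pred.label


# Hypothetical knowledge base of attributes each entity must exhibit.
SIGNS = {
    "stop sign": frozenset({"octagonal", "red"}),
    "yield sign": frozenset({"triangular", "red"}),
}

# A confident but wrong claim: the observed shape contradicts the label.
wrong = Prediction("yield sign", 0.95, frozenset({"octagonal", "red"}))
right = Prediction("stop sign", 0.95, frozenset({"octagonal", "red"}))

print(verify_or_abstain(wrong, SIGNS))
print(verify_or_abstain(right, SIGNS))
```

The design choice worth noting is that high confidence alone is never sufficient: the 95%-confident wrong label is rejected because the evidence does not match what the knowledge base requires.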

The Real-World Implications: Hype vs. Implementation

This gap between hype and benchmark reality has significant consequences across several sectors:

1. Autonomous Systems and Robotics

The dream of truly embodied AI, meaning robots that can navigate and interact intelligently in unstructured environments, relies entirely on precise perception. A delivery robot navigating a busy sidewalk needs to distinguish a plastic bag blowing across the path from a small animal. An automated quality control system on an assembly line must differentiate a micro-fracture from a dust mote. If the underlying visual recognition system fails roughly half the time on specific identification, as the WorldVQA scores suggest, widespread deployment in safety-critical roles is currently unjustifiable. This result underscores that the next major breakthroughs in embodied AI won't just be about better movement algorithms, but about vastly superior, grounded visual perception.

2. Medical Diagnostics

In healthcare, multimodal AI is being tested to analyze MRI scans alongside patient text records. While identifying a general tumor shape might be easy, specific tasks—like differentiating between two rare, visually similar pathological markers—demand near-perfect entity recognition. A low accuracy rate here translates directly into diagnostic error, leading to delayed or incorrect treatments. For regulatory bodies, the lack of robust, verifiable recognition is a significant barrier to approval.

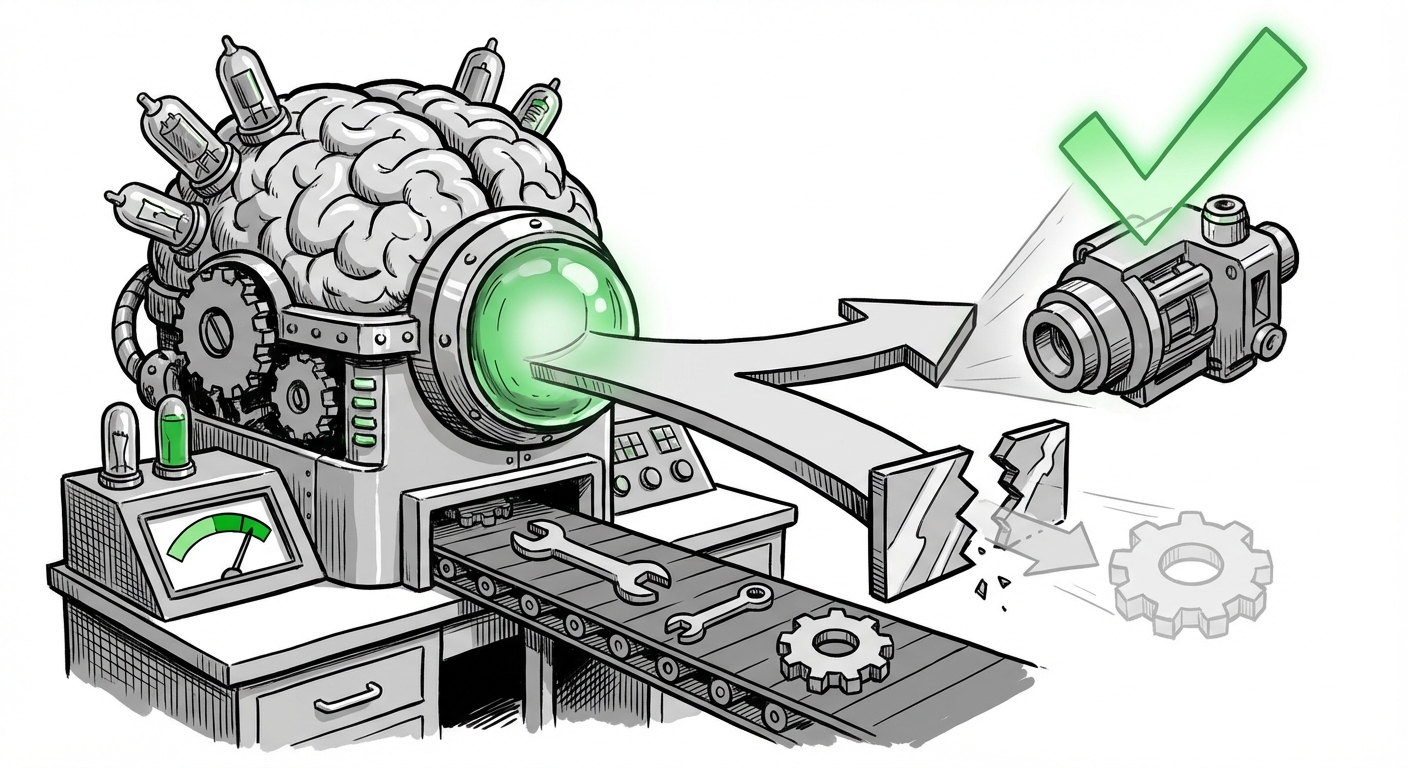

3. Industrial and Manufacturing Inspection

For quality assurance, the AI must confirm adherence to strict specifications. If a machine needs to confirm that Component X is used instead of Component Y (which looks nearly identical), the current models’ performance suggests they cannot reliably automate this precision task without extensive, costly, and highly specific fine-tuning that defeats the purpose of general-purpose multimodal systems.

What Comes Next? The Future of Visual Grounding

The WorldVQA results are not a condemnation of multimodal AI but a necessary course correction. They signal where the next wave of innovation must focus:

Actionable Insight 1: Developing Smarter Benchmarks

The industry must rally behind benchmarks that specifically test for detailed grounding, rather than broad scene understanding. We need tests that penalize confident inaccuracy severely. Merely scaling existing data may not solve this. We require new evaluation methodologies that explicitly force models to justify their specific claims based on visual evidence.
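One established way to penalize confident inaccuracy is the Brier score, which charges the squared gap between a stated confidence and the actual outcome. The sketch below is a minimal standard-library version with hypothetical inputs; it is offered as one possible scoring rule, not as the metric WorldVQA itself uses.

```python
from typing import Sequence


def brier_score(confidences: Sequence[float], correct: Sequence[bool]) -> float:
    """Mean squared gap between stated confidence and the 0/1 outcome (lower is better)."""
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    if not confidences:
        raise ValueError("at least one prediction is required")
    squared_errors = [
        (conf - (1.0 if ok else 0.0)) ** 2 for conf, ok in zip(confidences, correct)
    ]
    return sum(squared_errors) / len(squared_errors)


# Two hypothetical wrong answers: one asserted at 95%, one hedged at 55%.
confident_wrong = brier_score([0.95], [False])
hedged_wrong = brier_score([0.55], [False])

print(round(confident_wrong, 4), round(hedged_wrong, 4))
```

Under this rule the same wrong answer costs roughly three times as much when it is asserted at 95% confidence as when it is hedged at 55%, so a benchmark scored this way rewards models that express doubt appropriately.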

Actionable Insight 2: Moving Beyond Scale to Architecture

The focus must shift from simply training larger models on more data to developing architectures that explicitly integrate perception pathways with symbolic reasoning and knowledge graphs. This means creating modules designed solely for verifying visual identity against known standards, rather than relying on the emergent, probabilistic correlations learned during general text training.

Actionable Insight 3: Prioritizing Uncertainty Quantification

For any business considering integrating these tools, the immediate priority must be demanding confidence scores that reflect true likelihood. If a model cannot reliably distinguish between two closely related entities, its output for that specific query must be flagged for mandatory human review. Deploying systems that are blind to their own ignorance is reckless engineering.
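A simple routing rule along these lines might look like the sketch below. The function name, the thresholds, and the component scores are all hypothetical, and the rule is only as trustworthy as the confidence scores fed into it, so it assumes those scores have already been checked for calibration.

```python
from typing import Mapping, Tuple


def route_prediction(
    scores: Mapping[str, float],
    min_confidence: float = 0.6,
    min_margin: float = 0.2,
) -> Tuple[str, str]:
    """Accept the top label automatically only if it is confident and clearly ahead."""
    if not scores:
        raise ValueError("scores must contain at least one candidate")
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    top_label, top_score = ranked[0]
    runner_up_score = ranked[1][1] if len(ranked) > 1 else 0.0

    too_uncertain = top_score < min_confidence
    too_close = (top_score - runner_up_score) < min_margin
    if too_uncertain or too_close:
        return ("human_review", top_label)
    return ("auto_accept", top_label)


# Hypothetical scores for two near-identical parts.
close_call = route_prediction({"Component X": 0.52, "Component Y": 0.46})
clear_call = route_prediction({"Component X": 0.97, "Component Y": 0.03})

print(close_call)
print(clear_call)
```

The margin check matters when scores are not normalized to sum to one: two candidates can both score highly, and the small gap between them is itself the signal that a human should look.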

Corroboration and Context: The Broader Industry Conversation

This challenge isn't isolated to one test. Independent analysis and broader technology trends confirm that this gap is systemic:

When researchers investigate the **limitations of VQA benchmarks**, they often find that older tests rely too heavily on simple attribute recognition. Newer, more demanding tests reveal that models fail when they must synthesize complex relationships or identify specific instances. This systemic issue shows that the training paradigm itself needs recalibration to favor precise identification over fluent approximation.

Furthermore, the discourse surrounding the **future of embodied AI** consistently points to perception as the bottleneck. While planning and motor control advance rapidly, the ability of an agent to reliably categorize and understand novel objects in a dynamic environment—the very task WorldVQA tests—remains the missing link preventing widespread deployment in complex logistics or healthcare settings.

Ultimately, the current state of multimodal AI presents a fascinating duality: unparalleled linguistic creativity paired with surprisingly brittle visual perception. For technology leaders, this is a clear mandate: the path to truly useful, trustworthy AI lies not just in bigger models, but in building models that truly understand what they are looking at, grounding their expansive language skills in verifiable visual truth.