The AI Diagram Revolution: How PaperBanana Signals the End of Manual Scientific Visualization

The engine of scientific progress runs not just on brilliant ideas, but on clear communication. For decades, creating the polished, accurate diagrams that explain complex experiments—flowcharts, schematics, and methodologies—has been a necessary but tedious manual bottleneck in academic publishing. Researchers spend valuable time acting as graphic designers instead of focusing on discovery.

Enter PaperBanana, a collaborative system developed by researchers at Peking University and Google. This system is not just another text generator; it represents a critical leap in AI capability: the ability to translate dense, written technical instructions into high-quality, domain-specific scientific visualizations automatically. This development moves AI squarely from the realm of content assistance into artifact creation.

To fully grasp the magnitude of this shift, we must look beyond the single announcement and contextualize PaperBanana within the broader evolution of AI architecture, the future demands of scientific integrity, and the imminent reshaping of knowledge work.

The Architectural Leap: From Monoliths to Collaborative Agents

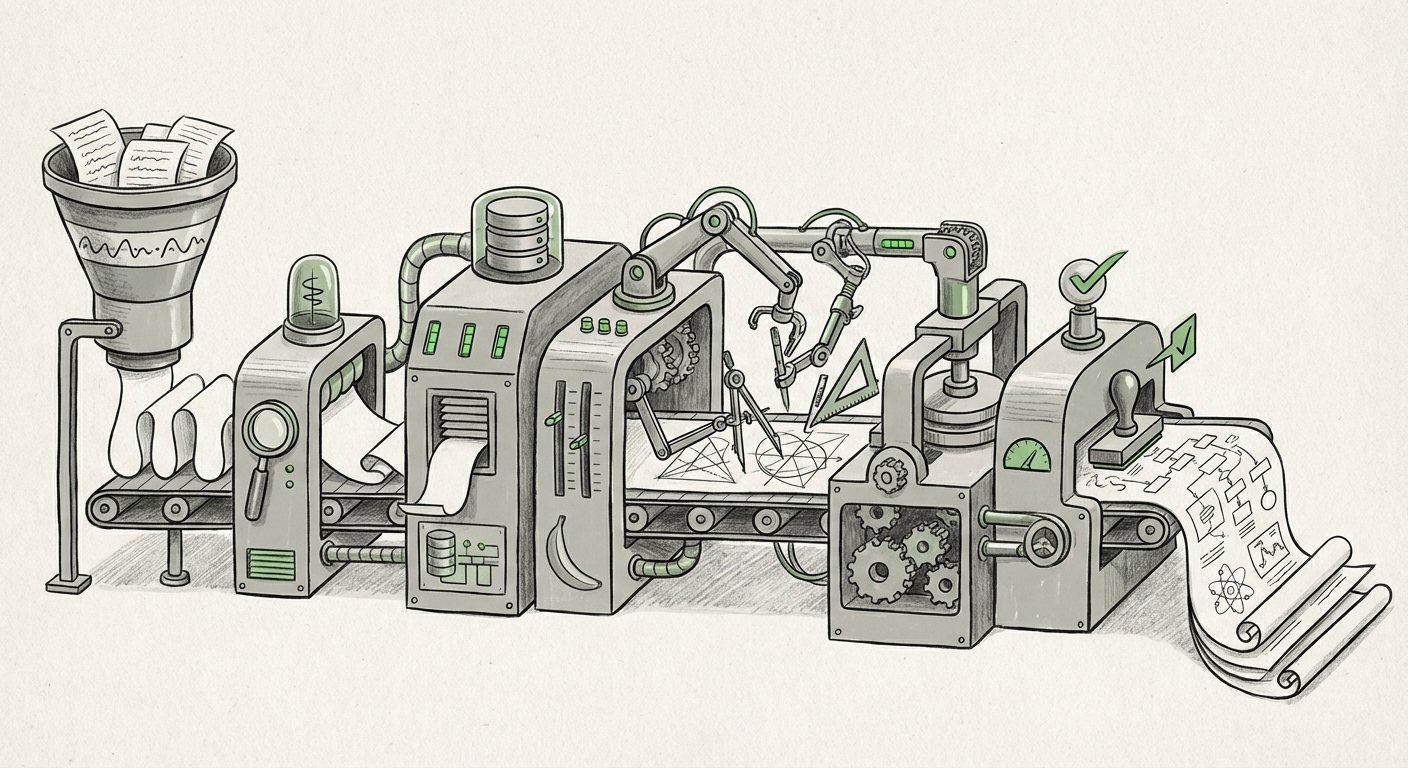

What makes PaperBanana technically revolutionary is its structure. It employs five specialized AI agents working in concert. This is a clear indicator that the industry is moving away from relying on single, monolithic Large Language Models (LLMs) to solve everything.

Think of it this way: if one AI were asked to build a house, it would struggle. But if you assign one agent to handle the foundation, another the framing, a third the plumbing, and a fourth the quality inspection—all coordinated by a project manager—the task becomes manageable and robust. This is the principle of Multi-Agent Systems (MAS).

PaperBanana’s agents likely handle distinct phases:

- Semantic Interpretation Agent: Reads the methodology text and breaks it down into core components.

- Reference Search Agent: Scours databases (internal or public) for existing visual examples or style guides.

- Composition Agent: Assembles the visual layout based on the interpretation and references.

- Refinement Agent: Adds necessary visual detail, labels, and dimensionality.

- Quality Control Agent: The final auditor, ensuring the diagram adheres to scientific accuracy and specified style guides.
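
The hand-off between these five roles can be made concrete with a small sketch. It is an illustration only, not PaperBanana's implementation: the `DiagramDraft` structure, the function names, and the trivial logic inside each stage are all hypothetical stand-ins for what would be model calls in a real system. What it shows is the orchestration pattern, with each agent reading and enriching a shared draft before passing it on.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class DiagramDraft:
    """Shared state passed from agent to agent (hypothetical structure)."""
    source_text: str
    components: List[str] = field(default_factory=list)
    references: List[str] = field(default_factory=list)
    layout: List[str] = field(default_factory=list)
    labels: Dict[str, str] = field(default_factory=dict)
    issues: List[str] = field(default_factory=list)


def interpret(draft: DiagramDraft) -> DiagramDraft:
    # Stand-in for semantic interpretation: one component per sentence.
    draft.components = [s.strip() for s in draft.source_text.split(".") if s.strip()]
    return draft


def search_references(draft: DiagramDraft) -> DiagramDraft:
    # Stand-in for reference search: a placeholder entry per component.
    draft.references = [f"style-example-for:{c}" for c in draft.components]
    return draft


def compose(draft: DiagramDraft) -> DiagramDraft:
    # Stand-in for composition: one box per component, in reading order.
    draft.layout = [f"box{i}" for i in range(len(draft.components))]
    return draft


def refine(draft: DiagramDraft) -> DiagramDraft:
    # Stand-in for refinement: attach a text label to each box.
    draft.labels = dict(zip(draft.layout, draft.components))
    return draft


def quality_control(draft: DiagramDraft) -> DiagramDraft:
    # Flag any component that did not end up as a label on some box.
    labelled = set(draft.labels.values())
    draft.issues = [f"unlabelled: {c}" for c in draft.components if c not in labelled]
    return draft


# The orchestration layer: an ordered list of agents (assumed to run sequentially).
PIPELINE: List[Callable[[DiagramDraft], DiagramDraft]] = [
    interpret, search_references, compose, refine, quality_control,
]


def run_pipeline(text: str) -> DiagramDraft:
    draft = DiagramDraft(source_text=text)
    for agent in PIPELINE:
        draft = agent(draft)
    return draft
```

Calling `run_pipeline("Collect samples. Train model. Evaluate results.")` yields a draft with three labelled boxes and an empty `issues` list. Keeping each agent as a plain function over shared state is one possible design choice; it makes individual stages easy to swap or test in isolation.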

What This Means for the Future of AI: This architectural pattern is a plausible blueprint for the next generation of AI tools. For AI architects and engineers, the focus shifts from building ever-larger foundation models to developing sophisticated orchestration layers that manage complex, sequential, and collaborative workflows. This promises AI systems that are more reliable, more specialized, and capable of handling multi-step tasks that require cross-domain reasoning, such as planning entire clinical trials or designing complex microchips.

Contextualizing the Trend: The Rise of Collaborative AI Agents

The trend toward breaking complex goals into specialized subtasks is visible well beyond diagram generation. Systems built on this principle, sometimes nicknamed "AI teams," suggest that AI may soon manage entire projects, not just individual tasks. This specialization allows for deeper expertise in each functional area, leading to higher-fidelity outputs, a necessity when dealing with precise scientific data.

Visualizing Knowledge: Automation Beyond Text Generation

For years, generative AI focused on mastering human language—summarizing, translating, and writing prose. PaperBanana targets a domain that requires spatial reasoning, adherence to geometric constraints, and specialized symbolic knowledge: scientific diagrams.

A research diagram isn't just a pretty picture; it is a truth statement about an experiment. Misplacing one valve in a reactor schematic or drawing an incorrect molecular bond renders the entire figure misleading. This high bar for accuracy is what separates PaperBanana from general image generators like Midjourney or DALL-E.

What This Means for Researchers: The immediate impact is radical efficiency. If generating methodology figures moves from days to seconds, research throughput accelerates dramatically. Researchers can iterate on experimental designs visually much faster, potentially speeding up the discovery pipeline across fields like chemistry, engineering, and biology.

Contextualizing the Trend: The Landscape of Automated Visualization

This effort builds upon earlier attempts to automate visualization. While tools exist to draw specific structures (like molecular models or standard graph plots), PaperBanana aims to automate the *synthesis* of entire experimental narratives into visuals. We see parallel efforts in fields that rely on specific schematic languages, such as the use of machine learning for automated synthesis-pathway visualization in chemistry. PaperBanana’s novelty is applying this rigor to general scientific methodology illustration.

The Integrity Crisis: AI, Peer Review, and the New Authorship

The most profound societal and professional implication of PaperBanana lies in the integrity of the scientific record. If a diagram detailing how an experiment was conducted can be generated by AI, who is responsible if that diagram is flawed, misleading, or—worse—fabricated?

The core of peer review relies heavily on trusting the authors’ description of their methods, which is usually accompanied by a diagram illustrating those methods. If the AI generates a plausible but inaccurate diagram, the human researcher might approve it without sufficient scrutiny, leading to the propagation of unreproducible science.

What This Means for Publishing and Society: Academic journals are already scrambling to update their policies on generative AI. The integration of tools like PaperBanana forces a fundamental reassessment of the peer review process. We may see:

- Mandatory Disclosure: Strict requirements for authors to declare exactly which parts of a manuscript (text, figures, data processing) were AI-generated.

- Verification Over Acceptance: Peer reviewers might pivot from simply accepting reported methods to actively testing the AI-generated figures for internal logical consistency and fidelity against the textual claims.

- Rise of AI Trust Scores: New metadata attached to papers indicating the level of automated versus human creation.
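
To make the disclosure and metadata ideas above less abstract, here is a minimal sketch of what a per-manuscript provenance record might look like. No publisher has standardized such a format as far as this article is aware, so every name here (`ArtifactProvenance`, `automation_share`, `disclosure_json`, and the field names) is hypothetical, and the "score" is nothing more than the fraction of artifacts declared as AI-generated.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class ArtifactProvenance:
    """One declared item in a manuscript (hypothetical schema)."""
    artifact_id: str           # e.g. "figure-1"
    kind: str                  # "text", "figure", or "data-processing"
    ai_generated: bool
    tool: str = ""             # name of the tool used, if any
    human_verified: bool = False


def automation_share(records: List[ArtifactProvenance]) -> float:
    """Fraction of declared artifacts that were AI-generated."""
    if not records:
        return 0.0
    return sum(r.ai_generated for r in records) / len(records)


def disclosure_json(records: List[ArtifactProvenance]) -> str:
    """Serialize the declaration so journals and tools could read it."""
    payload = {
        "automation_share": automation_share(records),
        # AI-generated items nobody has signed off on deserve reviewer attention.
        "unverified_ai_artifacts": [
            r.artifact_id for r in records if r.ai_generated and not r.human_verified
        ],
        "artifacts": [asdict(r) for r in records],
    }
    return json.dumps(payload, indent=2, sort_keys=True)
```

A manuscript with four declared artifacts, one of which is an AI-generated figure, would report an `automation_share` of 0.25. A single ratio is a crude measure; a real standard would likely need to weight artifacts by their importance to the paper's claims.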

Contextualizing the Trend: Governance in the AI Age

Major publishers like Elsevier and Springer Nature have already begun issuing guidelines acknowledging that AI cannot be an author, but can be used as a tool. Tools like PaperBanana push the boundary: if the AI performs the *creative synthesis* of the figure, does the human who prompted it truly deserve sole credit for that artifact? This challenges established norms of intellectual contribution.

The Future: Towards Fully Automated Scientific Synthesis

PaperBanana is a visual module in what could become a fully automated research reporting pipeline. If AI can handle the methodology visualization, what’s next? Data visualization, result interpretation summaries, and even the drafting of the discussion section are all susceptible to similar automation.

What This Means for Businesses and Investors: This signals a massive market opportunity in creating "AI Scientific Assistants." Companies that can build comprehensive platforms integrating LLMs with specialized visual, computational, and simulation agents will dominate R&D toolsets. For industries reliant on rapid iteration—pharmaceuticals, materials science, and advanced manufacturing—this technology promises competitive advantage through speed.

Contextualizing the Trend: LLMs Moving Beyond Text

The cutting edge of LLM research is precisely this integration: models that can read data, write code to analyze it, execute that code, and then synthesize the findings into multimedia reports. PaperBanana is tangible proof that the visual/symbolic domain is the next frontier after pure language mastery. It shows that models are learning the implicit rules of *how science is done*, not just how it is written about.

Actionable Insights: Navigating the Visual AI Shift

The deployment of sophisticated, multi-agent visual systems requires proactive strategy from all stakeholders:

- For Researchers and Labs: Begin pilot testing these tools immediately. The goal is not replacement, but augmentation. Learn how to write prompts that elicit scientifically precise diagrams. Treat the AI output as a sophisticated first draft requiring expert human sign-off.

- For Academic Publishers: Invest heavily in AI detection and verification tools tailored for visual analysis. Develop clear, machine-readable standards for metadata tagging that denote AI involvement in figure creation.

- For Technology Developers: Focus on creating robust feedback loops. The long-term success of systems like PaperBanana will likely depend on how well their quality control stage learns from human corrections. Future systems must excel at incorporating iterative, domain-specific feedback to maintain high accuracy.

- For Policy Makers: Initiate conversations now about the ownership and validation standards for AI-generated scientific evidence. Establishing these guardrails early is crucial to prevent long-term corruption of the scientific record.
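
The feedback loop recommended to technology developers above can be sketched in a few lines. This is a deliberately simple illustration, not a description of how PaperBanana works: the `CorrectionMemory` class and `review_round` function are hypothetical, and "learning" here just means remembering exact label corrections made by a human reviewer and applying them to later drafts before the next review.

```python
from typing import Callable, Dict, List


class CorrectionMemory:
    """Remembers human fixes to generated labels (hypothetical helper)."""

    def __init__(self) -> None:
        self._fixes: Dict[str, str] = {}

    def record(self, generated: str, corrected: str) -> None:
        # Only store cases where the reviewer actually changed something.
        if generated != corrected:
            self._fixes[generated] = corrected

    def apply(self, labels: List[str]) -> List[str]:
        # Replace any label that a reviewer has corrected before.
        return [self._fixes.get(label, label) for label in labels]


def review_round(
    labels: List[str],
    human_review: Callable[[List[str]], List[str]],
    memory: CorrectionMemory,
) -> List[str]:
    """Apply known fixes, ask the human to sign off, and learn from the edits."""
    proposed = memory.apply(labels)
    final = human_review(proposed)
    for generated, corrected in zip(proposed, final):
        memory.record(generated, corrected)
    return final
```

In use, a reviewer who fixes a misspelled label once should not have to fix it again on the next figure: the second call to `review_round` arrives with the correction already applied. The human sign-off stays in the loop on every round, which matches the "expert first draft" framing recommended for researchers.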

Conclusion: Redefining Scientific Labor

PaperBanana, developed by researchers at Peking University and Google, is far more than a neat trick; it is a bellwether. It points to the viability of complex, multi-agent systems designed for high-stakes, domain-specific output. By automating the visual articulation of research methods, it fundamentally challenges the traditional structure of scientific labor. We are rapidly moving toward a future where the speed of discovery is limited less by manual documentation and more by the creativity of the prompts we provide and the scrutiny we apply to the intelligent artifacts AI creates.