Why Simple Text Files Beat Complex AI Skill Systems for Coding Agents: A Revolution in Knowledge Grounding

In the rapidly evolving landscape of Artificial Intelligence, complexity is often mistaken for capability. We build intricate multi-agent frameworks, design complex tool-calling systems, and debate the merits of recursive reasoning loops. Yet, a recent revelation from Vercel—the company behind Next.js and a major player in developer tooling—has sent a clear, counterintuitive signal: sometimes, the simplest solution is the most powerful.

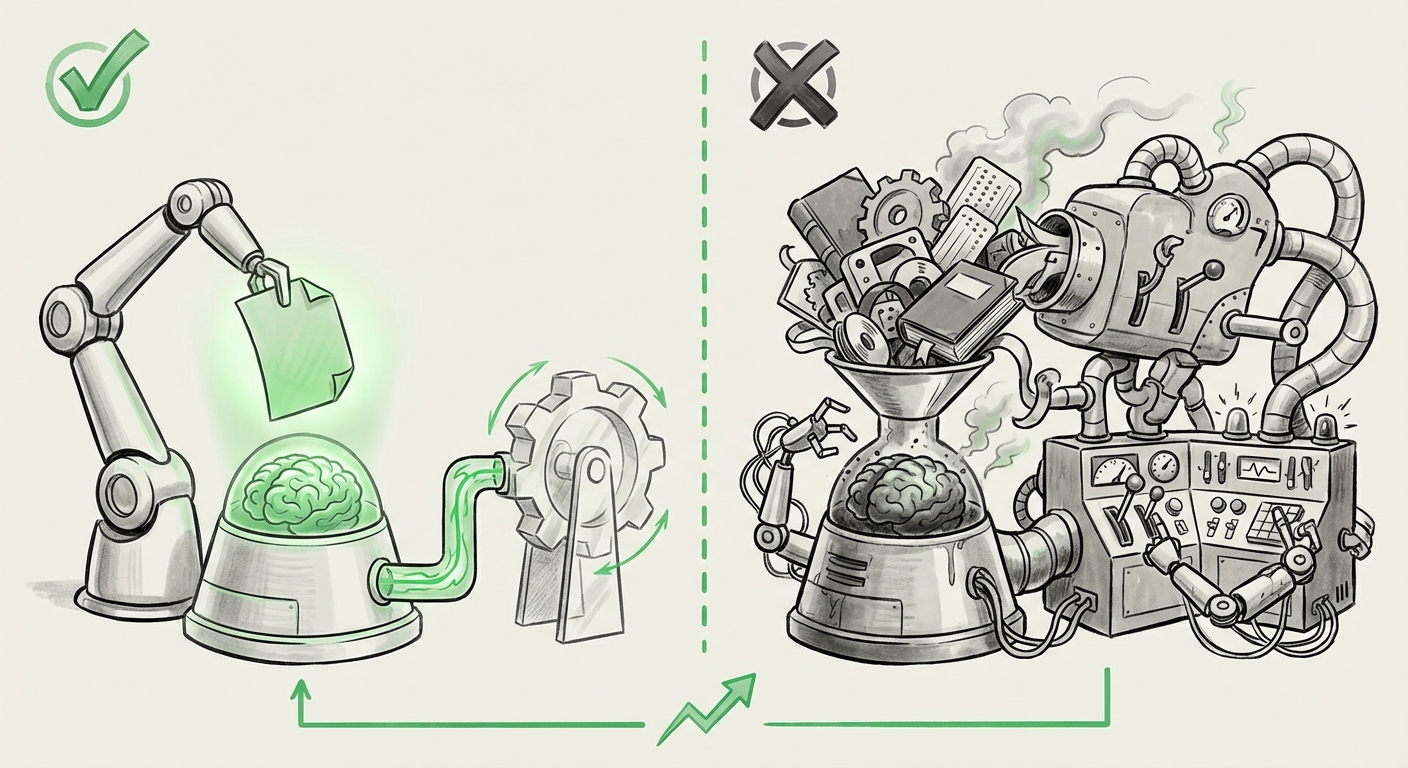

The finding: for giving AI coding agents up-to-date framework knowledge, a simple text file outperformed more sophisticated skill systems. This is more than a technical curiosity; it suggests where AI agent development should focus to achieve reliable, production-ready performance.

The Problem with Complexity in Agent Architecture

Imagine you are trying to teach a brilliant but literal student (the AI agent) how to fix a leaky faucet. You could give them an entire library of plumbing manuals organized into cross-referenced skill sets (Skill 1: Diagnosis, Skill 2: Wrench Operation, Skill 3: Gasket Replacement protocols). Or, you could hand them a single, laminated cheat sheet that says, "If the leak is at the handle, replace O-ring X."

The Vercel experiment suggests the LLM prefers the cheat sheet. Complex skill systems, while theoretically robust, introduce several hidden costs for the language model:

- Decision Overhead: The agent must first decide *which* skill system to use before executing the task. This arbitration layer adds latency and a chance for error.

- Context Contamination: In Retrieval-Augmented Generation (RAG), complex systems often inject too much retrieved data—both relevant and irrelevant—leading the LLM to get confused about which piece of information is the definitive source of truth.

- Brittleness: If a specific skill is slightly mislabeled or the context retrieval mechanism fails to pull the correct, narrow function, the entire chain breaks down.

The simple text file bypasses this overhead. It acts as a direct, undeniable source of current truth, injected right where the model is performing its reasoning. This points toward a crucial shift: the bottleneck is moving away from architectural elegance and towards context quality and directness.
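To make the idea of direct injection concrete, here is a minimal sketch in Python. It assumes the grounding knowledge lives in one plain-text file and that the whole file is small enough to sit in the prompt. The function name `build_prompt` and the delimiter strings are illustrative assumptions, not Vercel's actual setup.

```python
from pathlib import Path


def build_prompt(task: str, grounding_path: Path) -> str:
    """Prepend the full grounding file to the task, with no retrieval step.

    Hypothetical helper: the file is read as-is, so there is no skill
    selection or ranking that could pick the wrong source.
    """
    grounding = grounding_path.read_text(encoding="utf-8").strip()
    return (
        "Use the framework notes below as the source of truth.\n"
        "--- FRAMEWORK NOTES ---\n"
        f"{grounding}\n"
        "--- END NOTES ---\n\n"
        f"Task: {task}"
    )
```

Because nothing is selected or ranked, the only thing that can be wrong is the file itself, which is what makes this approach easy to debug.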

Corroborating the Trend: The Simplification Imperative

This discovery is not an isolated incident; it aligns with several emerging macro-trends across AI development, suggesting that for many tasks, we have hit the point of diminishing returns on architectural intricacy.

1. RAG Efficiency vs. Context Noise

The debate around Retrieval-Augmented Generation (RAG) often centers on how best to find the right chunks of information from vast databases. However, researchers are increasingly finding that retrieval systems often pull back too much data, diluting the passages that actually matter. A related effect is the "lost in the middle" phenomenon, in which models make poorer use of information placed in the middle of a very long context than of information near its beginning or end.

When Vercel used a simple text file, they were likely feeding the LLM only the *most relevant* snippets needed for a specific framework version—a highly curated, minimal context set. This strategy drastically reduces noise. If we look at ongoing discussions around RAG optimization, the focus is shifting toward better filtering and smaller, higher-precision retrieval sets, echoing the success of the simple file approach. For developers building production RAG systems, this is a direct warning: spend less time building complex indexing layers and more time perfecting the quality and brevity of the retrieved context blocks.
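As a rough illustration of "smaller, higher-precision retrieval sets," the sketch below filters retrieved snippets by a relevance threshold and a character budget. The `Snippet` type, the `select_context` name, and the default values of `min_score` and `max_chars` are assumptions made for this example; real scores would come from whatever retriever is in use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snippet:
    text: str
    score: float  # relevance score from any retriever; higher is better


def select_context(snippets, min_score=0.75, max_chars=2000):
    """Keep only high-scoring snippets, best first, within a size budget."""
    kept = []
    used = 0
    for snippet in sorted(snippets, key=lambda s: s.score, reverse=True):
        if snippet.score < min_score:
            break  # all remaining snippets score lower still
        if used + len(snippet.text) > max_chars:
            continue  # skip a snippet that would overflow the budget
        kept.append(snippet)
        used += len(snippet.text)
    return kept
```

The two knobs trade recall for precision: raising `min_score` or lowering `max_chars` pushes the context closer to the short, curated file described above.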

2. The ROI of Prompt Engineering Over System Engineering

Why invest significant engineering resources into building custom logic trees or complex tool-calling frameworks when superior performance can be achieved by mastering the input prompt itself? The Vercel result reinforces the growing sentiment that for many domain-specific tasks, maximizing the power of the foundational LLM via excellent input context (which can be delivered via a text file) is far more cost-effective and reliable than deep, task-specific fine-tuning or complex agent orchestration.

If an architecture requires constant tweaking of internal "skills" every time a framework updates, its maintenance cost skyrockets. In contrast, updating a single, small text file that records the latest framework API changes is fast and cheap. This suggests a greater return on investment (ROI) for efforts spent on clear, grounded prompt engineering over building rigid, custom execution environments.

3. Reimagining Developer Documentation for the AI Era

For the technical audience, this finding has immediate implications for how software is documented. Developers build SDKs and frameworks assuming human consumption—we create deep hierarchical documentation, complex API references, and extensive Markdown files.

However, AI agents thrive on flatness and clarity. If the best way to ground an agent is a simple text file, it signals that documentation producers must prioritize creating machine-readable, easily parsable summaries of critical, rapidly changing information. Think less about beautiful navigation menus and more about high-density, factual extracts of current syntax and version compatibility. This forces a re-evaluation of technical writing standards, pushing them toward optimized data delivery rather than pure human narrative.
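One way to picture such a "high-density, factual extract" is a generator that flattens structured release notes into one line per API. The sketch below is only an assumption about what that could look like: `render_flat_notes`, the entry keys (`api`, `since`, `note`), and the pipe-separated layout are all hypothetical choices, not an established format.

```python
def render_flat_notes(framework: str, version: str, entries: list[dict]) -> str:
    """Render API notes as a flat text file: one header line, one line per API.

    Each entry is a dict with the hypothetical keys 'api', 'since', 'note'.
    """
    lines = [f"{framework} {version} notes"]
    for entry in entries:
        lines.append(f"- {entry['api']} | since {entry['since']} | {entry['note']}")
    return "\n".join(lines) + "\n"
```

For example, calling it with a made-up framework and a single entry such as `{"api": "doThing()", "since": "2.0", "note": "replaces oldThing()"}` produces a two-line file with no hierarchy or navigation for an agent to traverse.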

4. Adhering to Occam's Razor in Agent Design

In the world of multi-agent systems, one goal has often been to mimic human teams, creating specialized sub-agents for planning, execution, and validation. The result is an architecture biased toward complexity. Every added layer of abstraction, every new "skill," adds potential points of failure and ambiguity for the core LLM.

When an agent is forced to select from 15 defined tools, it may choose the 14th tool because its name sounds vaguely relevant, even if the best solution was encoded simply in the direct context provided. By collapsing the skill system into direct context, we are leaning into Occam's Razor: the simplest explanation (or instruction set) that fits the facts is preferable. For researchers, this validates approaches that prioritize large, high-quality context inputs over intricate, brittle agent coordination logic.

Future Implications: What This Means for AI Deployment

This move toward simplicity is not a retreat from advanced AI; it’s an advancement in its *practical application*. It suggests that the most successful AI deployments in the near future will prioritize accurate grounding and low latency over abstract reasoning frameworks.

For Businesses: Shifting Focus from Architecture to Data Curation

Businesses relying on internal AI agents for tasks like customer support, code review, or proprietary data analysis need to adjust their investment strategy. The race is no longer about who has the most sophisticated agent framework; it's about who can curate and serve the most accurate, up-to-date knowledge base in the most digestible format.

- Actionable Insight: Dedicate more resources to data pipeline quality, version control for grounding documents, and creating "golden paths" of truth, rather than architecting complex meta-reasoning layers.

For Developers: Embracing Context-Centric Workflows

Developers building AI tools must shift their mindset from "tool creation" to "context orchestration." If an LLM fails, the first step should not be to rewrite the agent’s internal logic, but to examine the context that was fed in. Did the simple text file accurately reflect the current state of the software being used?

- Actionable Insight: Prioritize fast feedback loops on context accuracy. Use lightweight testing to ensure that core knowledge updates propagate instantly to the model's input stream without needing system rebuilds.
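A lightweight check of this kind could be as small as comparing a version line in the grounding file against the version the project actually uses. The sketch below assumes a convention in which the file contains a line of the form `version: X.Y.Z`; that convention and both function names are hypothetical.

```python
import re


def grounding_version(text: str):
    """Return the version declared on a 'version: X.Y.Z' line, or None."""
    match = re.search(r"^version:[ \t]*(\S+)[ \t]*$", text, flags=re.MULTILINE)
    return match.group(1) if match else None


def is_grounding_current(text: str, installed_version: str) -> bool:
    """True only when the grounding file declares the installed version."""
    return grounding_version(text) == installed_version
```

Run in CI or a pre-commit hook, a check like this flags a stale grounding file before the agent ever reads it, without rebuilding anything.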

For Society: Improving Trust and Reliability

Reliability is the gateway to mass adoption. Users lose trust when an agent seems smart but gives outdated answers. By rooting the agent's responses in verifiable, explicitly provided current context (the text file), the system becomes more transparent and debuggable. If the answer is wrong, you know exactly where to look: the source context.

This simplification democratizes the creation of useful AI. Tools that are easier to maintain, update, and debug will spread faster and foster greater confidence in their output, pushing AI further into critical business functions.

The New Grounding Paradigm

The Vercel finding serves as a powerful reminder that the most disruptive innovations often involve stripping away the unnecessary. In the current phase of AI development, where LLMs already possess massive general capabilities, the differentiator isn't about teaching them how to reason; it's about ensuring they are standing on solid, current ground.

Complex skill systems are the equivalent of building an elaborate filing cabinet for a librarian who has already memorized every book. What the librarian *actually* needs is a Post-it note reminding them of the one book that was released yesterday.

Functional, deployed AI agents, especially in volatile, rapidly changing domains like software development, will be defined by their commitment to this new grounding paradigm: Precision over Plurality, and Directness over Delegation.