Why Simple Text Files Outsmart Complex AI Skill Systems: The New Grounding Paradigm

The race to build truly capable AI coding agents has been defined by complexity. Engineers have poured resources into creating sophisticated architectures—elaborate state machines, deep decision trees, and finely tuned ‘skill systems’ designed to give an LLM access to complex information and actions. Yet, a recent discovery from Vercel, the company behind Next.js, suggests that in the quest for reliable code generation, the most advanced path forward might be the most unassuming one: a simple text file.

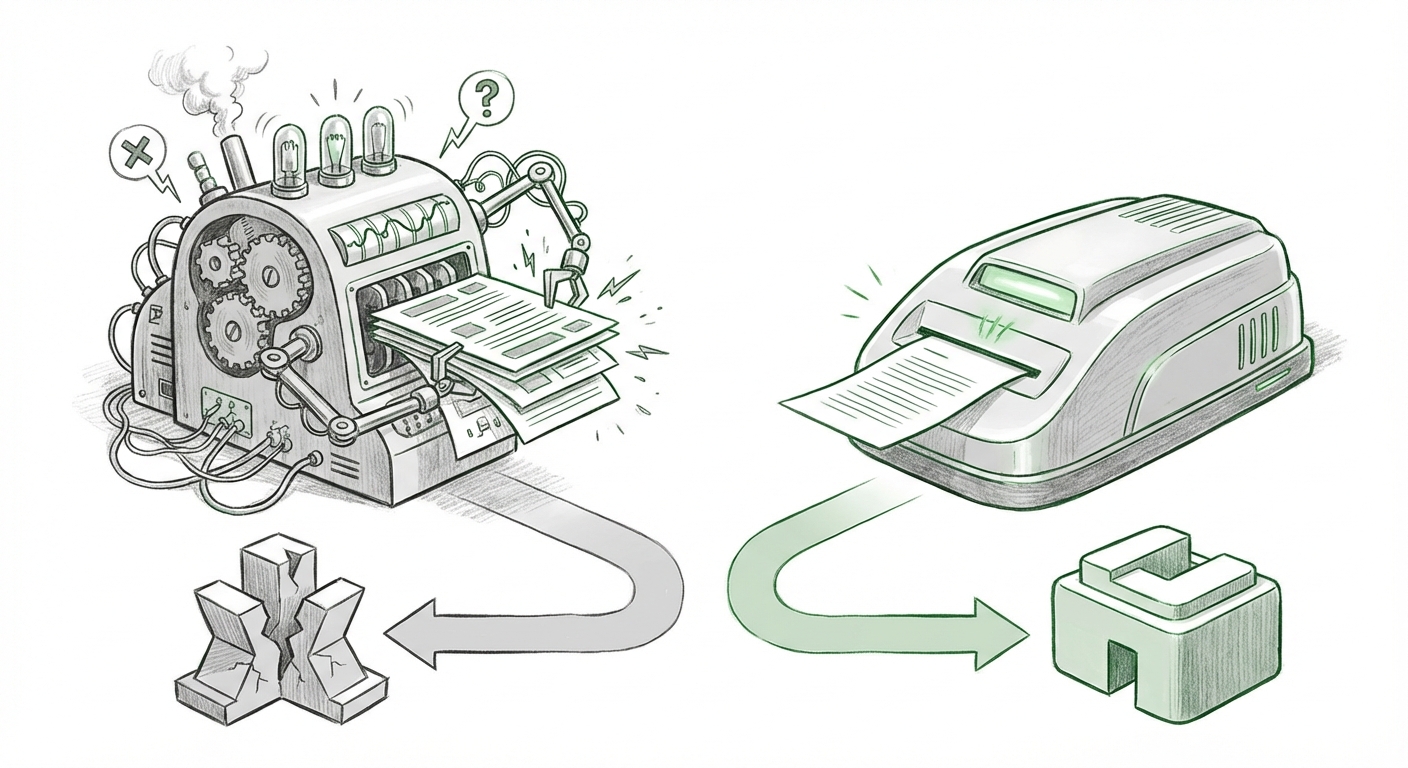

This finding is more than a quirky engineering footnote. A straightforward text file, likely a basic configuration or documentation snippet, vastly outperformed these complex, pre-programmed systems at grounding agents in framework knowledge. That is a strong signal about the future trajectory of applied AI, particularly in domain-specific tasks like software development. It favors context over convoluted code, suggesting we may have over-engineered the 'brain' of our agents while neglecting the quality of their local memory.

The Trap of Engineered Complexity in Agent Design

Imagine you hire a brilliant intern (the LLM). You can try to teach them by building them an incredibly detailed, multi-layered instruction manual covering every possible scenario (the skill system). Or, you can hand them the exact, current one-page cheat sheet they need right before they start the task (the simple text file). Vercel’s experiment suggests the latter works better.

Complex skill systems typically rely on rigid definitions, often modeled after explicit Function Calling protocols. These systems require the agent to recognize that it needs a skill, select the right one from a long catalog, and format the input perfectly. This complexity introduces several failure modes:

- Brittleness: If the framework changes slightly, the hard-coded skill might break.

- Selection Error: The agent might choose the wrong skill when several seem applicable.

- Overhead: Evaluating dozens of complex tools takes computational time and reasoning budget.
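To make the selection-error failure mode concrete, here is a toy skill router. It is a minimal sketch, assuming skills are chosen by simple keyword triggers; the skill names, triggers, and helper functions are hypothetical. Real agent frameworks usually let the model itself choose a skill, which is subtler, but it faces the same ambiguity.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    """A hypothetical skill: a name plus the keywords that should trigger it."""
    name: str
    triggers: frozenset[str]


# Hypothetical skill catalog; names and triggers are illustrative only.
SKILLS = [
    Skill("nextjs_routing", frozenset({"route", "page", "layout"})),
    Skill("nextjs_caching", frozenset({"cache", "revalidate", "fetch"})),
    Skill("nextjs_data_fetching", frozenset({"fetch", "data", "server"})),
]


def candidate_skills(task: str) -> list[str]:
    """Return every skill whose triggers overlap the words in the task."""
    words = set(task.lower().split())
    return [skill.name for skill in SKILLS if skill.triggers & words]


def route(task: str) -> str | None:
    """Pick exactly one skill, or None when the choice is missing or ambiguous."""
    matches = candidate_skills(task)
    # Zero matches: the knowledge the task needed is never loaded.
    # Several matches: the agent has to guess (the selection-error case).
    return matches[0] if len(matches) == 1 else None
```

A task such as "revalidate data after fetch" matches two skills, so the router cannot commit to either; an always-loaded context file never has to make that choice.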

Conversely, a simple text file acts as a lightweight form of Retrieval-Augmented Generation (RAG), or more precisely, direct context injection: the relevant knowledge sits in the agent's working memory (the context window) from the start, so the agent never has to decide whether to fetch it. This lets the LLM use its strong natural language abilities to *interpret* the context, rather than rigidly *execute* a predefined function.
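A minimal sketch of that kind of context injection follows, assuming a generic chat-style message list (role/content dictionaries, a shape many LLM APIs accept). The file name `project-context.md`, the prompt wording, and the helper names are hypothetical; the point is only that the file's contents accompany every request, so no skill-selection step exists.

```python
from __future__ import annotations

from pathlib import Path

# Hypothetical system instruction; the wording is illustrative.
SYSTEM_PROMPT = "You are a coding agent. Prefer the project context over memorized APIs."


def build_messages(context_text: str, task: str) -> list[dict[str, str]]:
    """Prepend the project context to every request; there is no skill to select."""
    system = f"{SYSTEM_PROMPT}\n\nProject context:\n{context_text.strip()}"
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": task},
    ]


def build_messages_from_file(path: str | Path, task: str) -> list[dict[str, str]]:
    """Read a (hypothetical) context file such as project-context.md and inject it whole."""
    return build_messages(Path(path).read_text(encoding="utf-8"), task)
```

Because the context is part of the system message, the model sees it whether or not it would have "thought" to ask for it.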

Corroboration: Why RAG Continues to Dominate Grounding

This Vercel result aligns with a common framing of knowledge grounding, which compares three main approaches: RAG, Function Calling/Tool Use, and Fine-Tuning. For rapidly evolving knowledge, like the ever-changing APIs of a popular framework, fine-tuning is too slow and static, and function calling is too rigid. RAG, even on a micro-scale like a single text file, offers the necessary immediacy and flexibility.

The result also reinforces a cautionary theme gaining traction across the AI community: the over-engineering trap. Many developers mistake architectural complexity for functional intelligence. In reality, adding more layers of orchestration often just adds more points of failure. Simplicity, in this context, means adaptability and lower latency.

The Supremacy of Local Context: Configuration as Truth

What exactly is this "simple text file"? Given that Vercel builds developer tooling, the file is most likely analogous to artifacts developers work with daily: a `vercel.json`, a project manifest, or a standardized README. This brings us to a further insight:

The Language of the Framework

Modern developer frameworks are already standardized around readable configuration and documentation formats (like YAML, JSON, or Markdown). These files are therefore written in structures that LLMs parse exceptionally well, especially when the goal is to extract operational constraints. LLMs are statistical pattern matchers trained on trillions of tokens of text, including countless configuration examples. They don't need a separate skill system to learn how to read a configuration file; they are already fluent in that "language."

The complex skill system, by contrast, forces the LLM to translate its internal thought process into an external, rigid format that might not perfectly match the natural way it processes information. The text file skips this expensive translation step.
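Because configuration is already structured text, turning it into agent context can be nearly trivial. The sketch below assumes a standard npm `package.json`; the `dependencies`, `devDependencies`, `engines`, and `scripts` fields are real npm fields, while the function name and the summary format are illustrative assumptions.

```python
import json


def summarize_package_json(raw: str) -> str:
    """Condense a package.json into short constraint lines for the context window."""
    pkg = json.loads(raw)
    lines = []
    deps = {**pkg.get("devDependencies", {}), **pkg.get("dependencies", {})}
    # State the framework version so the model does not fall back on older APIs.
    if "next" in deps:
        lines.append(f"Framework: next@{deps['next']}")
    if "node" in pkg.get("engines", {}):
        lines.append(f"Node engine: {pkg['engines']['node']}")
    # List scripts so the model uses the project's own commands.
    for name, command in sorted(pkg.get("scripts", {}).items()):
        lines.append(f"Script `{name}` runs: {command}")
    return "\n".join(lines)
```

No tool schema is involved: the output is plain text the model can read alongside the task.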

Future Implications: Shifting the Focus from Orchestration to Retrieval

This development forces a strategic pivot for anyone building AI tools, especially those targeting specialized developer workflows or internal enterprise knowledge bases.

1. The Next Frontier is Context Quality, Not Agent Logic

For AI product managers and CTOs, the message is clear: stop spending engineering cycles on building elaborate internal decision trees for agents. Instead, focus intensely on Context Engineering. This means:

- Indexing Strategy: How quickly and accurately can you identify the 100 tokens of information a user *actually* needs?

- Data Freshness: How easily can that knowledge source (the text file, the documentation chunk) be updated when the underlying reality (the framework version) changes?

- Granularity: Breaking down large manuals into small, highly specific context nuggets.
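The indexing and granularity concerns above can be sketched together: split documentation into small heading-level sections, then keep the sections that best match a query until a budget is spent. This is a deliberately simple lexical-overlap ranker, not embedding search, and word count stands in for a real token count; both are assumptions made to keep the sketch self-contained, and the function names are hypothetical.

```python
import re


def chunk_markdown(doc: str) -> list[str]:
    """Split a Markdown document into one chunk per heading section."""
    parts = re.split(r"(?m)^(?=#{1,6} )", doc)
    return [part.strip() for part in parts if part.strip()]


def _words(text: str) -> set[str]:
    """Lowercased alphanumeric words; a crude stand-in for real tokenization."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def score(chunk: str, query: str) -> int:
    """Count the distinct query words that appear in the chunk."""
    return len(_words(chunk) & _words(query))


def select_context(chunks: list[str], query: str, budget_words: int = 100) -> list[str]:
    """Greedily keep the best-matching chunks that fit inside the word budget."""
    ranked = sorted(chunks, key=lambda c: score(c, query), reverse=True)
    chosen: list[str] = []
    used = 0
    for chunk in ranked:
        size = len(chunk.split())  # word count approximates token count
        if score(chunk, query) > 0 and used + size <= budget_words:
            chosen.append(chunk)
            used += size
    return chosen
```

Smaller chunks make the budget go further: a query about caching pulls in only the caching section, not the whole manual.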

If an agent can reliably answer questions about the latest Next.js feature because it retrieved the relevant line from a newly updated configuration file, that agent is demonstrably more valuable than one rigidly programmed against last year's documentation structure.
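Keeping that configuration file's knowledge fresh does not require retraining; it only requires re-indexing when the source changes. A minimal sketch follows, assuming the knowledge source is a single text file and that "indexing" means re-chunking it. `ContextIndex` is a hypothetical name, and hashing the content (rather than trusting file modification times) is one reasonable design choice among several.

```python
from __future__ import annotations

import hashlib
from typing import Callable


class ContextIndex:
    """Rebuild derived context chunks only when the source text changes."""

    def __init__(self, chunker: Callable[[str], list[str]]):
        self._chunker = chunker
        self._digest: str | None = None
        self.chunks: list[str] = []
        self.rebuilds = 0

    def refresh(self, source_text: str) -> bool:
        """Re-chunk if the content hash differs; return True when a rebuild happened."""
        digest = hashlib.sha256(source_text.encode("utf-8")).hexdigest()
        if digest == self._digest:
            return False  # unchanged: keep serving the existing chunks
        self._digest = digest
        self.chunks = self._chunker(source_text)
        self.rebuilds += 1
        return True
```

Any chunking function can be passed in; calling `refresh` on every request is cheap because unchanged content costs only one hash.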

2. The Convergence of Tool Use and RAG

This finding points to a potential paradigm shift in agentic tool use, away from explicit definitions and toward implicit, context-driven actions: from the era of explicit Function Calling to an era of Dynamic Contextual Retrieval.

Looking ahead, highly specialized agents will likely retrieve the "how-to" directly, rather than being explicitly told "use this tool." If the context file contains an example code snippet, the agent can generate similar code directly, bypassing the need for a separate "Code Generator Tool."
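One way to lean on examples instead of a dedicated generator tool is to pull the code samples out of a Markdown context file and present them as patterns to imitate. The sketch below assumes the context file uses fenced code blocks with optional language tags; the function names and prompt wording are hypothetical.

```python
from __future__ import annotations

import re

# A fenced block: three backticks, an optional language tag, the body, then a closing fence.
FENCED_BLOCK = re.compile(r"`{3}([\w+-]*)\n(.*?)`{3}", re.DOTALL)


def extract_examples(markdown: str, lang: str | None = None) -> list[str]:
    """Return the bodies of fenced code blocks, optionally filtered by language tag."""
    return [
        body.rstrip("\n")
        for tag, body in FENCED_BLOCK.findall(markdown)
        if lang is None or tag == lang
    ]


def example_prompt(markdown: str, task: str, lang: str) -> str:
    """Ask the model to follow the project's own examples rather than call a tool."""
    examples = "\n\n".join(extract_examples(markdown, lang))
    return f"Follow the style of these project examples:\n\n{examples}\n\nTask: {task}"
```

The "tool" here is just text: updating an example in the context file changes what the agent imitates, with no schema to redeploy.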

3. Democratizing Agent Development

For developers outside of elite AI labs, this trend is empowering. Building robust agentic capability no longer requires deep expertise in complex prompt engineering or designing multi-step chains. It requires understanding the specific knowledge gaps of the target domain and ensuring that knowledge is present in an easily retrievable format. This lowers the barrier to entry for creating highly effective, domain-specific agents.

Actionable Insights for Application Developers

How can you apply the lesson of the simple text file today?

For Technical Teams:

- Audit Your Agent State Machines: If your agent relies on dozens of internal "skills" or complex nested logic to perform a common task, try replacing a substantial share of that logic with direct context injection (RAG) of the necessary procedure or configuration.

- Treat Config as Ground Truth: Prioritize essential project configuration (`package.json`, manifest files, and the names of required environment variables, never their secret values) in your RAG indexing pipeline. These files are the AI’s 'local environment'.

- Embrace Simplicity in Tool Definitions: If you must use explicit tools (like database access), keep their definitions lean and focused. Let the context window handle the nuance.
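The "config as ground truth" advice can be expressed as an ordering rule in the indexing pipeline. A minimal sketch, assuming files arrive as a path-to-text mapping: the priority table is an illustrative assumption, and `.env` files are reduced to variable names so that secret values never enter the index.

```python
from __future__ import annotations

from pathlib import PurePosixPath

# Illustrative ranking (an assumption): lower numbers are placed earlier in the context.
PRIORITY = {"package.json": 0, "vercel.json": 0, "README.md": 2}
ENV_RANK = 1
DEFAULT_RANK = 3


def is_env_file(path: str) -> bool:
    """Treat .env, .env.local, and similar files as environment files."""
    return PurePosixPath(path).name.startswith(".env")


def redact_env(text: str) -> str:
    """Keep only variable names from a .env file so secret values never reach the index."""
    names = []
    for raw in text.splitlines():
        line = raw.strip().removeprefix("export ")
        if line and not line.startswith("#") and "=" in line:
            names.append(line.split("=", 1)[0].strip())
    return "Environment variables defined: " + ", ".join(names)


def rank(path: str) -> tuple[int, str]:
    """Sort key: config files, then env variable names, then docs, then everything else."""
    if is_env_file(path):
        return (ENV_RANK, path)
    return (PRIORITY.get(PurePosixPath(path).name, DEFAULT_RANK), path)


def ordered_context(files: dict[str, str]) -> list[tuple[str, str]]:
    """Return (path, text) pairs in priority order, with .env contents redacted."""
    return [
        (path, redact_env(files[path]) if is_env_file(path) else files[path])
        for path in sorted(files, key=rank)
    ]
```

If the context budget runs out, it is source files rather than configuration that get dropped first.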

For Business Leaders:

- Demand Context Freshness: When evaluating AI vendors for specialized tasks (e.g., internal compliance checking or code review), ask specifically how they ensure their knowledge base is kept current without lengthy retraining cycles. Simple retrieval pipelines signal better maintainability.

- Invest in Documentation Structure: The quality of your documentation and internal knowledge repositories directly correlates with the performance of your applied AI solutions. Treat documentation updates as mission-critical AI infrastructure updates.

Vercel’s breakthrough is a powerful, elegantly simple lesson: AI agents, like human developers, perform best when they are given precisely the right information at the right time, unburdened by unnecessary layers of interpretation or structural overhead. In the high-stakes world of code generation, less structure often means more success. The future of agentic AI will be defined not by the complexity of the orchestration layer, but by the elegance and accessibility of the context provided within.