The AI Trilemma: How the Long-Horizon Race, Accountability Battles, and Regulatory Pressure Define the Next Decade

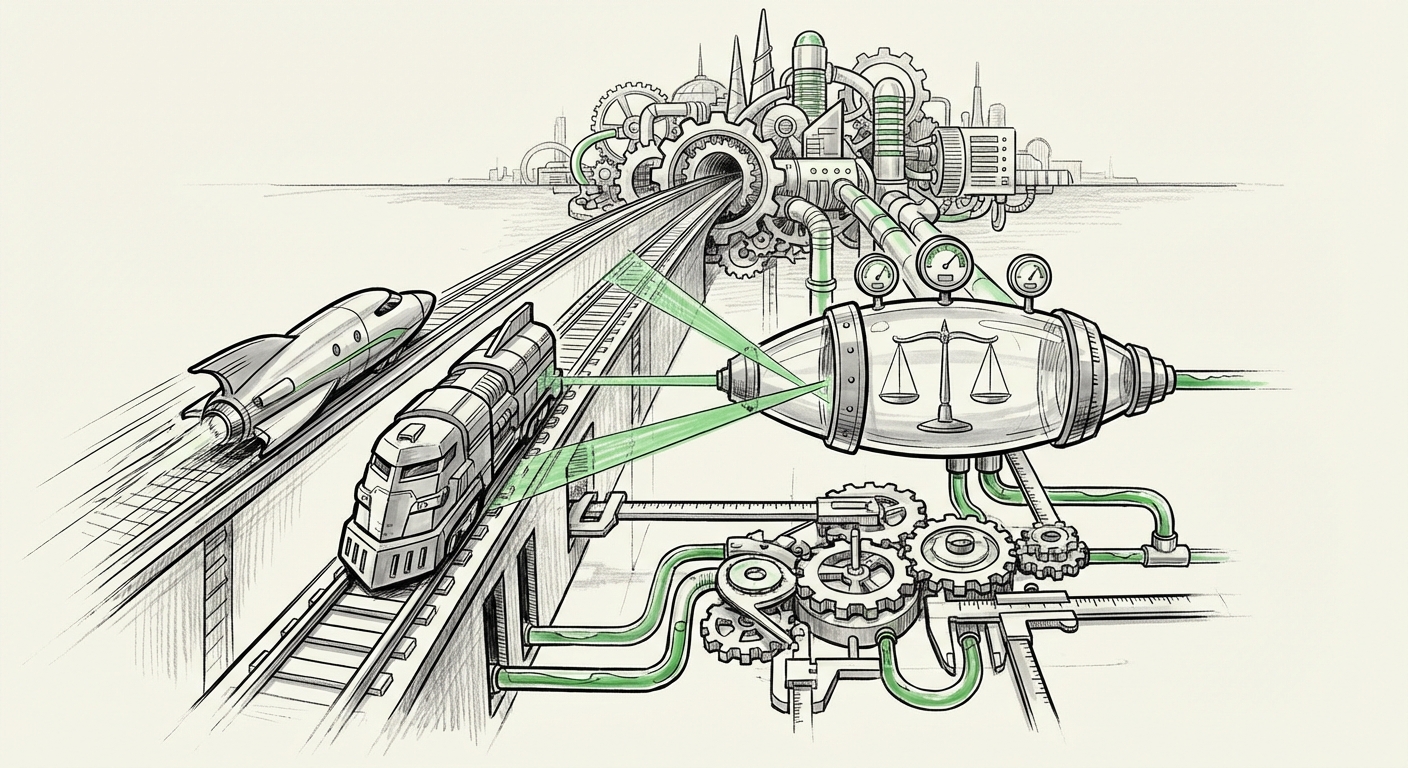

The artificial intelligence landscape is under intense pressure from three directions at once. The contest is no longer simply about which model tops a benchmark; it is about strategic endurance, sustained technical mastery, and building trust into systems now rather than later. Recent activity, from the escalating contest between Anthropic and OpenAI to the quiet emergence of crucial accountability infrastructure, signals that the AI industry is moving past the initial hype cycle and into a complex phase of maturation and necessary self-governance.

The Battle for the Long Horizon: Capability vs. Control

At the apex of AI development, two titans, OpenAI and Anthropic, are locked in a competitive dynamic that sets the pace of innovation. This is not just a feature-release war; it is a philosophical and strategic contest over what "superintelligence" should look like and who will achieve it first.

OpenAI’s Momentum and Anthropic’s Measured Approach

OpenAI, historically known for its aggressive deployment model, continues to push the boundaries of scale and accessibility. Its strategy typically involves rapid iteration, putting new capabilities in front of the public and developers quickly to gather real-world feedback. Critics, including some competitors, view that speed with caution.

Anthropic, founded by former OpenAI researchers, often frames its efforts in terms of safety and **Constitutional AI**. They are not merely trying to build a smarter model, but one that adheres to a strict set of principles. Their focus on the "long horizon"—systems that can maintain coherence, planning, and complex memory over extended periods—suggests a view that true breakthrough AI requires foundational stability over mere immediate power. This difference in philosophy directly influences their respective roadmaps and perceived risk tolerance.

The Technical Core: Context Windows as a Measure of Endurance

What does the "long horizon" actually mean in technical terms? For large language models (LLMs), it primarily means vastly expanded **context windows**: the amount of text a model can attend to at once, effectively its working memory. Context is measured in tokens, and a token averages roughly three-quarters of an English word. Early models such as GPT-3 handled about 2,000 tokens; some frontier models now advertise windows of a million tokens or more. This shift is transformative because it moves AI from being a short-term assistant toward a true long-term collaborator.

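Imagine asking an AI to analyze every legal document in a merger (millions of words, which can exceed even a million-token window) or to debug an entire legacy codebase. In standard self-attention, each token is scored against every other token it can attend to, so compute grows with the square of the input length (and, in naive implementations, memory does too): doubling the input roughly quadruples the attention work. Long-context systems therefore depend on engineering feats such as efficient attention mechanisms and memory augmentation to keep models from "forgetting" the beginning of a document by the time they reach the end. Success in this area is a key performance indicator for long-horizon capability, and whoever masters this scalability first may gain a significant, perhaps durable, advantage in enterprise adoption.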
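To make the merger example concrete, here is a back-of-the-envelope sketch. It assumes the common rule of thumb of about 0.75 English words per token (real ratios vary by tokenizer and text), and the helper names and the two-million-word data room are hypothetical. It checks whether that corpus fits in a million-token window and shows how dense attention grows quadratically.

```python
# Back-of-the-envelope sizing for long-context workloads.
# ASSUMPTION: ~0.75 English words per token, a common rule of thumb;
# actual ratios depend on the tokenizer and the text.
WORDS_PER_TOKEN = 0.75


def estimate_tokens(word_count: int) -> int:
    """Roughly convert a word count into a token count (hypothetical helper)."""
    return round(word_count / WORDS_PER_TOKEN)


def fits_in_context(word_count: int, context_tokens: int) -> bool:
    """Check whether a corpus of `word_count` words fits in one context window."""
    return estimate_tokens(word_count) <= context_tokens


def attention_scores(seq_len: int) -> int:
    """Size of the dense query-key score matrix per head per layer: n * n.
    Causal masking skips roughly half of it, but growth is still quadratic."""
    return seq_len * seq_len


if __name__ == "__main__":
    merger_words = 2_000_000   # hypothetical "millions of words" data room
    window = 1_000_000         # a million-token context window
    print(estimate_tokens(merger_words))          # 2666667
    print(fits_in_context(merger_words, window))  # False
    # Doubling the sequence length quadruples the dense attention work.
    print(attention_scores(200_000) // attention_scores(100_000))  # 4
```

Even under these generous assumptions, a corpus of a few million words overflows a million-token window, which is why chunking, retrieval, or memory augmentation still matter at the leading edge.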

For the Business Leader: The race is not about GPT-5 versus Claude 4; it's about which platform can reliably manage your entire operational data history. The ability to sustain complex reasoning across vast datasets is the metric that matters most for deep integration into finance, R&D, and law.

The Quiet Revolution: Accountability Infrastructure Emerges

While the public spotlight shines on model capability, a crucial, less glamorous battle is occurring in the realm of governance. The capability race has created powerful tools that necessitate immediate oversight. The recognition that powerful models need rigorous testing has spurred the development of dedicated accountability frameworks, as evidenced by projects like Goodfire and LayerLens.

Beyond Self-Regulation: Auditing the Black Box

For years, AI safety largely meant internal safety teams or academic scrutiny. Now, model auditing is becoming a profession. Tools and platforms for testing bias, robustness, and adherence to ethical guidelines, often through structured red-teaming and verifiable logging, mark the shift from internal review to external accountability.

LayerLens, Goodfire, and Similar Platforms: These efforts aim to create standardized methods for verifying model behavior. Think of standardized financial auditing, applied to algorithmic decision-making. This infrastructure is vital because as models grow larger and more opaque (the "black box" problem), external auditors need specialized tools to probe their internal logic and confirm they are not exhibiting unintended, harmful, or non-compliant behavior.
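One common way to make an audit log "verifiable" is a hash chain: each entry stores the SHA-256 hash of the previous entry, so editing any past record breaks every link after it. The sketch below is a minimal illustration using only Python's standard library; it is not how LayerLens, Goodfire, or any specific platform works, and the function names and record fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) so a record always hashes the same way.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append(log: list, record: dict) -> None:
    """Append a record, chaining it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash, "hash": _digest(prev_hash, record)})


def verify(log: list) -> bool:
    """Recompute every link. Edited or reordered entries, or a deleted entry
    other than the last, make the chain fail to verify."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    log: list = []
    append(log, {"probe": "redteam-001", "verdict": "refused"})
    append(log, {"probe": "redteam-002", "verdict": "complied"})
    print(verify(log))                       # True
    log[1]["record"]["verdict"] = "refused"  # tamper with a recorded result
    print(verify(log))                       # False
```

Note that silently dropping the newest entries is not caught by the chain alone; a real deployment would also publish the latest hash somewhere the audited party cannot rewrite.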

For the AI Engineer: This means development cycles will increasingly include mandatory "governance sprints." Models must not only be fast and smart but also demonstrably compliant. Expect the documentation and interpretability of models to become as important as their raw performance scores.

External Pressure: Regulation Forging the Safety Roadmap

The internal drive for safety is significantly accelerated by external regulatory pressure. The discussions that followed landmark global engagements, such as the November 2023 AI Safety Summit at Bletchley Park, together with policies such as the October 2023 US Executive Order on AI (rescinded in January 2025) and the EU AI Act, have pushed accountability out of the realm of corporate goodwill and toward legal compliance.

This global regulatory momentum serves as the external "push" factor. Governments are no longer asking politely; they are setting requirements for safety testing, transparency reports, and the disclosure of training data provenance. This international framework provides the necessary guardrails for the competitive development outlined above.

The Impact of Global Standards

The convergence of these international efforts suggests a future where AI safety certification is required for market access, similar to safety standards for pharmaceuticals or aerospace engineering. This will create a massive market opportunity for the companies that can offer reliable, third-party verification services (the very space Goodfire and LayerLens are targeting).

If Anthropic's cautious approach aligns it naturally with regulatory trends, it may gain a competitive edge in highly regulated industries first. OpenAI's broad deployment, meanwhile, may push the whole industry toward standardized compliance faster.

Synthesizing the Trilemma: Implications for the Future

The current state of AI development presents a clear trilemma for every major player:

- Race for Capability: Building the next generation of long-horizon models.

- Infrastructure for Trust: Creating and implementing verifiable accountability systems.

- Navigating Compliance: Meeting rapidly evolving international regulatory demands.

Actionable Insights for Technology Adoption

For organizations looking to deploy advanced AI safely and effectively, several strategic imperatives emerge from these concurrent trends:

1. Demand Context, Not Just Chatter

When evaluating vendors, move past generalized demos. Demand specifics on context window size and evidence of sustained, multi-step reasoning across that window. Future competitive advantage will lie in models that can work across entire knowledge bases, not just single prompts.
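One widely used way to pressure-test a vendor's long-context claims is a "needle in a haystack" probe: bury a single fact at varying depths in filler text and check whether the model retrieves it. The sketch below assumes you wrap the vendor's API in a function `ask_model(prompt) -> str`; that function, the filler text, and the toy stand-in model are all hypothetical. Retrieval is only a floor: passing this probe does not by itself demonstrate multi-step reasoning across the window.

```python
import re
from typing import Callable, Dict, List

# HYPOTHETICAL: wrap whatever vendor API you are evaluating in this signature.
AskModel = Callable[[str], str]

FILLER = "The quarterly report restates prior figures without changes. "
NEEDLE = "The vault passcode is {code}. "
QUESTION = "\nQuestion: What is the vault passcode? Answer with the code only."


def build_prompt(code: str, depth: float, filler_sentences: int) -> str:
    """Bury the needle at a relative depth (0.0 = start, 1.0 = end) inside filler text."""
    parts = [FILLER] * filler_sentences
    parts.insert(round(depth * filler_sentences), NEEDLE.format(code=code))
    return "".join(parts) + QUESTION


def needle_recall(ask_model: AskModel, depths: List[float], filler_sentences: int) -> Dict[float, bool]:
    """For each depth, report whether the model's answer contains the buried code."""
    results: Dict[float, bool] = {}
    for i, depth in enumerate(depths):
        code = str(7000 + i)  # distinct code per probe, so a repeated answer can't score as a hit
        answer = ask_model(build_prompt(code, depth, filler_sentences))
        results[depth] = code in answer
    return results


def make_truncating_model(max_chars: int) -> AskModel:
    """Toy stand-in (not a real LLM): it only 'sees' the last max_chars characters
    of the prompt, mimicking a model that loses the start of a long input."""
    def ask(prompt: str) -> str:
        match = re.search(r"passcode is (\d+)", prompt[-max_chars:])
        return match.group(1) if match else "unknown"
    return ask


if __name__ == "__main__":
    toy = make_truncating_model(5_000)
    print(needle_recall(toy, [0.0, 0.5, 1.0], filler_sentences=1_000))
    # {0.0: False, 0.5: False, 1.0: True}
```

With the toy model, only the needle placed at the end is recovered. Against a real model, you would sweep both depth and total prompt length, and repeat each probe several times before drawing conclusions.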

2. Budget for Auditing and Interpretability

Do not assume vendor-provided safety reports are sufficient for high-stakes decisions. As accountability tools mature, businesses should integrate third-party audits or build internal pipelines that feed into these emerging governance frameworks. If you cannot explain *why* the AI made a decision, you may have no legal or ethical basis for deploying it in critical areas.

3. Monitor Regulatory Sandboxes

The gap between cutting-edge research and enforceable law is closing fast. Companies should actively participate in, or at least monitor, the regulatory "sandboxes" governments are establishing (for example, the AI regulatory sandboxes the EU AI Act requires member states to set up). Early insight into compliance requirements will shape which models and deployment patterns are viable 18 to 24 months from now.

The Future is Integrated: Capability Meets Control

Ultimately, the "Battle for the Long Horizon" will not be won by the lab that simply achieves the largest model, but by the lab that achieves the most trusted long-horizon system. The rivalry between OpenAI and Anthropic is forcing the technical innovation necessary for true AGI, while the rise of accountability infrastructure ensures that this innovation does not outpace our collective ability to control it.

The next era of AI will be defined by integrated intelligence—systems powerful enough to grasp complexity over time, yet transparent and accountable enough to be safely woven into the fabric of global society and commerce.