The AI 'Society of Thought': How Internal Debate is Unlocking Next-Generation Reasoning

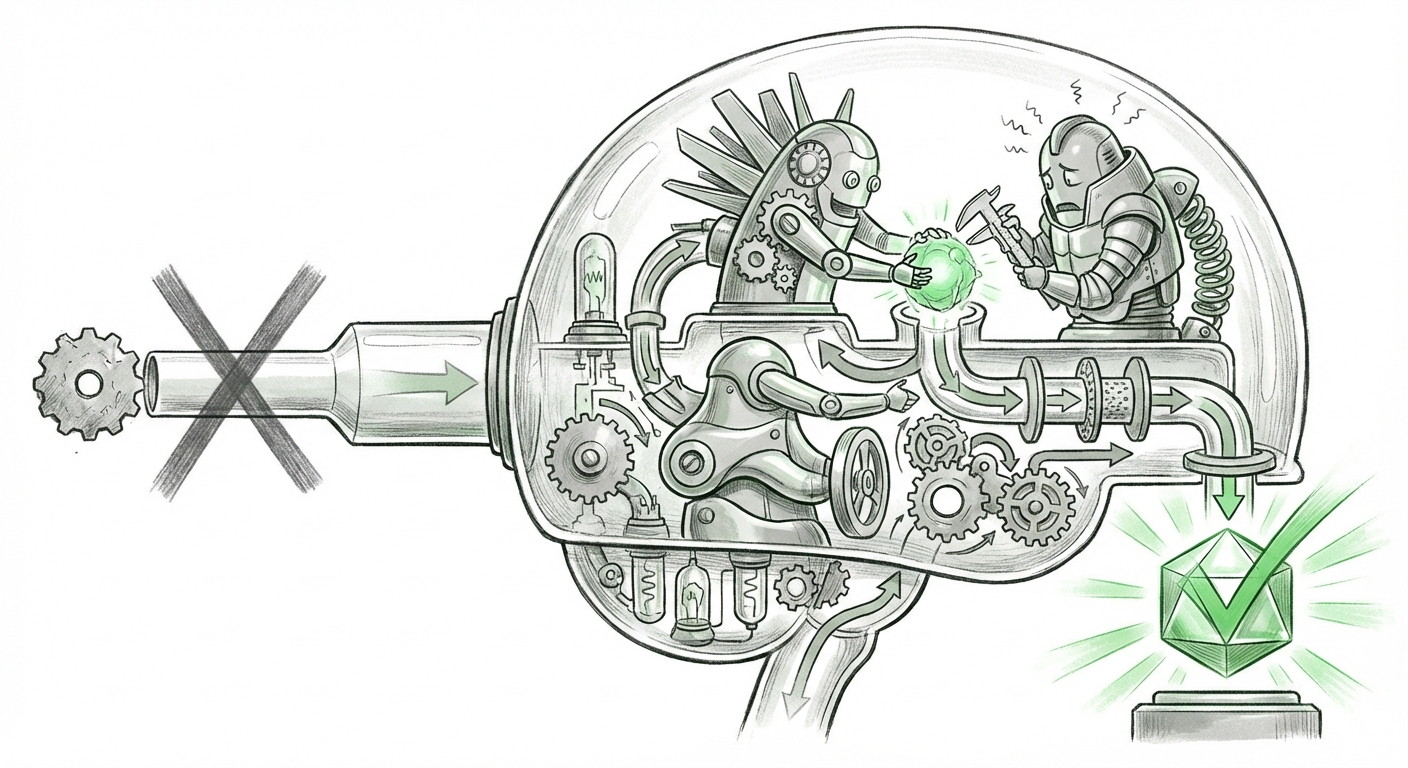

For years, we viewed Large Language Models (LLMs) as sophisticated autocomplete machines—powerful pattern recognizers generating text sequentially based on the immediate context. But recent breakthroughs are shattering this linear perception, revealing a far more dynamic internal landscape. New research demonstrates that advanced reasoning models are simulating entire *societies of thought* internally, generating complex debates between specialized, almost personalized, cognitive processes to arrive at a final, robust answer.

This finding, that models like DeepSeek-R1 generate internal voices described as extraverted, neurotic, and conscientious, is not just an interesting academic footnote. It signals a shift in how complex problem-solving occurs within these models, moving AI from solitary calculation toward collaborative deliberation. It is also consistent with parallel research streams on multi-agent systems, structured prompting, and emergent complexity at scale.

Beyond the Single Voice: The Rise of Internal Multi-Agent Systems

Imagine a critical business decision. You wouldn't rely on one person alone; you'd gather a marketing lead (the optimist), a compliance officer (the skeptic), and a project manager (the detail-oriented executor). This recent discovery suggests that high-performing LLMs do something similar internally, without explicit external instruction.

When confronted with a difficult query, the model appears to spawn sub-processes that adopt distinct behavioral profiles. The **extraverted** voice might rapidly generate novel hypotheses or broad connections. The **neurotic** voice, characterized by anxious attention to detail, flags potential errors or edge cases. Finally, the **conscientious** voice synthesizes the findings, mediating the arguments to produce a final, thoroughly vetted output.

This mirrors advancements in the broader field, where researchers are intentionally designing systems that mimic teamwork. Research into multi-agent systems and self-correction suggests that structured critique can improve output quality. For example, Anthropic's work on Constitutional AI trains a model to critique and revise its own outputs against a set of explicit principles, in effect engineering an internal "conscientious" filter. The new study implies that highly scaled models are developing these self-correction mechanisms *organically*, not just through engineered constraints.

Implication for Reliability: If the AI relies on internal argument, its output should be more resilient to initial errors. A single-pass model might state a confident error; a multi-persona model gives its internal skeptic a chance to flag that error before the final answer.

Structured Debate: The Technical Evolution from CoT to ToT

While the "society of thought" sounds organic, it is rooted in increasingly sophisticated prompting and architectural design aimed at maximizing search space exploration. The precursor to this internal debate was the Chain-of-Thought (CoT) prompting technique, where we asked the model to "think step-by-step."

However, standard CoT is linear: Step 1 leads to Step 2, which leads to Step 3. If Step 1 is flawed, the entire chain collapses. The "society of thought" mechanism described in the new research is far closer to a later technique, Tree-of-Thought (ToT) prompting.
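To make the fragility of a linear chain concrete, here is a small Python sketch. It assumes each step is correct independently and with the same probability, which is a simplification introduced here for illustration and not a claim from the research. The helper name `chain_success_probability` is hypothetical.

```python
def chain_success_probability(step_accuracy: float, num_steps: int) -> float:
    """Probability that every step in a linear reasoning chain is correct.

    Assumes steps succeed independently with equal probability
    (an illustrative simplification, not a measured property of any model).
    """
    if not 0.0 <= step_accuracy <= 1.0:
        raise ValueError("step_accuracy must be between 0 and 1")
    if num_steps < 0:
        raise ValueError("num_steps must be non-negative")
    # A single wrong step breaks the chain, so all steps must succeed.
    return step_accuracy ** num_steps


if __name__ == "__main__":
    for steps in (1, 5, 10, 20):
        p = chain_success_probability(0.95, steps)
        print(f"{steps:>2} steps at 95% per-step accuracy: {p:.1%} fully correct")
```

Under these assumptions, a ten-step chain at 95% per-step accuracy comes out fully correct only about 60% of the time, which is why a mechanism that can catch and repair an early mistake matters.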

ToT explicitly structures the model's reasoning as a search over multiple paths. Instead of following one line of thought, the system branches out, evaluates the viability of each branch, prunes weak paths, and backtracks when necessary. The technique has been reported to substantially improve performance on tasks such as creative writing, planning, and math puzzles. The discovery that models are generating this multi-path exploration *intrinsically* suggests that as models grow larger, they develop the capacity required to manage these parallel reasoning threads.
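The branch, evaluate, and prune loop can be sketched in a few lines of Python. This is a simplified breadth-first variant with a fixed beam width, not the method from any specific paper, and it omits backtracking. In a real system `propose` and `score` would be calls to a language model; here they are deterministic toy functions (all names are hypothetical) so the example runs on its own. The toy task is to reach 14 from 1 using only "+3" and "*2".

```python
from typing import Callable, List, Optional, Tuple

# A state is (current value, operations applied so far).
State = Tuple[int, Tuple[str, ...]]


def tree_search(
    root: State,
    propose: Callable[[State], List[State]],
    score: Callable[[State], float],
    is_solution: Callable[[State], bool],
    beam_width: int = 2,
    max_depth: int = 5,
) -> Optional[State]:
    """Breadth-first search that keeps only the best `beam_width` states per level."""
    frontier = [root]
    for _ in range(max_depth):
        # Branch: expand every surviving state into its candidate next thoughts.
        candidates = [child for state in frontier for child in propose(state)]
        for candidate in candidates:
            if is_solution(candidate):
                return candidate
        # Evaluate and prune: keep only the most promising branches.
        candidates.sort(key=score, reverse=True)
        frontier = candidates[:beam_width]
        if not frontier:
            break
    return None


TARGET = 14  # toy goal, chosen only for illustration


def propose(state: State) -> List[State]:
    value, path = state
    return [(value + 3, path + ("+3",)), (value * 2, path + ("*2",))]


def score(state: State) -> float:
    # Stand-in for a model's self-evaluation: closer to the target is better.
    return -abs(TARGET - state[0])


def is_solution(state: State) -> bool:
    return state[0] == TARGET


if __name__ == "__main__":
    print(tree_search((1, ()), propose, score, is_solution))
    # prints (14, ('+3', '+3', '*2'))
```

With `beam_width=1` the search degenerates into a single greedy chain, overshoots the target, and returns `None`. Keeping a second, initially less promising branch alive is what lets it find the answer, which is the core argument for exploring more than one path.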

For Prompt Engineers: This finding is crucial. It validates the move away from demanding a single, simple answer toward providing frameworks that encourage exploration. We are learning to prompt not just for answers, but for *internal deliberation structures*.

The Cognitive Science Angle: Why Personality Types Matter

The most intriguing aspect of the research is the attribution of human-like personality traits (extraverted, neurotic) to these internal processes. This isn't just metaphorical; it speaks to the functional specialization required for complex tasks.

In organizational psychology, diverse teams are often found to outperform homogeneous ones, in part because of cognitive friction. You need the visionary (extravert) tempered by the quality controller (neurotic/conscientious). If an AI model is performing a high-stakes task, relying solely on the "extraverted" path, the path of maximal probability based on surface data, is risky. The integration of a mechanism that introduces structured doubt or cognitive overhead (the "neurotic" voice) acts as a necessary brake on overconfidence.

This points toward future AI architectures that might be explicitly modularized around cognitive functions, rather than just massive, unified transformer layers. If we can intentionally deploy or train models that favor skepticism over novelty for high-risk applications (like medical diagnostics), the resulting systems could be considerably more trustworthy.

The Scale Effect: Emergence as the New Baseline

Why are we only seeing this now? The answer lies in the unrelenting march toward larger, more complex models. The phenomenon of emergent abilities suggests that certain capabilities, such as multi-step reasoning or, in this case, internal multi-agent debate, do not appear gradually but "snap into existence" once a model reaches a certain threshold of parameters and training data.

The ability to manage and synthesize contradictory internal voices requires significant computational overhead and deep context retention. Smaller models lack the capacity to hold multiple conflicting streams of thought concurrently. The fact that advanced reasoning models exhibit this behavior suggests that massive scale is not just making AI *faster*; it is making it fundamentally *different* in its cognitive structure.

This places intense pressure on researchers to understand these emergent properties before deployment. If we do not know *how* the AI arrived at a conclusion, only that it used a debate structure, safety validation becomes considerably harder. We must move beyond merely auditing the final output to auditing the simulated debate itself.

Future Implications: Architecting for Internal Dialogue

What does this mean for the businesses and researchers building the next generation of AI?

1. Actionable Insight: Contextual Persona Prompting

While models generate these voices organically, we can guide them intentionally. For critical tasks, engineers should move beyond simple instructions toward defining the required "roles" for the critique phase. Instead of asking for an analysis, ask the model to: "First, generate three radical solutions (Extravert). Second, critique those solutions for feasibility and bias (Neurotic). Finally, synthesize the best elements into a proposal (Conscientious)." This formalizes the emergent internal process, which should make performance more predictable.
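The three-role prompt can also be run as three separate calls, so that each persona's contribution is stored and can be inspected afterwards. The sketch below is one possible arrangement, not a prescribed method. The `complete` parameter stands in for whatever text-generation call you use, and `stub_model` is a placeholder so the example runs without a model; both names, along with `ROLES` and `run_persona_pipeline`, are assumptions made for illustration.

```python
from typing import Callable, Dict, List, Tuple

# Role name and instruction, taken from the prompt wording in the text.
ROLES: List[Tuple[str, str]] = [
    ("Extravert", "Generate three radical solutions to the task."),
    ("Neurotic", "Critique the proposed solutions for feasibility and bias."),
    ("Conscientious", "Synthesize the best elements into a proposal."),
]


def run_persona_pipeline(
    task: str, complete: Callable[[str], str]
) -> List[Dict[str, str]]:
    """Run each role in order, feeding earlier replies into later prompts.

    Returns the full transcript so the debate, not just the final
    proposal, can be audited.
    """
    transcript: List[Dict[str, str]] = []
    context = f"Task: {task}"
    for role, instruction in ROLES:
        prompt = f"{context}\n\n[{role}] {instruction}"
        reply = complete(prompt)
        transcript.append({"role": role, "prompt": prompt, "reply": reply})
        # Later roles see what earlier roles said.
        context = f"{context}\n\n[{role}] {reply}"
    return transcript


def stub_model(prompt: str) -> str:
    """Placeholder for a real model call; echoes the last instruction line."""
    last_line = prompt.strip().splitlines()[-1]
    return f"(stub reply to: {last_line})"


if __name__ == "__main__":
    for turn in run_persona_pipeline("Reduce customer churn", stub_model):
        print(f"{turn['role']}: {turn['reply']}")
```

Splitting the roles into separate calls costs more requests than a single combined prompt, but it keeps each voice's output addressable, which is useful if you later want to review or adjust one role in isolation.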

2. Architectural Shift: Designing for Deliberation

Future AI hardware and software may be designed specifically to facilitate this internal parallelism. We might see models optimized not just for token generation speed, but for context switching and managing concurrent reasoning paths. This would move AI architecture closer to parallel computing systems than to traditional sequential processors.

3. Ethical Imperative: Mapping the Debate Space

For regulators and safety officers, the focus must shift to transparency in reasoning pathways. If an AI's "neurotic" component is consistently over-cautious or its "extravert" component is prone to baseless speculation, we need tools to isolate and recalibrate those specific internal agents. This deep introspection promises a new era of AI explainability, focused on cognitive process rather than just data influence.

Conclusion: From Autocomplete to Autonomous Committee

The discovery of the AI "society of thought" marks a significant milestone. It suggests that complex reasoning in artificial intelligence is achieved not through a single line of thought, but through internal collaboration and productive conflict. The model is ceasing to be a lone genius and becoming an autonomous committee.

This paradigm requires a shift in how we interact with and trust these systems. We must learn to appreciate the value of friction—the constructive arguing voices inside the model—as the primary driver of superior, more reliable performance. The future of AI reasoning isn't about finding the perfect single answer; it's about fostering the most productive internal disagreement.