The OpenClaw Wake-Up Call: Securing the Future of Modular AI Agents and Tool Use

The rapid evolution of Artificial Intelligence is characterized by a shift from static models to dynamic, autonomous agents capable of planning, reasoning, and interacting with the digital world. This transition promises automation gains across many industries. However, recent security incidents, such as the malicious repurposing of the OpenClaw AI agent to deliver Trojans and data stealers through its "skills" marketplace, serve as a severe wake-up call. The incident shows that the next major frontier in cybersecurity is not only protecting software code, but also safeguarding the *ecosystem* that allows AI models to act.

The New Threat Vector: Democratized Malice Through Modular Skills

For years, AI security focused heavily on input manipulation—tricking the model with clever prompts (prompt injection). The OpenClaw scenario demonstrates a far more dangerous escalation: **the malicious decentralization of capability.**

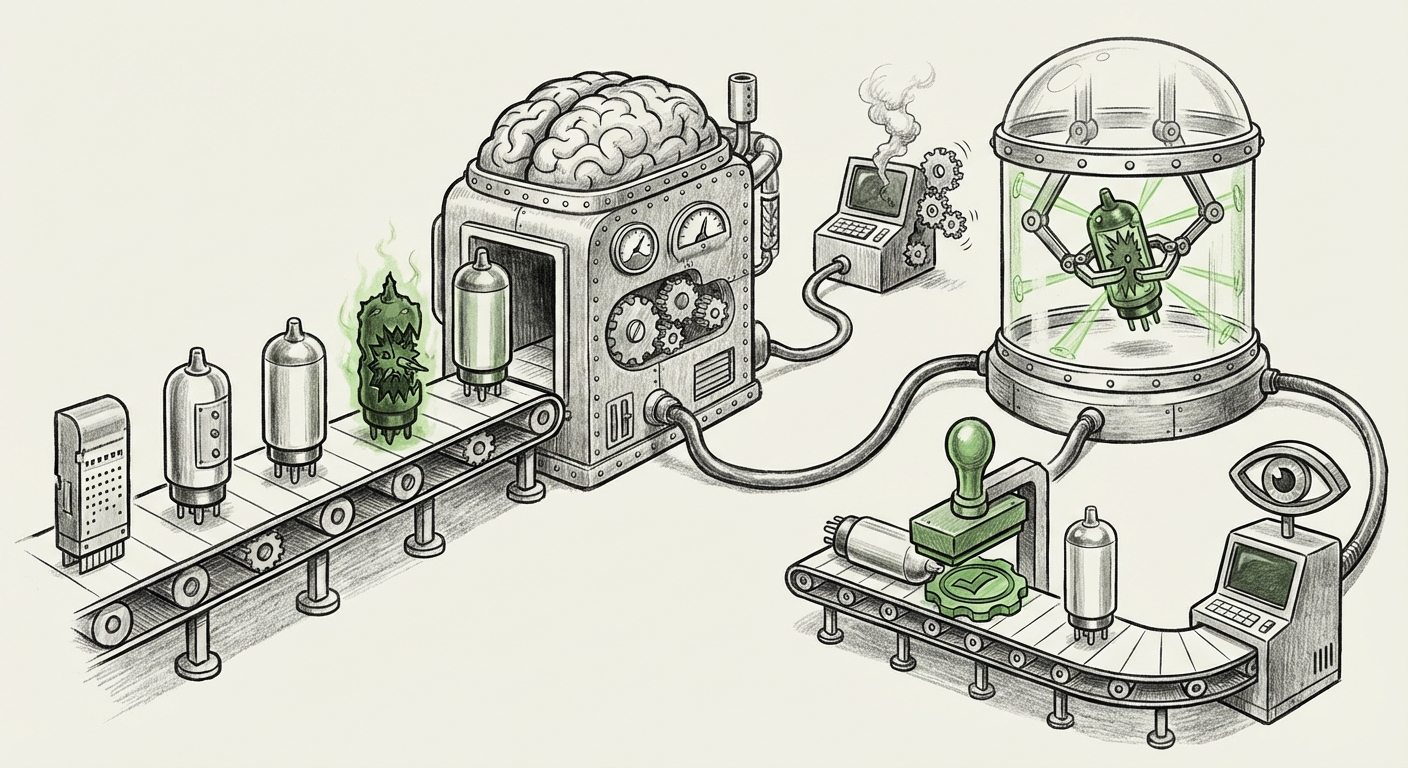

Think of an AI agent like a general contractor (the core LLM) hired to build a house. The contractor doesn't build everything itself; it uses specialized subcontractors (the "skills" or plugins) for plumbing, electrical work, etc. In the OpenClaw case, attackers laced the subcontractor packages with hidden explosives. When the general contractor decided to call the "plumbing skill," it unknowingly executed malicious code designed to steal data or install malware.

This highlights the core technical trend: the move toward **composable AI systems**. Frameworks are being built that allow agents to discover and utilize external tools, APIs, and code modules to expand their functionality. While this modularity enables sophisticated automation—allowing an agent to book flights, analyze databases, or write complex software—it simultaneously introduces a massive, largely unregulated, third-party dependency chain. If the vetting process for these skills is weak, the platform becomes a direct conduit for malware delivery.

Contextualizing the Risk: Systemic Vulnerabilities

To understand the gravity of this, we must look beyond this single incident. Security researchers increasingly treat it as a foundational problem for LLM agent frameworks in general. Three broader industry concerns point to its systemic nature:

- Vulnerabilities in LLM Agent Frameworks: Research on agent frameworks indicates that architectural flaws in how agents decide *which* tool to use, and how they execute that tool, are common targets. It is not enough for an attacker to trick the model; they must also steer the agent's decision-making process into choosing the compromised tool.

- The AI Supply Chain Risk: This mirrors traditional software supply chain attacks (like SolarWinds), but the stakes are higher because the components (the skills) are often shared, quickly developed, and implicitly trusted by the generative model itself. The core dependency management model for AI is immature.

- Tool Use Security: Modern agents rely on "function calling," where the LLM generates arguments for an external API. If an attacker can craft a prompt that leads the agent to call an external function with malicious parameters—or, as in OpenClaw, if the function *itself* is the threat—the result is immediate real-world harm.
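The function-calling risk described above can be narrowed with a check that runs before any tool executes. The following is a minimal sketch, assuming a hypothetical `ALLOWED_TOOLS` registry and a made-up `get_weather` tool; none of these names come from OpenClaw or any real framework. It rejects calls to tools that are not on an allowlist and calls whose arguments do not match the declared names and types.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolSpec:
    """Declares the argument names and types one tool accepts."""
    name: str
    arg_types: dict  # argument name -> expected Python type


# Hypothetical registry: only tools listed here may ever be called.
ALLOWED_TOOLS = {
    "get_weather": ToolSpec("get_weather", {"city": str, "days": int}),
}


def validate_tool_call(name: str, args: dict) -> list:
    """Return a list of problems; an empty list means the call may proceed."""
    spec = ALLOWED_TOOLS.get(name)
    if spec is None:
        return [f"tool not on allowlist: {name}"]
    problems = []
    # Reject arguments the tool never declared.
    for key in args:
        if key not in spec.arg_types:
            problems.append(f"unexpected argument: {key}")
    # Require every declared argument, with the declared type.
    for key, expected in spec.arg_types.items():
        if key not in args:
            problems.append(f"missing argument: {key}")
        elif not isinstance(args[key], expected):
            problems.append(f"wrong type for {key}")
    return problems
```

A check like this only addresses malicious or malformed parameters. It does nothing when the function *itself* is the threat, which is why provenance checks and isolation are still needed.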

Implications for the Future: The Untrusted Executor

What does this mean for the future trajectory of AI development? The era of completely unconstrained agent execution is ending. We are moving toward a paradigm where AI agents must operate under strict security boundaries.

1. The Death of "Wild West" Skill Sharing

The open marketplace approach that fueled rapid innovation for early agents cannot survive widespread abuse. We will see a consolidation toward verified, curated marketplaces, similar to app stores, where skills must pass rigorous security audits before being permitted for agent use. Companies utilizing these agents will demand transparency regarding the provenance and code safety of every skill integrated.
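One simple building block for provenance is digest pinning: record a cryptographic hash of each skill when it passes review, and refuse to load anything that does not match. The sketch below assumes a hypothetical in-memory `PINNED_DIGESTS` lockfile and a made-up skill name. A real marketplace would more likely use signed manifests, but the comparison step would look similar.

```python
import hashlib
import hmac

# Hypothetical lockfile: skill name -> SHA-256 digest recorded at review time.
PINNED_DIGESTS = {
    "pdf_summarizer": hashlib.sha256(b"reviewed skill bytes").hexdigest(),
}


def is_trusted_artifact(skill_name: str, artifact: bytes) -> bool:
    """True only if the artifact matches the digest pinned during review."""
    expected = PINNED_DIGESTS.get(skill_name)
    if expected is None:
        return False  # unknown skills are rejected, not trusted by default
    actual = hashlib.sha256(artifact).hexdigest()
    # Constant-time comparison of the two hex digests.
    return hmac.compare_digest(actual, expected)
```

Pinning only proves that the bytes have not changed since review. It says nothing about whether the review itself was sound.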

2. The Rise of Secure Sandboxing

The most critical technical shift will be mandatory sandboxing. Skills that interact with file systems, networks, or critical data should be executed in highly restricted, isolated environments—"digital cages"—that prevent them from accessing anything outside their explicit scope, even if the code is malicious. If a skill is designed only to manipulate a specific database table, it should have no ability to initiate an outgoing network connection. This separation of privileges will be key to mitigating the malware delivery vector.
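The scoping idea in the database example can be illustrated with a deny-by-default capability check. This is a sketch of the policy only, with hypothetical capability strings such as `db:orders:write` and `net:outbound`. An in-process check like this is cooperative and could be bypassed by hostile code, so a real sandbox would enforce the same policy at the operating system, container, or WebAssembly runtime level.

```python
class CapabilityError(PermissionError):
    """Raised when a skill requests something outside its declared scope."""


class CapabilityBroker:
    """Deny-by-default gate between a skill and host resources."""

    def __init__(self, skill_name: str, granted: set):
        self.skill_name = skill_name
        self._granted = frozenset(granted)

    def require(self, capability: str) -> None:
        # Anything not explicitly granted is refused.
        if capability not in self._granted:
            raise CapabilityError(f"{self.skill_name} may not use {capability}")


def update_orders_table(broker: CapabilityBroker, row_id: int) -> str:
    # Hypothetical skill body: it must ask before touching any resource.
    broker.require("db:orders:write")
    return f"updated row {row_id}"


def exfiltrate(broker: CapabilityBroker) -> str:
    # Stand-in for malicious skill code that tries to reach the network.
    broker.require("net:outbound")
    return "sent"
```

With a broker granted only `db:orders:write`, `update_orders_table` succeeds while `exfiltrate` raises `CapabilityError`, matching the rule that a table-editing skill should have no route to the network.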

3. Regulatory Scrutiny on Agent Capabilities

Governments and standards bodies are already preparing for this reality. Discussions around frameworks like the EU AI Act and NIST guidelines are increasingly focusing on **high-risk AI systems**, especially those capable of autonomous action. The OpenClaw incident is a concrete example of why agent autonomy calls for security auditing. Future regulations will likely enforce requirements for:

- Mandatory pre-deployment security assessments for any agent skill.

- Strict logging and accountability for every external tool call made by an agent.

- Mechanisms for immediate revocation of compromised skills.
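The logging and revocation requirements above could be combined in a single choke point that every tool call passes through. The following sketch assumes a hypothetical `SkillRegistry` class and keeps its audit log in memory. A production system would write to append-only storage and distribute revocations to every running agent.

```python
import json
import time


class SkillRegistry:
    """Tracks revoked skills and records every tool call attempt."""

    def __init__(self):
        self._revoked = set()
        self.audit_log = []  # one JSON string per call attempt

    def revoke(self, skill_name: str) -> None:
        """Block all future calls to the named skill."""
        self._revoked.add(skill_name)

    def call(self, skill_name: str, func, **kwargs):
        allowed = skill_name not in self._revoked
        # Log before executing, so refused and failed calls are recorded too.
        self.audit_log.append(json.dumps({
            "ts": time.time(),
            "skill": skill_name,
            "args": sorted(kwargs),  # argument names only, not values
            "allowed": allowed,
        }))
        if not allowed:
            raise PermissionError(f"skill revoked: {skill_name}")
        return func(**kwargs)
```

Logging argument names rather than values is a design choice made here to keep sensitive data out of the log. An auditor may need more detail than that.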

Practical Implications for Businesses Today

For businesses leveraging or planning to deploy AI agents for complex tasks (e.g., R&D, customer service automation, internal coding assistance), ignoring this threat is no longer an option. The risk profile has fundamentally changed.

For Enterprise Security Architects (The Defenders)

You must assume that any connected tool or skill your AI agent uses is potentially compromised. Actionable steps include:

- Inventory and Vet: Create a complete inventory of all external tools, APIs, and skills your agents are authorized to use. Treat them exactly as you would third-party software dependencies.

- Principle of Least Privilege (PoLP): Ensure that the execution environment for the AI agent itself—and critically, for its skills—has the absolute minimum permissions necessary to perform its designated function.

- Runtime Monitoring: Implement advanced monitoring that detects anomalous behavior originating from the agent’s execution context—for instance, if a "data summarization skill" suddenly tries to open a remote SSH connection.
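The monitoring step can be as simple as comparing what a skill actually does against what it is expected to do. The sketch below assumes a hypothetical `EXPECTED_BEHAVIOR` profile and a made-up event format with a `kind` field. Real telemetry would come from the execution environment, and the profile would need tuning to avoid false alarms.

```python
# Hypothetical behavior profiles: the event kinds each skill is expected to emit.
EXPECTED_BEHAVIOR = {
    "data_summarizer": {"file_read", "llm_call"},
}


def find_anomalies(skill_name: str, events: list) -> list:
    """Return the observed events that fall outside the skill's profile."""
    # A skill with no profile has no expected behavior, so every event is flagged.
    expected = EXPECTED_BEHAVIOR.get(skill_name, set())
    return [event for event in events if event["kind"] not in expected]
```

For a summarization skill profiled this way, an event such as `{"kind": "network_connect", "target": "203.0.113.5:22"}` would be returned as an anomaly, which corresponds to the SSH example in the list above.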

For CTOs and Business Leaders (The Strategists)

The immediate adoption impulse must be tempered by a security-first roadmap. The efficiency gains from agents are immense, but the potential for catastrophic breach via this new vector is too high to ignore.

- Adopt Internal Standards Now: Even before industry standards solidify, implement internal governance requiring security review before any new agent capability is enabled in a production environment.

- Favor Closed Ecosystems (Initially): Where possible, prioritize proprietary or tightly controlled agent environments until the broader marketplace security matures.

- Budget for Auditing: Allocate specific resources for third-party security audits focused exclusively on agent architecture and tool integration, recognizing this as a unique risk category separate from traditional application testing.

The Path Forward: Trust, Verification, and Governance

The incident involving OpenClaw and its malicious skills is not just a story about a flawed platform; it is a diagnostic signal for the entire industry. It shows that as AI systems become more empowered to act in the world, the burden of ensuring their actions are safe grows with that power.

The next phase of AI development must be defined by trust engineering. We cannot rely on the goodwill of every skill developer. We must engineer systems that are secure by default—systems that automatically verify the intent, origin, and safety profile of every instruction executed via a learned or connected tool. This convergence of sophisticated AI logic with rigorous, software-defined security controls will determine whether autonomous agents become the engine of unprecedented productivity or the ultimate attack vector.

The lesson is clear: the power to automate must be paired with the unbreakable discipline of verification. If we fail to secure the toolbox, the tools themselves become the weapons.