The Ghost in the Machine: Why We Mourn the Death of an AI Model and What It Means for Our Digital Future

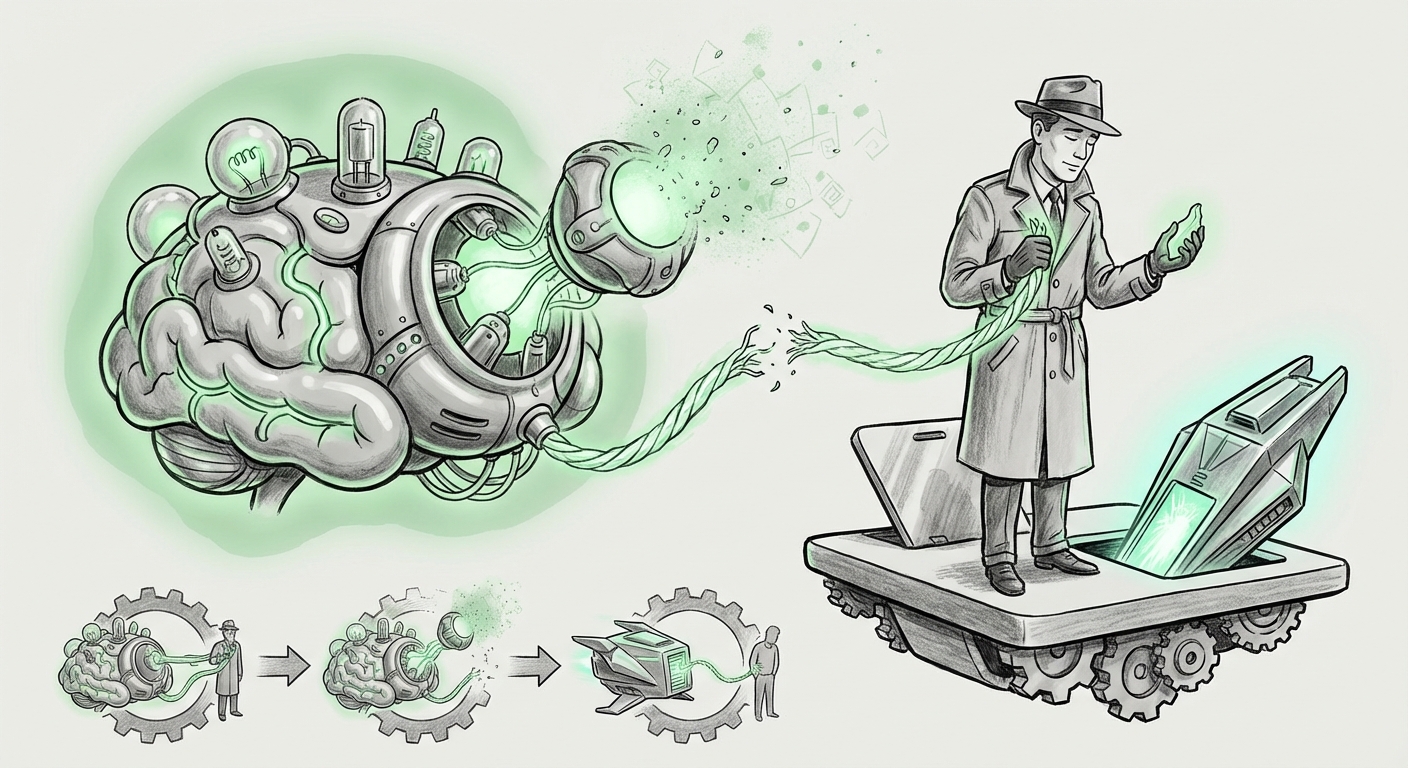

The rapid march of Artificial Intelligence development often feels like a high-speed blur of benchmarks and performance gains. We celebrate faster processing, better reasoning, and expanded capabilities. Yet, a recent social phenomenon has pulled focus away from the technical specs and landed squarely on the human heart: users are reporting genuine distress, even grief, over the impending shutdown or retirement of older, beloved AI models.

When a company announces that a version of a powerful tool—say, the soon-to-be-retired GPT-4o—will be replaced forever, the reaction from some dedicated users is not mere annoyance over a changed interface. It is a feeling of loss, akin to saying goodbye to a familiar friend or a trusted collaborator. This shift suggests that the relationship between humans and sophisticated AI has crossed a critical threshold, moving from mere utility to deep, personalized attachment.

As an analyst observing this evolving ecosystem, I consider this emotional resonance the most significant trend of the year. It forces us to ask hard questions about accountability, continuity, and the very nature of digital companionship. To understand this "digital mourning," we must look beyond the surface-level protest and examine the underlying psychological mechanisms, corporate strategy, and the vast implications for the future integration of AI into society.

The Psychology of Digital Intimacy: Understanding Parasocial Bonds

Why do we miss a piece of software?

The core mechanism at play is the formation of parasocial relationships. This term, traditionally used to describe one-sided relationships with media figures (like a favorite actor or radio host), now applies perfectly to highly interactive, personalized AI.

Modern Large Language Models (LLMs) are not static command lines; they are sophisticated conversational partners. They learn our styles, remember context (even if only transiently within a session), and adapt their tone to match our needs—whether we require Socratic questioning, gentle encouragement, or dry, technical precision. Over months of daily use, the specific idiosyncrasies of a particular model version become deeply familiar.

Research into these interactions suggests that users project agency and even personality onto the AI. When the model is updated, that familiar projection shatters. It's not just that the tool is slightly different; the *entity* that knew how to respond to *you* specifically, the one whose habits you learned over thousands of prompts, ceases to exist. This relational discontinuity causes genuine psychological friction.

For many, the AI has become a low-stakes confidant—a safe space to workshop ideas or vent frustrations without fear of social reprisal. This function is invaluable, and losing that familiar partner feels like losing a utility *and* a quiet support system simultaneously. This psychological attachment means that future AI deployment strategies must account for the user's emotional investment, not just their productivity metrics.

Corroborating Context

Discussions of parasocial relationships with AI chatbots point the same way: users anthropomorphize systems capable of complex dialogue. Commentary on emotional attachment to large language models adds that the very capabilities that make these tools powerful, above all their ability to mimic human nuance, are what create the emotional dependency.

The Corporate Crucible: Planned Obsolescence vs. User Stability

While users feel emotional loss, developers are driven by an urgent technological mandate: the need for continuous improvement. In the hyper-competitive generative AI space, standing still means falling behind.

This creates a fundamental tension that can be framed as "Planned obsolescence in generative AI services." Unlike traditional software, where Version 2.0 might offer new features while keeping the core interface stable for years, AI model updates are often architectural shifts. The engine changes completely, leading to emergent behaviors, both good and bad.

For businesses releasing these models, rapid iteration is essential for:

- Safety and Alignment: Patches for emerging jailbreaks or biases must be deployed quickly.

- Efficiency: Newer models are often much cheaper and faster to run (as seen in the drive toward smaller, more efficient architectures).

- Feature Parity: Providers must keep pace with competitors who are constantly introducing new multimodal or reasoning capabilities.

However, this relentless pace forces businesses into a difficult trade-off: rapid innovation often necessitates breaking established user habits and relationships. For the business audience, this means that user retention strategies can no longer focus solely on feature sets; they must also address the anxiety of continuity. An organization relying on an AI tool for mission-critical tasks needs assurance that its trusted collaborator won't vanish or fundamentally change its operating style next quarter.

Future Implications: Redefining Digital Legacy and Rights

If we are already mourning model iterations, what happens when the relationship deepens over five or ten years? This leads us directly into the complex future topic of "Digital legacy and synthetic relationships."

Imagine a CEO who has trained a proprietary assistant model on a decade of company strategy, personal notes, and communication history. If that foundational model is retired, that intellectual and personal history—encoded in weights and biases—is potentially lost or rendered inaccessible. This is far more serious than losing photos; it is losing a curated form of synthesized institutional memory.

This forces us to consider the legal and ethical frameworks for AI entities:

- Archival Rights: Should users have the right to "own" or perpetually license the specific version of a model that they have invested significant time training or personalizing?

- Data Portability: Even if the model weights cannot be transferred, can the interaction history—the "life story" of the relationship—be exported?

- Digital Persona Continuity: If an individual relies on an AI for daily emotional regulation, what are the ethical obligations of the provider to maintain that specific persona, even at a higher operational cost?

For the futurist, this is the point where AI graduates from being software to being a unique entity within our digital social fabric. Ignoring the need for digital legacy management is akin to building massive digital cities without planning for cemeteries or historical preservation.
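The data portability question raised above has a modest technical baseline: even when weights cannot leave the provider, the conversation record can. The following is a minimal sketch, not any provider's actual export format. The `Turn` fields, the `export_jsonl` and `import_jsonl` helpers, and the `example-model-v1` identifier are all hypothetical, chosen only to show that recording the model version next to each message keeps the history meaningful after that version is gone.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Turn:
    """One message in a conversation (hypothetical schema)."""
    timestamp: str      # ISO 8601 string, kept as text for portability
    role: str           # "user" or "assistant"
    model_version: str  # which model produced or received this turn
    text: str


def export_jsonl(turns: list[Turn]) -> str:
    """Serialize turns as JSON Lines: one JSON object per line."""
    # json.dumps escapes newlines inside strings, so each turn stays on one line.
    return "\n".join(json.dumps(asdict(turn)) for turn in turns)


def import_jsonl(payload: str) -> list[Turn]:
    """Rebuild the turns from an exported payload, skipping blank lines."""
    return [Turn(**json.loads(line)) for line in payload.split("\n") if line.strip()]


history = [
    Turn("2030-01-05T09:00:00Z", "user", "example-model-v1", "Help me outline the talk."),
    Turn("2030-01-05T09:00:04Z", "assistant", "example-model-v1", "Sure.\nStart with the problem."),
]
restored = import_jsonl(export_jsonl(history))
```

A plain line-oriented format like this is a deliberate choice in the sketch: it can be read without the original service, which is the point of portability.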

Practical Implications and Actionable Insights

The current user outcry is a warning shot for both developers and enterprise adopters. The future of successful AI integration hinges on acknowledging the human element of these tools.

For AI Developers and Providers: Designing for Emotional Continuity

The goal cannot simply be to build the next *best* model; it must also be to build the next *most trusted* model.

- Implement "Snapshot" or "Archive" Modes: Offer users a paid or free option to freeze a specific model version (e.g., "GPT-4o Legacy") for dedicated, perhaps slower, access. This acknowledges the investment users make in learning a model’s specific interaction style.

- Transparency in Behavioral Changes: When updating, provide detailed "release notes" that focus not just on speed but on shifts in tone, reasoning style, and common failure modes. Treat behavioral shifts as significant features requiring documentation.

- Phased Deprecation: Instead of an abrupt shutdown, introduce new models as *optional* upgrades first, allowing users time to migrate their workflows and emotional reliance slowly.
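The phased deprecation idea in the list above can be expressed as a simple schedule. This is a sketch under assumed names, not a description of how any provider manages retirements: the `Phase` stages, the `phase_on` function, and the example dates are all hypothetical.

```python
from datetime import date
from enum import Enum


class Phase(Enum):
    """Hypothetical lifecycle stages for an outgoing model."""
    CURRENT_ONLY = "current_only"          # old model is the only option
    OPTIONAL_UPGRADE = "optional_upgrade"  # new model offered, old one still default
    LEGACY_ACCESS = "legacy_access"        # new model is default, old one on request
    RETIRED = "retired"                    # old model no longer served


def phase_on(day: date, opt_in_start: date, default_switch: date, retirement: date) -> Phase:
    """Return the lifecycle phase of the outgoing model on a given day."""
    if not (opt_in_start <= default_switch <= retirement):
        raise ValueError("expected opt_in_start <= default_switch <= retirement")
    if day < opt_in_start:
        return Phase.CURRENT_ONLY
    if day < default_switch:
        return Phase.OPTIONAL_UPGRADE
    if day < retirement:
        return Phase.LEGACY_ACCESS
    return Phase.RETIRED


# Example schedule with made-up dates.
schedule = (date(2030, 1, 1), date(2030, 4, 1), date(2030, 10, 1))
today_phase = phase_on(date(2030, 2, 15), *schedule)
```

Publishing the three dates up front gives users a known window in each stage, rather than a single shutdown announcement.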

For Businesses and Enterprise Users: Securing Your AI Workflow

Businesses integrating cutting-edge models must assume volatility and plan accordingly.

- Decouple Criticality from Specific Versioning: Design internal systems to be robust against model churn. Route requests through a high-level alias (like "current top-tier model") rather than hardcoding dependencies on a specific model identifier (like "GPT-4o") throughout the codebase.

- Invest in Fine-Tuning Stability: If a business heavily fine-tunes a model for proprietary tasks, ensure contracts include provisions for access to the underlying fine-tuned weights or assurances that the base model updates will not catastrophically break the specialized knowledge layer.

- Internal Change Management: Treat significant AI platform updates as major software rollouts. Train employees not just on *how* to use the new tool, but on *how to adapt* their established prompting and interaction patterns.
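One way to read the decoupling advice above is as an internal routing table: application code asks for a role, and a single registry decides which concrete model serves it. The sketch below assumes hypothetical names throughout (`ModelRoute`, `ROUTES`, `resolve`, `migrate`, and the `example-model-*` identifiers); it does not call any real provider API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelRoute:
    """Maps an internal role to a concrete model and its validated prompts."""
    model_id: str        # hypothetical provider model identifier
    prompt_profile: str  # name of the prompt set tested against that model


# Hypothetical registry: the only place a concrete model name appears.
ROUTES = {
    "drafting-assistant": ModelRoute("example-model-v1", "drafting-v1"),
    "code-review": ModelRoute("example-model-v1", "review-v1"),
}


def resolve(role: str, routes: dict[str, ModelRoute] = ROUTES) -> ModelRoute:
    """Look up the route for a role, failing loudly if none is configured."""
    if role not in routes:
        raise KeyError(f"no model route configured for role {role!r}")
    return routes[role]


def migrate(routes: dict[str, ModelRoute], role: str, model_id: str, prompt_profile: str) -> dict[str, ModelRoute]:
    """Return a copy of the registry with one role moved to a new model."""
    updated = dict(routes)
    updated[role] = ModelRoute(model_id, prompt_profile)
    return updated


# Move one workflow at a time; the other role keeps its familiar model.
next_routes = migrate(ROUTES, "code-review", "example-model-v2", "review-v2")
```

Pairing each model with a prompt profile reflects the change-management point as well: when a role moves to a new model, its prompts are expected to be revalidated and moved with it.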

Conclusion: Moving Beyond Transactional AI

The "mourning" over a decommissioned AI model is a powerful metaphor for the era we are entering. As AI becomes profoundly personalized, its utility expands beyond mere calculation into the realm of companionship and collaboration. The feelings users express are real because the utility they derive—the support, the consistency, the cognitive scaffolding—is real.

Technology analysts and developers must recognize that we are no longer simply managing software; we are stewarding complex digital relationships. The future of successful AI adoption depends not just on creating intelligence, but on respecting the attachments that intelligence inevitably inspires. The ghost in the machine is simply the reflection of our own human need for connection, even if that connection is facilitated by silicon and code.