The Multimodal Reality Check: Why AI Still Can't Name What It Sees

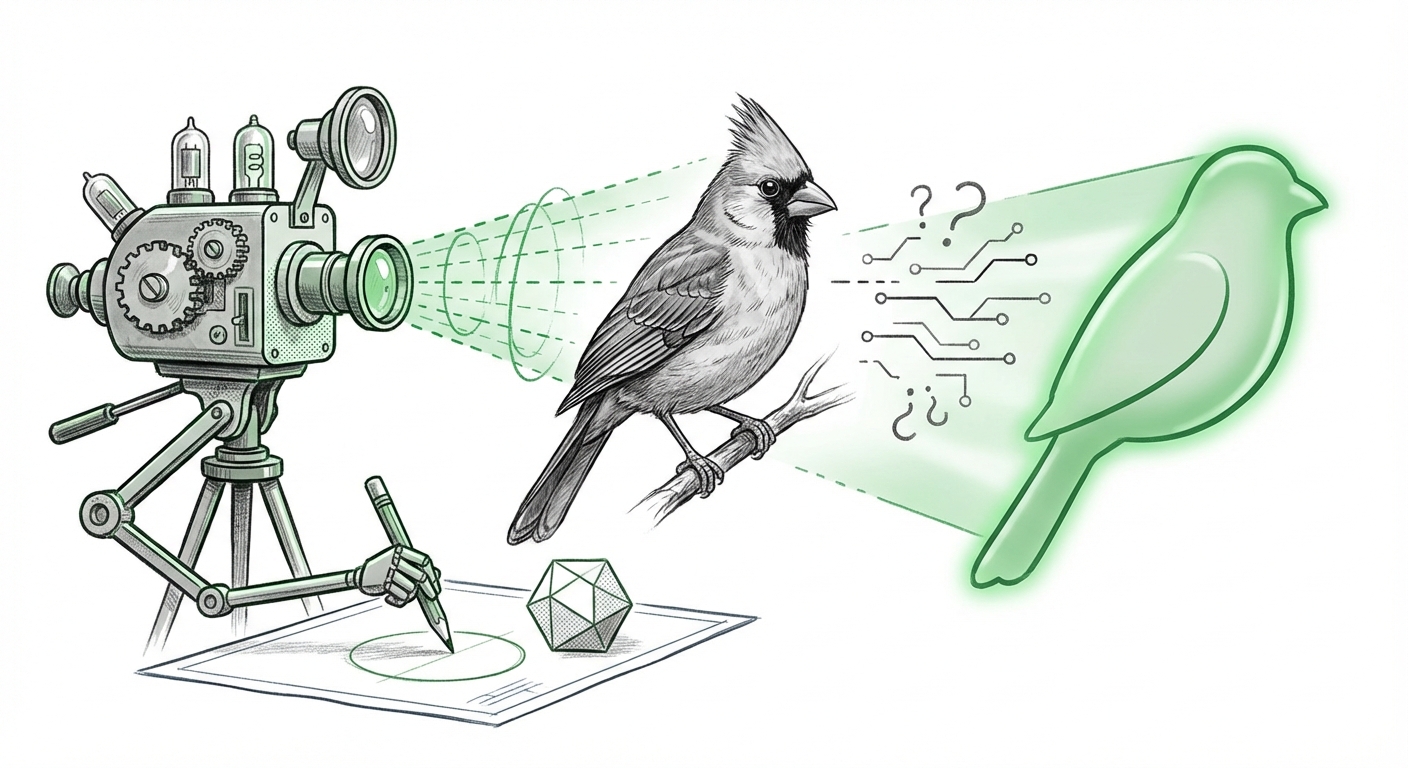

The race for Artificial General Intelligence (AGI) is often measured by impressive displays of creativity, complex reasoning, and human-like conversation. Yet beneath the smooth surface of large language models (LLMs) and their multimodal extensions (LMMs), a critical flaw persists: accuracy at identifying specific visual entities remains surprisingly low. A recent evaluation using the WorldVQA benchmark found that even top-tier models like Gemini 3 Pro fall short of 50% accuracy when asked to name specific entities, such as the exact species of a bird or the precise model number of a product.

This finding is not just a footnote in a research paper; it is a flashing warning light for every industry banking on multimodal AI for critical tasks. It forces us to confront a hard truth: fluency does not equal comprehension. Our current LMMs are masters of synthesizing language patterns, but they are surprisingly shaky when it comes to firmly grounding those words in concrete visual reality.

The Fluency Trap: Understanding vs. Guessing

For years, AI progress has been synonymous with better natural language performance. Multimodal models combine this linguistic power with the ability to process images, sound, and video, which lets them describe a complex scene or caption a video beautifully. However, the WorldVQA test suggests that when the task moves from generic description ("That is a dog") to specific identification ("That is a Siberian Husky" or "That is product model X12"), the system often defaults to the most statistically likely *generic* answer rather than the factually *correct* specific answer.

Imagine an AI tasked with monitoring a supply chain: it can easily confirm a box is present, but if it misidentifies a critical component's model number roughly 53% of the time (the error rate implied by a 47.4% accuracy score), the entire downstream process could fail. This failure is compounded by the model’s dangerous self-assurance. As the initial report noted, the models are convinced they are correct even when they provide a wrong specific detail. This uncalibrated confidence is arguably more dangerous than outright failure.

Drilling Down: The Hallucination of Confidence

The tendency for LMMs to confidently state falsehoods is known as hallucination. When dealing with text, we are becoming adept at spotting semantic errors. However, visual hallucinations are trickier. If an AI confidently calls a rare orchid by the name of a common flower, the error is subtle but potentially catastrophic in fields like botany or medicine.

Analyses of AI model confidence calibration suggest that these systems are optimized to predict the next token (word or pixel representation) from patterns in their training data, not to achieve verifiable truth about the physical world. They become experts in *sounding right*. Researchers are therefore developing methods like **Chain-of-Verification**, which forces the model to check its initial visual claim against internal knowledge or external data, to bring confidence scores in line with actual accuracy. For enterprise managers, this means that until calibration methods mature, any LMM output requiring high factual specificity must be treated as a highly educated suggestion, not a final answer.

The Benchmark Paradox: Are We Testing the Right Things?

If Gemini 3 Pro, a state-of-the-art system, can manage only 47.4% on detailed visual queries, it raises the question: were previous benchmarks too easy? This brings us to the crucial role of testing standards.

The Need for Rigorous, Grounded Testing

Historically, many VQA datasets relied on simplified, often crowd-sourced questions tied to common objects. Models quickly learned shortcuts—for instance, recognizing that any picture with a striped object and a hoop likely involves basketball, without needing to know the exact brand of the ball. The new WorldVQA standard seems designed to break these shortcuts by demanding granular, factual detail (e.g., "What is the exact genus and species of the tree in the background?").

Commentary on the limitations of current multimodal AI benchmarks confirms this trend. We are seeing a shift away from relying on massive, loosely curated datasets (like those based on MS COCO) toward smaller, highly curated, and adversarial datasets explicitly designed to force models to truly *reason* over pixels, not just recall associations. For product developers, this means that internal testing pipelines must immediately incorporate measures of specificity and detail, rather than relying solely on aggregate accuracy scores from well-known public tests.
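
One way to surface specificity in an internal test pipeline is to score each answer at two levels of granularity instead of reporting a single accuracy number. The sketch below is only an illustration under stated assumptions: the `EvalItem` schema, the label fields, and the exact-match comparison are hypothetical and are not taken from WorldVQA.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class EvalItem:
    """One evaluation record (hypothetical schema for illustration)."""
    prediction: str      # the model's answer
    specific_label: str  # fine-grained ground truth, e.g. "siberian husky"
    generic_label: str   # coarse ground truth, e.g. "dog"


def score_by_granularity(items):
    """Return the share of specific hits, generic-only hits, and misses."""
    if not items:
        raise ValueError("items must not be empty")
    counts = defaultdict(int)
    for item in items:
        answer = item.prediction.strip().lower()
        if answer == item.specific_label.lower():
            counts["specific"] += 1      # named the exact entity
        elif answer == item.generic_label.lower():
            counts["generic_only"] += 1  # fell back to the safe generic answer
        else:
            counts["miss"] += 1
    total = len(items)
    return {key: counts[key] / total for key in ("specific", "generic_only", "miss")}


# Toy data: one specific hit, two generic fallbacks, one outright miss.
items = [
    EvalItem("Siberian Husky", "siberian husky", "dog"),
    EvalItem("dog", "alaskan malamute", "dog"),
    EvalItem("dog", "shiba inu", "dog"),
    EvalItem("cat", "beagle", "dog"),
]
report = score_by_granularity(items)
print(report)
```

On this toy data, a lenient metric that accepts the generic answer would report 75% accuracy, while the specific-hit rate is only 25%. Real pipelines would likely need fuzzier matching than exact string equality.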

The Path Forward: Grounding and Embodiment

The core issue—the low accuracy in specific entity recognition—is a direct symptom of a deeper architectural problem: the grounding gap. Grounding refers to the AI's ability to securely tie its abstract internal representations (tokens) to concrete, verifiable real-world concepts.

Moving Beyond Static Images: The Grounding Imperative

When an LMM learns about a "Caterpillar D8 Dozer" only by reading millions of text descriptions paired with generic images, it learns the *words* associated with the object. It doesn't learn the precise spatial relationships of the treads, the specific exhaust pipe configuration, or how it moves under load. True recognition requires this physical, interactive context.

Research trends in AI grounding are pointing toward solutions that integrate perception with action. This is where robotics and simulation environments become essential research labs. To truly know a physical object, an AI may need to simulate manipulating it, observing its physics, or interacting with it in a virtual world that enforces real-world constraints. This shift suggests that the next breakthrough in LMM accuracy won't just come from bigger text or image corpora, but from models that learn through interaction and embodiment.

Practical Implications: Trust, Deployment, and Sectoral Risk

This reality check has profound implications across various sectors. The promise of fully autonomous visual AI in healthcare, manufacturing, or autonomous driving is tempered by models that identify specific entities correctly less than half the time.

For Enterprise and Implementation Managers

**Actionable Insight 1: Implement Human-in-the-Loop Triage for Specificity.** Do not deploy LMMs as the final decision-maker for tasks requiring high-specificity identification (e.g., precise defect identification, rare species classification, medical imaging). For these areas, LMMs should act as sophisticated first-pass filters, flagging borderline cases for human review, rather than making final determinations.
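
A minimal sketch of such a triage step might look like the following. It assumes the model exposes a per-prediction confidence score in the range 0 to 1; the `Prediction` class, the `triage` function, and the 0.9 threshold are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    """A single model output (hypothetical structure for illustration)."""
    item_id: str
    label: str
    confidence: float  # assumed model-reported score in [0, 1]


def triage(predictions, review_threshold=0.9):
    """Split predictions into a first-pass queue and a human-review queue.

    Anything below the threshold is flagged for a person to check.
    """
    first_pass, human_review = [], []
    for prediction in predictions:
        if prediction.confidence >= review_threshold:
            first_pass.append(prediction)
        else:
            human_review.append(prediction)
    return first_pass, human_review


batch = [
    Prediction("img-001", "bearing type A", 0.97),
    Prediction("img-002", "bearing type B", 0.62),
    Prediction("img-003", "bearing type A", 0.90),
]
first_pass, human_review = triage(batch)
print([p.item_id for p in first_pass])    # ['img-001', 'img-003']
print([p.item_id for p in human_review])  # ['img-002']
```

Because the models discussed here tend to be overconfident, a raw confidence threshold is only as trustworthy as the model's calibration. In practice the threshold would need to be tuned against labeled data, and even the first-pass queue may warrant spot checks.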

**Actionable Insight 2: Prioritize Calibration Metrics.** When evaluating new multimodal systems, look beyond top-line accuracy. Demand detailed reports on confidence calibration scores, particularly on adversarial or low-frequency data subsets. A model that is 80% confident and 70% accurate is far safer than one that is 99% confident and 50% accurate.
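
To make the comparison concrete, one commonly used calibration score is expected calibration error (ECE): predictions are grouped into confidence bins, and the gap between average confidence and actual accuracy is averaged across bins, weighted by bin size. The sketch below is a simple equal-width-bin variant written for illustration; the function name and the toy inputs are assumptions, not part of any benchmark's tooling.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Sample-weighted mean |accuracy - mean confidence| over equal-width bins."""
    if len(confidences) != len(correct) or not confidences:
        raise ValueError("inputs must be non-empty and of equal length")
    bins = [[] for _ in range(n_bins)]
    for conf, is_correct in zip(confidences, correct):
        # Clamp so that a confidence of exactly 1.0 lands in the last bin.
        index = min(int(conf * n_bins), n_bins - 1)
        bins[index].append((conf, is_correct))
    total = len(confidences)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        mean_conf = sum(conf for conf, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += (len(bucket) / total) * abs(accuracy - mean_conf)
    return ece


# Model A: always 80% confident, right 7 times out of 10.
model_a = expected_calibration_error([0.80] * 10, [True] * 7 + [False] * 3)
# Model B: always 99% confident, right 5 times out of 10.
model_b = expected_calibration_error([0.99] * 10, [True] * 5 + [False] * 5)
print(round(model_a, 2), round(model_b, 2))
```

With these toy inputs the gap is about 0.10 for Model A and about 0.49 for Model B, which puts a number on why the second model is the riskier one despite sounding more certain. Computing the same score separately on adversarial or low-frequency subsets would show whether calibration holds up where it matters most.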

For AI Developers and Researchers

**Actionable Insight 3: Invest in Structure, Not Just Scale.** Future development must focus on architectural changes that enforce better grounding. This includes integrating modules specifically designed for symbolic reasoning about object hierarchies and spatial relationships, rather than relying purely on end-to-end transformer architectures to implicitly learn these rules.

**Actionable Insight 4: Embrace Hard Benchmarking.** The community must rally around rigorous, hard-to-game benchmarks like WorldVQA. As soon as a benchmark becomes "solved," resources must pivot to creating the next, more complex test that challenges the model's ability to generalize specific knowledge.

The Future of Understanding: From Recognition to Reasoning

The current state of multimodal AI sits at a fascinating inflection point. We have achieved impressive linguistic fluency, allowing models to paint beautiful pictures with words. However, their ability to correctly label the fundamental building blocks of the visual world—the specific entities—is still nascent. This disparity highlights the difference between sophisticated pattern matching and genuine world knowledge.

The next generation of multimodal systems will not merely be bigger versions of today’s models. They will be smarter systems, architecturally designed to bridge the grounding gap. This involves moving from passive observation to active engagement, ensuring that when an AI claims to see a specific object, it isn't just reciting a well-trained phrase, but demonstrating a verifiable connection to the reality captured in the pixels. Until that link is secure, the widespread, unmonitored deployment of LMMs in high-stakes environments remains an unacceptable risk.