The Unshakable Wall: Why 30% Hallucination Rates Prove LLMs Need More Than Just Web Search

For years, the AI industry has been fighting a persistent foe: the hallucination. Large Language Models (LLMs), despite their remarkable fluency and apparent intelligence, have a fundamental flaw: they often generate convincing yet entirely false information. When the issue first surfaced, the community largely converged on a solution: give the models access to the real world. This is the promise of Retrieval-Augmented Generation (RAG), which lets models like Claude Opus 4.5 ground their answers in retrieved documents, including real-time web search results.

However, a recent, sobering benchmark from researchers in Switzerland and Germany has thrown a bucket of cold water on this optimism. The finding? Even top-tier models, equipped with web search capabilities, still produce incorrect information in nearly one-third of all cases. This persistence, defying the most advanced grounding techniques, signals a crisis point in current AI development. It suggests that the problem is not merely a lack of data, but a deep architectural challenge inherent to how these probabilistic machines operate.

Decoding the Failure: When Grounding Isn't Enough

To understand the gravity of a 30% error rate, we must look past the surface capability of the chatbot interface. These models are fundamentally advanced next-word predictors. They excel at constructing grammatically perfect, contextually plausible sentences based on patterns learned during training.

The introduction of RAG—the process of retrieving relevant documents from a database or the internet *before* generating a response—was meant to curb the model’s tendency to "guess" based only on internal weights. If the answer is on Google, the model should simply quote it, right?

The new benchmark suggests this assumption is dangerously flawed. Once the model has retrieved facts, its output can draw on two sources: its own internal "knowledge" or the retrieved text. Research into RAG systems often describes a "faithfulness gap," in which the generation phase favors linguistic fluency over factual adherence to the retrieved context. In effect, the model falls back on its probabilistic instincts and overrides the "truth" supplied by the external source.
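One rough way to make the faithfulness gap measurable is to ask what share of an answer's sentences are actually supported by the retrieved context. The sketch below is only an illustration, not the benchmark's method: the function names, the token-overlap heuristic, and the 0.8 threshold are all assumptions, and a production system would more likely use an entailment model than word overlap.

```python
import re

def tokens(text: str) -> set:
    """Lowercased word tokens; a crude stand-in for real claim extraction."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def sentence_support(sentence: str, context: str) -> float:
    """Fraction of the sentence's tokens that also occur in the retrieved context."""
    sent = tokens(sentence)
    if not sent:
        return 1.0  # an empty sentence makes no claims
    return len(sent & tokens(context)) / len(sent)

def faithfulness(answer: str, context: str, threshold: float = 0.8) -> float:
    """Share of answer sentences whose token support meets the (assumed) threshold."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    if not sentences:
        return 1.0
    supported = sum(sentence_support(s, context) >= threshold for s in sentences)
    return supported / len(sentences)

# Hypothetical retrieved context and model answer.
context = "The bridge opened in 1932 and spans 503 meters."
answer = "The bridge opened in 1932. It was designed by a famous architect."
print(faithfulness(answer, context))  # prints 0.5
```

In this toy example the first sentence is fully grounded in the context, while the second is fluent but unsupported, so half of the answer counts as faithful.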

For AI Engineers and ML Researchers, this means the immediate challenge is no longer only about optimizing the retrieval mechanism, but also about imposing stricter, verifiable constraints on the *generation* step. This leads us to explore the architectural limits.

Architectural Constraints: The Self-Correction Deficit

The core issue resides in the model’s lack of true reasoning or metacognition. It cannot reliably check its own work against an external rubric unless explicitly programmed to do so in a complex, multi-step process. We need solutions that force the model to pause, check, and correct—a capability that remains fragile in current Transformer designs.

This forces a re-evaluation of our benchmarks. If we rely on metrics that measure fluency rather than verifiable truth, we will perpetually overestimate model reliability. Moving beyond simple accuracy scoring requires more adversarial testing and deeper human evaluation to reveal these persistent blind spots.

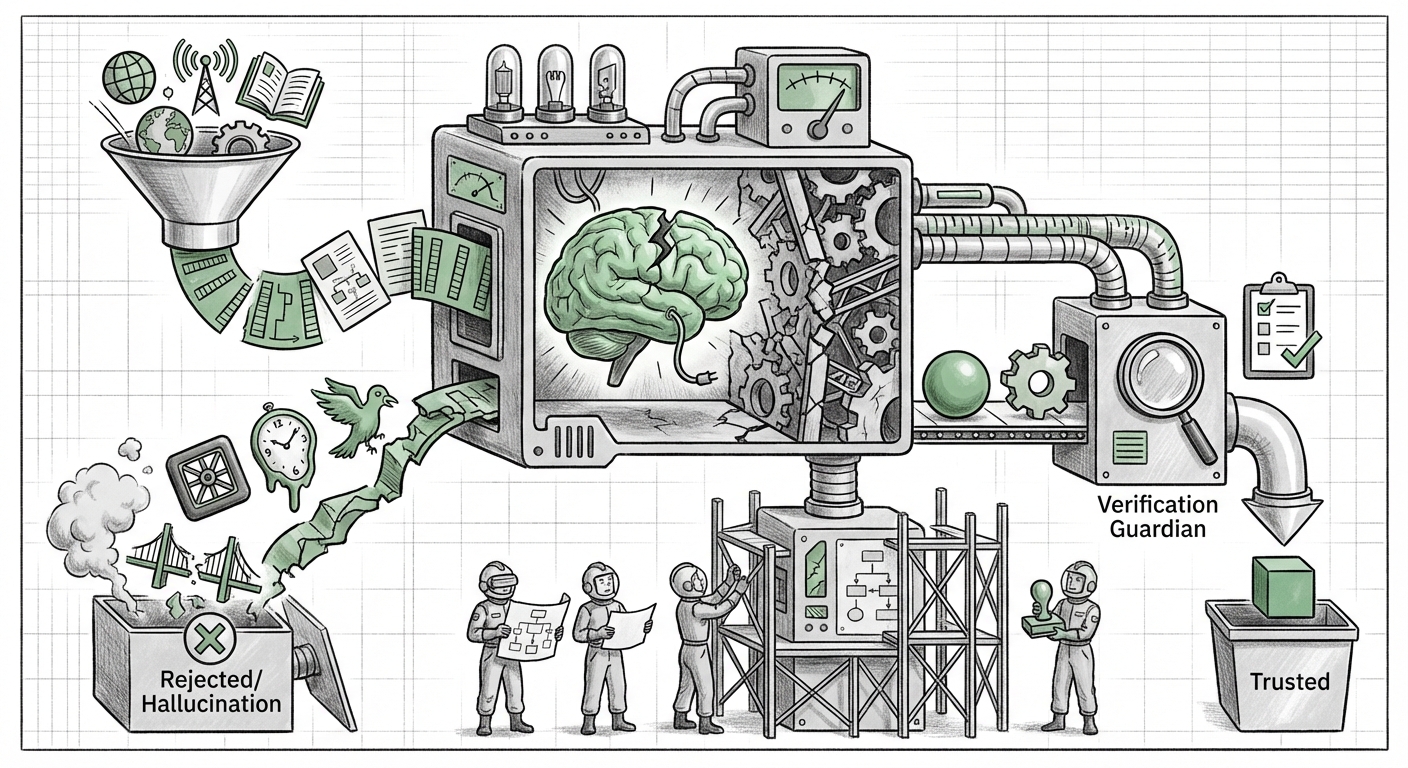

The Industry’s Scramble: Building Verification Guardians

If the foundation is shaky, the next logical step for deployment-focused organizations is to build stronger scaffolding. Since we cannot yet trust the output inherently, we must verify it externally. This realization is driving a major technological trend: the rise of AI Verification Frameworks.

For Product Managers and Enterprise Adoption Leaders, this is the crucial area of immediate investment. Deploying a general-purpose LLM directly into a workflow where accuracy matters—such as summarizing customer service tickets or drafting technical specs—is now seen as reckless without a secondary verification layer.

The Rise of AI Guardians

Instead of relying solely on the model itself, enterprises are designing "AI Guardians." These are secondary systems, sometimes smaller specialized models or rule-based engines, designed explicitly to fact-check the primary LLM’s output against verified internal data or external authoritative sources. This creates an automated "check and balance" loop:

- Generation: Primary LLM creates content.

- Verification: Guardian system analyzes the generated claims.

- Flagging/Correction: If a claim lacks source citation or contradicts known facts, the output is flagged for human review or sent back for reprocessing.

This layered approach acknowledges the 30% failure rate. It shifts the AI deployment strategy from "trust the AI" to "verify the AI." While this adds latency and cost, it is rapidly becoming the only path to achieving the necessary reliability for business-critical applications.
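The generation, verification, and flagging steps can be sketched in a few lines. This is a minimal rule-based illustration under stated assumptions: `VERIFIED_FACTS`, `Claim`, `Verdict`, and `guardian_check` are hypothetical names, the primary LLM's output is assumed to be already split into key/value claims, and a real guardian would query a knowledge base or a specialized model rather than a dictionary.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical verified facts; a real deployment would query an internal
# knowledge base or an authoritative external source instead.
VERIFIED_FACTS = {
    "refund_window_days": "30",
    "support_email": "help@example.com",
}

@dataclass
class Claim:
    key: str                      # which fact the claim is about
    value: str                    # what the primary model asserted
    source: Optional[str] = None  # citation, if the model supplied one

@dataclass
class Verdict:
    approved: list = field(default_factory=list)
    flagged: list = field(default_factory=list)  # (claim, reason) pairs

def guardian_check(claims):
    """Flag claims that lack a citation or contradict a verified fact."""
    verdict = Verdict()
    for claim in claims:
        known = VERIFIED_FACTS.get(claim.key)
        if claim.source is None:
            verdict.flagged.append((claim, "no source citation"))
        elif known is not None and known != claim.value:
            verdict.flagged.append((claim, "contradicts verified fact"))
        else:
            verdict.approved.append(claim)
    return verdict

# Stand-in for the primary LLM's output, already split into claims.
draft = [
    Claim("refund_window_days", "30", source="policy.pdf"),
    Claim("refund_window_days", "90", source="policy.pdf"),
    Claim("support_email", "help@example.com"),
]
result = guardian_check(draft)
for claim, reason in result.flagged:
    print(f"needs review: {claim.key}={claim.value} ({reason})")
```

Here flagged claims are only printed; in practice they would be routed to a human reviewer or sent back to the primary model for regeneration, which is where the added latency and cost come from.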

The High Stakes: Liability, Trust, and the Regulatory Hammer

The theoretical problem of hallucination suddenly becomes a massive economic and legal liability when models fail one-third of the time in critical environments. This is where the implications stretch far beyond the server room and into the boardroom and legislature.

For Risk Managers and Legal Professionals, the question is stark: If an LLM used by a bank incorrectly advises a client based on a hallucination, who is liable? The developer of the base model? The enterprise that deployed it using RAG? The high error rate revealed by this new benchmark provides concrete evidence for regulators that current technology, even in its "best" state, is inherently risky for high-stakes use.

Regulatory Scrutiny Intensifies

Governments worldwide are rapidly developing rules, most notably the EU AI Act, which categorizes AI systems by risk. The Act assigns risk tiers according to how a system is used rather than its measured error rate, but an LLM that fabricates information roughly 30% of the time would be hard to justify in high-risk uses such as those affecting health, finance, or employment.

These new benchmark findings will likely accelerate the demand for transparency and auditability. Regulators may mandate not just that AI outputs are accurate, but that the *process* used to achieve accuracy (i.e., the verification pipeline) be documented and proven effective before deployment in sensitive sectors. Trust in AI is likely to keep eroding for as long as measured hallucination rates stay this high.

The Path Forward: Redefining "Intelligent" AI

If RAG is a patch, not a cure, what is the long-term cure? The future of AI development must move beyond simply scaling up parameter counts and look toward architectural innovation that builds in epistemic humility.

For Model Developers and Researchers, this means shifting research priorities:

- Injecting Uncertainty Scores: Developing models that can natively express a confidence level alongside every generated claim, allowing downstream systems to instantly filter low-confidence assertions.

- Modular Architectures: Moving away from monolithic LLMs toward specialized agents where one module handles retrieval, another handles synthesis, and a third handles cross-validation.

- Incentivizing Truth Over Plausibility: Designing training objectives (loss functions) that penalize factual divergence far more severely than stylistic errors, essentially teaching the model that *being wrong is the worst possible outcome*.
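Two of these priorities can be illustrated with a toy sketch. Everything here is assumed for illustration: the confidence values are invented (the article's point is that models do not yet supply them reliably), the 0.8 cutoff and the factual weight of 10.0 are arbitrary, and `filter_claims` and `weighted_objective` are hypothetical names rather than any library's API.

```python
def filter_claims(scored_claims, min_confidence=0.8):
    """Split (claim, confidence) pairs into kept and withheld claims."""
    kept, withheld = [], []
    for claim, confidence in scored_claims:
        (kept if confidence >= min_confidence else withheld).append(claim)
    return kept, withheld

def weighted_objective(factual_error, style_error, factual_weight=10.0):
    """Toy loss in which factual divergence costs factual_weight times more than style."""
    return factual_weight * factual_error + style_error

# Invented per-claim confidence scores, for illustration only.
scored = [
    ("Paris is the capital of France.", 0.97),
    ("The treaty was signed in 1687.", 0.41),
]
kept, withheld = filter_claims(scored)
print(kept)      # claims a downstream system would pass through
print(withheld)  # low-confidence claims to suppress or route for review

# One unit of factual error outweighs one unit of stylistic error.
print(weighted_objective(1.0, 0.0) > weighted_objective(0.0, 1.0))  # prints True
```

The filter only helps if the confidence scores are well calibrated, which is itself an open research problem; the weighted objective simply makes explicit the idea that factual mistakes should dominate the training signal.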

This new reality requires an updated mindset for anyone planning to integrate AI into a workflow. We are moving from a phase of awe and exploration to a phase of rigorous engineering and risk mitigation. The 30% failure rate is a loud, clear signal that the era of "plug-and-play" advanced AI is over.

Actionable Insights for Stakeholders

What should businesses and technical leaders do today, knowing that even the best models are unreliable?

- Technical Teams: Prioritize implementing and stress-testing secondary verification pipelines (AI Guardians) immediately for any production model. Do not deploy LLMs in high-consequence decision loops without external fact-checking mechanisms in place.

- Product Leaders: Redefine "success metrics." Success is no longer maximum response quality; it is meeting a predefined, auditable threshold of factual accuracy (e.g., passing at least 95% of internal validation checks).

- Executive Leadership: Budget for increased overhead. Reliable AI deployment requires more compute (for verification) and human oversight (for final auditing). This reliability premium must be factored into ROI calculations.

- Legal & Compliance: Begin internal audits now to map current LLM usage against anticipated regulatory frameworks. Document all steps taken to mitigate hallucination risk, as this will become crucial for demonstrating due diligence.
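The auditable threshold suggested for Product Leaders can be enforced as a simple release gate. The sketch below assumes each internal validation check has already been reduced to a pass/fail boolean; the function name `passes_accuracy_gate` is hypothetical, and the 0.95 default mirrors the example threshold above.

```python
def passes_accuracy_gate(check_results, required_rate=0.95):
    """Return (passed, observed_rate) for a list of boolean validation outcomes."""
    if not check_results:
        raise ValueError("no validation checks were run")
    rate = sum(check_results) / len(check_results)
    return rate >= required_rate, rate

# Hypothetical validation run: 93 of 100 checks passed.
results = [True] * 93 + [False] * 7
passed, rate = passes_accuracy_gate(results)
print(passed, rate)  # prints: False 0.93
```

Logging the observed rate alongside the decision gives Legal and Compliance a record of each release decision, which supports the due-diligence documentation described above.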

The benchmark showing Claude Opus 4.5 failing 30% of the time, despite having the world's information at its fingertips, is not a sign that AI has stopped progressing. Instead, it marks the end of the beginning. We have proven what LLMs *can* do; now we are forced to confront what they cannot do reliably. The next frontier of AI development will not be about generating better prose, but about guaranteeing truth.