The AI Video Arms Race: Why ByteDance's Seedance 2.0 Signals a New Era of Generative Content

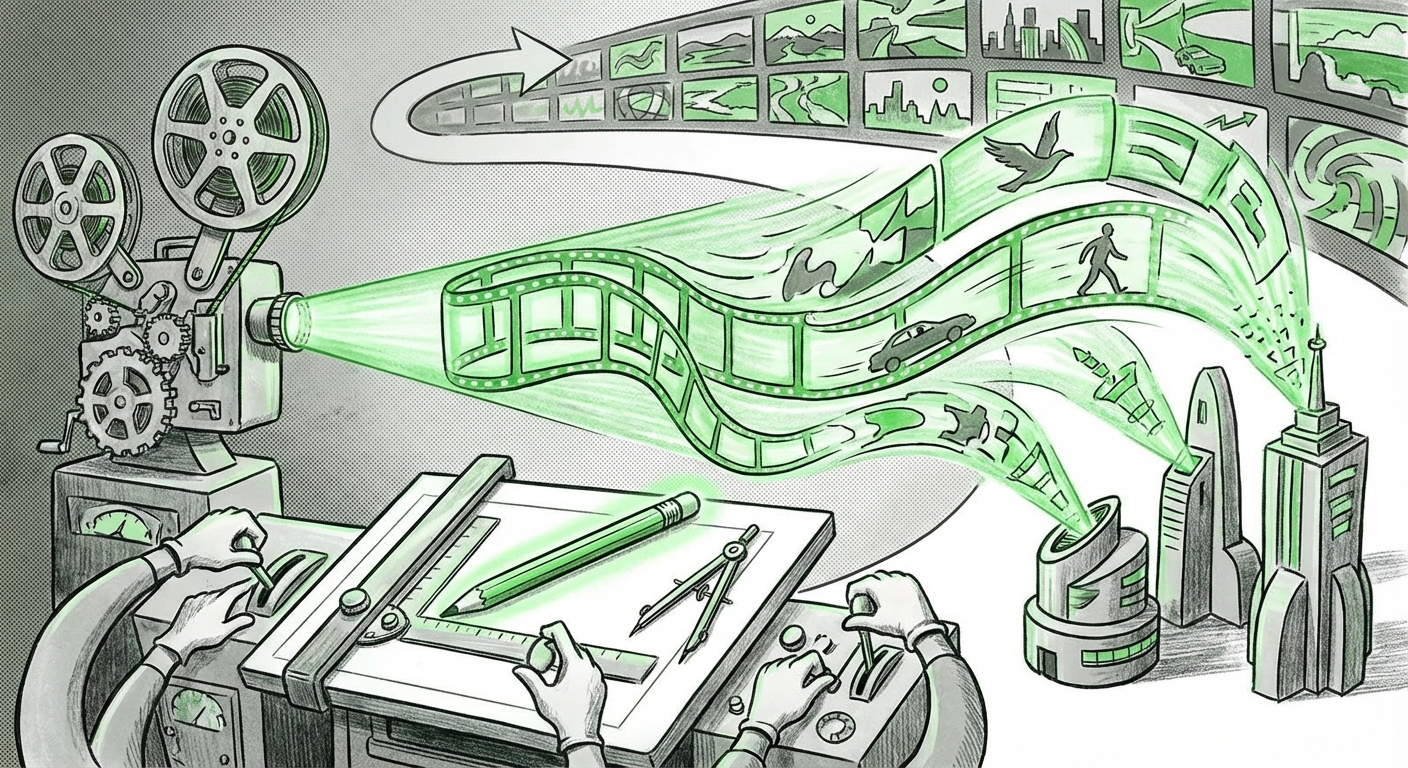

The race to master photorealistic, controllable video generation is no longer a theoretical contest; it is a heated, public technology sprint. With the industry still absorbing the shockwaves from OpenAI’s Sora and Google’s Lumiere, ByteDance, the company behind TikTok, has raised the stakes with **Seedance 2.0**. Initial reports suggest that this latest iteration of its AI video model is not just keeping pace but may be setting new, aggressive benchmarks.

For the layperson, this might sound like an incremental software update. For technology analysts and business leaders, however, it signals a critical inflection point: the cost and complexity of creating high-quality, dynamic synthetic media are falling faster than anticipated. Here is what this acceleration means for the future of AI, content, and reality itself.

The New Battleground: From Static Images to Dynamic Reality

Generative AI conquered still images first (think DALL-E 2 or Midjourney). Video is fundamentally harder. A video is not just a sequence of pictures; it requires *temporal consistency*: objects must look the same from frame to frame, physics must be obeyed, and motion must be fluid and logical. Early models produced flickering, surreal results.
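One crude way to put a number on "flicker" is the average pixel change between consecutive frames. The sketch below assumes grayscale frames stored as nested lists of intensities in [0, 1]; `flicker_score` is a hypothetical helper for illustration, not a standard benchmark metric. It also penalizes legitimate motion, which is why a serious consistency metric would need to account for how objects actually move.

```python
from typing import List

Frame = List[List[float]]  # grayscale frame: rows of pixel intensities in [0, 1]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average absolute per-pixel change between two equally sized frames."""
    total = 0.0
    count = 0
    for row_a, row_b in zip(a, b):
        for pa, pb in zip(row_a, row_b):
            total += abs(pa - pb)
            count += 1
    return total / count


def flicker_score(frames: List[Frame]) -> float:
    """Mean frame-to-frame change across a clip; lower means steadier."""
    if len(frames) < 2:
        return 0.0
    diffs = [mean_abs_diff(frames[i], frames[i + 1]) for i in range(len(frames) - 1)]
    return sum(diffs) / len(diffs)


dark = [[0.0, 0.0], [0.0, 0.0]]
bright = [[1.0, 1.0], [1.0, 1.0]]
print(flicker_score([dark, dark, dark]))            # 0.0: perfectly stable clip
print(flicker_score([dark, bright, dark, bright]))  # 1.0: maximal flicker
```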

The news surrounding Seedance 2.0, together with the advances demonstrated by its peers, suggests that the major labs have cleared many of these foundational hurdles. To analyze ByteDance’s move properly, we must situate Seedance 2.0 within the ongoing competition:

- OpenAI’s Sora: Demonstrated strong world-building and clips of up to a minute, setting a high bar for physical plausibility and complex interactions.

- Google Lumiere: Generates the entire duration of a clip in a single pass with a Space-Time U-Net, rather than synthesizing distant keyframes and filling in between them, aiming for consistency across the whole video and greater control over motion and style.

- ByteDance Seedance 2.0: With reportedly "impressive progress" over its already capable predecessor, Seedance is likely targeting hyper-realism and, critically, *integration* with existing high-volume content pipelines such as TikTok.

This fierce competition drives rapid progress. When one lab demonstrates superior temporal coherence, the others quickly move to close that gap. This dynamic, with major global tech companies benchmarking against each other in public, sharply accelerates innovation. That is the first major takeaway for tech enthusiasts and industry analysts: **The timeline for mature, consumer-ready AI video has just been pulled forward dramatically.**

Decoding the Technological Leap: What Makes 2.0 So Impressive?

To understand *how* ByteDance made this leap, we need to consider the broader technology trends in text-to-video generation. The key breakthroughs lie not just in rendering quality but in model architecture.

1. Spatio-Temporal Modeling Refinement

Older video models treated time as an afterthought. Modern architectures, which Seedance 2.0 likely uses, process the time dimension together with the spatial dimensions, typically through attention layers that operate over both space and time in a compressed latent representation. This means the model doesn't just *draw* the next frame; it *predicts* how objects move and interact over time.

For developers and researchers, this points toward models that approximate physical behavior implicitly, even though they are not explicitly trained on physics data. When ByteDance claims impressive progress, it likely means its rendering of complex motion, such as splashing water or shadows moving realistically across a scene, has become markedly more convincing.
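The idea of attending over time as well as space can be sketched in plain Python using a factorized pattern: spatial attention mixes patches within each frame, then temporal attention lets each patch position exchange information with the same position in every other frame. This is a minimal illustration under stated assumptions, not ByteDance's architecture (which has not been disclosed): queries, keys, and values are the raw patch features with no learned projections, and the "video" is a tiny nested list. Factorizing is one common way to keep cost down, since full joint attention over T frames of S patches compares (T·S)² pairs, while the factorized version compares T·S² + S·T².

```python
import math
from typing import List

Vector = List[float]
Video = List[List[Vector]]  # video[t][s] = feature vector of patch s in frame t


def softmax(scores: List[float]) -> List[float]:
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(queries: List[Vector], keys: List[Vector], values: List[Vector]) -> List[Vector]:
    """Scaled dot-product attention: each query takes a similarity-weighted mix of values."""
    d = len(queries[0])
    out = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        out.append([sum(w * v[j] for w, v in zip(weights, values)) for j in range(d)])
    return out


def spatial_attention(video: Video) -> Video:
    """Within each frame, every patch attends to all patches of that frame."""
    return [attend(frame, frame, frame) for frame in video]


def temporal_attention(video: Video) -> Video:
    """At each spatial position, every frame attends to that patch in all frames."""
    num_frames, num_patches = len(video), len(video[0])
    mixed_tracks = []
    for s in range(num_patches):
        track = [video[t][s] for t in range(num_frames)]  # patch s across all frames
        mixed_tracks.append(attend(track, track, track))
    # Transpose back to the [frame][patch] layout.
    return [[mixed_tracks[s][t] for s in range(num_patches)] for t in range(num_frames)]


def factorized_block(video: Video) -> Video:
    """Spatial attention followed by temporal attention (no learned weights)."""
    return temporal_attention(spatial_attention(video))
```

Because identity projections are assumed, a clip whose frames are identical passes through `temporal_attention` unchanged: each position averages copies of itself. A trained model would learn projection matrices so that attention tracks objects rather than just similar raw features.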

2. Data Fidelity and Proprietary Training Sets

While the specific training data remains confidential, ByteDance has a potentially unmatched advantage: access to the massive, diverse, and constantly refreshed video library generated daily on TikTok. Whereas Sora likely draws on large curated web datasets, ByteDance may be uniquely positioned to train models on short-form, high-impact, vertical video that reflects current human trends.

If Seedance 2.0 excels at generating videos suited to mobile consumption (high contrast, fast cuts, strong visual hooks), that would likely reflect training on its own ecosystem. This proprietary data moat is a critical competitive factor going forward.

3. Controllability and Prompt Adherence

The value of an AI generator depends heavily on how closely it follows instructions. Seedance 2.0 is expected to respect complex prompts with high fidelity: not just "a dog walking," but "a golden retriever wearing a blue vest, walking slowly on wet cobblestones at dusk, viewed from a low angle." If it closes the gap with Sora on this front, Seedance 2.0 would give creatives unprecedented directorial control without their ever touching a camera.
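Part of what makes a prompt like this "directable" is that it decomposes into independent controls: subject, attributes, action, setting, lighting, and camera. The sketch below shows that decomposition for the example prompt. It makes no claim about Seedance 2.0's actual input format; `ShotSpec` and `to_prompt` are hypothetical names, and the output is simply plain text that could be sent to any text-to-video model.

```python
from dataclasses import dataclass


@dataclass
class ShotSpec:
    """Hypothetical structured description of a single shot."""
    subject: str
    attributes: str
    action: str
    setting: str
    lighting: str
    camera: str

    def to_prompt(self) -> str:
        # Order: who/what, how it moves, where, when/light, then camera framing.
        return (
            f"{self.subject} {self.attributes}, {self.action} {self.setting} "
            f"{self.lighting}, viewed from {self.camera}"
        )


shot = ShotSpec(
    subject="a golden retriever",
    attributes="wearing a blue vest",
    action="walking slowly",
    setting="on wet cobblestones",
    lighting="at dusk",
    camera="a low angle",
)
print(shot.to_prompt())
# a golden retriever wearing a blue vest, walking slowly on wet cobblestones at dusk, viewed from a low angle
```

Keeping each control in its own field means a director can change only the camera or only the lighting between takes, which is exactly the kind of change a model with strong prompt adherence should respect.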

The Implications: Redefining Content Creation and Digital Reality

The arrival of highly capable tools like Seedance 2.0 transforms the discussion from "Can we make AI video?" to "What happens now that everyone can?" This shift impacts everyone from studio executives to social media marketers.

For Businesses: The Democratization of High-End Production

The implications for content creation are vast. Previously, producing a 15-second, cinematic-quality advertisement required days of shooting, professional actors, and a substantial budget. Now the barrier to entry is falling rapidly.

Actionable Insight for Business Leaders: Companies should begin experimenting with AI video workflows now. Marketing departments should shift from planning photoshoots to scripting and prompting synthetic video sequences. The competitive advantage will belong to those who can generate several times the volume of polished content at a fraction of the cost, tailored precisely to platforms like TikTok or Reels.

The key shift is that creative ideation, not production capacity, becomes the bottleneck. If your team can generate 50 distinct, high-quality visual concepts in an hour with Seedance 2.0 or a similar tool, your ability to test market interest, iterate on campaigns, and seize fleeting trends improves dramatically.
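Reaching 50 concepts does not require 50 separate ideas: a few creative variables multiply quickly. The sketch below enumerates every combination of three small, purely illustrative lists (5 subjects × 5 settings × 2 camera angles = 50 prompts). It does not model generation time or cost, and nothing in it is specific to Seedance 2.0.

```python
from itertools import product

# Hypothetical creative variables for a campaign; each combination is one concept.
subjects = ["a runner", "a cyclist", "a skateboarder", "a dancer", "a hiker"]
settings = ["on a rooftop", "in a neon-lit alley", "on a beach", "in a forest", "in a subway car"]
cameras = ["a low angle", "a high angle"]


def build_variants(subjects, settings, cameras):
    """Return one prompt per combination of subject, setting, and camera."""
    return [
        f"{subject} {setting}, viewed from {camera}"
        for subject, setting, camera in product(subjects, settings, cameras)
    ]


variants = build_variants(subjects, settings, cameras)
print(len(variants))  # 50
print(variants[0])    # a runner on a rooftop, viewed from a low angle
```

The combinatorial grid is what turns ideation into the bottleneck: adding a single extra setting adds ten more concepts, so the hard part becomes deciding which variables are worth testing.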

For Creatives: Evolution, Not Obsolescence

For artists, designers, and VFX professionals, this technology is both a threat and an opportunity. While tasks focused on rendering basic scenes may become automated, demand for skilled *directors* is likely to grow. Someone must still understand cinematic language, pacing, lighting, and narrative structure to guide the AI effectively.

The technical skills will shift from operating physical cameras and complex editing suites to mastering prompt engineering, understanding how models are configured and fine-tuned, and performing advanced post-processing on AI outputs so that they meet professional quality standards.

The Dark Side: The Escalation of Synthetic Reality Risks

We cannot analyze such powerful tools without confronting the risks. The more realistic and accessible AI video becomes, the more potent the threat of deepfakes and synthetic misinformation. If ByteDance deploys Seedance 2.0 widely, the volume of hyper-realistic fabricated videos designed to sway opinion or sow chaos could rise sharply.

This demands immediate focus on technological countermeasures: detection algorithms, watermarking, provenance standards such as C2PA (Content Credentials), and robust verification protocols. The societal challenge is to harness this creative power while building defenses against its misuse. For policymakers and tech ethicists, this is a top priority.
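The core idea behind provenance standards is to bind a signed claim about how a file was made to a cryptographic hash of that exact file, so that any edit to the bytes or to the claim is detectable. The sketch below is a toy illustration of that idea only, not the C2PA format: real Content Credentials use certificate-based digital signatures, whereas here an HMAC with a shared demo key stands in, and the manifest field names are hypothetical.

```python
import hashlib
import hmac
import json

# Stand-in secret; real C2PA signing uses certificate-based keys, not a shared secret.
SIGNING_KEY = b"demo-key-not-for-production"


def make_manifest(video_bytes: bytes, generator: str) -> dict:
    """Bind a claim about how the asset was made to a hash of its exact bytes."""
    claim = {
        "asset_sha256": hashlib.sha256(video_bytes).hexdigest(),
        "generator": generator,
        "ai_generated": True,
    }
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify(video_bytes: bytes, manifest: dict) -> bool:
    """Check the signature, then check that the asset still matches the recorded hash."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the claim itself was altered
    return manifest["claim"]["asset_sha256"] == hashlib.sha256(video_bytes).hexdigest()


clip = b"stand-in for encoded video bytes"
manifest = make_manifest(clip, "example-video-model")
print(verify(clip, manifest))              # True
print(verify(clip + b"edited", manifest))  # False: bytes no longer match the hash
```

A manifest like this only helps if it travels with the file; once metadata is stripped, the claim is simply gone. That is why provenance is usually discussed alongside watermarking and detection rather than as a replacement for them.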

Looking Ahead: What Comes After Seedance 2.0?

Where do we go from here? The current focus is on higher fidelity and longer clips. The next major phase is likely to center on interactivity and real-time synthesis.

Imagine a video game world that reshapes itself instantly in response to your voice commands, or an interactive educational tool in which historical figures answer student questions with custom generated video clips. Because ByteDance is deeply invested in real-time engagement platforms, Seedance 2.0 may be well positioned to lead this convergence.
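"Real-time" has a concrete meaning here: to stream without stalling, a generator must produce each frame at least as fast as it is displayed, so the per-frame budget is 1000 / fps milliseconds. The sketch below assumes steady-state streaming and ignores start-up latency, buffering, and network delay; the function names are illustrative, and no generation timings for Seedance 2.0 are given in the reporting, so `gen_ms_per_frame` would have to be measured.

```python
def frame_budget_ms(fps: float) -> float:
    """Maximum time available to produce each frame for real-time playback."""
    return 1000.0 / fps


def is_real_time(gen_ms_per_frame: float, fps: float) -> bool:
    """True if steady-state generation keeps up with playback at the given frame rate."""
    return gen_ms_per_frame <= frame_budget_ms(fps)


for fps in (24, 30, 60):
    print(fps, round(frame_budget_ms(fps), 1))
# 24 41.7
# 30 33.3
# 60 16.7
```

At 24 fps, a model that needs even a few seconds per frame is orders of magnitude away from interactive use, which is why real-time synthesis is a distinct engineering phase rather than a side effect of better quality.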

The future of AI video is not just about generating passive content; it’s about generating responsive, dynamic, digital scaffolding for our interactions with the world. The milestones achieved by ByteDance underscore that the foundational engineering required for true digital immersion is rapidly maturing.

Ultimately, the release of Seedance 2.0 confirms that the generative AI landscape is a highly competitive arena driven by global technology giants. While the technology promises a golden age for content creators who can wield its power creatively, it demands a parallel commitment to ethical governance and robust verification systems. The age of synthetic video is here, and it’s moving faster than anyone predicted.

Contextual analysis informed by ongoing industry reporting on AI video models, including comparisons between OpenAI Sora, Google Lumiere, and advancements in spatio-temporal AI modeling.

Original report: "Bytedance shows impressive progress in AI video with Seedance 2.0," The Decoder, https://the-decoder.com/bytedance-shows-impressive-progress-in-ai-video-with-seedance-2-0/