The Era of Ad-Supported AI: Why ChatGPT’s New Model Signals the True Cost of Generative Intelligence

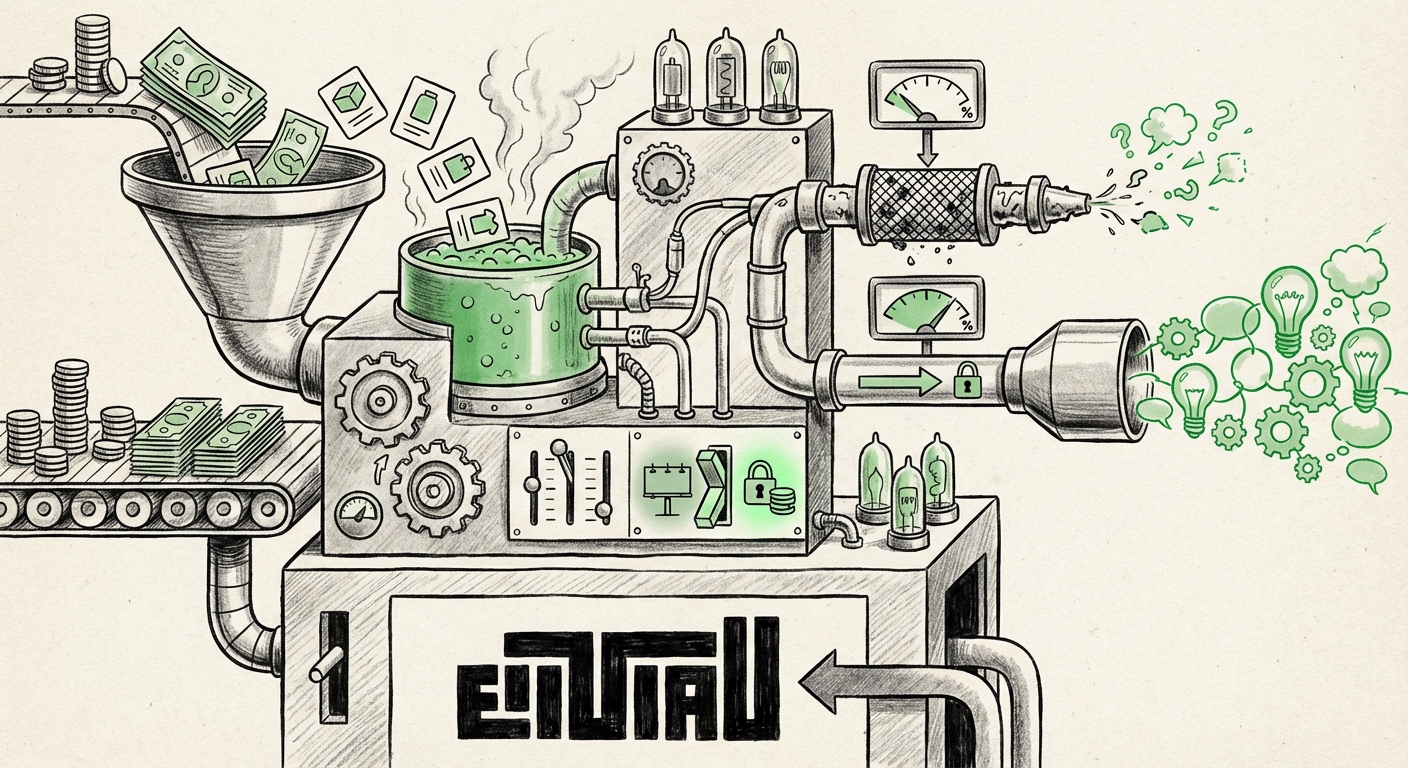

The world of Artificial Intelligence has always been defined by rapid innovation, followed by the inevitable reality check of sustainable business operations. For years, generative AI tools like ChatGPT felt like an endless, free laboratory—a place where access to powerful, world-changing technology was provided at little to no direct cost to the end-user. That era, at least for the majority of users, appears to be over.

The recent announcement that OpenAI is introducing advertisements for its free and "Go" tier users, while allowing them to opt out in exchange for stricter daily message limits, is more than just a minor feature update. It represents a pivotal, perhaps irreversible, shift in the business model of foundational AI. This development forces us to confront the fundamental economics of LLMs, the fragile state of the "free internet" model when applied to compute-intensive technology, and what this means for how society accesses intelligence itself.

The Unseen Engine: Confronting the True Cost of Inference

To understand the introduction of ads, one must first understand the staggering expense of running a service like ChatGPT. This isn't like hosting a standard website; it means processing language in real time on clusters of GPUs, some of the most expensive processors on the planet.

The core issue is the cost of inference. When you send a prompt to ChatGPT, the model must compute the response one token (roughly a word fragment) at a time, so every query consumes significant computational power. If we imagine the goal of providing cutting-edge AI to billions of people for free, the expenditures quickly balloon past hundreds of millions, if not billions, of dollars annually.
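The scale argument above can be made concrete with a back-of-envelope calculation. The sketch below is illustrative only: the function name and every input (query volume, response length, serving cost per million tokens) are hypothetical assumptions, not figures reported by OpenAI.

```python
def annual_inference_cost(daily_queries: float,
                          tokens_per_response: float,
                          cost_per_million_tokens: float) -> float:
    """Rough yearly serving cost in dollars for generated tokens only."""
    daily_tokens = daily_queries * tokens_per_response
    daily_cost = daily_tokens / 1_000_000 * cost_per_million_tokens
    return daily_cost * 365


# Hypothetical inputs, chosen only to show how quickly the total grows.
estimate = annual_inference_cost(
    daily_queries=1_000_000_000,   # assumed: one billion queries per day
    tokens_per_response=500,       # assumed: average response length
    cost_per_million_tokens=2.0,   # assumed: serving cost in dollars
)
print(f"${estimate:,.0f} per year")  # $365,000,000 per year
```

Even under these modest assumptions the total lands in the hundreds of millions of dollars per year, and it scales linearly with each input.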

This economic reality is the first major signal we must heed. Given what it costs to run large language model inference, sustained free access at this scale is nearly impossible without a revenue stream. For the free user base, OpenAI is effectively choosing the path of least resistance for monetization: advertising. It’s the tried-and-true method that has funded everything from search engines to social media, proving that when the product is free, *you* are the product.

The Trade-Off: Ads vs. Usage Caps

The genius, and potential ruthlessness, of OpenAI’s current approach lies in the *choice* offered to the user. You can accept the ads, maintaining a relatively high message limit, or you can opt out to maintain a cleaner experience, but you will be heavily restricted in your usage. This dynamic brilliantly segments the user base:

- The Ad-Tolerant Majority: Users who rely on AI for casual, everyday tasks (checking emails, quick brainstorming) will likely tolerate the ads to maintain high volume access.

- The Privacy/UX-Sensitive Minority: Users who need high capacity (developers, heavy researchers) or who are deeply concerned about the data privacy implications of ad tracking are strongly incentivized to upgrade to a paid subscription (e.g., Plus).

By linking the opt-out directly to a reduced usage cap, OpenAI uses the cap itself as a powerful, if subtle, driver toward paid conversion. It’s a sophisticated application of demand management rooted in economic necessity.

The Competitive Landscape: Setting the Industry Standard

OpenAI is rarely content to simply follow; they often set the pace. The introduction of ads provides a critical data point for understanding how LLM providers monetize their free tiers.

If competitors like Google Gemini or Anthropic Claude react by *not* adopting ads, it suggests they are relying on deeper pockets (in Google's case, integrating AI into search and cloud services) or that they believe the short-term reputational hit of ads outweighs the revenue gain. Conversely, if competitors follow suit, it confirms that the ad-supported model is becoming the default cost-sharing mechanism for consumer-facing LLMs.

For businesses, this signals a maturity in the market. No longer can companies assume they can simply iterate on free consumer access indefinitely. Any organization looking to build a service powered by commercial LLMs must now bake significant operational expenditure (OpEx) budgeting into their forecasts from day one. The age of "try before you scale for free" is rapidly receding.

The Trust Factor: UX, Privacy, and the Black Box

OpenAI’s promise is clear: the ads will *not* influence the responses. This is crucial because LLMs are fundamentally different from traditional search engines where relevance is easily measured. With AI, trust centers on the integrity of the reasoning process.

The impact of ads on user trust in AI tools is sensitive territory. Users trust ChatGPT for tasks ranging from writing critical business plans to helping children with homework. Introducing advertising inherently raises questions:

- Data Use: Will the context of my conversation now be leveraged for better ad targeting, even if the direct output remains clean?

- Subtle Bias: Can an advertiser influence the *placement* or *framing* of recommendations without directly altering the factual response? For example, if a user asks for "the best new electric car," does the system subtly prioritize one advertised manufacturer?

While OpenAI insists on a clean separation, the psychological effect of advertising in an environment designed for pure utility can erode user confidence. For professional users, this might be the tipping point that forces a subscription, validating the initial hypothesis about segmentation.

Segmenting the Digital Future: Who Gets the Best AI?

Perhaps the most profound implication of this monetization shift relates to digital equity and access. When considering the future of free consumer AI access, we are examining whether AI will follow the path of social media (free, ad-supported, engagement-maximized) or the path of premium software (subscription-gated, high-fidelity service).

The move to ads suggests LLM providers are attempting a hybrid approach, but the usage caps for opt-outs heavily favor the subscription model for power users. This heralds a potential future where access to the *fastest, most capable, and most reliable* AI tools becomes stratified based on willingness and ability to pay.

Implications for Businesses and Innovation

For businesses, this creates a tiered service reality:

- The Free Experimenter: Small teams or individuals can continue to prototype using the ad-supported tier, accepting lower limits and the presence of external data collection/ads.

- The Production Powerhouse: Any serious enterprise integration, R&D pipeline, or customer-facing application *must* be built on paid subscriptions or API credits, ensuring predictable performance and no external distractions.

This stratification is healthy for OpenAI's revenue but requires a strategic adjustment for smaller innovators who relied on frictionless, high-volume free access for initial development.

Actionable Insights for the AI-Powered Future

The introduction of ads and usage limits is not a temporary setback; it is the foundation of the next generation of AI infrastructure funding. Here are the key actionable insights for navigating this new landscape:

1. Re-evaluate Compute Budgeting Immediately

If your business relies on interactions with models like GPT-4, stop planning around "free" access for non-production work. Start modeling costs based on API pricing for essential workflows now. The free tier is best viewed as a volatile sandbox, not a stable staging ground.
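As a starting point for that modeling, here is a minimal sketch of a per-workflow budget estimate. The `Workflow` fields, the example workload, and both prices are hypothetical placeholders; substitute the current per-token rates published by whichever provider you use.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    requests_per_month: int
    input_tokens: int    # average prompt tokens per request
    output_tokens: int   # average completion tokens per request


def monthly_cost(w: Workflow, input_price: float, output_price: float) -> float:
    """Estimated dollars per month; prices are dollars per 1M tokens."""
    input_cost = w.requests_per_month * w.input_tokens / 1_000_000 * input_price
    output_cost = w.requests_per_month * w.output_tokens / 1_000_000 * output_price
    return input_cost + output_cost


# Hypothetical workload and prices, for illustration only.
drafts = Workflow("support-drafts", requests_per_month=20_000,
                  input_tokens=800, output_tokens=300)
cost = monthly_cost(drafts, input_price=3.0, output_price=12.0)
print(f"{drafts.name}: ${cost:,.2f} per month")  # support-drafts: $120.00 per month
```

Pricing input and output tokens separately matters because providers commonly charge different rates for each, so a workflow with long responses can cost far more than its request count suggests.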

2. Focus on Multi-Modal & Open Source Alternatives

If ad tolerance is low, businesses should aggressively explore alternatives. Investigate open-source models (like Meta’s Llama variants) that can be self-hosted, eliminating per-request API fees in exchange for internal hardware and maintenance costs. Furthermore, look at how competitors package their non-text services (image, video generation) to see if they offer better value propositions for specific tasks.
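The self-hosting trade-off comes down to a break-even volume: the monthly token count at which fixed hardware and maintenance costs equal what the same traffic would cost through a paid API. The sketch below assumes a simple linear cost model, and every number in it is hypothetical.

```python
def break_even_tokens_per_month(fixed_monthly_cost: float,
                                api_price_per_million: float,
                                self_hosted_marginal_per_million: float = 0.0) -> float:
    """Monthly token volume above which self-hosting is cheaper than the API."""
    saving_per_million = api_price_per_million - self_hosted_marginal_per_million
    if saving_per_million <= 0:
        raise ValueError("Self-hosting never breaks even at these prices.")
    return fixed_monthly_cost / saving_per_million * 1_000_000


# Hypothetical figures: $3,000/month for hardware and upkeep, a $5 API price
# and a $1 marginal (power, bandwidth) cost per million tokens.
volume = break_even_tokens_per_month(3000.0, 5.0, 1.0)
print(f"{volume:,.0f} tokens per month")  # 750,000,000 tokens per month
```

Below that volume the API is the cheaper option under these assumptions. A real comparison would also need to account for engineering time, utilization, and any quality gap between models.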

3. Demand Transparency in Ad Integration

For consumer-facing products, be prepared to clearly articulate *why* you chose a paid tier over an ad-supported one. If you are building trust, users will appreciate that you invested in the ad-free experience. If you are targeting a mass market, understand that ad acceptance may be higher than tech pundits predict.

4. Prepare for Competitive Pricing Wars

The monetization game has begun. Expect fierce competition on subscription pricing, feature bundling, and API access tiers as companies battle over who can offer the best balance between cost and performance, pushing the boundaries established by this latest OpenAI maneuver.

Conclusion: From Playground to Marketplace

The arrival of ads on ChatGPT’s free tier marks a critical inflection point: Generative AI has graduated from a technological marvel to a fully commercialized utility. The promise of infinite, free intelligence has met the hard reality of silicon costs and operational scale.

While some users will lament the loss of the pristine, ad-free environment, this development ensures the necessary capital flow to continue pushing the boundaries of what these models can achieve. The future of AI will not be uniformly free; it will be segmented, negotiated, and funded—either through user data exchanged for access, or through direct payment for quality and reliability. As consumers and builders, our immediate task is to adapt to this new financial reality, strategically choosing which tier of intelligence we are willing to live and build within.