ChatGPT's Resurgence and Next-Gen Model: Decoding OpenAI's Strategic Leap in the AI Arms Race

The landscape of Artificial Intelligence is defined by constant motion, yet certain signals stand out as genuine indicators of tectonic shifts. Recent reports confirming that ChatGPT is growing again at double-digit rates, coupled with the imminent release of a new foundational model this week, mark such a moment. For months, industry observers speculated about user fatigue, the "trough of disillusionment" post-hype cycle, and aggressive competition from rivals like Google and Anthropic.

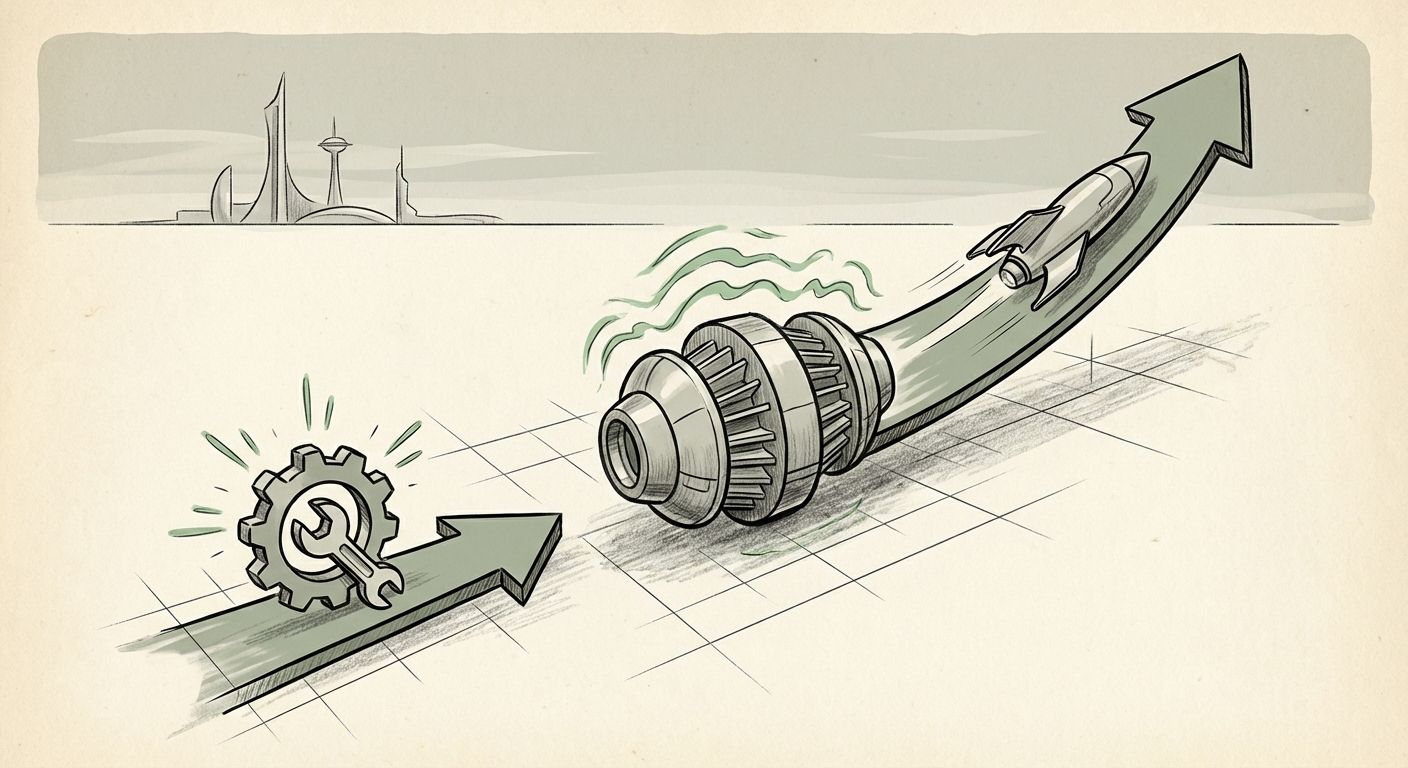

This renewed consumer momentum, alongside the staggering 50 percent growth reported for the specialized coding product, Codex, suggests that OpenAI has navigated the competitive turbulence effectively. This isn't just a sign of survival; it's a strategic pivot demonstrating the enduring power of their core products while simultaneously accelerating specialization. As an AI technology analyst, I see this as a clear signal that the next phase of AI development—marked by greater capability and deeper integration—is already underway.

The Context: Surviving the AI Hype Correction

To understand the significance of double-digit growth, we must first understand the preceding challenge. After the initial explosion in late 2022 and early 2023, many consumer-facing AI chatbots faced severe retention issues. Users experimented, but many failed to find sustained daily utility outside of novelty tasks. This market pause caused analysts to question if a generalized Large Language Model (LLM) interface could maintain broad appeal against specialized vertical solutions.

The context around this news, namely user retention and overall market share, suggests that OpenAI has countered this trend. Whether through product enhancements, better integration into business workflows, or simply the continued pull of being "the original," the numbers suggest the core ChatGPT offering remains sticky. This stability is the bedrock upon which their next major launch is being built.

For the non-technical reader, think of it like this: after everyone bought their first smartphone, many stopped trying out new apps every day. OpenAI is showing that its core product is now useful enough that millions are coming back regularly, not just to play, but to use it for real work.

The Imminent Leap: Deciphering the New Model

The most electrifying piece of information is the planned new model release this week. In the fast-moving world of foundation models, a "new model" can mean anything from minor performance tuning to a foundational architectural overhaul. Based on recent analyst commentary and the trajectory established by GPT-4o (which focused heavily on speed and multimodality), we can anticipate this release will target key areas of weakness or aggressively push forward on known research frontiers.

Anticipated Capabilities and Market Impact

The next iteration will likely aim for substantial improvements in several areas:

- Advanced Reasoning: Moving beyond pattern matching to exhibit deeper, more reliable chain-of-thought reasoning, crucial for complex problem-solving in science, law, and engineering.

- Massive Context Windows: The ability to process and remember far more information in a single conversation or document set. This transforms the model from a conversational partner into a true digital analyst capable of summarizing entire corporate databases or codebases.

- Native Multimodality: OpenAI described GPT-4o as a single model trained end to end across text, vision, and audio, replacing the earlier pipeline of separate models. The new model might extend that native training to video as well, leading to stronger cross-modal understanding (e.g., describing the physics occurring in a video clip).

This upcoming model release is not just about better performance; it’s about widening the capability moat between OpenAI and its competitors. If the new model offers a demonstrable, step-function improvement in reasoning or speed, it justifies the renewed user growth and puts immediate pressure on rivals to counter-announce.

For business strategists: This isn't just an upgrade; it’s a platform shift. Businesses relying on current LLM performance must immediately start modeling how a significant leap in reasoning power will change their operational efficiencies and potentially obsolete current AI-powered workflows.

The Specialization Success Story: Codex and Vertical AI

While the consumer-facing ChatGPT drives the headlines, the 50% growth in Codex is arguably the more profound indicator of AI’s future monetization path. Codex is OpenAI’s family of models specialized for code generation and comprehension. Its rapid ascent signals a crucial trend: the future isn't just about one giant, general-purpose AI; it’s about powerful foundation models that can be finely tuned for specific, high-value tasks.

The ROI of Specialized AI

Software development is a measurable, high-cost sector. When an AI tool like Codex (or its successor integrated into developer tools) can demonstrably increase a developer’s output—whether through faster debugging, boilerplate generation, or translating legacy code—the return on investment (ROI) is immediate and quantifiable. This robust growth suggests that enterprises are moving past experimenting with AI and are now integrating it where it directly impacts the bottom line.
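To make "quantifiable" concrete, the arithmetic can be sketched in a few lines of Python. This is a minimal illustration, not a reported result: the function name `assistant_roi` and every input (team size, hourly cost, hours saved, seat price) are hypothetical placeholders that a team would replace with its own measurements.

```python
def assistant_roi(developers, hourly_cost, hours_saved_per_week,
                  seat_cost_per_month, weeks_per_month=4.33):
    """Return (monthly_savings, monthly_cost, roi) for a coding-assistant rollout.

    All inputs are assumptions supplied by the caller; nothing here is
    measured data. ROI is expressed as a ratio: (savings - cost) / cost.
    """
    if seat_cost_per_month <= 0 or developers <= 0:
        raise ValueError("developers and seat_cost_per_month must be positive")

    # Value of developer time recovered each month.
    monthly_savings = (developers * hourly_cost
                       * hours_saved_per_week * weeks_per_month)
    # Subscription spend each month.
    monthly_cost = developers * seat_cost_per_month
    roi = (monthly_savings - monthly_cost) / monthly_cost
    return monthly_savings, monthly_cost, roi


if __name__ == "__main__":
    # Hypothetical team: 10 developers, $100/hour, 1 hour saved per week,
    # $100 per seat per month, using a flat 4-week month for readability.
    savings, cost, roi = assistant_roi(10, 100.0, 1.0, 100.0, weeks_per_month=4.0)
    print(f"savings={savings:.0f} cost={cost:.0f} roi={roi:.1f}x")
```

The point of the sketch is that the result is driven almost entirely by `hours_saved_per_week`, which is the one input that has to be measured rather than assumed.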

This specialized success also raises the competitive stakes for offerings like GitHub Copilot (a Microsoft product that has itself been built on OpenAI models) and for emerging competitors. The growth validates the market segment, suggesting that developers are willing to pay for tools that genuinely make them better at their core job. As adoption data for coding assistants accumulates, we expect to see this correlation between specialization and high growth repeated across other professional domains like legal drafting and financial modeling.

In simpler terms: while everyone likes playing with ChatGPT, companies are investing heavily in tools that make their engineers measurably faster at writing code. (The 50% figure refers to Codex's growth, not to a productivity gain.) This is where the real money and long-term dependence on AI are being built.

Implications for the Future of Technology and Society

These developments—renewed mainstream adoption and specialization acceleration—have profound implications across the technology stack and society at large.

1. The Velocity of Deployment

The rapid turnaround time between major announcements (like the GPT-4o update and this anticipated new model) is forcing industries to adopt a "constant state of readiness." Businesses can no longer afford multi-year planning cycles for AI integration. The pace demands agility. Those who wait for the "perfect" stable model will find themselves perpetually playing catch-up.

2. The Enterprise AI Stack Bifurcation

We are seeing a clear split emerge:

- The Front Door (ChatGPT): Remains vital for general knowledge access, idea generation, and mainstream familiarity. It drives the top-of-funnel interest.

- The Engine Room (Codex/Specialized Models): Drives core operational efficiency, automation, and competitive advantage in specific industries. Enterprise budgets are increasingly prioritizing these specialized deployments.

This bifurcation suggests future platform dominance will require mastery of both: a compelling, accessible general interface *and* a powerful suite of finely-tuned vertical tools.

3. Competition and Open Source

OpenAI's moves will inevitably provoke reactions from competitors like Google (Gemini), Anthropic (Claude), and the surging open-source community utilizing Meta’s Llama architecture. If the new OpenAI model establishes a significant lead in reasoning benchmarks, we will see a renewed focus from open-source developers on catching up in areas that matter most to enterprises, such as data security and deployment flexibility.

Actionable Insights for Leaders Today

How should technology leaders and business owners react to this confluence of strong adoption and impending capability upgrades?

For Developers and Engineering Leaders:

Action: Audit Your Toolchain for Specialization. Don't assume your generalized LLM subscription covers your specialized needs. If you are a coding team, actively benchmark your current AI assistant against the latest capabilities of Codex/GPT offerings for code review and testing. Look for concrete metrics on time saved, not just qualitative feedback.
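One way to turn "concrete metrics on time saved" into something a team can run is sketched below. It assumes you have timed the same set of tasks twice, once without and once with the assistant; the function name `time_saved_summary` and the sample timings are hypothetical, and a real benchmark would also need to control for task order and developer familiarity.

```python
from statistics import median


def time_saved_summary(baseline_minutes, assisted_minutes):
    """Summarize paired task timings (same tasks, without vs. with an assistant).

    Both lists must be the same length and ordered by task.
    """
    if not baseline_minutes or len(baseline_minutes) != len(assisted_minutes):
        raise ValueError("need two non-empty lists of equal length")

    # Positive difference means the assisted run was faster.
    diffs = [b - a for b, a in zip(baseline_minutes, assisted_minutes)]
    return {
        "median_minutes_saved": median(diffs),
        "percent_time_saved": 100.0 * sum(diffs) / sum(baseline_minutes),
        "tasks_faster": sum(1 for d in diffs if d > 0),
        "tasks_total": len(diffs),
    }


if __name__ == "__main__":
    # Hypothetical timings for three code-review tasks, in minutes.
    baseline = [60, 40, 100]
    assisted = [30, 40, 80]
    print(time_saved_summary(baseline, assisted))
```

Reporting the median alongside the overall percentage helps keep one unusually slow or fast task from dominating the conclusion.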

For Product Managers and Innovation Leads:

Action: Prepare for Contextual Depth. Assume the next model can handle far larger datasets in context. Begin prototyping use cases that were previously impractical due to token limits, such as giving an internal AI assistant an entire year's worth of internal documentation or customer service transcripts in a single prompt so it can answer questions directly from that material.
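A quick feasibility check for such a prototype is to estimate whether a document set fits in a given context window at all. The sketch below is a rough approximation under stated assumptions: it uses the common rule of thumb of about four characters per token for English text rather than a real tokenizer, and the window size and answer reserve are hypothetical values you would replace with the limits of whichever model you use.

```python
import math


def estimate_tokens(text, chars_per_token=4.0):
    """Rough token estimate; ~4 chars/token is a heuristic, not a tokenizer."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(documents, context_window_tokens,
                    reserved_for_answer=2000, chars_per_token=4.0):
    """Return (estimated_tokens, fits) for a list of document strings.

    reserved_for_answer leaves room for the question and the model's reply.
    """
    total = sum(estimate_tokens(doc, chars_per_token) for doc in documents)
    budget = context_window_tokens - reserved_for_answer
    return total, total <= budget


if __name__ == "__main__":
    # Hypothetical corpus: two documents of 4,000 and 8,000 characters.
    docs = ["a" * 4000, "b" * 8000]
    for window in (4000, 8000):  # hypothetical window sizes, in tokens
        total, ok = fits_in_context(docs, window)
        print(f"window={window}: ~{total} tokens, fits={ok}")
```

If the estimate lands near the budget, it is worth re-checking with the model's actual tokenizer before committing to a design, since real token counts vary with language and formatting.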

For Executive Leadership and Investors:

Action: Re-evaluate AI Budget Allocation. The renewed growth confirms that GenAI is moving from exploratory phase to essential infrastructure. Ensure your budget reflects this by allocating significant resources not just to purchasing API access, but to the internal teams responsible for securely integrating these specialized tools (like Codex) into critical business processes.

Conclusion: The Accelerating Cycle of Innovation

The message delivered by OpenAI's recent performance indicators is clear: the AI development cycle is not slowing down; it is simply becoming more sophisticated. The resurgence of ChatGPT indicates that even in a crowded market, delivering continuous, high-value utility keeps the user base engaged. At the same time, the rapid growth of Codex suggests that specialized applications are the most immediate route to enterprise ROI.

The upcoming model launch is the exclamation point on this period of strategic consolidation. It represents an attempt to redefine the performance ceiling before competitors can fully stabilize their offerings. As analysts, we must track this new release not just for its benchmarks, but for how it empowers specialized tools like Codex to move from useful productivity enhancers to indispensable, mission-critical components of the global digital economy. The era of foundational capability leaps is upon us once again, demanding vigilance and immediate strategic action from all participants.