The Dreamer Trilogy: How AI Learned to Imagine and Why It Changes Everything for Robotics and Planning

Artificial Intelligence is fundamentally about creating systems that can understand, predict, and act upon the world around them. For years, many of the most effective AI agents, including those that mastered complex video games, relied on large-scale trial and error, an approach known in the field as Model-Free Reinforcement Learning (MFRL): the agent learns which actions pay off directly from experience, without an explicit model of how the world works. However, these systems are often sample-inefficient; they need enormous numbers of interactions, many of them spent exploring poor decisions, before they perform well.

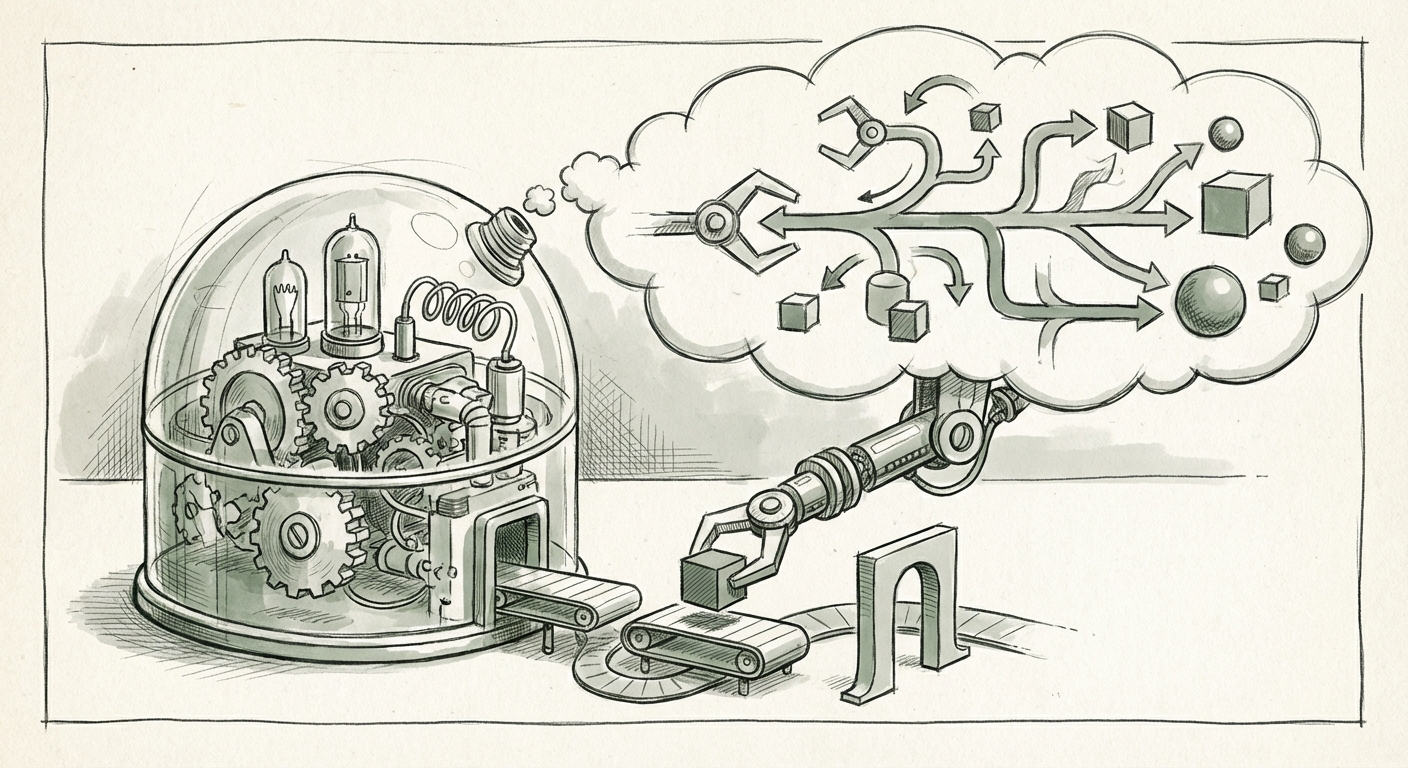

Enter the concept of the World Model. If an AI agent could learn, from its real experience, an internal, compressed, digital simulation of its environment (a "dream world"), it could practice millions of scenarios internally while needing far fewer interactions with the real world. This is the promise of Model-Based Reinforcement Learning (MBRL). The Dreamer Trilogy (Dreamer, DreamerV2, and DreamerV3), developed by Danijar Hafner and colleagues at Google and DeepMind, didn't just improve MBRL; it made model-based agents competitive with, and on many benchmarks better than, leading model-free methods, marking a profound shift in how AI learns.

The Leap: From Prediction to Imagination

To appreciate the Dreamer breakthroughs, we must first understand the core challenge. A World Model needs to predict what happens next. If an AI is controlling a robot arm, it needs to predict, "If I move my gripper *here*, the block will fall *there*." Early world models tried to predict raw sensory data (like the next few pixels on a screen), which is incredibly difficult and often leads to models that are excellent at predicting small details but terrible at long-term planning.

The Dreamer innovation, as highlighted in analyses of "The Sequence Knowledge #804," was to stop rolling predictions forward in pixel space and instead learn a compact, abstract summary of the world: a latent space. This latent space holds the essential information needed for decision-making, such as where the objects are and what their properties are. Dreamer still reconstructs images during training, but only as a learning signal that shapes the latent representation; the AI then "dreams" entirely within this compressed space, without generating any pixels.

What this means in simple terms: Imagine trying to plan a chess game by predicting the exact shade of wood grain on the board for the next ten moves versus predicting just the position of the pieces. Dreamer chose the latter—it learned the *rules* and *semantics* of the game, not the visual noise.
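To make "dreaming in latent space" concrete, here is a deliberately tiny, untrained sketch. It is not Dreamer's architecture (which uses a convolutional encoder and a recurrent state-space model); the linear maps, the 8-dimensional latent size, and the names `encode`, `step`, `reward`, and `imagine` are all hypothetical. The point it shows: a 12,288-value camera frame is encoded once, and every imagined step afterwards operates only on the small latent vector.

```python
import math
import random

# Hypothetical toy world model: every weight is a random placeholder, not a trained network.
OBS_DIM = 64 * 64 * 3  # one flattened 64x64 RGB frame: 12,288 numbers
LATENT_DIM = 8         # assumed size of the compact latent state

rng = random.Random(0)

def random_matrix(rows, cols, scale):
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]

W_enc = random_matrix(LATENT_DIM, OBS_DIM, 1.0 / math.sqrt(OBS_DIM))  # "encoder"
W_dyn = random_matrix(LATENT_DIM, LATENT_DIM + 1, 0.3)  # "dynamics"; +1 input for the action
w_rew = [rng.gauss(0.0, 1.0) for _ in range(LATENT_DIM)]  # "reward head"

def matvec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]

def encode(obs):
    """Compress a raw observation into a latent state (applied to real data only)."""
    return matvec(W_enc, obs)

def step(z, action):
    """Predict the next latent state from the current latent state and an action."""
    return [math.tanh(x) for x in matvec(W_dyn, z + [action])]

def reward(z):
    """Predict the reward of a latent state."""
    return sum(a * b for a, b in zip(w_rew, z))

def imagine(z, actions):
    """Roll the model forward in latent space only: no pixels are generated."""
    states, rewards = [], []
    for a in actions:
        z = step(z, a)
        states.append(z)
        rewards.append(reward(z))
    return states, rewards

obs = [rng.random() for _ in range(OBS_DIM)]  # stand-in for one camera frame
z0 = encode(obs)
states, rewards = imagine(z0, actions=[1.0, -1.0, 0.5, 0.0, 1.0])
print(len(obs), len(z0), len(states))  # 12288 8 5
```

In a real agent these maps would be neural networks trained on replayed experience; the structure (encode once, then step in latent space) is what carries over.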

Corroboration 1: Situating Dreamer in the RL Landscape

The Dreamer architecture didn't emerge in a vacuum. It builds on earlier latent world models such as PlaNet and competes directly with other powerful model-based systems. To gauge its impact, we look at how it stacks up against contemporary giants like MuZero. MuZero is never given the rules of its environment (often a board or video game); it learns a model that predicts rewards, values, and policies, and plans at decision time with Monte Carlo tree search. Dreamer instead learns a general-purpose latent dynamics model, uses it to imagine trajectories, and trains its policy on those imagined trajectories, so acting requires no search at all.
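The difference between planning at decision time and training a policy in imagination can be sketched on a hypothetical 1-D task whose model is known. Exhaustive lookahead stands in here for MuZero's Monte Carlo tree search (MuZero itself uses MCTS, not brute force), and `amortized_policy` is hand-written to stand in for a policy that a Dreamer-style agent would have learned from imagined rollouts.

```python
import itertools

# Hypothetical learned model of a 1-D task: the state is a position and the goal is 0.
ACTIONS = (-1, 0, 1)

def model_step(state, action):
    next_state = state + action
    return next_state, -abs(next_state)  # (predicted next state, predicted reward)

def plan_at_decision_time(state, depth=3):
    """Search-based control (a simplified stand-in for MCTS): evaluate every action
    sequence in the model and return the first action of the best one."""
    best_return, best_first = float("-inf"), None
    for seq in itertools.product(ACTIONS, repeat=depth):
        s, total = state, 0.0
        for a in seq:
            s, r = model_step(s, a)
            total += r
        if total > best_return:
            best_return, best_first = total, seq[0]
    return best_first

def amortized_policy(state):
    """Dreamer-style control: the imagination cost was paid during training,
    so acting is a single cheap function call."""
    return -1 if state > 0 else (1 if state < 0 else 0)

for s in (3, -2, 0):
    print(s, plan_at_decision_time(s), amortized_policy(s))
```

Both choose the same actions here, but the planner evaluates 27 imagined sequences every time it acts, while the policy answers in one call.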

The Dreamer papers report that learning behaviors from imagined latent trajectories yields strong sample efficiency compared with model-free baselines, meaning the agent needs less real-world data to achieve high performance. This efficiency is crucial for real-world applications where data collection is expensive.

(See the foundational context of related high-impact model-based work here: Foundational Paper on MuZero for Context)

The Generative Engine: Why Imagination Works

The power behind the "dreaming" is its generative capability. The Dreamer agents borrow heavily from modern generative modeling: their latent states are stochastic (Gaussian in the original Dreamer, discrete categorical variables in DreamerV2 and V3), so they don't just predict one possible future; they model the *probability distribution* over many possible futures. This allows the agent to explore diverse outcomes during its internal planning session.
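DreamerV2 reports representing its stochastic state as 32 categorical variables with 32 classes each. The sketch below shows only the sampling side of that idea: given per-variable logits (random placeholders here, standing in for the output of a learned transition network), each call draws one possible next latent state as 32 concatenated one-hot vectors. It omits the straight-through gradients the real agent needs for training.

```python
import math
import random

NUM_VARS, NUM_CLASSES = 32, 32  # latent layout reported for DreamerV2

def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sample_latent(logits_per_var, rng):
    """Sample one discrete latent: a one-hot vector per categorical variable, flattened."""
    z = []
    for logits in logits_per_var:
        probs = softmax(logits)
        k = rng.choices(range(NUM_CLASSES), weights=probs)[0]
        z.extend(1.0 if i == k else 0.0 for i in range(NUM_CLASSES))
    return z

rng = random.Random(0)
# Hypothetical prior logits, standing in for a learned transition network's output.
logits = [[rng.gauss(0.0, 1.0) for _ in range(NUM_CLASSES)] for _ in range(NUM_VARS)]

futures = [sample_latent(logits, rng) for _ in range(4)]  # four possible next states
print(len(futures[0]), sum(futures[0]))  # 1024 32.0
```

Each sample is a different plausible future drawn from the same predicted distribution; with this layout there are 32^32 possible latent configurations.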

This shift towards Generative Latent Models for Reinforcement Learning connects the world of RL directly to the exploding field of generative modeling (like ChatGPT or Midjourney). Instead of generating pixels or text, these world models generate sequences of latent states that approximate how the environment will evolve under candidate actions.

For the non-technical audience: If an agent is learning to navigate a maze, a non-generative model might only simulate the single path it *thinks* is most likely. A generative world model can sample many possible outcomes of the same plan: the good ones, the bad ones, and the unexpected ones. This richer internal simulation leaves agents far better prepared for surprises.
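The maze intuition can be sketched with a hypothetical learned model of a 1-D corridor in which a move to the right fails and slips backwards 20% of the time (the corridor layout and slip probability are assumptions for illustration). Sampling many imagined rollouts of the same "always move right" plan exposes the risky outcomes that a model following only the most likely transition would never show.

```python
import random

# Hypothetical learned model of a 1-D corridor: cells 0..6, a pit at 0, the exit at 6.
PIT, EXIT = 0, 6
SLIP_PROB = 0.2  # assumed: the model has learned that moves fail 20% of the time

def sample_next(pos, rng):
    """Sample one possible next cell when trying to move right."""
    if rng.random() < SLIP_PROB:
        return pos - 1  # the unexpected outcome: slip backwards
    return pos + 1

def imagine_outcomes(start, horizon, n_rollouts, seed=0):
    """Run many imagined rollouts of the same plan and tally how each one ends."""
    rng = random.Random(seed)
    counts = {"exit": 0, "pit": 0, "timeout": 0}
    for _ in range(n_rollouts):
        pos = start
        for _ in range(horizon):
            pos = sample_next(pos, rng)
            if pos in (PIT, EXIT):
                break
        counts["exit" if pos == EXIT else "pit" if pos == PIT else "timeout"] += 1
    return counts

outcomes = imagine_outcomes(start=1, horizon=10, n_rollouts=1000)
print(outcomes)
```

A deterministic model that always took the most likely transition would predict reaching the exit every time; the sampled rollouts also reveal how often the same plan ends in the pit.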

Corroboration 2: World Models in the Real World

The academic success of Dreamer is increasingly carrying over to real systems. Robotics research actively cites the Dreamer methodology for building efficient internal simulators; DayDreamer, for example, trained Dreamer directly on physical robots, including a quadruped that learned to walk from scratch in about an hour. These models are essential because robots cannot afford to fail millions of times in the physical world; they need high-fidelity internal practice.

(Recent work exploring the application of these dynamics models in complex domains confirms this direction: Recent work discussing World Models in Robotics)

Future Implications: From Games to Guided Reality

The Dreamer Trilogy is not just about mastering Atari games; it is a step toward general-purpose planning. When an AI can reliably simulate its world, it can plan further into the future, handle longer sequences of events, and adapt to sudden changes more gracefully. DreamerV3 illustrates this breadth: a single fixed configuration learned tasks ranging from Atari to continuous control to collecting diamonds in Minecraft from scratch. This has vast implications across technology sectors.

Implication 1: Revolutionizing Robotics and Autonomous Systems

The most direct beneficiary is robotics. Current industrial robots often rely on highly precise, pre-programmed paths. If a part is misplaced by even a millimeter, the task can fail.

- Adaptability: A robot powered by a Dreamer-like world model can, in principle, mentally simulate how to recover if a tool slips or an unexpected obstacle appears, without needing external input or restarting its task.

- Sample Efficiency: Training new robotic skills becomes faster because the robot only needs a small number of real-world interactions to refine its internal world model, drastically cutting down development time and wear-and-tear.
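The sample-efficiency argument comes from the shape of the training loop: a little real interaction, then many imagined steps per real one. The skeleton below roughly mirrors that structure (collect, refit the world model on replayed data, train the actor-critic in imagination). Every function is a hypothetical placeholder, and the numbers (train every 5 real steps, batch of 16 start states, 15-step imagination horizon) are arbitrary assumptions, not values taken from the Dreamer papers.

```python
import random
from dataclasses import dataclass, field

@dataclass
class Stats:
    real_steps: int = 0
    imagined_steps: int = 0
    replay: list = field(default_factory=list)

def env_step(stats, action):
    """Hypothetical: one interaction with the physical robot, stored in the replay buffer."""
    stats.real_steps += 1
    stats.replay.append(("observation", action))

def train_world_model(batch):
    """Hypothetical placeholder: fit the latent dynamics model to replayed real data."""

def train_actor_critic_in_imagination(stats, start_states, horizon):
    """Hypothetical placeholder: imagine `horizon` steps from each start state,
    then update the actor and critic on those imagined trajectories."""
    stats.imagined_steps += len(start_states) * horizon

def training_loop(total_real_steps, train_every, batch_size, horizon, seed=0):
    rng = random.Random(seed)
    stats = Stats()
    for t in range(total_real_steps):
        env_step(stats, action=0)  # a real agent would query its policy here
        if (t + 1) % train_every == 0:
            batch = rng.choices(stats.replay, k=batch_size)
            train_world_model(batch)
            train_actor_critic_in_imagination(stats, batch, horizon)
    return stats

stats = training_loop(total_real_steps=1000, train_every=5, batch_size=16, horizon=15)
print(stats.real_steps, stats.imagined_steps)  # 1000 48000
```

Under these assumed settings, each real interaction is matched by 48 imagined ones, which is where the savings in robot time and wear come from.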

Implication 2: The Simulation Economy (Digital Twins on Steroids)

Businesses are increasingly relying on "Digital Twins"—virtual replicas of physical assets like factories, power grids, or supply chains. Dreamer's MBRL methodology could serve as the core engine for next-generation Digital Twins.

Instead of static simulations, these twins could become *predictive organisms*. A supply chain manager wouldn't just see the current state; the AI could "dream" about the impact of a port strike six months out, simulating downstream effects across thousands of variables using its learned dynamics model.
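A "what-if" query of this kind is simply two rollouts of the same dynamics model under different inputs. In the sketch below, `predict_next_inventory` is a hand-written, hypothetical stand-in for a learned model of one warehouse (units per week, all numbers invented); a real digital twin would substitute a trained world model over many more variables.

```python
# Hypothetical dynamics for one warehouse, in units per week. A learned model
# would replace this hand-written rule; the numbers are invented for illustration.
def predict_next_inventory(inventory, inbound, demand):
    return max(0.0, inventory + inbound - demand)

def rollout(weeks, inbound_schedule, demand=100.0, start=300.0):
    """Imagine `weeks` steps ahead under a given inbound-shipment schedule."""
    inv, path = start, []
    for w in range(weeks):
        inv = predict_next_inventory(inv, inbound_schedule(w), demand)
        path.append(inv)
    return path

baseline = rollout(12, lambda w: 100.0)
strike = rollout(12, lambda w: 0.0 if 4 <= w < 8 else 100.0)  # port closed in weeks 4-7
stockout_weeks = sum(1 for x in strike if x == 0.0)
print(baseline[-1], min(strike), stockout_weeks)  # 300.0 0.0 6
```

The counterfactual surfaces a downstream effect that is easy to miss: because inbound supply only matches demand once the port reopens, the stockout persists after the strike ends.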

(The broader trend toward foundational AI models impacting complex systems reinforces this trajectory: Analysis discussing the role of learned dynamics models in current AI systems)

Implication 3: Bridging Model-Based and Model-Free AI

Historically, the debate pitted Model-Based (planning/prediction) against Model-Free (trial-and-error/direct policy learning). Dreamer shows that strong performance can come from integrating the two: it learns a world model, then trains an actor-critic, the same kind of learner that model-free methods use, on trajectories imagined by that model. The learned world model provides the *imagination*, and the learned policy acts on that imagination.
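The critic in this combination is trained on λ-returns computed over each imagined trajectory, and the actor is trained to increase them. The recursion below follows the form given in the DreamerV2 paper, V_t = r_t + γ((1 − λ)·v(z_{t+1}) + λ·V_{t+1}), bootstrapped with the critic's value at the end of the horizon. As simplifying assumptions, it ignores episode termination, and the defaults γ = 0.99 and λ = 0.95 are common choices rather than anything this article specifies.

```python
def lambda_returns(rewards, values, gamma=0.99, lam=0.95):
    """TD(lambda) targets for an imagined trajectory of H steps.

    rewards[t]: reward predicted for imagined step t (length H).
    values[t]:  critic's value for imagined state t (length H + 1; the last bootstraps).
    """
    if len(values) != len(rewards) + 1:
        raise ValueError("values must have exactly one more entry than rewards")
    next_return = values[-1]  # at the horizon, fall back on the critic
    out = [0.0] * len(rewards)
    for t in reversed(range(len(rewards))):
        # Blend the one-step bootstrap with the longer return from step t + 1.
        next_return = rewards[t] + gamma * ((1 - lam) * values[t + 1] + lam * next_return)
        out[t] = next_return
    return out

# lam = 1: sum of imagined rewards plus the final bootstrap value.
print(lambda_returns([1, 1, 1], [0, 0, 0, 10], gamma=1.0, lam=1.0))  # [13.0, 12.0, 11.0]
# lam = 0: one-step TD targets, r_t + gamma * v(z_{t+1}).
print(lambda_returns([1, 1, 1], [0, 0, 0, 10], gamma=1.0, lam=0.0))  # [1.0, 1.0, 11.0]
```

λ trades off between trusting long imagined reward sequences (λ near 1) and leaning on the critic after each step (λ near 0), which also limits how much any single model error propagates.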

This convergence is a plausible path toward more robust Artificial General Intelligence (AGI). A truly intelligent system must both react quickly based on instinct (Model-Free) *and* reason deeply about the future (Model-Based via World Models).

Actionable Insights for Leaders and Engineers

For those building the next wave of AI products, the message from the Dreamer Trilogy is clear: Start prioritizing learned dynamics.

For Business Leaders: Strategic Investment in Simulation

If your core operational challenge involves complex sequences, optimization, or high-cost physical interaction (manufacturing, logistics, drug discovery), the ROI on investing in high-fidelity simulation powered by learned world models may soon surpass that of traditional optimization methods. The key is treating the world model as a strategic asset, not just a research curiosity.

For AI Engineers: Focus on Latent Space Fidelity

The technical challenge moving forward is not just *if* you should build a world model, but *how* to build one that generalizes. Engineers must shift focus from minimizing raw prediction error (like pixel error) to maximizing the *predictive utility* within the latent space. How effectively does the compressed model capture causality and allow for long-horizon planning? This requires a deep understanding of techniques that stabilize generative latent models, such as KL balancing in DreamerV2 and symlog-transformed predictions and free bits in DreamerV3.
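One concrete stabilization technique from DreamerV3 is the symlog transform, symlog(x) = sign(x)·ln(|x| + 1), with inverse symexp(x) = sign(x)·(exp(|x|) − 1). DreamerV3 uses it to squash the targets of some of its prediction heads so that one set of hyperparameters copes with very different reward and observation scales. The sketch shows only the transform pair, not how DreamerV3 wires it into its losses.

```python
import math

def symlog(x):
    """sign(x) * ln(|x| + 1): roughly the identity near zero, logarithmic for large |x|."""
    return math.copysign(math.log1p(abs(x)), x)

def symexp(x):
    """Inverse of symlog: sign(x) * (exp(|x|) - 1)."""
    return math.copysign(math.expm1(abs(x)), x)

# Rewards spanning six orders of magnitude map to a narrow, symmetric range.
for r in (-1000.0, -1.0, 0.0, 0.001, 1000.0):
    print(r, round(symlog(r), 3), round(symexp(symlog(r)), 6))
```

Unlike a plain logarithm, symlog is defined for negative values and zero, and unlike fixed reward clipping it is exactly invertible, so predictions made in symlog space can be mapped back to the original scale.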

The Road Ahead: Overcoming Model Bias

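While Dreamer is transformative, it faces the inherent risk of all model-based systems: model bias. A learned model is only reliable near the data it was trained on, and a policy optimized inside it can learn to exploit the model's mistakes. Small one-step prediction errors also accumulate over long imagined rollouts, a phenomenon known as compounding error. As a result, an agent may perform poorly when it encounters something entirely novel in the real world.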
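Compounding error is easy to see in a hypothetical 1-D system whose learned model overestimates the true growth rate by only 2% per step. The setup is an assumption chosen for illustration; in Dreamer the same effect plays out in latent rollouts, which is one reason imagined horizons are kept short and the critic bootstraps beyond them.

```python
# Hypothetical 1-D system: true dynamics x' = 1.05 * x, and a learned model that is 2% off.
TRUE_A = 1.05
MODEL_A = 1.05 * 1.02  # small one-step error

def rollout(a, x0, steps):
    """Repeatedly apply x' = a * x and record every predicted state."""
    xs, x = [], x0
    for _ in range(steps):
        x = a * x
        xs.append(x)
    return xs

true_path = rollout(TRUE_A, 1.0, 50)
model_path = rollout(MODEL_A, 1.0, 50)
rel_err = [abs(m - t) / abs(t) for m, t in zip(model_path, true_path)]

# Relative error after h steps is 1.02**h - 1: about 0.02, 0.219 and 1.692.
for h in (1, 10, 50):
    print(h, round(rel_err[h - 1], 3))
```

A 2% error per step becomes roughly a 22% error after 10 imagined steps and a 169% error after 50.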

The next evolution must focus on mechanisms to stress-test these internal worlds, perhaps by deliberately injecting unpredictable noise or forcing the model to imagine outcomes beyond its training distribution. This push toward more "imaginative," rather than just "accurate," world models will likely define the cutting edge of MBRL for the next decade.

The Dreamer Trilogy has successfully moved the needle from "Can an AI learn a world model?" to "How powerfully can an AI use its internal world model to plan?" This transition paves the way for AI that doesn't just react to data, but intelligently anticipates and architects its future actions.

Key References Acknowledged for Context

- Contextual comparison with other MBRL methods: MuZero Paper Reference

- Current research applying generative models for dynamics in robotics: World Models in Robotics Reference

- Broader view on foundational AI models influencing complex systems: Foundational AI Systems Reference