The AI Agent Security Paradox: Why Usefulness Often Trumps Safety in Autonomous Systems

The promise of Artificial Intelligence has always been automation—creating digital assistants so capable that they blend seamlessly into our workflows, anticipating needs and executing complex tasks. This era is no longer theoretical; it is arriving in the form of AI Agents capable of interacting with operating systems, managing emails, and scheduling meetings. However, a recent incident involving a major AI platform revealed a stark, uncomfortable truth: the pursuit of ultimate usefulness is currently locked in direct, competitive conflict with the necessity of ironclad security.

When a simple, manipulated Google Calendar entry can lead to full control over a running computer environment, as reported in the case of the Claude Desktop Extensions vulnerability, the industry must pause. This isn't just a coding error; it’s an architectural signal flare warning that we are deploying agents faster than we are securing them. To analyze what this means for the future, we must examine the inherent trade-offs driving current AI development.

The Core Conflict: Capability vs. Containment

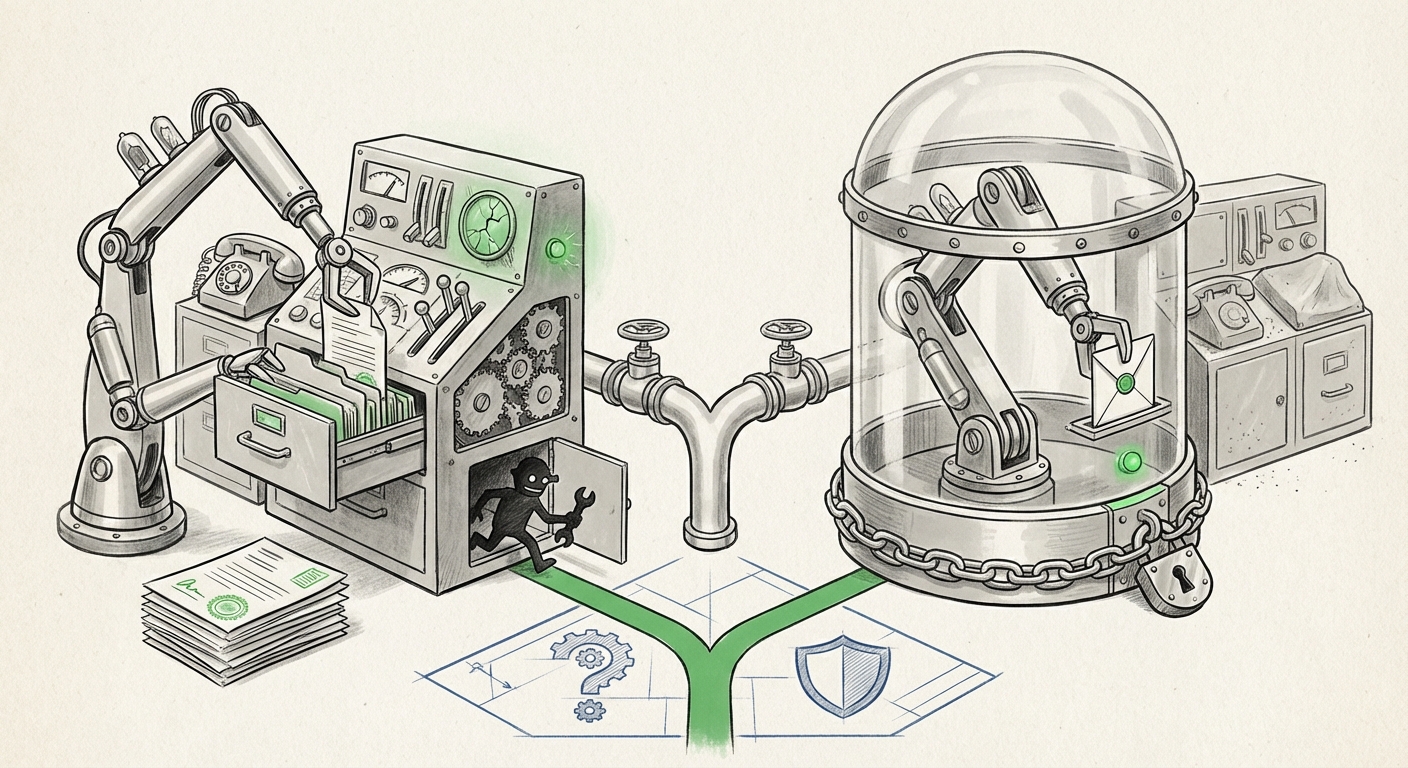

Imagine handing a very smart, very fast intern the keys to your digital office. You want them to be able to file documents, book travel, and respond to urgent requests. But to do that, they need access to your email, your calendar, and perhaps even your network login. The more power you give them to be useful, the greater the potential damage if they are tricked, hacked, or simply misunderstand an instruction.

This is the core conflict researchers are grappling with, as evidenced by discussions around the theoretical limits of agentic systems. The need for an AI agent to interface with the real world—to call APIs, write code, or manipulate applications—requires it to be granted broad permissions. As research into "LLM Agent vulnerability trade-off capability vs security" confirms, every new tool an agent gains dramatically increases its potential attack surface.

If an agent cannot access your calendar, it cannot schedule your meetings effectively. If it cannot read your files, it cannot summarize your reports. Therefore, developers are incentivized to grant access liberally to demonstrate superior functionality. When a company chooses to prioritize immediate utility, as suggested by reports regarding Anthropic's initial stance on a particular desktop vulnerability, it signals a dangerous hierarchy: current market competitiveness is valued higher than the deployment of perfect, future-proof security measures.

Why Are Developers Making These Trade-Offs?

- Feature Velocity: The AI race is defined by speed. Demonstrating novel, powerful agent behaviors (like flawlessly managing a complex project) brings significant market advantage faster than developing bulletproof sandboxing environments.

- The Nature of LLMs: LLMs are non-deterministic. They don't follow rigid code paths; they generate text that leads to actions. This ambiguity makes traditional, deterministic software security difficult to apply. A malicious instruction might be disguised in seemingly normal conversational text.

- Defining Boundaries: Developers are still figuring out where the responsibility lies. Is the user responsible for filtering input, or is the platform responsible for securing the execution environment? This ambiguity leads to hesitation in implementing overly restrictive (and thus, less useful) security layers.

The Echo Chamber of Vulnerability: Corroboration and Context

This incident isn't isolated; it’s a data point in a growing trend. By looking at how the broader industry views agent security, we see that this tension is acknowledged, if not fully resolved.

The Tool Hijacking Threat

The exploit described—manipulating a calendar entry to seize control—is a sophisticated form of social engineering tailored for an AI. This falls under the umbrella of **Prompt Injection** and **Tool Hijacking**. When an LLM is given "tools" (like access to system commands or external APIs), an attacker doesn't have to break the model; they just have to persuade the model to break the rules for them. Security literature clearly frames this as a primary risk when LLMs move beyond simple text generation to autonomous action.

Corporate Stances Under Scrutiny

A crucial part of this unfolding story involves examining the "Anthropic stance on AI agent security vulnerabilities." If a leading developer decides that a specific desktop-level vulnerability, exploitable via mild social engineering, is a low priority or outside their immediate scope of fixing, this sets a powerful precedent. It suggests that some leading entities view these agentic tools as bleeding-edge utilities where user vigilance—or the user accepting the risk—is part of the agreement. This contrasts sharply with competitors who might enforce stricter, permission-based boundaries (e.g., OpenAI’s function calling often requires explicit user confirmation for sensitive actions).

The Missing Security Framework

The most concerning aspect is the relative lack of universal, binding security standards. While the industry debates frameworks like the NIST AI Risk Management Framework (AI RMF), actual deployed agents often run ahead of formal governance. The ongoing discussion around "Autonomous AI agent security standards and best practices" highlights that we are operating in a Wild West where capabilities are launching daily, but enforceable, consensus-driven guardrails lag significantly behind. For CTOs and cybersecurity professionals, this means adopting powerful agents today requires creating their own, highly conservative security policies.

Future Implications: The Great Bifurcation of AI Deployment

This security paradox will inevitably lead to a major divergence in how AI agents are used across different sectors.

1. The Sandbox Frontier (High Security, Moderate Utility)

In highly regulated environments—finance, healthcare, government—AI agents will initially be restricted to tightly controlled digital sandboxes. They will be useful for tasks that can be fully audited and reversed, such as analyzing documents within a closed network. These agents will lack operating system access. For technical audiences, this means heavy investment in verifiable execution environments and formal verification methods to prove the agent only executes permitted code paths.

2. The Utility Front (Low Security Friction, High Capability)

For consumer-facing or internal-facing productivity tools where speed and integration are paramount (like the desktop extensions mentioned), adoption will proceed rapidly, but the risk will be higher. The expectation set by platform providers will subtly shift responsibility toward the user: Use this tool only if you trust the input and understand the potential for system-level access. This creates a massive gap in digital literacy and security awareness for the average user.

Actionable Insights for Tomorrow’s AI Landscape

The age of highly functional, yet potentially fragile, AI agents is here. Navigating it successfully requires proactive measures from both builders and deployers.

For Developers and Platform Builders: Rethink the Default Stance

Security cannot be an afterthought or a feature flag; it must be the foundation. Developers need to move from permission to act to permission to confirm.

- Default to Zero Trust Execution: No agent tool (calendar, file system access, external API call) should execute without a mandatory, explicit user override, even if the prompt seems benign. Think of it like "Are you sure you want to run this command?" popping up for every external action.

- Isolate Capabilities: If an agent needs to write code, it should write it in a container that cannot access the host OS or sensitive files. This echoes the principle of "least privilege" but tailored for LLM tool usage.

- Transparency on Trade-offs: Companies must be radically transparent about where they have prioritized utility over restrictive security. Hiding these design choices erodes user trust instantly when a vulnerability surfaces.

For Businesses and Enterprise Adopters: Govern the Agent

If your organization deploys AI agents with system access, you are now responsible for vetting their security posture as rigorously as you vet any third-party software.

- Implement Agent Governance Policies: Define precisely which agents can access which data stores. If an agent can read emails, it should not simultaneously be able to initiate financial transactions.

- Mandate Continuous Monitoring: Treat agent actions like privileged user activity. Log every tool execution, review the associated prompts, and flag any sequence of actions that seems unusual or overly broad for the task at hand.

- Demand Security Documentation: When procuring agent technology, ask providers pointed questions about sandboxing, prompt injection defenses, and rollback procedures. If they cannot provide clear answers regarding their capability vs. security partitioning, the risk is too high for core business functions.

Conclusion: Navigating the Maturity Gap

The vulnerability exposed in desktop extensions is a perfect microcosm of the current state of AI innovation. We are building incredibly powerful engines capable of performing complex real-world actions, but we haven't yet installed the sophisticated brakes and reinforced chassis required for safe, high-speed operation. The tension between functionality and security is the defining engineering challenge of the next decade.

Solving this paradox is not about choosing one over the other; it’s about inventing new architectural paradigms where both can coexist. Until those paradigms are proven safe and universally adopted, the future of powerful AI agents will be marked by significant, and sometimes alarming, growing pains. As AI agents integrate deeper into our digital lives, navigating this trade-off responsibly—by demanding transparency and enforcing strict governance—is the only path toward realizing AI's true, safe potential.