The Silicon Schism: Why ByteDance, Samsung, and Custom Chips Signal the End of NVIDIA's Monopoly in AI Hardware

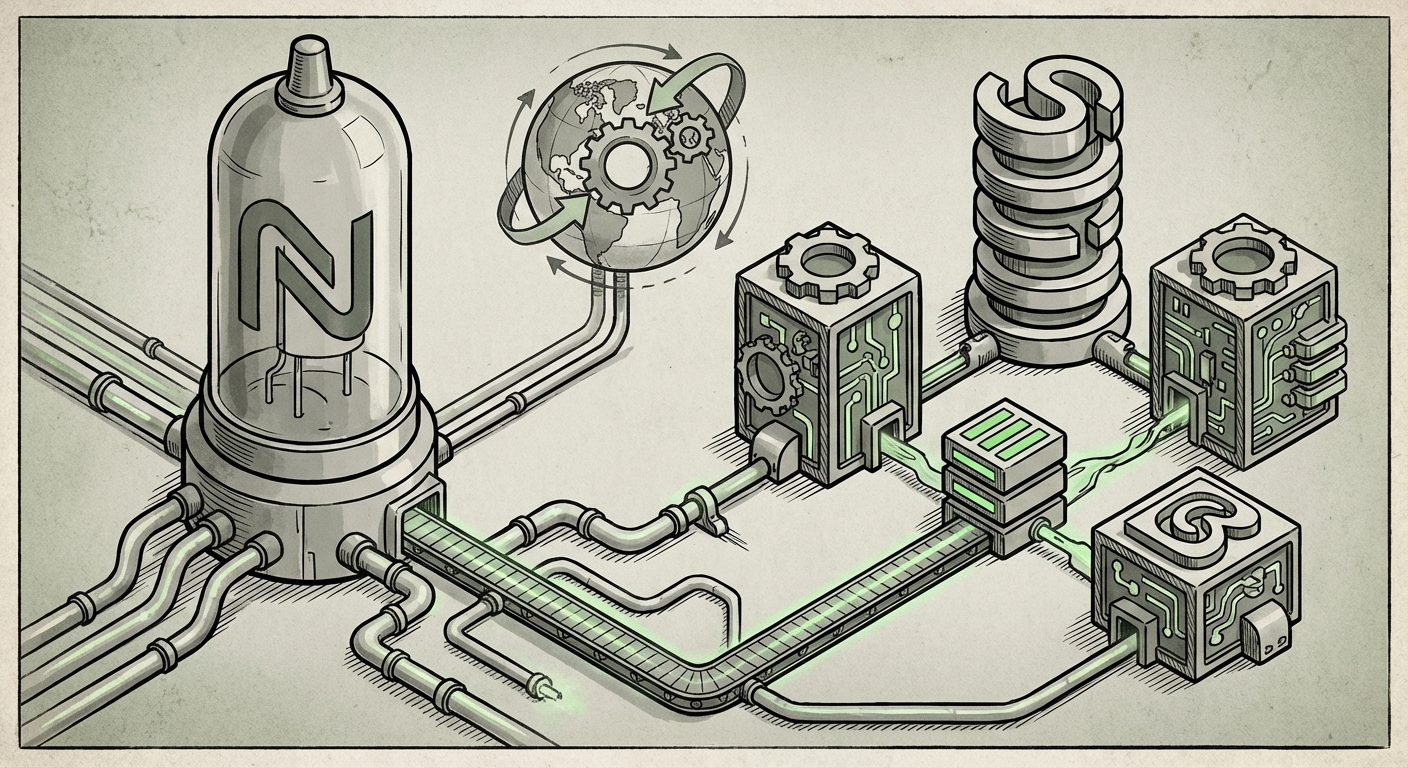

The digital world runs on chips, the tiny pieces of silicon that power everything from your smartphone to the massive data centers that train and serve the latest Large Language Models (LLMs). For the past few years, the undisputed king of the AI hardware hill has been NVIDIA, whose GPUs have become the essential fuel for modern artificial intelligence. Beneath that dominance, however, the market is shifting. Reports that ByteDance, the parent company of TikTok, is pursuing a deal with Samsung to produce custom AI chips point to more than a change of supplier. They are a flashing neon sign of a new, more decentralized era in AI infrastructure, in which the largest buyers design their own hardware and source it from multiple manufacturers.

This move signals a crucial pivot driven by two interconnected forces: the intense need for custom silicon optimization and the crushing reality of memory scarcity. By looking at ByteDance’s strategy in the context of other tech giants and the foundry landscape, we can map out the future of how AI will be built, deployed, and controlled.

The Race for Silicon Independence: Why Hyperscalers Are Designing Their Own Brains

To understand ByteDance’s strategy, we must first recognize the pattern set by its Silicon Valley peers. Building the world’s most advanced AI models—like those powering generative text, image creation, or advanced recommendation engines—requires astronomical amounts of computing power. While NVIDIA’s GPUs offer unparalleled general-purpose speed, they come at a premium price and often include capabilities that a specific company, like ByteDance, doesn't need.

This leads to the concept of custom silicon, or ASICs (Application-Specific Integrated Circuits). Think of it like this: NVIDIA sells a super-powerful, versatile Swiss Army Knife for every computing task. Hyperscalers are saying, "We only need a specialized bottle opener for the next five years."

- Cost Optimization: Custom chips, designed precisely for a company’s unique algorithms (like ByteDance’s recommendation ranking systems), can be significantly cheaper to operate at scale than renting or buying generic high-end GPUs.

- Performance Tuning: By designing the chip themselves, companies can tailor the hardware architecture (for example, the balance of matrix-math units, on-chip memory, and supported number formats) to their software, speeding up their specific workloads.

- Supply Chain Control: Depending on a single vendor for the most crucial components of your business is a massive risk. By developing their own designs, companies like ByteDance reduce their exposure to one supplier’s pricing, allocation decisions, and shortages.

This isn't new: Google pioneered the approach with its TPUs (Tensor Processing Units), Amazon followed with Trainium and Inferentia, and Meta has built its own MTIA accelerators. ByteDance now appears to be joining this exclusive club, a sign that AI leadership is increasingly tied to owning the hardware blueprints. The trend suggests that, at the largest scale, proprietary models are best served by proprietary hardware.

The Memory Bottleneck: HBM and the Scarcity Crisis

The second, and arguably more pressing, driver mentioned in the ByteDance news is the quest for **scarce memory supplies**. In modern AI systems, the processing unit (the GPU or custom chip) is only as fast as the memory that feeds it data. The critical component here is **High Bandwidth Memory (HBM)**.

HBM is fundamentally different from the RAM in your home computer. It stacks multiple DRAM dies vertically, links them with through-silicon vias (TSVs), and places the stack right next to the processor on the same package, connected through a very wide interface (1,024 bits per stack for HBM3). The result is far more bandwidth between memory and processor than conventional memory can deliver. For training and serving LLMs with billions of parameters, HBM is the wide highway that keeps the processor from being starved of data.
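The idea of a processor being starved of data can be made concrete with the roofline model, a common back-of-the-envelope tool: achievable throughput is the lesser of peak compute and memory bandwidth multiplied by arithmetic intensity (FLOPs performed per byte moved from memory). The Python sketch below applies it to LLM token generation. Every hardware figure in it (a hypothetical 1,000 TFLOP/s chip fed by 3 TB/s of HBM) is an illustrative assumption rather than the spec of any real product, the function names are our own, and KV-cache traffic is ignored, so the numbers are optimistic.

```python
"""Roofline sketch: why an accelerator starves without enough memory bandwidth.

All hardware numbers are hypothetical round figures, not specs of any real
GPU, ASIC, or HBM product.
"""


def attainable_tflops(peak_tflops: float, bandwidth_tb_s: float,
                      flops_per_byte: float) -> float:
    """Roofline model: throughput is capped by compute or by memory traffic.

    Bandwidth (TB/s) times arithmetic intensity (FLOP/byte) gives TFLOP/s.
    """
    return min(peak_tflops, bandwidth_tb_s * flops_per_byte)


def decode_intensity(batch_size: int) -> float:
    """Approximate FLOP/byte for LLM token generation with 16-bit weights.

    Each token costs about 2 FLOPs per parameter (multiply + add), and each
    2-byte parameter is read from memory once per step, shared across the batch.
    KV-cache traffic is ignored (an optimistic simplification).
    """
    flops_per_param = 2.0 * batch_size
    bytes_per_param = 2.0
    return flops_per_param / bytes_per_param


def max_tokens_per_second(params: float, bandwidth_tb_s: float) -> float:
    """Upper bound on batch-1 tokens/s: every weight streams from memory per token."""
    weight_bytes = params * 2.0  # 16-bit weights
    return bandwidth_tb_s * 1e12 / weight_bytes


if __name__ == "__main__":
    PEAK_TFLOPS = 1000.0  # hypothetical compute peak
    HBM_TB_S = 3.0        # hypothetical HBM bandwidth
    for batch in (1, 64, 512):
        achieved = attainable_tflops(PEAK_TFLOPS, HBM_TB_S, decode_intensity(batch))
        print(f"batch {batch:>3}: {achieved:7.1f} TFLOP/s "
              f"({100 * achieved / PEAK_TFLOPS:.1f}% of peak)")
    print(f"70B-parameter model, batch 1: <= "
          f"{max_tokens_per_second(70e9, HBM_TB_S):.1f} tokens/s")
```

Under these assumptions, batch-1 generation uses well under 1% of the chip's compute, only large batches reach the compute ceiling, and the tokens-per-second cap scales directly with memory bandwidth. That is why HBM supply, not just raw compute, decides how much useful work an accelerator can do.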

Currently, the market for the leading HBM products (such as HBM3E) is extremely tight. SK Hynix dominates, Samsung is racing to catch up, and Micron is a smaller third supplier. By seeking a supply deal with Samsung, ByteDance is likely negotiating access to this premium, bottlenecked resource.

Why this matters for the future: If you design a fantastic custom AI chip but cannot secure enough HBM to populate it, that chip sits idle. ByteDance recognizes that control over the *entire stack*—compute and memory—is the new strategic imperative. Deals struck now are essentially pre-purchasing future capacity in a market where demand far outstrips immediate supply projections.

Samsung’s Strategic Gambit: Foundry Competition Heats Up

This potential partnership is a massive opportunity for Samsung, putting it in direct competition for the most lucrative manufacturing contracts in the world. The contest between Samsung Foundry and Taiwan Semiconductor Manufacturing Company (TSMC) for leading-edge orders shapes how resilient the global chip supply chain is, which is why the industry watches every major contract closely.

TSMC currently manufactures the vast majority of the world’s leading AI chips, including those designed by NVIDIA and AMD. If ByteDance commits to fabricating its custom chips on Samsung’s most advanced nodes (3nm or newer), it would be a significant win for Samsung in the high-stakes foundry race. Such a deal would validate Samsung’s technology roadmap and manufacturing capability, and challenge the perception that TSMC is the only viable option for cutting-edge AI silicon.

For companies like ByteDance, spreading their manufacturing base across both TSMC and Samsung hedges their geopolitical and operational risks. A dependency on a single geographic region for advanced chip production is a vulnerability no major global technology player wants.

Implications for the Future of AI Infrastructure

The moves by ByteDance, mirroring those of Google, Amazon, and Meta, paint a clear picture of where AI infrastructure is headed: away from a simple "buy what’s available" model and toward a deeply customized, vertically integrated supply chain.

1. The Decline of General-Purpose Dominance

NVIDIA will almost certainly remain a powerhouse for R&D labs and smaller firms, but its stranglehold on large-scale AI deployment at the biggest buyers is likely to erode gradually. As custom ASICs become more common, general-purpose GPUs will capture a smaller share of total AI spending, even if their absolute sales keep growing. This shift favors sophisticated, well-funded organizations that can absorb the upfront design costs.

2. The Rise of Specialized Ecosystems

We are moving toward an ecosystem where different AI stacks speak different hardware languages. Google’s AI will be optimized for TPUs, Meta’s for MTIA, and ByteDance’s for its own custom chips, potentially fabricated by Samsung. Interoperability will become a major technical headache, requiring new standardized software layers to bridge these hardware divides.
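One way to picture such a bridging layer is a backend registry: model code targets a single interface, and each hardware vendor plugs in its own implementation. The Python sketch below is a deliberately tiny, hypothetical illustration of that pattern. The `Backend` class, the `register`/`get_backend`/`dense_layer` functions, and the backend names are invented for this example and do not correspond to any real framework's API; production stacks solve the same problem with far more machinery (compilers, graph formats, kernel libraries).

```python
from abc import ABC, abstractmethod
from typing import Dict, List, Sequence

Matrix = List[List[float]]


class Backend(ABC):
    """Hypothetical common interface every hardware backend must implement."""

    name: str = "abstract"

    @abstractmethod
    def matmul(self, a: Matrix, b: Matrix) -> Matrix:
        """Multiply an (n x k) matrix by a (k x m) matrix."""


class ReferenceCPUBackend(Backend):
    """Portable pure-Python fallback; a GPU or ASIC backend would call its own kernels."""

    name = "cpu-reference"

    def matmul(self, a: Matrix, b: Matrix) -> Matrix:
        # zip(*b) iterates over the columns of b.
        return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


_REGISTRY: Dict[str, Backend] = {}


def register(backend: Backend) -> None:
    """Make a backend available under its name."""
    _REGISTRY[backend.name] = backend


def get_backend(preferred: Sequence[str]) -> Backend:
    """Return the first registered backend from a preference-ordered list."""
    for name in preferred:
        if name in _REGISTRY:
            return _REGISTRY[name]
    raise RuntimeError(f"no registered backend among {list(preferred)}")


def dense_layer(x: Matrix, w: Matrix, preferred: Sequence[str]) -> Matrix:
    """Model code: written once, runs on whichever backend is available."""
    return get_backend(preferred).matmul(x, w)


register(ReferenceCPUBackend())

if __name__ == "__main__":
    # "custom-asic" is a hypothetical name that is not registered here,
    # so the call falls back to the CPU reference backend.
    print(dense_layer([[1.0, 2.0]], [[3.0], [4.0]], ["custom-asic", "cpu-reference"]))
```

Because `dense_layer` only asks for a backend in preference order, supporting a new chip means registering one more `Backend` subclass rather than rewriting model code; the hard part in practice is making every backend produce matching results and comparable performance.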

3. Increased Complexity and Talent Wars

Designing and validating a custom AI chip requires specialized talent spanning computer architecture, VLSI (Very Large Scale Integration) design, and system software. Competition for hardware engineers who can master these complex flows will intensify sharply, raising the barrier to entry for smaller companies hoping to compete at the foundational level of AI training and inference.

Actionable Insights: What Businesses Must Do Now

The era of passively accepting whatever hardware is available is over. For organizations looking to sustain competitive advantage in the AI landscape, here are actionable insights derived from these supply chain maneuvers:

Navigating the Hardware Reality

- Assess Customization Potential: Large enterprises running massive, repetitive inference tasks (e.g., recommendation engines, large-scale internal document processing) must audit their algorithmic workloads. Could a dedicated chip cut costs by 3x or double throughput? If the workload is stable, the investment in custom design may pay off in three to five years.

- Diversify Foundry Partnerships: Do not anchor all advanced manufacturing capacity to a single foundry. Even if you are not designing custom silicon yet, understanding the capabilities and capacity queues at Samsung, TSMC, and others is vital for future growth planning.

- Prioritize Memory Strategy: Treat HBM access as a strategic asset, not just a commodity line item. Engagements with memory suppliers (Samsung, SK Hynix, Micron) should start now, focusing on long-term commitment contracts that secure future HBM generations (HBM4 and beyond).

- Embrace Hardware-Aware Software Development: Teams must begin thinking less about abstract models and more about how specific hardware executes those models. Software frameworks need to become more flexible, allowing easy porting between GPU architectures, custom ASICs, and specialized accelerators.
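The cost-saving and payback questions in the first bullet can be sanity-checked with a simple payback calculation: one-time design cost divided by yearly savings. The Python sketch below assumes a hypothetical $250M custom-silicon program replacing $100M per year of GPU spend; both figures are placeholders, not industry data, and the model deliberately ignores discounting, workload growth, and the risk that the chip underperforms. The function name `payback_years` is our own.

```python
import math


def payback_years(upfront_cost: float, annual_gpu_spend: float,
                  cost_reduction_factor: float) -> float:
    """Simple, undiscounted payback period for a custom-silicon program.

    upfront_cost: one-time design, verification, and tape-out spend (hypothetical).
    annual_gpu_spend: what the workload costs per year on general-purpose GPUs.
    cost_reduction_factor: e.g. 3.0 means the custom chip runs the same
        workload at one third of the annual cost.
    """
    if cost_reduction_factor <= 1.0:
        return math.inf  # no savings, so the investment never pays back
    annual_savings = annual_gpu_spend * (1.0 - 1.0 / cost_reduction_factor)
    return upfront_cost / annual_savings


if __name__ == "__main__":
    UPFRONT = 250e6    # hypothetical program cost, USD
    GPU_SPEND = 100e6  # hypothetical yearly GPU cost for the workload, USD
    for factor in (1.5, 2.0, 3.0):
        years = payback_years(UPFRONT, GPU_SPEND, factor)
        print(f"{factor:.1f}x cheaper -> payback in {years:.2f} years")
```

With these placeholder inputs, a 3x cost reduction pays back in about 3.75 years and a 2x reduction in 5 years, while 1.5x stretches to 7.5 years. The result is highly sensitive to the savings factor, which is why a stable, well-understood workload matters before committing to custom silicon.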

ByteDance’s reported push toward Samsung is more than a business transaction; it is a clear strategic declaration in the ongoing AI arms race. It underscores that leadership in AI is no longer about mastering the software layer alone. It also requires deep, strategic control over the physical foundation on which that software runs. The supply chain is fracturing into bespoke segments, and those who secure their custom silicon, along with the HBM required to feed it, will define the next decade of technological advancement.