Beyond Pattern Matching: How World Models Like Dreamer are Building True AI Simulation

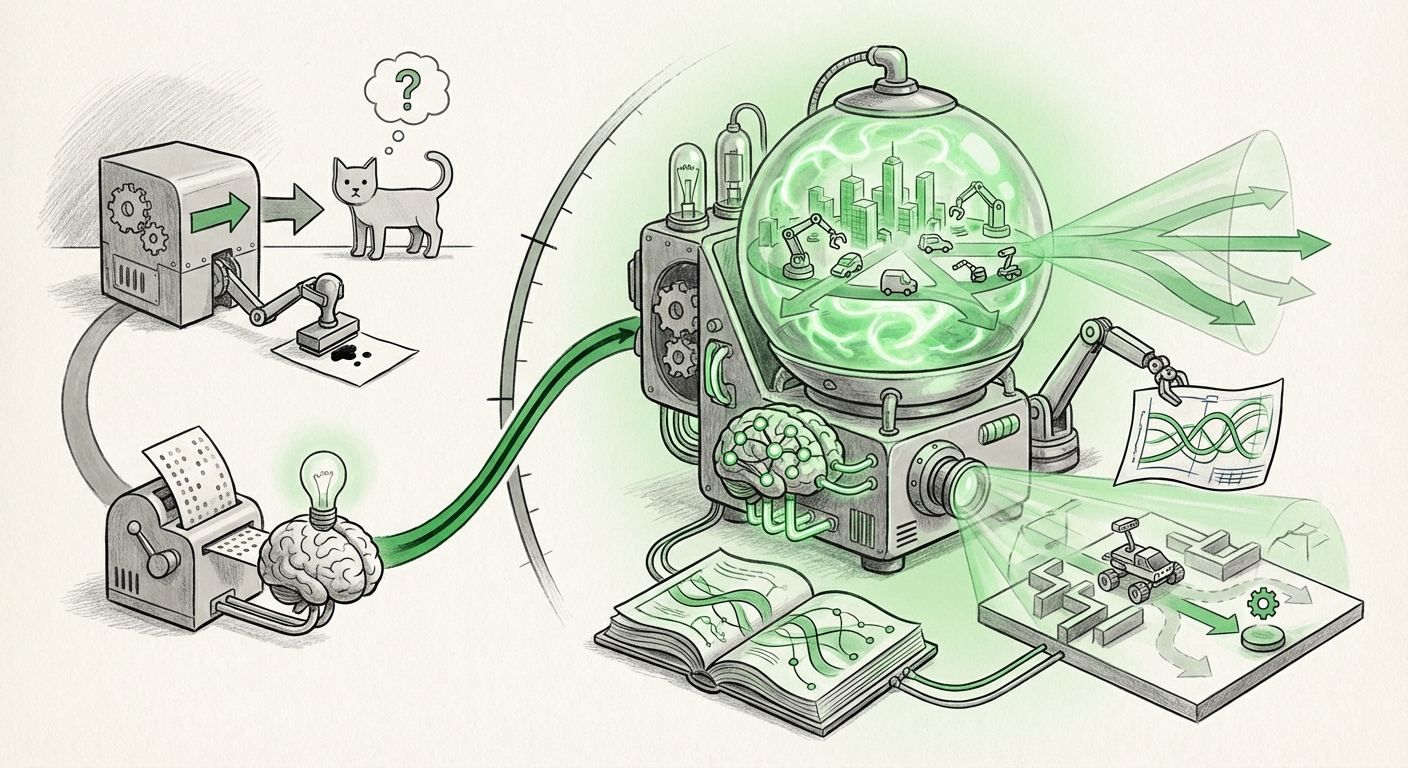

For years, Artificial Intelligence felt like sophisticated pattern matching. AI systems excelled at recognizing cats in pictures or predicting the next word in a sentence, but they often lacked true understanding of how the world works—the underlying physics, causes, and effects. That changes when we talk about **World Models**.

The recent focus on the "Dreamer Trilogy" papers highlights a turning point in AI research. These works, led by Danijar Hafner and collaborators, introduced architectures that allow an AI agent not just to react to data, but to imagine the future consequences of its actions. This move from mere perception to internal simulation is arguably one of the most significant trends on the path toward robust, general-purpose intelligence.

The Leap: From Reacting to Simulating

Imagine teaching a child to play catch. A simple reactive AI learns: "If ball approaches, move hand here." A World Model AI instead learns an approximate model of gravity, trajectory, and momentum. It can then catch a ball that is thrown slightly differently than before, and it can predict where the ball would land if the catch were missed.

The Dreamer architecture achieves this by creating a compact internal map, or "latent space," of its environment. Its world model, the Recurrent State-Space Model (RSSM), is trained with a variational objective in the style of **Variational Autoencoders (VAEs)**: it compresses high-dimensional sensory input (like pixels from a camera) into a small set of latent variables. Crucially, the model learns to predict what happens next *within this compressed space*, so it does not have to generate every future frame pixel by pixel.
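
To make "predicting within the compressed space" concrete, here is a minimal sketch. It is a deterministic, linear toy, not Dreamer's actual model (which is stochastic, recurrent, and learned from data). The weights `ENCODER`, `TRANSITION`, and `ACTION_EFFECT` and the function names are hypothetical and hand-picked for illustration.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(matrix: Matrix, vector: Vector) -> Vector:
    """Multiply a matrix by a vector using plain Python lists."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


# Hypothetical hand-picked weights. A real world model learns these from data.
ENCODER: Matrix = [[0.5, 0.5, 0.0, 0.0],   # 4-dim observation -> 2-dim latent
                   [0.0, 0.0, 0.5, 0.5]]
TRANSITION: Matrix = [[0.9, 0.1],          # how the latent evolves on its own
                      [0.0, 0.95]]
ACTION_EFFECT: Vector = [0.0, 0.1]         # latent change per unit of action


def encode(observation: Vector) -> Vector:
    """Compress a high-dimensional observation into a small latent state."""
    return matvec(ENCODER, observation)


def predict_next(latent: Vector, action: float) -> Vector:
    """Predict the next latent state without decoding back to observations."""
    drift = matvec(TRANSITION, latent)
    return [z + action * effect for z, effect in zip(drift, ACTION_EFFECT)]


def imagine(observation: Vector, actions: List[float]) -> List[Vector]:
    """Encode once, then roll the model forward purely in latent space."""
    latent = encode(observation)
    trajectory = [latent]
    for action in actions:
        latent = predict_next(latent, action)
        trajectory.append(latent)
    return trajectory


trajectory = imagine([1.0, 1.0, 2.0, 2.0], actions=[1.0, 0.0, -1.0])
```

The observation is encoded only once; every later step operates on the two-number latent state, which is what keeps imagined rollouts cheap.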

1. The Engine Room: VAEs and Probabilistic Thinking

The success of Dreamer hinges on learning high-quality representations. If the internal map is fuzzy, the predictions will be useless. The choice of VAEs, as opposed to other generative methods like GANs (Generative Adversarial Networks), is pivotal. VAEs operate on a probabilistic framework. They don't just try to generate a perfect next image; they try to understand the distribution of possible outcomes. This probabilistic grounding is essential for planning under uncertainty.
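
The probabilistic framing can be sketched with the two standard ingredients of a Gaussian VAE: sampling a latent via the reparameterization trick, and the KL divergence that keeps the latent distribution close to a standard normal prior. This is a generic VAE illustration under the assumption of a diagonal Gaussian latent, not Dreamer's specific objective; the function names and example values are hypothetical.

```python
import math
import random
from typing import List


def sample_latent(mean: List[float], log_var: List[float],
                  rng: random.Random) -> List[float]:
    """Reparameterization trick: z = mean + sigma * eps, with eps ~ N(0, 1)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mean, log_var)]


def kl_to_standard_normal(mean: List[float], log_var: List[float]) -> float:
    """KL( N(mean, diag(exp(log_var))) || N(0, I) ), summed over dimensions."""
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv
                     for m, lv in zip(mean, log_var))


rng = random.Random(0)                 # fixed seed so the sketch is repeatable
example_mean = [0.5, -1.0]             # hypothetical encoder outputs
example_log_var = [-2.0, 0.0]
z = sample_latent(example_mean, example_log_var, rng)
kl = kl_to_standard_normal(example_mean, example_log_var)
```

The encoder outputs a mean and a variance rather than a single point, so the model carries an explicit estimate of how uncertain it is about each latent variable.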

For readers who work in generative modeling, the contrast with other methods is instructive. While GANs focus on photorealistic output, VAEs prioritize a structured, meaningful latent space that facilitates inference and long-term prediction. This is why, even as Diffusion Models now dominate image generation, the structured latent space learned by Dreamer remains highly valuable for sequential decision-making.

2. The Real World Test: Scalability in Reinforcement Learning

A simulator is of limited use if it only works on simple video games. The true test of a World Model is whether it improves an agent's performance on difficult Reinforcement Learning (RL) tasks, including real-world ones. Dreamer showed that an agent could learn complex behaviors by dreaming inside its own learned simulator, drastically reducing the need for expensive, slow real-world trials.
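
As a rough illustration of deciding what to do by "dreaming", the sketch below scores candidate action sequences entirely inside a model and picks the best one. Note the swap: Dreamer itself trains an actor and a critic on imagined trajectories, whereas this example uses a much simpler exhaustive search over short plans. The one-dimensional task, `model_step`, and `model_reward` are hypothetical stand-ins for learned components.

```python
from itertools import product
from typing import Sequence, Tuple


def model_step(position: float, action: float) -> float:
    """Stand-in for learned dynamics: the action shifts the position."""
    return position + action


def model_reward(position: float, goal: float) -> float:
    """Stand-in for a learned reward: closer to the goal is better."""
    return -abs(position - goal)


def imagined_return(start: float, actions: Sequence[float], goal: float) -> float:
    """Sum of predicted rewards along a rollout that never touches the real world."""
    position, total = start, 0.0
    for action in actions:
        position = model_step(position, action)
        total += model_reward(position, goal)
    return total


def plan(start: float, goal: float, horizon: int = 4,
         choices: Tuple[float, ...] = (-1.0, 0.0, 1.0)) -> Tuple[float, ...]:
    """Try every action sequence in imagination and keep the best one."""
    return max(product(choices, repeat=horizon),
               key=lambda seq: imagined_return(start, seq, goal))


best_plan = plan(start=0.0, goal=2.0)
```

Here `best_plan` moves toward the goal twice and then stays put. All 81 candidate plans were evaluated without a single real-world step, which is the source of the sample-efficiency gain described above.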

This is where the business implications begin to crystallize. In robotics, real-world training costs time, energy, and risks damaging hardware. By leveraging latent world models, a robot can rehearse millions of scenarios internally—learning to grip delicate objects or navigate cluttered spaces—before ever touching a real component. The ability to scale planning through simulation is what bridges the gap between academic AI breakthroughs and industrial deployment, particularly in manufacturing and autonomous navigation.

The Current Crossroads: LLMs as The Unexpected Competitor

Just as World Models were gaining traction for their predictive capabilities, Large Language Models (LLMs) arrived, fundamentally shifting the landscape. Models like GPT-4 appear, almost magically, to possess world knowledge when answering complex, causal questions. This has led to a critical debate in AI research: Are LLMs already functioning as implicit world models?

The Implicit vs. Explicit Divide

The difference lies in how the knowledge is structured. Dreamer builds an explicit, learned model of environment dynamics in its latent space. It learns the *rules* of movement as a function that maps a state and an action to the next state. LLMs, conversely, acquire an implicit model through massive exposure to text describing the world. They learn the statistical correlations among words that describe physics, but they don't necessarily simulate the underlying state vector directly.

When an LLM reasons, it is predicting the most plausible next token based on its training data. When a Dreamer-like agent plans, it executes sequential steps within its learned, self-contained simulation engine. The research community is actively investigating where each approach excels. For tasks requiring precise, step-by-step physical prediction (like controlling a drone through wind gusts), the explicit simulation of a dedicated World Model often retains an edge. For tasks requiring vast amounts of contextual, common-sense reasoning, LLMs dominate.

The future likely involves a synthesis, where LLMs provide high-level strategy and goal setting, feeding those goals into highly specialized, explicit World Models for low-level, high-fidelity execution.

The Next Frontier: Robustness and Long-Term Planning

Even the most advanced World Models face a formidable challenge: compounding error. Think of a poorly maintained photocopy machine. If you photocopy a document, and then photocopy the copy, the degradation increases with every generation. Similarly, if a World Model predicts the next state, and then uses that predicted state to predict the state after that, tiny initial errors quickly compound, leading the agent into an imagined world that no longer matches reality.
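
A small numerical sketch shows how quickly this happens. It assumes a hypothetical one-variable system in which the true state stays constant while the model's one-step prediction is off by 2%; the names `TRUE_GAIN` and `MODEL_GAIN` are illustrative only.

```python
from typing import List


def rollout(step_gain: float, start: float, horizon: int) -> List[float]:
    """Feed each predicted state back in as the input for the next step."""
    states = [start]
    for _ in range(horizon):
        states.append(states[-1] * step_gain)
    return states


TRUE_GAIN = 1.00    # hypothetical real dynamics: the state does not change
MODEL_GAIN = 1.02   # hypothetical learned dynamics: 2% error on every step

real = rollout(TRUE_GAIN, start=1.0, horizon=50)
dreamed = rollout(MODEL_GAIN, start=1.0, horizon=50)

# Absolute gap between the imagined and the real state at each horizon.
errors = [abs(d - r) for d, r in zip(dreamed, real)]
```

After one step the gap is about 0.02. After 50 steps it is roughly 1.7, well above the 1.0 that simple linear accumulation would give, because each step multiplies the error already present.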

This "hallucination" risk makes long-horizon planning dangerous, and current research is focused on mitigating it. Two directions stand out: integrating uncertainty quantification (teaching the model to know when it doesn't know) and designing planning algorithms that account for the model's margins of error.

Solutions being explored include:

- Model Ensembles: Running multiple slightly different models and prioritizing plans whose predicted outcomes all models agree upon.

- Grounding Checks: Periodically interrupting the internal dream sequence to take a real observation from the environment and re-anchor the model's state estimate.

- Imagination-Augmented Planning: Using the model to simulate many potential futures, but prioritizing those futures that seem most stable, that is, least sensitive to small input variations.
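
The ensemble idea from the list above can be sketched as follows. Several models predict the outcome of the same plan, and the spread of their predictions is treated as an uncertainty penalty. The three `ENSEMBLE_GAINS`, the scalar dynamics, and the scoring rule are assumptions made for illustration, not a specific published method.

```python
from statistics import mean, pstdev
from typing import List, Tuple

# Hypothetical ensemble: three models that differ slightly in one parameter.
ENSEMBLE_GAINS = [0.98, 1.00, 1.02]


def predict_final(gain: float, start: float, actions: List[float]) -> float:
    """Roll one ensemble member forward and return its predicted final state."""
    state = start
    for action in actions:
        state = gain * state + action
    return state


def score_plan(start: float, actions: List[float], goal: float,
               penalty: float = 1.0) -> Tuple[float, float]:
    """Return (score, disagreement). Higher scores are better."""
    finals = [predict_final(g, start, actions) for g in ENSEMBLE_GAINS]
    disagreement = pstdev(finals)             # spread across ensemble members
    expected_cost = abs(mean(finals) - goal)  # distance of the average prediction
    return -(expected_cost + penalty * disagreement), disagreement


_, short_disagreement = score_plan(1.0, [1.0], goal=2.0)
_, long_disagreement = score_plan(1.0, [1.0] * 10, goal=11.0)
```

In this toy setup the ten-step plan produces more disagreement than the one-step plan, so a planner using this score is steered toward futures the models can predict consistently.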

Practical Implications: What This Means for Business and Society

The transition from pattern recognition to predictive simulation is not just an academic curiosity; it is the foundation for the next generation of applied intelligence across several key sectors.

For Industry and Business

Robotics and Automation: Companies building sophisticated automation systems can transition from teaching robots rigid sequences to giving them general objectives. A warehouse robot powered by a World Model doesn't just follow a map; it understands how an obstacle might roll or how stacking items affects stability over time. This leads to far greater flexibility and reduced downtime for reprogramming.

Drug Discovery and Material Science: Simulating molecular interactions is notoriously expensive. A specialized chemical World Model could simulate millions of reaction pathways in silico (inside the computer) before costly lab work begins, potentially accelerating research and development cycles. Such a model would learn the "rules" of chemistry from data about reactions rather than from reading every published paper.

Autonomous Systems: For self-driving cars or complex air traffic control, the ability to safely explore dangerous "what-if" scenarios internally—especially edge cases like sudden mechanical failure or unpredictable weather—is paramount. World Models provide the necessary sandbox for achieving higher safety standards.

Societal and Ethical Considerations

As AI systems become better simulators, their influence grows. If a powerful system can internally simulate complex societal outcomes—economic markets, political stability, or even the spread of misinformation—the implications are profound. Ensuring these models are aligned with human values and that their internal simulations are auditable (we must see why the AI chose a path) becomes a critical safety requirement.

Actionable Insights for the Technology Leader

The Dreamer Trilogy and its descendants show that specialized predictive simulation is a critical capability that complements the general knowledge held by LLMs.

- Invest in Latent Representation: Focus engineering efforts not just on the prediction mechanism, but on the quality of the compressed latent state. A poor internal map cripples even the best planning algorithm.

- Benchmark for Dynamics, Not Just Accuracy: When evaluating new RL or planning systems, measure performance on long-horizon tasks where errors compound. Success here is stronger evidence that the system has learned the underlying dynamics rather than short-term patterns.

- Prepare for Hybrid Architectures: Do not view LLMs and World Models as competitors, but as necessary partners. LLMs handle the 'what' (goals, language context), while precise World Models handle the 'how' (physical simulation, dynamic control).

We are moving into an era where AI agents don't just process data; they build internal cognitive maps of reality that allow them to effectively dream the future. The Dreamer lineage shows that this predictive power is attainable, setting the stage for truly autonomous intelligence.