The Great European AI Divide: Why Research Prowess Isn't Enough to Beat Silicon Valley

Europe has long been lauded as a powerhouse of scientific discovery. In the realm of Artificial Intelligence, this reputation remains intact: European researchers consistently produce world-class papers, secure top talent, and drive fundamental theoretical breakthroughs. However, recent sobering assessments, particularly from advisory bodies in Germany, reveal a stark reality: strong research is not translating into scaled, sovereign commercial deployment.

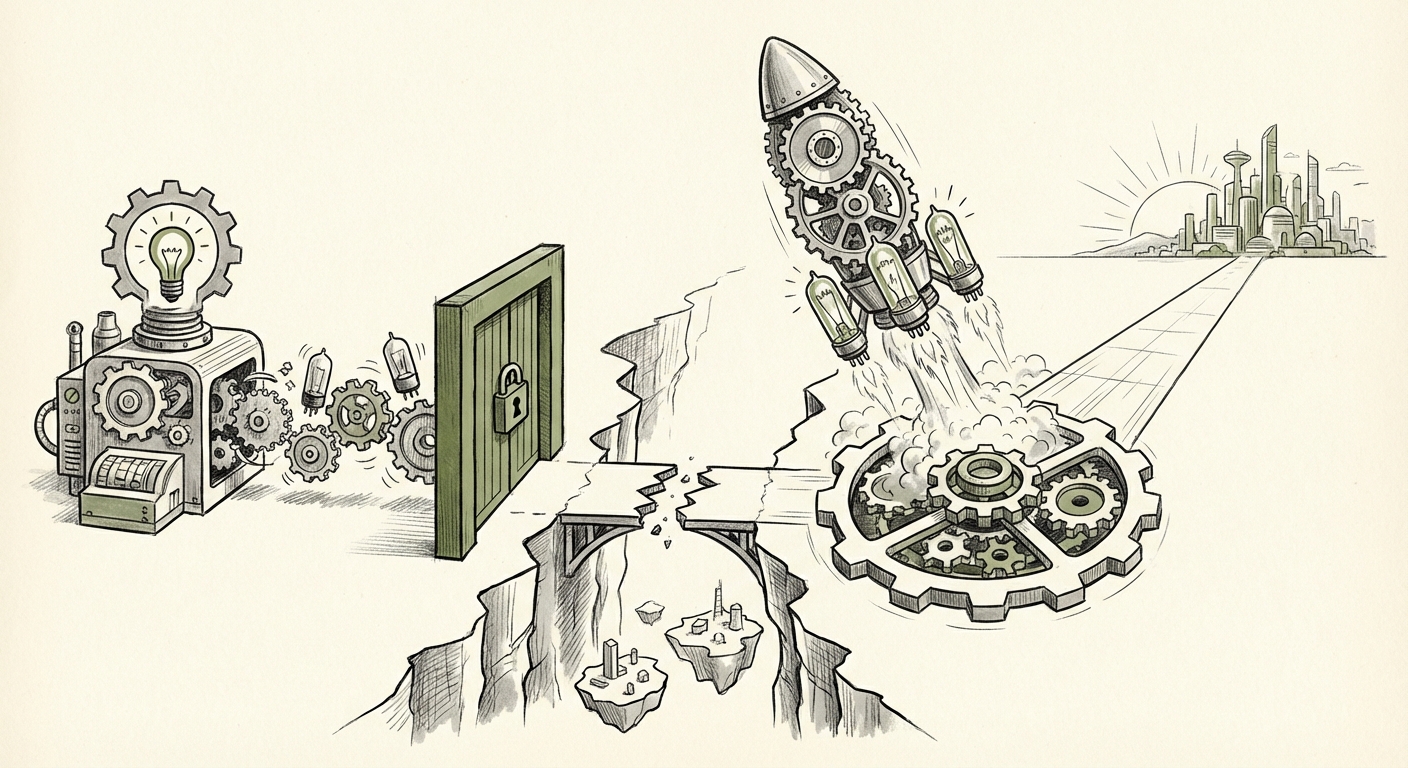

This gap—between brilliant ideas and market dominance—is the defining challenge for the European AI sector today. It creates a dependence on foreign technology and fundamentally shapes Europe's digital future. To understand where Europe stands, we must analyze the convergence of three critical bottlenecks: the compute chasm, regulatory misalignment, and market fragmentation.

The Compute Chasm: Why Training Frontier Models Requires Superpowers

The development of state-of-the-art Large Language Models (LLMs) and foundation models is no longer an academic exercise; it is an industrial arms race defined by access to processing power—specifically, high-end GPUs.

The German report highlights "too little compute capacity." This is not merely a shortage of standard cloud services; it refers to the requirement for massive clusters of specialized hardware, costing hundreds of millions, sometimes billions, of dollars, just to train a single frontier model. In contrast, US hyperscalers and major labs have access to infrastructure orders of magnitude larger.
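
To make the scale concrete, here is a back-of-envelope sketch of how cluster size drives training time. It relies on the widely used approximation that training a dense transformer takes roughly 6 × parameters × tokens floating-point operations. Every input (model size, token count, per-GPU throughput, utilization, cluster sizes) is a hypothetical placeholder chosen for illustration, not a figure from the German report or any specific lab, and the function names are invented for this example.

```python
# Back-of-envelope sketch: how cluster size changes training time.
# All inputs are hypothetical placeholders, not reported figures.

SECONDS_PER_DAY = 86_400


def training_flops(params: float, tokens: float) -> float:
    """Approximate training compute for a dense transformer (~6 * N * D)."""
    return 6.0 * params * tokens


def training_days(params, tokens, gpus, peak_flops_per_gpu, utilization):
    """Wall-clock days needed, assuming perfect scaling across GPUs."""
    effective_flops_per_second = gpus * peak_flops_per_gpu * utilization
    return training_flops(params, tokens) / effective_flops_per_second / SECONDS_PER_DAY


if __name__ == "__main__":
    # Hypothetical model: 100B parameters trained on 2T tokens.
    params, tokens = 100e9, 2e12
    # Hypothetical accelerator: 1e15 FLOP/s peak, 40% sustained utilization.
    peak, util = 1e15, 0.4
    for gpus in (1_000, 10_000, 100_000):
        days = training_days(params, tokens, gpus, peak, util)
        print(f"{gpus:>7} GPUs: about {days:,.1f} days")
```

Under these assumptions, each tenfold increase in cluster size cuts the run time tenfold. Real clusters scale less cleanly, but the sketch shows why a lab with an order of magnitude more hardware can iterate in days where a smaller one needs weeks.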

Quantifying the Digital Dependence

This hardware deficit forces European innovators into a difficult position. They can either license or fine-tune models developed by US or Chinese firms, thereby relinquishing control over the core technology, or they can attempt to build domestically, knowing their training runs will be slow, expensive, and potentially uncompetitive due to resource constraints. Analyses of the EU's AI compute infrastructure shortage consistently point to this hardware gap as the single greatest barrier to achieving AI sovereignty.

For the average citizen or small business owner, this means the most powerful, customized AI tools they use might not be governed by European values or data laws simply because the homegrown alternatives cannot be developed at scale. The implication is clear: Control over compute equals control over the future capabilities of AI.

Regulatory Friction: GDPR, Compliance Costs, and the US Advantage

Europe has rightfully positioned itself as a global leader in data privacy and user rights, largely codified through the General Data Protection Regulation (GDPR). While the regulation is noble in intent, recent criticisms suggest that its strictness, combined with fragmented national enforcement, disproportionately burdens startups trying to innovate locally.

The Compliance Overhead vs. Scale

For a fledgling European AI company, navigating GDPR, meeting data localization requirements, and ensuring ethical sourcing of training data add substantial legal and operational overhead from day one. Meanwhile, large US competitors, which already possess vast, globally sourced datasets and deep legal teams, can deploy their pre-trained models directly into the EU market under existing compliance frameworks. Commentary comparing GDPR's impact on European AI startups with the compliance position of US firms suggests that this asymmetry often gives US models a first-mover advantage in the EU, while local development stalls waiting for regulatory clarity or specialized data access.

The EU's intended answer is the AI Act. This landmark regulation aims to create a harmonized framework that classifies AI systems by risk. However, its complex compliance layers, especially for "high-risk" applications, create a new set of challenges. While the AI Act seeks safety and trust, analysts worry that the compliance burden might still be too heavy for small European players to manage, effectively institutionalizing the dominance of large, well-resourced foreign incumbents.

The Market Puzzle: Fragmentation Versus Hyper-Scale

The ambition of creating a true European Digital Single Market remains partially unfulfilled. The advisory body's call to open the "fragmented EU single market" underscores a critical economic reality: scaling a tech product across 27 different national regulatory landscapes, languages, and consumer expectations is far harder than scaling across the contiguous United States.

The "Scale or Sell" Dilemma

When European AI firms hit a growth ceiling, limited by compute and hampered by friction in cross-border market access, the primary exit strategy often becomes acquisition by a US giant. The result is a "brain drain" of talent and IP out of Europe. Research on digital single market fragmentation and startups frequently shows that without a unified, immediately accessible market of 450 million consumers, reachable with a single compliance passport, few European tech companies can reach the scale needed to justify the billion-dollar investments required for proprietary model training.

This fragmentation is not just commercial; it affects strategic sectors. As calls for radical reform of AI adoption in Germany's Bundeswehr show, even government procurement struggles. Defense and critical infrastructure sectors require secure, sovereign AI solutions, yet the fragmented market makes it difficult for any single European vendor to achieve the scale necessary to serve multiple member states efficiently.

What This Means for the Future of AI Deployment in Europe

The current trajectory suggests a future where Europe is a significant *consumer* of advanced AI, rather than a primary *producer*. This reliance carries serious implications across several dimensions:

1. Sovereignty and Trust

If foundational models are trained elsewhere, European governments, businesses, and citizens rely on the ethical guardrails, security protocols, and geopolitical alignments of foreign entities. For applications in law enforcement, healthcare, or defense, this dependence is strategically unacceptable. The future of European AI hinges on achieving *technological sovereignty*—the ability to control the core stacks upon which society runs.

2. Innovation Velocity

Speed is paramount in AI development. When European researchers must wait months for compute allocation or navigate six different national data governance regimes for one project, US or Asian competitors move years ahead. The future favors those who can iterate fastest, meaning compute investment must be treated as a national security imperative, not just a capital expenditure.

3. The Talent Pipeline

While Europe produces excellent researchers, the best job opportunities often lie where the biggest models are being built, which is where the largest compute clusters reside. Failing to provide competitive infrastructure risks pushing top AI talent out of the EU, toward Silicon Valley or London, further widening the domestic model-creation gap.

Actionable Insights: Pathways to Parity

The problems are systemic, so the solutions must be equally ambitious and coordinated across the EU bloc. The following recommendations follow from the three bottlenecks described above:

1. Commit to Sovereign Compute Infrastructure (The Euro-GPU)

The EU must treat compute infrastructure as an essential public utility, comparable to energy grids or major highways. This requires building on initiatives like EuroHPC, but with a sharper focus. Instead of aiming only for world-class supercomputing for pure science, a dedicated, fast-tracked fund should target the creation of **AI-specific compute clusters** accessible to certified European startups and academic consortia for model pre-training. Such a fund would bypass the standard, slow procurement cycles.

2. Regulatory "Fast Lanes" for High-Potential Local Models

While the AI Act is essential for risk mitigation, the EU needs to create regulatory sandboxes or "fast lanes" specifically designed to accelerate the training and testing of European foundation models. This would involve temporary exemptions or streamlined review processes for domestic developers working on models intended to uphold European values, allowing them to build up scale before being subjected to the full burden of compliance.

3. Enforce the Digital Single Market with AI in Mind

Policymakers must move beyond merely suggesting a unified market and enforce harmonization, particularly concerning data governance standards for *training* data. If a dataset is certified as compliant for model training in one member state, that certification should be automatically recognized across the bloc. This directly addresses the fragmentation that suffocates scale-up potential.

4. Prioritize Defense and Industrial AI Adoption

Governments, especially Germany's, must reform defense and procurement bureaucracies to accelerate the adoption of domestic AI solutions. By making significant multinational government contracts contingent on using European-trained and European-hosted models, the state creates the large-scale anchor demand needed to pull private compute investment into the region. This serves both national security and commercial viability.

The scientific community in Europe has done its job by proving the concept. Now, the political and investment communities must catch up. The future of European innovation hinges on translating brilliant blueprints into functioning, powerful infrastructure that can compete on the global stage.