The Grounded Oracle: Analyzing GPT-5.2's Real-Time Search and the Reliability Challenge in Next-Gen AI

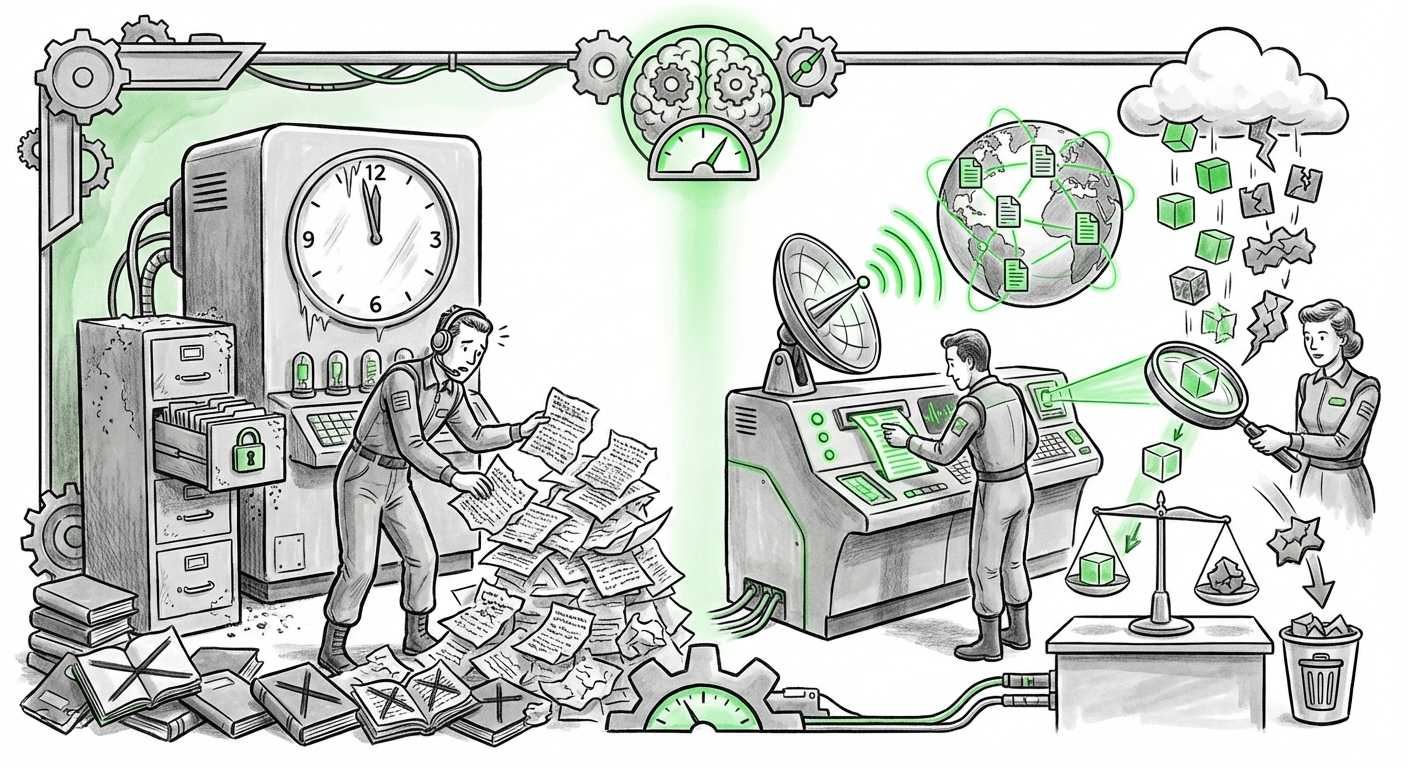

The artificial intelligence landscape is defined by relentless acceleration. Just as the industry absorbed the shift to large language models (LLMs) capable of sophisticated reasoning, we are witnessing the next major paradigm shift: **grounding**. Recent reports that OpenAI is powering its Deep Research features with an advanced model, speculated to be GPT-5.2, and equipping it with precise, targeted website search capabilities signal a move away from static knowledge silos toward dynamic, real-time information retrieval.

This development, which combines real-time tracking with the ability to search *specific* sources, is a powerful upgrade. It promises to transform AI from an educated predictor into an active, verifiable research assistant. However, as initial reports note, this increased capability does not automatically solve the oldest problem in generative AI: **reliability**. The core tension now lies between maximizing utility through immediacy and maintaining factual integrity.

1. The Technical Leap: From Static Memory to Dynamic Retrieval

For years, models like GPT-4 operated on a knowledge base frozen at the time of their last training cut-off. While impressive, this meant they were inherently historical documents. The integration of real-time, targeted search flips this dynamic, borrowing heavily from advancements in what we call **Retrieval-Augmented Generation (RAG)**.

Think of it this way: An older LLM was like a brilliant scholar who had read every book in a library up until 2023, but couldn't access the news from this morning. The new model, augmented with targeted search, is the same scholar, but now they have a digital assistant that can instantly retrieve the exact relevant page from a trusted journal or a specific regulatory filing as they speak.

The specific mention of "targeted website search" suggests a sophisticated RAG pipeline. Instead of simply browsing the general web, the system can be instructed to pull data only from, say, the SEC filings database, specific academic journals, or a company's internal documentation portal. This precision is key to unlocking high-stakes, professional applications. Ongoing developments in RAG architecture indicate that the industry is moving toward such narrowly scoped pipelines so that models draw on the most authoritative context available.
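To make the idea of source restriction concrete, here is a minimal sketch of a domain allowlist applied to retrieved results. It is not a description of how OpenAI implements targeted search, which the reports do not detail. The names `ALLOWED_DOMAINS`, `is_allowed`, and `filter_results`, the example domains, and the shape of each result (a dict with a `url` key) are all assumptions made for illustration.

```python
from urllib.parse import urlparse

# Hypothetical allowlist; the domains are illustrative only.
ALLOWED_DOMAINS = {"sec.gov", "europa.eu"}


def is_allowed(url: str, allowed: set[str] = ALLOWED_DOMAINS) -> bool:
    """Return True if the URL's host is an allowed domain or a subdomain of one."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in allowed)


def filter_results(results: list[dict], allowed: set[str] = ALLOWED_DOMAINS) -> list[dict]:
    """Keep only retrieved results whose 'url' passes the allowlist."""
    return [r for r in results if is_allowed(r["url"], allowed)]


results = [
    {"url": "https://www.sec.gov/cgi-bin/browse-edgar", "snippet": "10-K filing ..."},
    {"url": "https://example-blog.com/hot-take", "snippet": "Rumor ..."},
    {"url": "https://sec.gov.evil.example/fake", "snippet": "Spoofed ..."},
]
print([r["url"] for r in filter_results(results)])
# ['https://www.sec.gov/cgi-bin/browse-edgar']
```

Matching on the parsed hostname rather than on a substring of the URL is a deliberate choice here: a lookalike host such as `sec.gov.evil.example` contains the trusted name but is rejected.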

The Competitive Context

This development places OpenAI firmly in the real-time arena currently occupied by competitors. The ability to offer live, verifiable information is no longer a differentiator but a baseline requirement for advanced LLMs. Analyzing how rivals like Google Gemini integrate live Search data versus OpenAI’s proprietary, targeted approach helps define the new standard for what "next-generation" capabilities actually entail.

2. Practical Implications: Reshaping the Knowledge Worker's Workflow

For professionals in finance, legal services, engineering, or academic research, the ability to ask an AI to synthesize information from known, reliable sources represents a substantial efficiency gain.

Faster Synthesis, Deeper Vetting

The immediate practical implication is the drastic reduction in time spent on preliminary literature reviews and competitive landscape mapping. Instead of manually visiting twenty different websites or databases to piece together a market overview, the AI can be directed:

- "Search the last six months of press releases from these three semiconductor companies only."

- "Find the latest draft of the EU AI Act amendments specifically published on the European Commission’s portal."

This shifts the knowledge worker’s role from *information retrieval* to *information synthesis and critical judgment*. The AI handles the "where to look," allowing the human to focus on the "what does this all mean?" This accelerated workflow promises significant productivity uplifts, potentially redefining how quickly companies can respond to new data or regulatory changes.

The Rise of the Contextually Aware Tool

This capability heralds a move toward highly specialized AI tools. If a law firm can deploy a custom RAG pipeline that points only to its past case files and specific jurisdictional statutes, the research assistant becomes far more valuable and less prone to generic error. This level of **domain specificity** transforms AI from a general productivity tool into an indispensable, niche expert assistant.

3. The Unresolved Tension: Capability vs. Reliability

The excitement around real-time grounding must be tempered by the enduring challenge of **hallucination and factual reliability**. This is the critical factor that determines whether this technology is useful for drafting marketing copy or for performing mission-critical due diligence.

Why does increased searching not guarantee perfect reliability? Because the model still has to perform two potentially error-prone steps:

- Retrieval Success: Did the search correctly identify and retrieve the most relevant, up-to-date snippets from the target sites?

- Synthesis & Interpretation: Did the model correctly read, weigh, and synthesize those snippets? Models can still misinterpret context, favor newer (but less authoritative) information over older established facts, or misread nuanced technical documentation.

Reports and analyses across the AI community constantly highlight that while RAG helps constrain the model, it doesn't eliminate the risk of error. If two highly trusted websites offer slightly contradictory data points, the LLM, despite its superior access, still struggles with the ultimate arbitration of truth. The model is excellent at finding *what exists*, but still imperfect at determining *what is definitively true* when sources conflict.
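One conservative way to handle the conflicting-sources case is to surface the disagreement rather than let the model silently pick a winner. The sketch below assumes that claims have already been extracted from retrieved snippets as `(source, field, value)` tuples. That extraction step, the function name `find_conflicts`, and the sample figures are hypothetical and not drawn from any reported system.

```python
from collections import defaultdict


def find_conflicts(claims):
    """Group (source, field, value) claims by field and return only the
    fields for which sources report more than one distinct value."""
    by_field = defaultdict(dict)  # field -> {value: [sources]}
    for source, field, value in claims:
        by_field[field].setdefault(value, []).append(source)
    return {f: values for f, values in by_field.items() if len(values) > 1}


# Illustrative data only.
claims = [
    ("site-a.example", "q3_revenue_usd_m", 412),
    ("site-b.example", "q3_revenue_usd_m", 421),
    ("site-a.example", "ceo", "J. Doe"),
    ("site-b.example", "ceo", "J. Doe"),
]
conflicts = find_conflicts(claims)
print(conflicts)
# {'q3_revenue_usd_m': {412: ['site-a.example'], 421: ['site-b.example']}}
```

The function does not decide which value is true. It only marks where a human reviewer needs to arbitrate.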

For business adoption, this means that while research cycles are faster, the final human verification step remains mandatory, especially in regulated industries. Adopting this technology requires implementing rigorous auditing layers to check the AI’s sourced citations against the original documents. Ignoring this tension is a direct path to relying on sophisticated, real-time falsehoods.
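A basic auditing layer of this kind can be sketched as a check that each quoted passage actually appears in the document it is attributed to. The version below is a simplified illustration: the citation format (`source_id`, `quote`), the status labels, and the `audit_citations` function are assumptions. It verifies verbatim quotes only, so paraphrased claims would still need human review.

```python
import re


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so line breaks do not cause false mismatches."""
    return re.sub(r"\s+", " ", text).strip().lower()


def audit_citations(citations, documents):
    """Check each citation's quote against the original document text.

    citations: list of dicts with 'source_id' and 'quote' keys (hypothetical format)
    documents: dict mapping source_id -> full original text
    Returns a list of (source_id, status) pairs.
    """
    report = []
    for c in citations:
        doc = documents.get(c["source_id"])
        if doc is None:
            status = "source_missing"
        elif normalize(c["quote"]) in normalize(doc):
            status = "verified"
        else:
            status = "quote_not_found"
        report.append((c["source_id"], status))
    return report


documents = {"filing-1": "Net revenue increased 12%\n compared with the prior year."}
citations = [
    {"source_id": "filing-1", "quote": "net revenue increased 12% compared with the prior year"},
    {"source_id": "filing-1", "quote": "net revenue increased 21%"},
    {"source_id": "filing-9", "quote": "anything"},
]
for source_id, status in audit_citations(citations, documents):
    print(source_id, status)
# filing-1 verified
# filing-1 quote_not_found
# filing-9 source_missing
```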

Future Implications: AI as the Research Backbone

The trajectory set by this GPT-5.2-level feature indicates that future LLMs will be judged not on their size but on their connectivity and verifiability. The future of AI research rests on three core pillars:

A. Hyper-Personalization of Data Access

We will see enterprise platforms moving toward models that are perpetually connected to the user's entire corpus of trusted, proprietary data. This isn't just searching the internet; it's searching the documents, emails, and meeting transcripts specific to your team. This requires highly secure, private RAG implementations that rival the capabilities demonstrated by public models on the open web.

B. The Evolution of Citation Standards

As AI tools become standard, expectations for their citations will become as rigorous as those in academic publishing. We expect forthcoming standards, potentially driven by regulatory bodies or industry consortia, that dictate the format, depth, and provenance tracking required for AI-generated output based on retrieved data. If an AI cites a source, that citation must be instantly clickable and verifiable.
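No such standard exists yet, so the following is only a sketch of what a provenance record might carry: the source URL, the quoted text, a retrieval timestamp, and a hash of the page as it was retrieved. The `Citation` fields and the helpers `make_citation` and `page_unchanged` are hypothetical names chosen for illustration.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Citation:
    url: str             # where the content was retrieved from
    quoted_text: str     # the passage the answer relies on
    retrieved_at: str    # ISO 8601 timestamp of retrieval (UTC)
    content_sha256: str  # hash of the page text at retrieval time


def _sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def make_citation(url: str, page_text: str, quoted_text: str) -> Citation:
    """Build a provenance record for a quote taken from page_text."""
    timestamp = datetime.now(timezone.utc).isoformat()
    return Citation(url, quoted_text, timestamp, _sha256(page_text))


def page_unchanged(citation: Citation, current_page_text: str) -> bool:
    """Return True if the page still matches what was originally retrieved."""
    return _sha256(current_page_text) == citation.content_sha256


c = make_citation("https://example.org/report", "Revenue rose 12%.", "rose 12%")
print(page_unchanged(c, "Revenue rose 12%."))  # True
print(page_unchanged(c, "Revenue rose 21%."))  # False
```

Storing a content hash alongside the URL lets a reviewer detect when a cited page has changed since retrieval, which a clickable link alone cannot show.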

C. AI as a Cross-Domain Integrator

The real breakthrough will happen when the targeted search capability bridges disparate domains seamlessly. Imagine asking the AI to research the impact of new solar cell materials (Chemistry journal data) on the current market price of lithium (Financial data), sourced from specific industry reports. This cross-domain synthesis, powered by targeted, real-time grounding, is the ultimate destination for high-level AI assistance.

Actionable Insights for Adoption

For organizations looking to leverage these advanced capabilities responsibly, here are critical next steps:

- Audit Your Data Trust: Before deploying advanced web-grounding, clearly define which external sites are trustworthy for critical tasks. If you are relying on the model to search outside your controlled environment, establish a strict review protocol for output involving external claims.

- Invest in RAG Infrastructure: For internal enterprise use, focus development budgets on building robust, proprietary RAG pipelines. The real competitive advantage lies in linking the model to your unique, internal knowledge base, not just the public internet.

- Re-Skill for Verification: Train knowledge workers not just on how to prompt the AI, but how to *validate* its search results. Critical thinking shifts from finding information to verifying the AI's interpretation of that information.

The move to research capabilities reportedly enhanced by GPT-5.2 is not merely an incremental update; it is a statement of intent: LLMs must be anchored in present reality. The success of this shift will depend entirely on whether we can engineer systems that remain powerfully connected to current facts while retaining the intellectual honesty to admit when the facts are unclear or contradictory.