GPT-5.2 and Targeted Web Search: The New Frontier of Real-Time AI Research and Its Reliability Trap

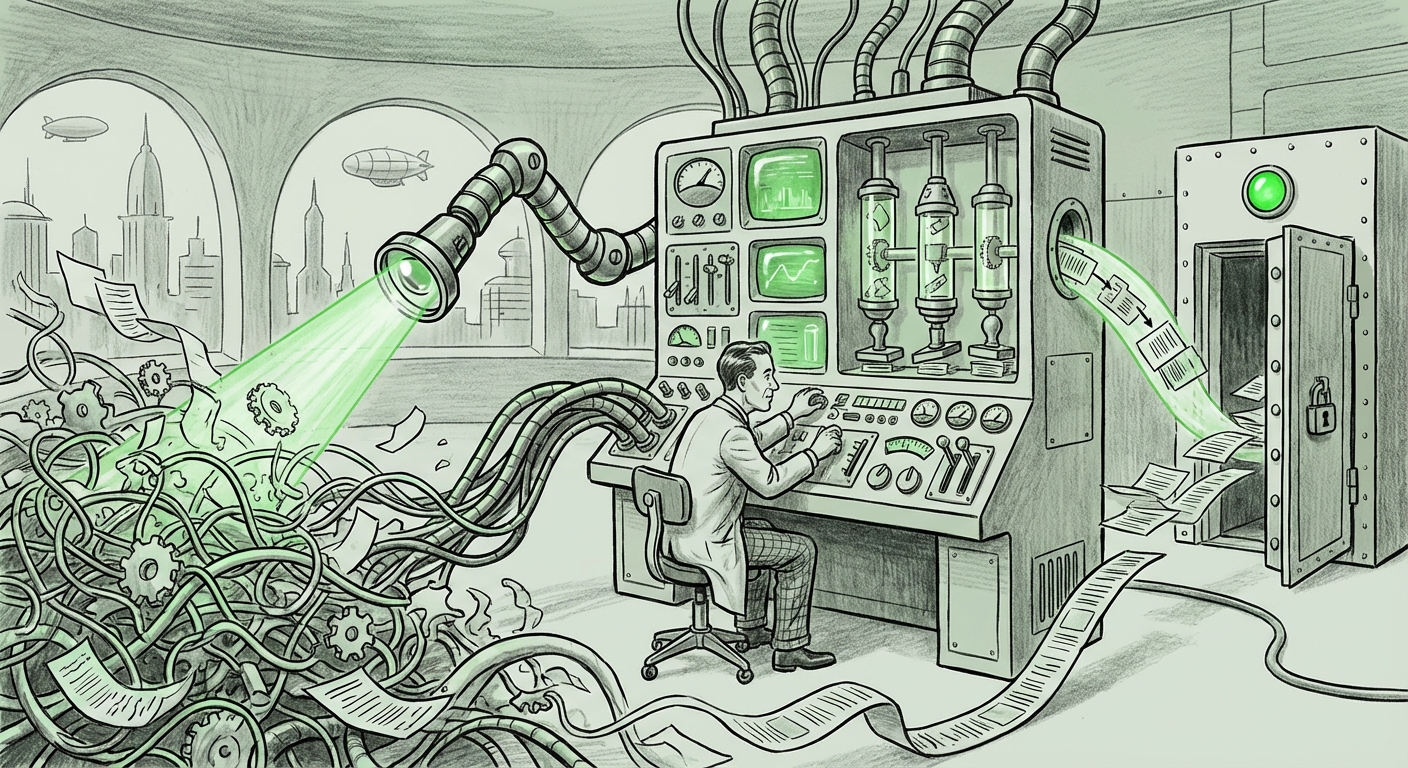

The evolution of Large Language Models (LLMs) is defined by a constant tug-of-war between expanding what they *know* and improving how they *find* what they don’t know. The recent integration of **GPT-5.2** into OpenAI’s "Deep Research" feature, which now supports targeted searches of specific websites and real-time tracking, marks a new phase in that contest. It pushes the LLM further toward acting as a dynamic researcher rather than a static knowledge base.

However, as the initial reports caution, broader access does not automatically mean more accurate answers. The core challenge is balancing the appeal of recency and reach against the persistent need for accuracy and verification. This development forces analysts and users alike to redefine what "good research" means when an AI can query the live web.

The Shift from Static Knowledge to Dynamic Retrieval

For early-generation models, research capabilities were bounded by the cutoff date of their training data. However knowledgeable they were about history, literature, and established science, they were blind to yesterday’s stock market moves or today’s regulatory filings. The web-browsing feature introduced in earlier versions offered a remedy, but it often felt clumsy, like asking a brilliant student to skim the internet at random for an answer.

GPT-5.2’s Deep Research capability signals a maturing of this web interaction. Targeted website search suggests a more sophisticated retrieval layer: instead of searching the web indiscriminately, the model can be directed to perform surgical strikes, such as "Analyze the latest shareholder letter published only on Company X’s Investor Relations page" or "Compare Q3 earnings reports found exclusively in SEC EDGAR filings."

This precision is transformative for professionals:

- Speed in Due Diligence: Financial analysts can compress days of manual document searching into minutes.

- Hyper-Focused Synthesis: Researchers can ground complex arguments in sources they have specifically dictated, reducing irrelevant noise.

- Real-Time Monitoring: The addition of real-time tracking means the AI can maintain a constant, specified watch over critical data streams.
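At its simplest, any "watch" over a source comes down to comparing what a page says now with what it said last time. The sketch below illustrates that idea with a content fingerprint. It is not how Deep Research implements tracking (the source does not describe that), the `ChangeTracker` class and its methods are hypothetical, and it operates on text you have already fetched, so no network access or OpenAI API is assumed.

```python
import hashlib


class ChangeTracker:
    """Hypothetical helper: remembers one SHA-256 fingerprint per URL."""

    def __init__(self) -> None:
        self._fingerprints: dict[str, str] = {}

    @staticmethod
    def _fingerprint(text: str) -> str:
        # Collapse runs of whitespace so trivial reflows do not count as changes.
        normalized = " ".join(text.split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def check(self, url: str, text: str) -> bool:
        """Return True only if this URL's content differs from the last snapshot."""
        new = self._fingerprint(text)
        old = self._fingerprints.get(url)
        self._fingerprints[url] = new
        # The first sighting only records a baseline; it is not reported as a change.
        return old is not None and old != new


tracker = ChangeTracker()
tracker.check("https://investors.example.com/news", "Q3 results pending")   # baseline
tracker.check("https://investors.example.com/news", "Q3   results pending")  # whitespace only
tracker.check("https://investors.example.com/news", "Q3 results posted")     # real change
```

Treating the first snapshot as a baseline rather than a change is a design choice: it keeps a newly added source from triggering an alert the moment monitoring starts.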

Understanding the Engine: GPT-5.2 and Performance Benchmarks

To appreciate this feature fully, we must look past the interface to the engine. Analysts assessing its real impact should look for corroborating evidence on the underlying model’s performance, because the key question is whether the new retrieval mechanisms actually improve reasoning or merely make retrieval faster. Benchmarks that compare GPT-5-series reasoning on tasks that depend on real-time data are the evidence to look for. If GPT-5.2’s core reasoning capacity is substantially stronger than its predecessors’, it can better integrate new, disparate real-time data into coherent, reliable conclusions.

If benchmarks show only incremental gains in reasoning, then the user’s burden of verification remains critically high, reinforcing the caveat that reliability is not guaranteed.

The Reliability Conundrum: Grounding LLMs in a Messy Web

The most compelling aspect of this update is the conflict it exposes: the web is vast, immediate, and often untrustworthy. That brings us squarely to the challenges of grounded LLMs and Retrieval-Augmented Generation (RAG).

In the context of AI research, a RAG system retrieves relevant passages from an external knowledge base (here, specific websites) and feeds them to the LLM as context before it generates a final answer. The output is "grounded" in that data. As technical analyses of grounded LLMs point out, the problem often lies not in the retrieval but in the interpretation and synthesis of what was retrieved.
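To make the retrieve-then-generate loop concrete, here is a minimal sketch. Keyword overlap stands in for a real search engine or vector index, the `Passage`, `retrieve`, and `build_grounded_prompt` names are hypothetical, and the model call itself is omitted because it depends on whichever API you use. Everything after the prompt is built, the interpretation and synthesis, happens inside the model, which is where the risk described above sits.

```python
import re
from dataclasses import dataclass


@dataclass
class Passage:
    url: str
    text: str


def _tokens(text: str) -> set[str]:
    # Lowercased word tokens: a crude stand-in for BM25 or embeddings.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: list[Passage], k: int = 2) -> list[Passage]:
    """Rank passages by the number of query words they share; keep the top k."""
    q = _tokens(query)
    scored = [(len(q & _tokens(p.text)), p) for p in corpus]
    scored = [item for item in scored if item[0] > 0]  # drop passages with no overlap
    scored.sort(key=lambda item: item[0], reverse=True)
    return [p for _, p in scored[:k]]


def build_grounded_prompt(query: str, passages: list[Passage]) -> str:
    """Put numbered sources ahead of the question and ask for cited answers."""
    sources = "\n".join(
        f"[{i}] ({p.url}) {p.text}" for i, p in enumerate(passages, start=1)
    )
    return (
        "Answer using only the sources below and cite them by number.\n"
        f"{sources}\n\nQuestion: {query}"
    )
```

The numbered-source format matters more than the scoring: it gives each claim in the answer something specific to point back to, which a human can then check.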

Imagine GPT-5.2 locates two conflicting reports about a corporate merger: an official press release on the company’s site and a slightly earlier post on an unofficial rumor site. The model might:

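- Prioritize the most recent source (good here, since the official release came later, but recency alone is not a reliable signal of authority).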

- Struggle to distinguish between primary (official filing) and secondary (news report citing the filing) sources.

- Misrepresent the *context* of a statement, even if the quote itself is accurate (citation accuracy failure).
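One way to guard against the first two failure modes is to rank by source authority before recency. The sketch below assumes each source has already been labeled with a hypothetical `kind` tier; the tiers, URLs, and dates are illustrative, not drawn from any real system. It shows how a newest-first rule would surface a late rumor post ahead of the official release.

```python
from dataclasses import dataclass
from datetime import datetime

# Hypothetical authority tiers: lower number means more trustworthy.
AUTHORITY = {"primary": 0, "secondary": 1, "unverified": 2}


@dataclass
class Source:
    url: str
    kind: str  # "primary", "secondary", or "unverified"
    published: datetime


def rank_sources(sources: list[Source]) -> list[Source]:
    """Order by authority tier first, then newest first within each tier."""
    newest_first = sorted(sources, key=lambda s: s.published, reverse=True)
    # Python's sort is stable, so newest-first order survives inside each tier.
    # Unknown kinds are treated as less trusted than every known tier.
    return sorted(newest_first, key=lambda s: AUTHORITY.get(s.kind, len(AUTHORITY)))


official = Source("https://companyx.example/press/merger", "primary", datetime(2024, 5, 2, 9))
news = Source("https://news.example/merger-story", "secondary", datetime(2024, 5, 2, 11))
rumor = Source("https://rumors.example/merger", "unverified", datetime(2024, 5, 3, 8))

ranked = rank_sources([rumor, news, official])  # official, then news, then rumor
```

Labeling sources as primary or secondary is the hard part and is assumed here; the ranking rule only helps once that judgment has been made, which is exactly where a human reviewer earns their place.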

For technical users and researchers, the tool is powerful, but the human must remain the final arbiter. The AI is now an exceptionally capable research assistant, but it is not yet a trusted primary investigator. It excels at shrinking the haystack, but a human still has to confirm that the needle it hands back is not just straw.

Actionable Insight for Technical Users: Define Your Trust Perimeter

Technical users should use the specificity of the new feature defensively. Instead of asking for a general summary, require the model to cite URLs only from sources that meet your internal verification standards (e.g., only `.gov` domains, established industry journals, or known primary sources). This shifts the approach from hoping the AI chooses well to *constraining* it to a pre-approved, trusted data perimeter. Because a prompt instruction is a request rather than a guarantee, check the cited URLs against that perimeter afterward as well.
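The after-the-fact check can be a few lines of standard-library code. This sketch assumes the perimeter is expressed as domain suffixes plus explicitly vetted hosts; the `ALLOWED_SUFFIXES` and `ALLOWED_HOSTS` values and the function names are placeholders to replace with your own policy.

```python
from urllib.parse import urlparse

# Placeholder perimeter: domain suffixes and exact hosts you have vetted.
ALLOWED_SUFFIXES = (".gov",)
ALLOWED_HOSTS = {"investors.example.com", "journal.example.org"}


def in_perimeter(url: str) -> bool:
    """True if the URL's host is explicitly allowed or ends with an allowed suffix."""
    host = (urlparse(url).hostname or "").lower()
    if not host:
        return False  # unparseable or scheme-less input is rejected, not guessed at
    return host in ALLOWED_HOSTS or host.endswith(ALLOWED_SUFFIXES)


def audit_citations(urls: list[str]) -> list[str]:
    """Return every cited URL that falls outside the perimeter."""
    return [u for u in urls if not in_perimeter(u)]
```

Matching on the parsed hostname rather than searching the raw string matters: a look-alike such as `https://www.sec.gov.attacker.example/` contains ".gov" but does not end with it, so it is rejected.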

Implications for the Future of Knowledge Work and Academia

The evolution of AI research tools has profound ripple effects across knowledge-based industries. We are moving past the era where AI democratized basic knowledge access; we are entering the era where AI hyper-specializes in *niche, timely data retrieval*.

For business strategists and knowledge workers, this means the competitive advantage shifts from simply *having* AI tools to mastering the *prompt engineering* that directs those tools to proprietary or hard-to-find information. If a competitor is still relying on month-old reports, the firm using GPT-5.2’s real-time tracking on competitor patents or supply chain announcements gains an immediate, tangible edge.

The Academic Crossroads

Nowhere is this shift more palpable than in academia, which makes the question of what it means for the future of academic research a timely one. Historically, academic rigor demanded deep dives into curated library databases and peer-reviewed journals: a slow, deliberate process designed to filter out low-quality information.

GPT-5.2 challenges this methodology by offering instant access to primary web data. Will graduate students abandon established databases for real-time web scraping guided by an LLM? Perhaps. But if they do, the standards of scholarship must evolve rapidly to value real-time synthesis alongside traditional empirical verification. We are heading toward a hybrid model where LLMs are used for rapid hypothesis generation and preliminary source identification, followed by traditional methods for rigorous validation.

The Transparency Imperative: What OpenAI Needs to Reveal

For this feature to truly revolutionize research rather than merely accelerate confusion, the underlying technology must embrace radical transparency. Users need to know not just *what* the model found but *how* it decided where to search.

Future iterations of these tools must provide:

- Granular Citation Trails: Not just a list of sources used, but which specific section of the source was used to support which part of the generated conclusion.

- Source Authority Scores: An internal mechanism (even if proprietary) that rates each retrieved source’s presumed trustworthiness based on domain reputation, age, and known bias.

- Retrieval Logic: A simple log explaining why the model chose Website A over Website B when both were potentially relevant to the query.
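Until vendors expose such trails, teams can ask for them in a structured form and check them. The sketch below is a hypothetical schema, not any OpenAI output format: each claim carries citations with a section and an exact excerpt, plus a free-text note on why the source was chosen. The checker flags claims with no citation or with an excerpt that does not appear in the fetched source text.

```python
from dataclasses import dataclass, field


@dataclass
class Citation:
    url: str
    section: str  # a heading or page reference within the source
    excerpt: str  # the exact text the claim relies on


@dataclass
class Claim:
    text: str
    citations: list[Citation] = field(default_factory=list)
    retrieval_note: str = ""  # why this source was chosen over alternatives


def unsupported_claims(claims: list[Claim], sources: dict[str, str]) -> list[str]:
    """Flag claims with no citation, or whose excerpt is missing from the cited source."""
    flagged = []
    for claim in claims:
        if not claim.citations:
            flagged.append(claim.text)
            continue
        for cite in claim.citations:
            source_text = sources.get(cite.url, "")
            # An empty excerpt would trivially "match", so it counts as unsupported.
            if not cite.excerpt or cite.excerpt not in source_text:
                flagged.append(claim.text)
                break
    return flagged
```

Exact substring matching is deliberately strict: a paraphrased excerpt gets flagged for human review instead of passing silently, which errs on the side the section argues for.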

Without this transparency, "Deep Research" becomes a sophisticated black box: efficient, perhaps, but fundamentally untrustworthy for high-stakes decision-making.

Actionable Takeaways for Adopting this Technology

The integration of GPT-5.2’s targeted search is not just an incremental update; it’s a statement about the future role of AI in information acquisition. Here are concrete steps for leaders and knowledge workers:

- Treat It as a Filter, Not a Final Answer: Understand that the primary value is rapidly narrowing the search space. Use the tool to reduce millions of potential documents down to a shortlist of 5-10 hyper-relevant documents.

- Develop Source Policy: For mission-critical tasks, create a clear list of allowed and disallowed domains for AI retrieval. If you are researching legal compliance, the AI should be constrained to official legislative websites and established law firm publications only.

- Mandate Dual Verification: Implement a workflow where any critical data retrieved by the AI must be manually confirmed by a human expert against the original source URL before action is taken. This blends AI speed with human accountability.

- Invest in LLM Literacy: Train employees not just on how to prompt the tool, but on the principles of RAG, hallucination, and source bias. Understanding the mechanism reduces over-reliance.
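The "Mandate Dual Verification" step can be enforced as a simple gate in whatever workflow tool a team already uses. In this hypothetical sketch, each AI-retrieved finding records whether it is critical and who, if anyone, has confirmed it against the original URL; nothing proceeds while a critical finding is unconfirmed. The `Finding` fields and function names are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Finding:
    summary: str
    source_url: str
    critical: bool
    verified_by: Optional[str] = None  # the human who checked the original source


def blocking_findings(findings: list[Finding]) -> list[Finding]:
    """Critical findings that no human has yet confirmed against the source."""
    return [f for f in findings if f.critical and not f.verified_by]


def ready_for_action(findings: list[Finding]) -> bool:
    """Allow action only when every critical finding has a named human verifier."""
    return not blocking_findings(findings)
```

Recording a name rather than a yes/no flag keeps the accountability the step calls for: every critical fact can be traced to the person who confirmed it.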

Conclusion: The Researcher's New Responsibility

GPT-5.2 in Deep Research mode is a powerful magnification tool for human intellect. It promises to compress the time between needing obscure, up-to-the-minute data and synthesizing that data into actionable intelligence. This capability is likely to accelerate innovation across finance, engineering, and media.

Yet, the analyst’s caution remains the defining lesson of this moment: Speed and specificity are not synonyms for truth. The future of effective AI utilization hinges less on the raw power of the model and more on the rigor of the human directing it. The new responsibility of the AI-empowered researcher is not just to ask questions, but to meticulously manage the source list, demand transparency, and always, always verify the ground upon which the AI builds its conclusions.