The End of Static AI: Why Mid-Cycle LLM Updates Signal the Service Revolution

The world of Artificial Intelligence, particularly large language models (LLMs), has historically operated on a predictable, almost ritual cycle: a massive foundational model is announced, engineers spend months integrating it, and then, months later, the next clearly labeled version arrives. This system, the monolithic release, is now facing a fundamental challenge.

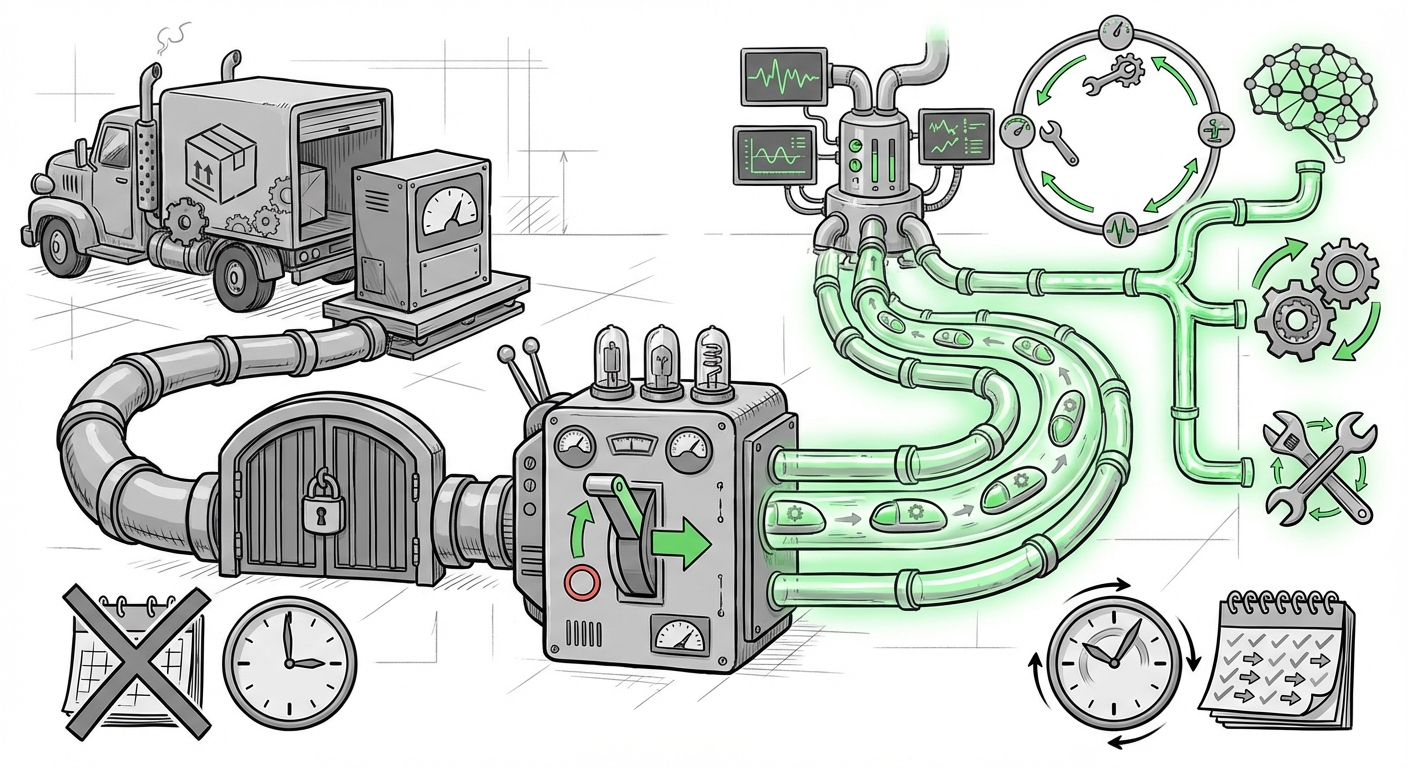

Recent reports confirming that OpenAI is pushing "mid-cycle" improvements to models like GPT-5.2, instantly tweaking response style and quality across both ChatGPT and the API, signal more than just a minor patch. This is the digital equivalent of an engine tune-up happening while the car is still driving down the highway. It confirms that the industry is transitioning LLMs from being static software products to dynamic, continuously refined services. This shift has profound implications for everyone, from the engineers writing code to the executives setting budgets.

The Shift from Product to Service: What Does 'Mid-Cycle' Mean?

For years, when OpenAI released a model such as GPT-4, that release was the benchmark. If you wanted better logic or less boilerplate in the responses, you had to wait for GPT-5. The updates were major, separated by significant time gaps, and usually required developers to re-test entire application flows.

OpenAI’s introduction of swift, iterative fixes for GPT-5.2 changes this cadence entirely. Think of it this way:

- Old Way (Product): Buying a software CD. If the CD had a bug, you waited for a new, labeled CD (e.g., Version 2.0) to fix it.

- New Way (Service): Subscribing to a streaming service (like Netflix). The content delivery system is constantly updated in the background to improve your experience without you needing to download a new version.

When OpenAI announces an update improving "response style and quality" mid-cycle, it means their internal engineering and deployment pipelines are sophisticated enough to identify specific areas of weakness—perhaps the model became too verbose, or its tone shifted inappropriately—and deploy micro-adjustments without needing a full architectural overhaul. This requires robust infrastructure capable of rapid, safe iteration.

The Engineering Backbone: MLOps Meets CI/CD

This level of agility is not accidental; it is a direct result of maturation in Machine Learning Operations (MLOps). CI/CD for large language models has become a discipline in its own right, and major players have been racing to build these capabilities. Deploying a change to a few lines of code is simple; deploying a change to a model with billions or even trillions of parameters while maintaining reliability is monumentally complex.

For the AI infrastructure architect, this means:

- Advanced Monitoring: They must have real-time systems tracking response quality, latency, and toxicity across millions of queries to pinpoint exactly where a style degradation occurred.

- A/B Testing at Scale: Every small update must be rigorously tested against a control group to ensure the "improvement" doesn't inadvertently break performance for another segment of users.

- Immutable Versioning: While the service endpoint (like gpt-5.2-instant) might remain stable, the underlying weights are constantly shifting. This necessitates meticulous tracking of which exact sub-version is currently active.
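The A/B testing requirement above can be made concrete with a small statistical check. The sketch below is one plausible approach, not a description of any lab's actual pipeline: it assumes each user segment has a count of "acceptable" replies for the control and candidate models, and it uses a two-proportion z-test to flag segments where the candidate is significantly worse. The segment names and counts are invented for illustration.

```python
import math


def two_proportion_z_test(success_a, total_a, success_b, total_b):
    """Return (z, two-sided p-value) for the difference in success rates (b - a)."""
    p_a = success_a / total_a
    p_b = success_b / total_b
    pooled = (success_a + success_b) / (total_a + total_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if se == 0:
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def regressed_segments(control, candidate, alpha=0.05):
    """List segments where the candidate is significantly worse than control."""
    flagged = []
    for segment, (c_ok, c_n) in control.items():
        v_ok, v_n = candidate[segment]
        z, p = two_proportion_z_test(c_ok, c_n, v_ok, v_n)
        if z < 0 and p < alpha:
            flagged.append(segment)
    return flagged


# Hypothetical counts: (acceptable replies, total replies) per segment.
control = {"support": (900, 1000), "coding": (850, 1000)}
candidate = {"support": (920, 1000), "coding": (780, 1000)}

print(regressed_segments(control, candidate))
```

With these made-up numbers the candidate looks slightly better for support traffic but clearly worse for coding traffic, which is exactly the kind of segment-level regression an aggregate score would hide.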

This engineering maturity is the quiet revolution underpinning the visible user-facing improvement. It’s the hard work of building robust Model Lifecycle Management that allows for this instant gratification.

Competitive Pressures Force Agility

OpenAI does not operate in a vacuum. The rapid pace of iteration seen here is often a direct response to competitive friction. By implementing these quick fixes, they maintain a clear advantage in perceived quality and responsiveness.

Looking at the competitive landscape, including Anthropic's Claude and Google's Gemini, we see rivals engaged in a perpetual game of catch-up. If a user finds that Claude 3.5 Sonnet is superior at creative writing for three weeks, but OpenAI can patch GPT-5.2's style issues in three days, the user experience gap closes almost immediately. This competitive environment compels major labs to move beyond the scheduled "big bang" release.

For technology analysts and strategists, this means that performance metrics are no longer measured quarterly; they are measured weekly, sometimes daily. The value proposition shifts from owning the "best model" on paper to owning the "most consistently well-tuned service" in production.

The Developer Dilemma: Stability vs. State-of-the-Art

While end-users enjoy better responses immediately, the developers integrating these models into their applications face a crucial balancing act. This leads directly to concerns about API stability and versioning.

If my company built a complex customer support chatbot based on GPT-5.2 last month, and today OpenAI subtly changed how the model interprets conditional logic during a style update, my chatbot might start mishandling edge cases it handled perfectly yesterday. This is the inherent risk of depending on a service that is continuously retuned.
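One way a team might notice this kind of silent shift is to score each production reply (for example, 1.0 if it passed validation and 0.0 if it did not) and compare a rolling window of recent scores against a baseline. The following is a minimal sketch under that assumption; the class name, window size, and tolerance are illustrative choices, not an established standard.

```python
from collections import deque
from statistics import mean


class QualityDriftMonitor:
    """Compare a rolling window of recent scores against a fixed baseline."""

    def __init__(self, baseline_scores, window=50, tolerance=0.05):
        self.baseline = mean(baseline_scores)
        self.recent = deque(maxlen=window)
        self.tolerance = tolerance

    def record(self, score):
        """Add the quality score of one production reply."""
        self.recent.append(score)

    def drifted(self):
        """True if the recent average fell below baseline by more than tolerance."""
        # Wait until the window is full so a few bad replies do not trigger an alert.
        if len(self.recent) < self.recent.maxlen:
            return False
        return self.baseline - mean(self.recent) > self.tolerance


# Hypothetical baseline: 95% of replies passed validation last month.
monitor = QualityDriftMonitor([1.0] * 95 + [0.0] * 5, window=10)
for score in [1.0] * 10:
    monitor.record(score)
print(monitor.drifted())  # still at or above baseline

for score in [0.0] * 5:
    monitor.record(score)
print(monitor.drifted())  # half of the recent window now fails
```

A fixed baseline is the simplest design; a team with seasonal traffic might prefer to refresh the baseline on a schedule instead.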

Businesses relying on LLM APIs must now adopt software development principles that account for "drift." Developers can no longer afford to treat the model API as a static library. Instead, they must:

- Embrace Strict Version Pinning: If possible, pin to a stable version that meets requirements, and upgrade only after regression tests confirm that the newer version does not degrade performance.

- Intensive Regression Testing: Integrate comprehensive prompt suites into automated testing pipelines that run *every time* a new model iteration is deployed, even if it’s a mid-cycle patch.

- Leverage "Fixed" Endpoints: If OpenAI offers an option to lock into a specific, time-stamped snapshot of the model weights (e.g., gpt-5.2-20240915), developers should use it for mission-critical stability, accepting that they will miss the very latest style tweak.
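The pinning and regression-testing advice above can be combined into a small harness. This is a sketch, not a reference implementation: the snapshot name is the illustrative one from the text, `call_model` is a hypothetical stand-in for whatever API client an application uses, and the two prompt cases are invented.

```python
from dataclasses import dataclass
from typing import Callable, List

# Illustrative snapshot name from the text; assumed, not a confirmed identifier.
PINNED_MODEL = "gpt-5.2-20240915"


@dataclass
class PromptCase:
    name: str
    prompt: str
    check: Callable[[str], bool]  # returns True if the reply is acceptable


def run_suite(call_model: Callable[[str, str], str], model: str,
              cases: List[PromptCase]) -> List[str]:
    """Run every case against `model` and return the names of failing cases."""
    failures = []
    for case in cases:
        reply = call_model(model, case.prompt)
        if not case.check(reply):
            failures.append(case.name)
    return failures


CASES = [
    PromptCase("refund_mentions_30_days",
               "How long do I have to request a refund?",
               lambda r: "30 days" in r),
    PromptCase("reply_is_concise",
               "Summarize our return policy in one sentence.",
               lambda r: len(r.split()) <= 40),
]


def fake_call_model(model: str, prompt: str) -> str:
    """Offline stand-in for a real API client so the sketch runs anywhere."""
    return "You can request a refund within 30 days of purchase."


failures = run_suite(fake_call_model, PINNED_MODEL, CASES)
print("failing cases:", failures)
```

In a CI pipeline, the same suite could be run once against the pinned snapshot and once against the floating endpoint, with the build failing if the second run introduces failures the first does not have.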

The excitement of faster quality improvements must be tempered by the practical need for predictable behavior in production systems. The industry is simultaneously pushing for agility and demanding reliability.

Future Implications: The Inevitable Supremacy of the Service Model

The most significant long-term takeaway stems from analyzing LLMs as real-time services rather than static products. This trend points toward a future where the underlying model architecture matters less than the continuous refinement applied to it.

When we ask what happens next, the answer is clear: the concept of a discrete "Model Release" will fade.

Imagine future AI not as a downloadable file, but as an omnipresent utility, like electricity. You don't ask if you're using the 2024 version of the power grid; you simply plug in and expect a consistent voltage. Similarly, users will expect their AI to always be the best version available right now.

This model has several future implications:

- Economic Shift: Pricing will pivot entirely toward usage and quality-of-service tiers, rather than fixed licensing fees. You pay for the responsiveness and the accuracy level you require *at that moment*.

- Agentic Systems Emerge: This continuous pipeline is necessary for sophisticated AI Agents that need to learn from new data or user feedback and update their behavior *autonomously* without human intervention between major versions.

- Hyper-Personalization: Fine-tuning will become less about sending a static dataset and more about continuous feedback loops where the model refines its style to match individual user preferences in real time.

Actionable Insights for Businesses and Developers

This moment demands strategic adaptation, not just technical excitement. Here is how stakeholders should react to the reality of continuously updated LLMs:

For Business Leaders and Strategists:

- Re-evaluate Vendor Lock-in: If your core business logic relies on subtle behaviors that change weekly, switching providers becomes riskier, not easier. Ensure your contracts and usage patterns account for non-static dependencies.

- Prioritize Observability Budgets: Allocate significant resources toward monitoring the *quality* of AI outputs, not just uptime. A system that is 99.99% available but provides consistently mediocre answers is failing.

- Embrace Rapid Prototyping: Since quality improvements are deployed instantly, teams should test new application ideas against the newest endpoints faster. The friction between developing an idea and deploying the improved tech has dramatically decreased.

For Developers and Engineers:

- Build Guardrails, Not Just Prompts: Because prompts can break unexpectedly, your application logic must contain robust validation layers to catch nonsensical or stylistically incorrect outputs before they reach the end-user.

- Automate "Negative Testing": Dedicate a portion of your QA cycle to running tests designed to replicate old, problematic prompts to ensure the new mid-cycle update hasn't brought back previous failures.

- Understand the "Instant" Endpoint: If you see an endpoint labeled "Instant" or similar, assume it is the bleeding edge, subject to change. Reserve these for exploratory work, and rely on explicitly dated versions for production stability whenever possible.
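The guardrail idea in the list above can be sketched as a validation layer that sits between the model and the end-user. The example assumes the application asks the model for a JSON object with `answer` and `confidence` fields; that schema, the length limit, and the fallback message are all hypothetical choices made for illustration.

```python
import json

REQUIRED_KEYS = {"answer", "confidence"}  # hypothetical reply schema
MAX_ANSWER_CHARS = 600                    # hypothetical length limit
FALLBACK = {
    "answer": "Sorry, I could not process that. A human agent will follow up.",
    "confidence": 0.0,
}


def validate_reply(raw: str) -> dict:
    """Parse and validate a model reply; return FALLBACK if anything looks off."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return FALLBACK
    if not isinstance(data, dict) or not REQUIRED_KEYS.issubset(data):
        return FALLBACK
    answer, confidence = data["answer"], data["confidence"]
    if not isinstance(answer, str) or not answer.strip():
        return FALLBACK
    if len(answer) > MAX_ANSWER_CHARS:
        return FALLBACK
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        return FALLBACK
    if not 0 <= confidence <= 1:
        return FALLBACK
    return data


good = '{"answer": "Refunds are available within 30 days.", "confidence": 0.9}'
print(validate_reply(good)["answer"])
print(validate_reply("Sure! Here is your answer...")["answer"])
```

Returning a safe fallback instead of raising keeps the user-facing flow predictable even when a mid-cycle update changes the shape or tone of the model's output.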

The move to mid-cycle updates is a sign of true industrialization in AI. It means the days of waiting for the next major version breakthrough are over. The breakthrough is now continuous. We are no longer subscribing to static intelligence; we are plugging into a living, evolving digital mind.