The Great AI Consolidation: Why xAI's Restructuring Signals a New Era for Frontier Models

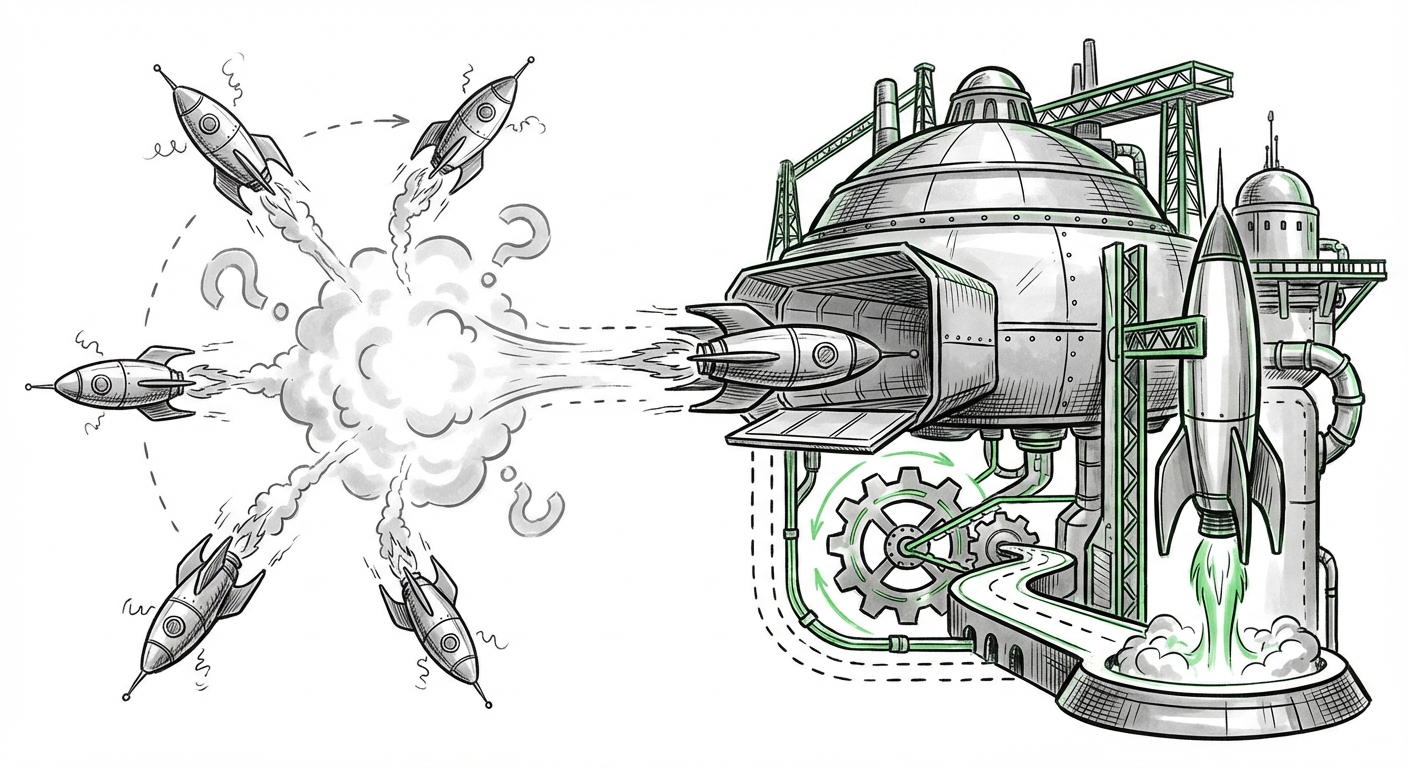

The pace of innovation in artificial intelligence is dizzying, but the recent turmoil at Elon Musk’s xAI (the departure of key co-founders, reports of financial strain, and a potential absorption into SpaceX) amounts to more than an internal personnel shift. It represents a critical inflection point in the broader AI development landscape, suggesting a necessary, and perhaps inevitable, transition from speculative, cash-burning foundational research to **applied, mission-critical AI integration.**

For technologists, investors, and business leaders alike, understanding this pivot is crucial. It forces us to ask: Is the age of the independent, general-purpose AI startup over? What does this mean for the future utility of models like Grok, and where will the next great leaps in AI occur?

The Financial Reality Check: AI's Capital Barrier

Building a Large Language Model (LLM) capable of challenging OpenAI’s GPT series or Anthropic’s Claude models is staggeringly expensive. It requires access to vast proprietary datasets, immense computational power (often hundreds of millions of dollars in specialized hardware), and the salaries of world-class researchers. The initial rush of enthusiasm following the ChatGPT launch led to an investment frenzy that funded nearly every competent team with a solid roadmap.
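To make the cost claim more concrete, here is a rough sketch of the arithmetic for a single training run. It uses the widely cited approximation that training a dense transformer takes about 6 × N × D floating-point operations (N parameters, D training tokens). Every input (model size, token count, per-GPU throughput, utilization, and hourly price) is a hypothetical placeholder chosen for illustration, not a figure for Grok or any other real model, and the function names are our own.

```python
# Back-of-envelope training cost using the common ~6 * N * D FLOPs approximation
# for dense transformer training (N = parameters, D = training tokens).
# All inputs below are hypothetical placeholders, not figures for any real model.


def training_flops(params: float, tokens: float) -> float:
    """Approximate total training FLOPs (~6 FLOPs per parameter per token)."""
    return 6.0 * params * tokens


def training_cost_usd(
    params: float,
    tokens: float,
    peak_flops_per_gpu: float,
    utilization: float,
    usd_per_gpu_hour: float,
):
    """Return (gpu_hours, cost_usd) for one run under the given assumptions."""
    effective_flops = peak_flops_per_gpu * utilization  # sustained FLOP/s per GPU
    gpu_seconds = training_flops(params, tokens) / effective_flops
    gpu_hours = gpu_seconds / 3600.0
    return gpu_hours, gpu_hours * usd_per_gpu_hour


if __name__ == "__main__":
    # Hypothetical run: 400B parameters, 15T tokens,
    # 1e15 FLOP/s peak per GPU at 40% utilization, $2 per GPU-hour.
    hours, cost = training_cost_usd(400e9, 15e12, 1e15, 0.40, 2.0)
    print(f"GPU-hours: {hours:,.0f}")      # GPU-hours: 25,000,000
    print(f"Compute cost: ${cost:,.0f}")   # Compute cost: $50,000,000
```

Under these made-up assumptions, one final training run costs on the order of $50 million in rented compute alone. That excludes buying hardware outright, the many smaller experimental runs that precede a final run, data acquisition, and researcher salaries, all of which push total budgets higher.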

However, the shine is wearing off for investors who expected quick returns from generalized chat interfaces. Market dynamics are shifting in ways that match the challenges reportedly facing xAI, and the broader economic environment points toward an **"AI funding crunch"** for companies without immediate, concrete applications.

What this suggests for the industry is a widening chasm:

- The Titans (Google, Microsoft, Meta): They possess the balance sheets and existing infrastructure (cloud, data centers) to continue funding frontier research indefinitely, even if the immediate payoff is unclear.

- The Vertically Integrated Players: Companies that use AI to solve specific, high-value problems—like Tesla with autonomous driving or, potentially, SpaceX with rocketry—can justify the R&D costs through operational gains.

- The Squeezed Middle: Independent startups, unless they secure massive, multi-billion-dollar funding rounds that few investors can afford to write, are increasingly becoming acquisition targets or running out of runway. The high cost of compute means that revenue must follow innovation much faster than previously anticipated.

For the average business leader, this means that relying on the major cloud providers for foundational models is becoming the norm. The dream of swapping one foundational model for another as easily as switching SaaS vendors is fading; the cost structure entrenches incumbents.

Performance Anxiety: Is Grok Technologically Competitive?

Beyond the money, a foundational model must deliver results. The core value proposition of xAI was to create a powerful, open, and slightly irreverent competitor to the established LLMs. However, persistent reports suggest that the resulting model, Grok, has struggled to maintain parity with industry leaders like GPT-4 and Claude 3.

To judge xAI's viability, we must look at independent performance metrics. When evaluating LLMs, benchmarks like MMLU (which tests knowledge across dozens of academic and professional subjects) and coding tests are widely used indicators of knowledge and reasoning capability. If objective data confirms that Grok lags significantly in complex reasoning tasks, that severely undercuts its ability to attract top-tier research talent or enterprise contracts.
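Headline benchmark numbers are simple aggregates. MMLU, for example, is a four-option multiple-choice test spanning 57 subjects, and results are usually reported as accuracy. The sketch below shows one way such a score could be computed from already-graded answers, reporting both the overall (micro) average and the per-subject (macro) average. The function name and sample data are hypothetical, and this is not the official evaluation harness; published leaderboards also differ in prompting (for example, zero-shot versus few-shot) and in how they average, so scores are only comparable when produced under the same setup.

```python
from collections import defaultdict


def score_multiple_choice(items):
    """Score graded multiple-choice answers.

    items: iterable of (subject, predicted_letter, correct_letter) tuples.
    Returns (micro_accuracy, macro_accuracy, per_subject_accuracy).
    """
    counts = defaultdict(lambda: [0, 0])  # subject -> [num_correct, num_total]
    for subject, predicted, correct in items:
        tally = counts[subject]
        # Normalize case and whitespace so " a" and "A" count as the same answer.
        tally[0] += int(predicted.strip().upper() == correct.strip().upper())
        tally[1] += 1
    if not counts:
        raise ValueError("no items to score")

    per_subject = {s: right / total for s, (right, total) in counts.items()}
    # Micro average: every question carries equal weight.
    micro = sum(r for r, _ in counts.values()) / sum(t for _, t in counts.values())
    # Macro average: every subject carries equal weight, regardless of size.
    macro = sum(per_subject.values()) / len(per_subject)
    return micro, macro, per_subject


if __name__ == "__main__":
    # Hypothetical graded answers for two subjects.
    graded = [
        ("algebra", "A", "A"),
        ("algebra", "B", "C"),
        ("law", "D", "D"),
        ("law", "D", "D"),
        ("law", "A", "B"),
    ]
    micro, macro, per_subject = score_multiple_choice(graded)
    print(f"micro={micro:.3f} macro={macro:.3f}")  # micro=0.600 macro=0.583
```

The two averages diverge whenever subjects have different numbers of questions, which is one reason the same model can appear with slightly different scores in different reports.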

If Grok is indeed lagging, the departure of co-founder Tony Wu and others becomes less about Musk’s management style and more about a fundamental technical challenge: catching up to research efforts that have been running at peak capacity for years.

The Implications for Model Diversity

A healthy AI ecosystem requires diverse models that showcase different architectural strengths and philosophical approaches. If one major contender falters, either by failing to reach parity or by being folded into another entity, it reduces the competitive pressure that drives innovation across the board. For engineers, this means fewer viable alternatives when designing specialized applications, potentially leading to vendor lock-in with the dominant models.

For society, diversity matters for bias mitigation, because different training philosophies lead to different model behaviors. Consolidation around just two or three dominant model families makes the entire ecosystem vulnerable to the blind spots and systemic biases those few models share.

The Great Pivot: From Chatbots to Mission Control

The most telling aspect of the xAI restructuring rumors is the potential merger with SpaceX. If it happens, it would not be a random administrative shuffle but a profound strategic redirection. Such a move directly addresses the **Utility Pivot**: the idea that AI's most immediate, high-value application is not better general conversation but solving complex, deterministic, high-stakes engineering problems.

Consider the kinds of problems SpaceX needs to solve:

- Autonomous Flight Control: Training complex neural networks to manage Starship landing procedures, optimize trajectories in real time, or diagnose critical failures faster than a human operator.

- Data Ingestion: Processing petabytes of telemetry data from thousands of Starlink satellites or ground sensors to predict maintenance needs before small faults become catastrophic failures.

- Material Science Acceleration: Using advanced models to simulate new alloy compositions for deep-space components.

These tasks require AI that is highly reliable, verifiable, and integrated directly into the operational stack. That makes SpaceX a natural home for researchers who need massive computational resources tied to immediate, tangible engineering milestones.
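The telemetry item in the list above is, at its simplest, an anomaly-detection problem. A minimal sketch, assuming a single stream of numeric sensor readings, flags any reading that sits more than a chosen number of standard deviations from the mean of the recent rolling window (a rolling z-score). The function name, window size, threshold, and sample readings are all hypothetical; production predictive-maintenance systems would combine many signals and typically use learned models rather than one fixed rule.

```python
from collections import deque
from statistics import mean, pstdev


def flag_anomalies(readings, window=5, threshold=3.0):
    """Return indices of readings more than `threshold` standard deviations
    away from the mean of the preceding `window` readings (rolling z-score)."""
    history = deque(maxlen=window)  # keeps only the most recent `window` values
    flagged = []
    for i, value in enumerate(readings):
        if len(history) == window:  # only score once the window is full
            mu = mean(history)
            sigma = pstdev(history)
            if sigma > 0:
                if abs(value - mu) / sigma > threshold:
                    flagged.append(i)
            elif value != mu:
                # A perfectly flat window: any change at all is treated as anomalous.
                flagged.append(i)
        history.append(value)  # note: flagged values also enter the window
    return flagged


if __name__ == "__main__":
    # Hypothetical temperature readings with one spike at index 6.
    temps = [20.0, 20.5, 19.8, 20.2, 20.1, 20.3, 35.0, 20.2]
    print(flag_anomalies(temps))  # [6]
```

One design choice worth noting: the flagged spike stays in the rolling window afterward and inflates the baseline for the next few readings; a real pipeline might exclude flagged values from the history.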

Why This Strategy Makes Sense for Musk

For Musk, such a merger would consolidate his AI efforts under proven, cash-generating operating companies (SpaceX, Tesla). It would transform xAI from a speculative research lab reliant on external investor sentiment into an internal R&D division whose success is measured by tangible engineering improvements: more successful launches, faster satellite deployment, or better FSD safety metrics. This aligns with the need for **capital efficiency**.

This trend supports the view that the most potent AI advances in the near term will come from industry-specific solutions rather than generalized, consumer-facing LLMs that struggle with factual accuracy and hallucination.

Future Implications: What This Means for Business and Society

The xAI narrative (struggle, pivot, consolidation) serves as a bellwether for the next three to five years of AI deployment. We can distill several takeaways:

1. Actionable Insight for Businesses: Prioritize Vertical AI Integration

If you are a company looking to leverage generative AI, stop focusing solely on "chatting better." Instead, identify the most expensive, complex, or data-heavy bottleneck in your core operations. Can AI optimize your supply chain, accelerate your drug discovery pipeline, or automate complex compliance reporting? The ROI is clearest when AI is solving a specific, expensive business problem, rather than trying to win a general knowledge competition against giants.

The message from the market is clear: **Specialization trumps generalization** when capital is tight.

2. Future Trend: The Rise of Specialized Compute Clusters

When large companies absorb promising AI teams, the value lies in pairing that talent with the acquirer's infrastructure. We are likely to see more large companies carving out internal, high-performance compute clusters dedicated solely to proprietary engineering applications (like SpaceX’s need for rapid simulation) rather than renting general-purpose cloud compute for broad LLM training.

3. Societal Implication: The Pressure on Open Source

When commercial funding consolidates around a few major players, the pressure on the open-source AI community intensifies. Open-source models are a primary mechanism for academic scrutiny, bias checking, and model diversity. If the commercial ecosystem shrinks, the burden (and cost) of maintaining competitive, safe, and open models falls increasingly on foundations, universities, and government funding, which leaves open AI development in a precarious position.

Conclusion: The Hard Road to Real-World AI

The shakeup at xAI is a microcosm of a broader AI ecosystem grappling with maturity. The initial euphoria has given way to the stark realities of engineering, economics, and market saturation in the generalized LLM space. The departure of key personnel amid financial pressure underscores that building truly cutting-edge AI takes more than brilliant ideas; it requires sustained, multi-billion-dollar commitment and a clear utility roadmap.

For Elon Musk, this appears to be a strategic streamlining: consolidating talent toward missions where AI offers immediate, measurable competitive advantage—space exploration and autonomy. This shift away from pure, generalized research and toward applied engineering suggests the next wave of AI breakthroughs won't come from the labs building the best chatbot, but from the engineering teams embedding AI deep within the systems that run our physical world.