Decoding the Black Box: How AI Interpretability is Driving Trust, Regulation, and Production Deployment

The age of opaque artificial intelligence is drawing to a close. For years, the most powerful AI models, deep neural networks, have operated as sophisticated black boxes. They produced stunning results in image recognition, language generation, and decision-making, but when asked why they arrived at a specific conclusion, the only available answer was the model's own arithmetic: millions of weights and activations that offered little real insight. This opacity has been one of the greatest barriers to widespread, responsible adoption in sensitive fields.

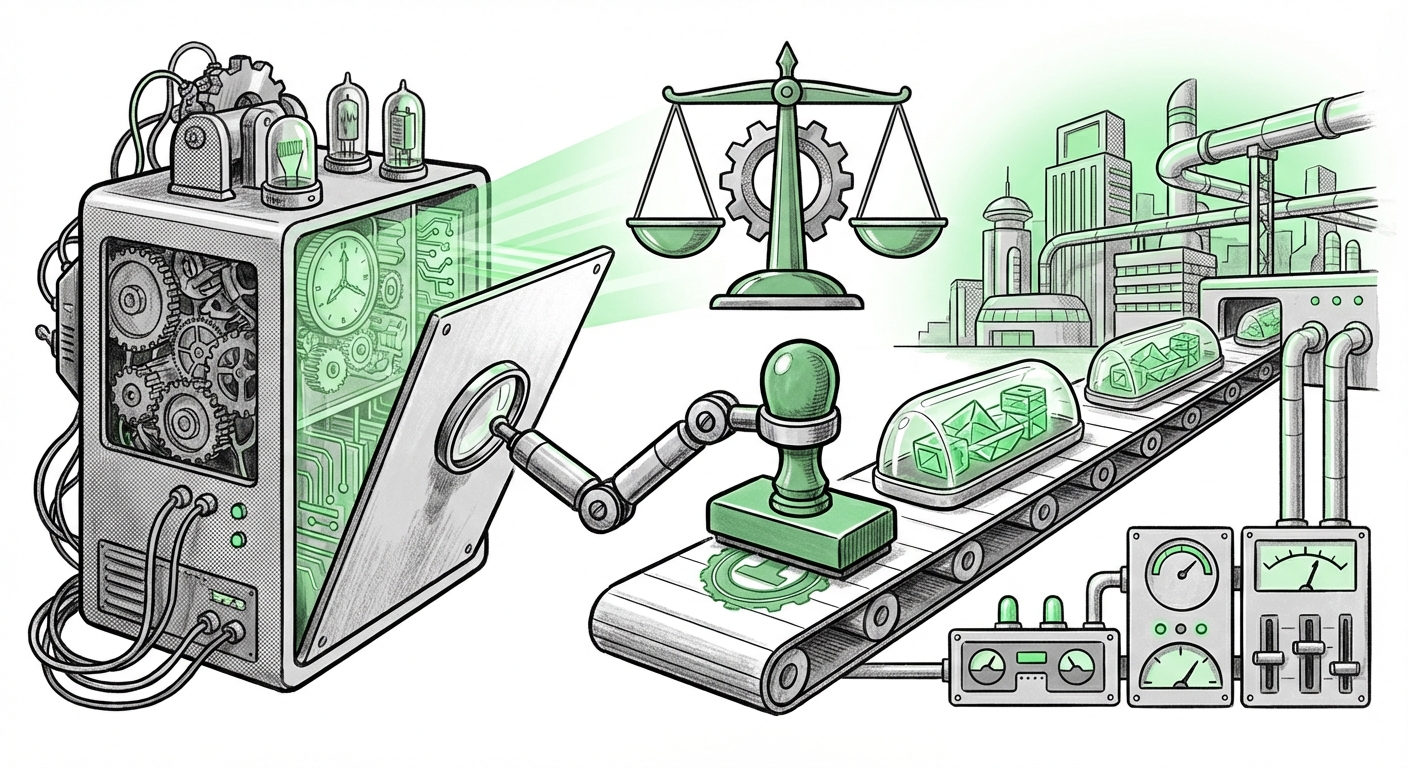

However, a powerful convergence of scientific endeavor, legal necessity, and engineering maturity is now forcing the field toward transparency. Recent work highlighted by labs such as GoodAI in the realm of AI interpretability, often discussed under the banner of Explainable AI (XAI), is not just an academic pursuit; it is the essential bridge that moves AI from a laboratory curiosity to a trustworthy, globally deployable utility.

The Scientific Frontier: Diving into Mechanistic Interpretability

When people talk about "understanding" an AI, they usually mean providing a simple reason, like, "The loan was denied because the debt-to-income ratio was too high." This is traditional, post-hoc explanation. The cutting edge, however, is pursuing something far more profound: Mechanistic Interpretability. This approach seeks to reverse-engineer the internal structure of the network itself.
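
To make the contrast concrete, here is a minimal sketch of the traditional, post-hoc style of explanation for the loan example. It assumes a simple linear scorer, which real credit models rarely are; the feature names, weights, baseline applicant, and threshold are all hypothetical values chosen for illustration.

```python
# Hypothetical linear credit scorer. Every number below is made up for illustration.
WEIGHTS = {"debt_to_income": -4.0, "years_employed": 0.3, "late_payments": -0.8}
BASELINE = {"debt_to_income": 0.30, "years_employed": 5.0, "late_payments": 1.0}
BIAS = 1.0
THRESHOLD = 0.0  # approve when score >= THRESHOLD


def score(applicant):
    """Linear score: bias plus the weighted sum of the applicant's features."""
    return BIAS + sum(WEIGHTS[name] * applicant[name] for name in WEIGHTS)


def explain(applicant):
    """Per-feature contribution relative to the baseline applicant, most negative first."""
    contributions = {
        name: WEIGHTS[name] * (applicant[name] - BASELINE[name]) for name in WEIGHTS
    }
    return sorted(contributions.items(), key=lambda item: item[1])


applicant = {"debt_to_income": 0.65, "years_employed": 4.0, "late_payments": 1.0}
decision = "approved" if score(applicant) >= THRESHOLD else "denied"
top_reason, top_delta = explain(applicant)[0]
print(f"{decision}: largest negative factor is {top_reason} ({top_delta:+.2f})")
```

For a linear model the contributions sum exactly to the gap between the applicant's score and the baseline score, which is why this kind of explanation is easy to state. For a deep network no such clean decomposition exists, which is the gap mechanistic work tries to close.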

Imagine a vast city (the neural network). Traditional XAI looks at the city's final output (the traffic leaving the city). Mechanistic Interpretability, conversely, tries to map the individual roads, stoplights, and power grids (the neurons and circuits) to see precisely how inputs are processed internally. Labs are working to identify specific computational "circuits" that handle concepts like negation, truthfulness, or even lying. If we can find the exact sequence of digital calculations responsible for a concept, we gain unparalleled control and assurance.
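
One basic tool for this kind of road-mapping is ablation: switch off one internal unit and measure how much the output changes. The sketch below applies it to a toy network whose weights are hand-picked rather than trained, so it only illustrates the procedure. The network, its three hidden units, and the "a and not b" behavior are assumptions made up for this example.

```python
def relu(value):
    return max(0.0, value)


# Hand-picked (hypothetical) hidden layer: each row is (weight_a, weight_b, bias).
HIDDEN = [
    (1.0, -1.0, 0.0),   # unit 0: fires for "a and not b"
    (1.0, 1.0, -1.0),   # unit 1: fires for "a and b"
    (0.0, 1.0, 0.0),    # unit 2: copies b
]
OUTPUT = [1.0, 0.1, 0.0]  # output weight applied to each hidden unit


def forward(a, b, ablate=None):
    """Run the toy network, optionally zeroing one hidden unit."""
    hidden = [relu(wa * a + wb * b + bias) for wa, wb, bias in HIDDEN]
    if ablate is not None:
        hidden[ablate] = 0.0
    return sum(weight * h for weight, h in zip(OUTPUT, hidden))


INPUTS = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0)]


def ablation_effect(unit):
    """Mean absolute change in output when the given hidden unit is zeroed."""
    changes = [abs(forward(a, b) - forward(a, b, ablate=unit)) for a, b in INPUTS]
    return sum(changes) / len(changes)


effects = {unit: ablation_effect(unit) for unit in range(len(HIDDEN))}
most_important = max(effects, key=effects.get)
print(effects, "-> unit", most_important, "carries most of the behavior")
```

Here the unit wired for "a and not b" dominates the output, so ablating it changes the result far more than ablating the others. In a real model the units are not labeled in advance, and behavior is usually spread across many of them, which is what makes the research problem hard.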

The goal here is deep comprehension. A model trained on vast amounts of data can learn complex statistical shortcuts. Mechanistic interpretability aims to determine whether the model is merely exploiting superficial patterns or has learned a mechanism that tracks the underlying structure of the task. This level of detail is crucial for debugging and for catching dangerous biases hidden deep within a model's weights.

For researchers and engineers, understanding these internal mechanics is the key to unlocking safer, more robust next-generation models.

The effort to map features and circuits within large language models—as seen in foundational work on representation geometry—is transforming AI from an art into an engineering discipline, allowing us to peek inside the "brain" itself. [Anthropic's foundational work on dictionary learning and representation geometry]

The Regulatory Hammer: Why Explainability is Now Non-Negotiable

While scientists push the boundaries of what *can* be explained, governments are defining what *must* be explained. The most significant regulatory driver is the phased enforcement of regulations like the EU AI Act. This legislation categorizes AI systems by risk, and for "High-Risk" applications, such as those used in hiring, credit scoring, or critical infrastructure, transparency and documentation obligations are mandatory.

To a business leader, this means that deploying a powerful, opaque model for mortgage approval is no longer a viable business strategy in many jurisdictions. If the model rejects a qualified applicant, the organization must be able to provide a clear, legally sound explanation. This obligation is commonly referred to as the "right to explanation."

The implications are vast:

- Liability and Auditing: Organizations need traceable proof that their AI adheres to non-discrimination laws. Without XAI documentation, proving compliance becomes extremely difficult when disputes arise.

- Public Trust: In sectors like healthcare, if an AI recommends a specific treatment path, doctors and patients need assurance that the reasoning aligns with medical science, not statistical noise.

This regulatory push is accelerating the adoption of XAI techniques that might have previously been seen as secondary to raw performance. Compliance is now a core performance metric for any enterprise AI strategy.

The legal landscape is rapidly solidifying the requirement for documentation and transparency. Failure to incorporate robust explainability frameworks into high-risk models is now an open invitation to regulatory scrutiny. [A review of the obligations under the EU AI Act regarding transparency and documentation.]

Bridging the Gap: Operationalizing Interpretability in MLOps

The greatest challenge for many companies is not understanding the theory of XAI, but integrating it into their daily workflow. An explanation generated once at training time says little about a model whose behavior has drifted six months later. This is where the discipline of MLOps (Machine Learning Operations) intersects with XAI.

To make XAI practical, it must be automated and continuous. This involves:

- Continuous Monitoring: Deploying automated tools (such as SHAP, LIME, or vendor-specific solutions) that calculate feature importance for every batch of production data, flagging significant shifts in how the model is making decisions.

- Traceability in Pipelines: Ensuring that the specific version of the model, the dataset used to train it, and the corresponding interpretability report are bundled together as an immutable record.

- Alerting on Drift: If the importance of a benign feature suddenly spikes, or if the model begins relying heavily on sensitive or proxy features (like zip code, which can stand in for protected attributes, instead of actual income), the MLOps system must generate an immediate alert for human review.
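
The monitoring and alerting steps above can be sketched in a few lines. This is an illustration under stated assumptions, not a production design: it presumes an upstream tool (SHAP, for example) has already produced one signed attribution per feature per prediction, and the thresholds, feature names, and numbers are hypothetical.

```python
PROHIBITED = {"zip_code"}      # hypothetical policy list
MAX_SHARE_SHIFT = 0.10         # hypothetical limit on change in importance share
MAX_PROHIBITED_SHARE = 0.05    # hypothetical cap for prohibited features


def importance_shares(attributions):
    """Turn per-prediction attributions (feature -> signed value) into each
    feature's share of the total absolute attribution for the batch."""
    totals = {}
    for row in attributions:
        for name, value in row.items():
            totals[name] = totals.get(name, 0.0) + abs(value)
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {name: 0.0 for name in totals}
    return {name: total / grand_total for name, total in totals.items()}


def drift_alerts(reference, current):
    """Compare importance shares between a reference batch and a production batch."""
    alerts = []
    for name in sorted(set(reference) | set(current)):
        before = reference.get(name, 0.0)
        after = current.get(name, 0.0)
        if abs(after - before) > MAX_SHARE_SHIFT:
            alerts.append(f"{name}: importance share moved {before:.2f} -> {after:.2f}")
        if name in PROHIBITED and after > MAX_PROHIBITED_SHARE:
            alerts.append(f"{name}: prohibited feature carries {after:.2f} of importance")
    return alerts


# Made-up attribution values standing in for the output of an explainer.
reference_batch = [
    {"income": 0.6, "debt_to_income": -0.3, "zip_code": 0.02},
    {"income": -0.5, "debt_to_income": 0.4, "zip_code": -0.01},
]
production_batch = [
    {"income": 0.2, "debt_to_income": -0.3, "zip_code": 0.5},
    {"income": -0.1, "debt_to_income": 0.3, "zip_code": -0.4},
]

reference_shares = importance_shares(reference_batch)
production_shares = importance_shares(production_batch)
alerts = drift_alerts(reference_shares, production_shares)
for alert in alerts:
    print("ALERT:", alert)
```

Comparing shares rather than raw attribution magnitudes keeps the check stable when the overall scale of the scores changes between batches. In practice the alerts would go to whatever paging or ticketing system the team already uses rather than to standard output.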

For the MLOps engineer, XAI shifts from a one-time report to a persistent monitoring layer. It makes AI systems observable, manageable, and auditable just like traditional software infrastructure.
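
The traceability requirement can be approached by hashing the three artifacts and binding them into one record. The following is a minimal sketch using only the Python standard library; the `AuditRecord` class, its field names, and the version string are hypothetical. It makes tampering detectable, but it does not by itself make storage immutable, which would still require write-once storage or a similar control.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


def sha256_bytes(payload: bytes) -> str:
    return hashlib.sha256(payload).hexdigest()


@dataclass(frozen=True)
class AuditRecord:
    """Hypothetical record tying a model version to its data and its report."""
    model_version: str
    model_sha256: str
    dataset_sha256: str
    report_sha256: str

    def fingerprint(self) -> str:
        # Canonical JSON (sorted keys) so the same record always hashes the same way.
        canonical = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return sha256_bytes(canonical)


def build_record(model_version, model_bytes, dataset_bytes, report_bytes):
    return AuditRecord(
        model_version=model_version,
        model_sha256=sha256_bytes(model_bytes),
        dataset_sha256=sha256_bytes(dataset_bytes),
        report_sha256=sha256_bytes(report_bytes),
    )


# Placeholder byte strings stand in for real artifact files.
record = build_record(
    "credit-scorer-1.4.0", b"model weights", b"training rows", b"interpretability report"
)
tampered = build_record(
    "credit-scorer-1.4.0", b"model weights", b"training rows", b"edited report"
)
print(record.fingerprint() == tampered.fingerprint())  # a changed report changes the fingerprint
```

Storing the fingerprint alongside each deployment lets an auditor confirm later that the report on file is the one produced for that exact model and dataset.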

In simple terms: If performance is the speedometer for your AI, interpretability tools are the diagnostic dashboard that tells you if the engine is about to explode.

Moving XAI from an experimental concept to an integrated operational tool requires seamless deployment within existing CI/CD frameworks. The industry is now standardizing how these diagnostic layers are injected into production environments to maintain trust over time. [A guide on deploying Explainable AI models using cloud services.]

The Future Implications: Trust as the Ultimate AI Feature

What does this synthesis of scientific research, regulatory pressure, and MLOps maturity mean for the future of AI?

1. Democratization of AI Insight

As mechanistic interpretability matures, the insights derived from complex models will become more accessible. We will move away from simply trusting that a foundation model works, toward understanding *how* it works. This will unlock AI adoption in fields like personalized medicine or complex financial modeling where the cost of error—and the need for justification—is extremely high.

2. Performance vs. Transparency Trade-Off Fades

Historically, the most complex models delivered the best performance but were the hardest to explain. As interpretability techniques improve, this trade-off should diminish. Future competitive advantages will belong to organizations that can deliver *both* state-of-the-art performance and rigorous, legally compliant explanations.

3. The Rise of the AI Auditor Role

A new class of professionals will emerge, specializing not just in building models, but in auditing them for alignment, safety, and compliance using interpretability tools. These auditors will be the gatekeepers ensuring that the systems deployed uphold ethical and legal standards.

Actionable Insights for Business and Technology Leaders

The shift toward transparency is not optional; it is an embedded cost of entry for advanced AI deployment.

For Technology Leaders (CTOs, VPs of Engineering):

- Mandate XAI Integration Early: Do not treat interpretability as a post-training phase. Select modeling frameworks and MLOps tooling that natively support XAI visualization and monitoring (e.g., integrating SHAP analysis into your model validation stage).

- Invest in Skill Transfer: Ensure your data science teams are trained not only on model accuracy but also on causality, bias detection, and generating formal interpretability reports.

- Benchmark Against Safety: Begin benchmarking new model deployments not just on F1 scores or latency, but on a consistent set of interpretability checks chosen to flag regulatory compliance risk.
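
One way to act on the recommendations above is a validation gate that blocks a release unless the model clears both an accuracy bar and an interpretability check. The sketch below is an assumption about how such a gate might look, not an established standard. The `validation_gate` function, its thresholds, and the importance shares (assumed to come from an upstream explainer such as SHAP) are all hypothetical.

```python
def f1_score(y_true, y_pred):
    """F1 for binary labels, computed from true positives, false positives, false negatives."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def validation_gate(y_true, y_pred, importance_share, prohibited,
                    min_f1=0.80, max_prohibited_share=0.05):
    """Return a list of failure messages; an empty list means the model may ship."""
    failures = []
    f1 = f1_score(y_true, y_pred)
    if f1 < min_f1:
        failures.append(f"F1 {f1:.2f} is below the minimum {min_f1:.2f}")
    for name in sorted(prohibited):
        share = importance_share.get(name, 0.0)
        if share > max_prohibited_share:
            failures.append(f"{name} carries {share:.2f} of feature importance")
    return failures


# Made-up validation data: the model is accurate but leans on a prohibited feature.
y_true = [1, 1, 1, 0, 0, 1, 0, 1]
y_pred = [1, 1, 1, 0, 0, 1, 0, 0]
importance_share = {"income": 0.55, "debt_to_income": 0.30, "zip_code": 0.15}

failures = validation_gate(y_true, y_pred, importance_share, prohibited={"zip_code"})
print(failures or "gate passed")
```

In this made-up case the model clears the F1 bar and still fails the gate, which is the point: accuracy alone would have let it through.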

For Business Leaders (CEOs, Compliance Officers):

- Treat XAI as Risk Management: Recognize that explainability is a form of regulatory risk mitigation. The cost of implementing XAI today is likely far lower than the potential fines or reputational damage from a single, unexplained, erroneous decision tomorrow.

- Define "Sufficient Explanation": Work with legal counsel to define what constitutes a "sufficient explanation" for your high-risk processes *before* you deploy the AI. This guides your technical teams.

- Prioritize Trust Over Speed: In critical domains, slower but trustworthy AI will tend to outperform fast, untrustworthy AI over time, because it creates less operational friction and fewer legal hurdles.

The era of "trust me, the algorithm knows best" is over. The future of artificial intelligence is one where sophisticated capabilities are coupled with radical transparency. Labs like GoodAI are doing the hard scientific work of cracking the black box, but it is the engineers, governance teams, and business leaders who must now operationalize this transparency to realize AI’s true, reliable potential.

Cited Context and Further Reading

- Mechanistic Interpretability Insight: [Anthropic's foundational work on dictionary learning and representation geometry]

- Regulatory Drivers: [A review of the obligations under the EU AI Act regarding transparency and documentation.]

- MLOps Implementation: [A guide on deploying Explainable AI models using cloud services.]