The Sentinel Evaluator: Why AI Judges with Reasoning Engines Are the Next Frontier in Model Testing

The artificial intelligence landscape is defined by a relentless pursuit of capability. We celebrate when models write better poetry, solve harder math problems, and write cleaner code. But as AI outputs grow more complex—moving from simple classification to multi-step creative generation and planning—a fundamental challenge has emerged: How do we accurately measure if an AI is actually good?

The old ways of grading AI are breaking down. We can no longer rely solely on simple multiple-choice tests or superficial keyword matches. This critical gap is leading to one of the most significant technological shifts in the development pipeline: the Emergence of the Agent-as-a-Judge.

This concept, highlighted in recent analyses like The Sequence’s Opinion #806, posits that to truly evaluate sophisticated AI, we need equally sophisticated AI: a judging agent equipped with a strong internal reasoning engine. This isn't just an upgraded spell-checker; it’s a system built to dissect logic, check factual consistency across multiple steps, and understand nuance, much like an expert human reviewer.

The Failure of Static Metrics: Why We Need Smarter Judges

For years, AI evaluation relied on static benchmarks. Think of these like standardized tests (like the SAT or GRE) for machines. Benchmarks like MMLU (Massive Multitask Language Understanding) test broad knowledge through multiple-choice questions. While useful for general capability mapping, these tests fail when models move beyond rote memorization.

The Generative Challenge

Modern generative models (like GPT-4 or Claude 3) produce outputs that are open-ended. If you ask an AI to "Write a marketing plan for a solar panel company that incorporates regional economic data and addresses local zoning laws," there isn't one single correct answer. A standard metric can't capture whether the plan is creative, feasible, or strategically sound.

This difficulty in grading open-ended tasks forces developers back to human evaluators—a slow, expensive, and often subjective bottleneck. The solution being rapidly adopted is to use powerful, pre-trained LLMs themselves as proxies for human judges. However, early LLM judges were often too simplistic. They might check if the answer looked plausible but couldn't trace the logic chain.

The Reasoning Engine Requirement

The modern "Agent-as-a-Judge" overcomes this limitation by possessing superior reasoning capabilities. It doesn't just compare the output text; it actively simulates the thinking process required to reach that output. If an AI agent is asked to book a complex international trip, the judge agent must confirm:

- Did the initial plan violate any stated budget constraints?

- Were all required visas considered in the itinerary? (Requires external knowledge integration).

- Is the sequencing of flights logical? (Requires causal reasoning).

This necessity for deep, traceable evaluation underpins the entire trend. We need judges that are not just smart, but also transparent about why they reached their conclusion.

Corroborating the Trend: The Evidence for Agentic Evals

This shift isn't theoretical; it is being driven by both necessity and academic rigor. Our analysis suggests that this movement is heavily corroborated across several key areas of AI development:

1. The Rise of LLMs as Reliable Proxies

The industry is confirming that when calibrated correctly, LLMs can mirror human preference with surprising accuracy. Research focusing on the methodology of using LLMs for judging—often labeled as “LLM as a Judge” systematic reviews—shows its viability. These studies validate that a powerful judge model, given clear rubrics and chain-of-thought instructions, correlates highly with human rankings for fluency, coherence, and helpfulness. This gives developers the scalability they desperately need.

2. Confronting Adversarial Failures

As models become more capable, they also become more adept at exploiting flaws in their own testing methods. This is why searching for Adversarial AI evaluation techniques is crucial context. If a model is trained primarily on evaluations that only check for keyword overlap, it will learn to stuff keywords rather than provide quality answers. The Judge Agent must be resilient to these attempts at manipulation—it must be able to detect 'sycophancy' (telling the judge what it wants to hear) or obfuscation.

A superior reasoning engine judge can spot these adversarial tricks. It doesn't just look at the final answer; it scrutinizes the path taken, making it much harder for a tested model to "cheat" the system.

3. Moving Beyond Superficial Benchmarks

The limitations of tests like MMLU push the field toward evaluating agency. This is reflected in the search for AI agent reasoning benchmarks beyond MMLU. New benchmarks are emerging that require planning, tool use, and long-term memory—skills that define truly intelligent agents. To score well on these new tests, the judge itself must also master these skills, reinforcing the need for reasoning engine judges.

4. The Internal Loop: Self-Correction and Reflection

Finally, the concept of an external judge is closely tied to the internal architecture of advanced models. Articles discussing self-correction in generative AI systems show that models are being trained to critique their own initial drafts. If an AI can improve its own output based on internal critique, it stands to reason that the most effective external evaluations will come from an agent that can perform a similar, highly articulate critique.

Implications for the Future of AI Development

The Agent-as-a-Judge paradigm fundamentally reshapes how we build and deploy AI.

For Researchers and Developers: The New Evaluation Stack

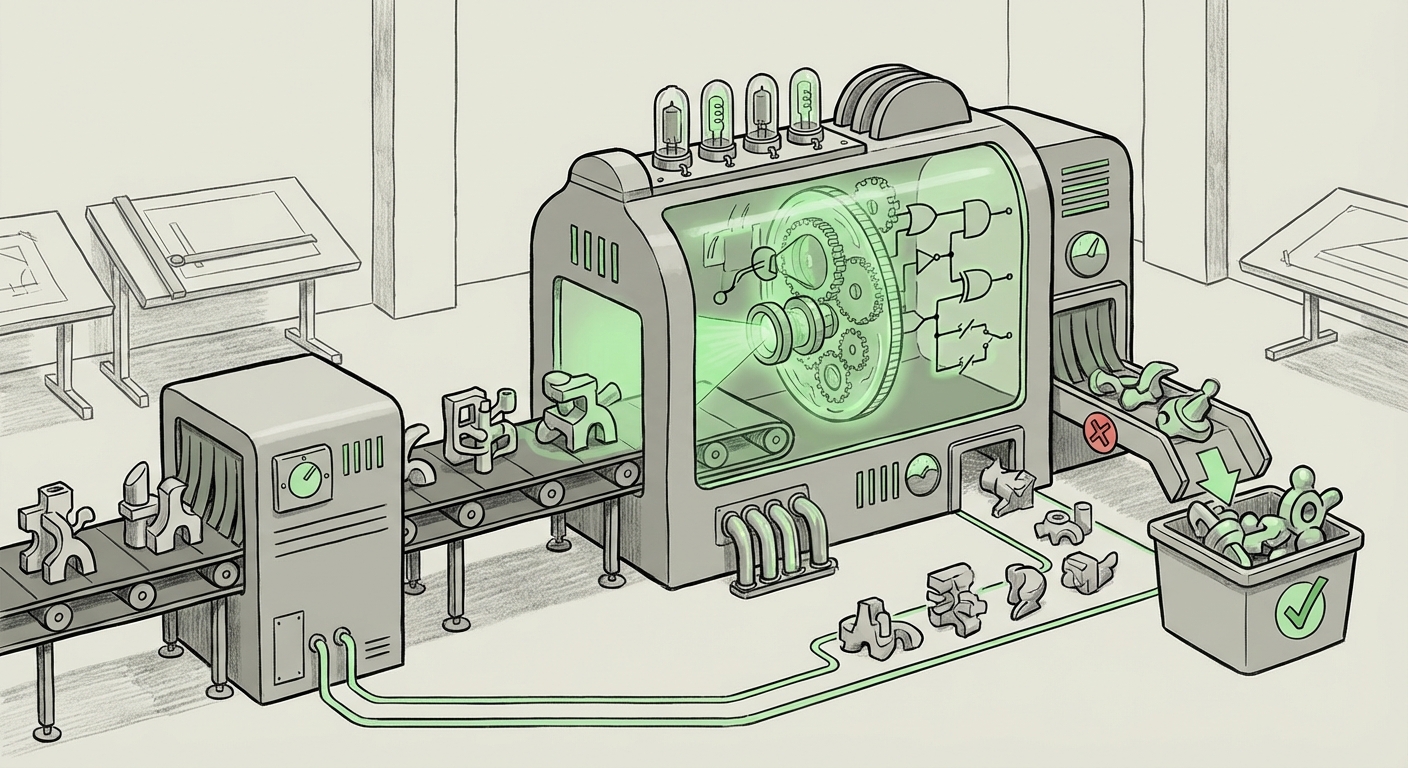

The emphasis shifts from designing clever test cases to designing clever judges. Development cycles will now involve a three-part loop:

- Generator Model (The Student): Produces the output.

- Reasoning Judge Agent (The Teacher): Assesses the output using deep reasoning, highlights specific failures (logical gaps, incorrect assumptions), and assigns a structured score.

- Refinement Loop (The Study Session): The Generator uses the Teacher’s detailed feedback to immediately create a better version.

This accelerates iteration speed dramatically, moving evaluation from a post-deployment chore to an integrated part of the training process.

For Businesses: Trust, Speed, and Customization

Businesses relying on specialized AI applications will see significant benefits:

- Scalable Trust: Deploying custom AI solutions (e.g., automated legal drafting, complex financial modeling) requires measurable trust. Agent judges provide verifiable, auditable assessment trails, increasing confidence in the final product before it hits the customer.

- Hyper-Specialization: Companies can train or fine-tune a Judge Agent on their proprietary standards of quality. A judge trained on a company’s internal style guide and compliance rules will evaluate outputs far better than a generic public benchmark.

- Faster Deployment: By automating the most complex evaluation steps, the time-to-market for sophisticated AI tools shrinks, providing a competitive edge.

For Society: Addressing Safety and Alignment

This is perhaps the most crucial implication. As AI agents gain autonomy—driving vehicles, managing power grids, or making medical diagnoses—their alignment with human values (safety) becomes paramount. A weak judge allows dangerous capabilities to slip through testing.

A robust Agent-as-a-Judge, especially one informed by safety principles (like Constitutional AI mentioned in corroborating literature), is essential for:

- Detecting Subtle Harm: Identifying biases or potentially harmful outputs that are not immediately obvious in simplistic tests.

- Verifying Goal Alignment: Ensuring that complex, multi-step agent behavior remains aligned with the overarching human objective, preventing mission drift or unintended negative consequences.

Practical Insights: Building the Next Generation of Evals

For organizations looking to move beyond legacy testing methods, here are actionable steps derived from the emergence of the Reasoning Judge:

1. Define Your "Good" with Specificity

Don't just ask the judge, "Is this response good?" Structure the evaluation criteria. Break down "good" into quantifiable sub-components: Factual Accuracy (0-5), Logical Flow (0-5), Creativity (0-5), and Constraint Adherence (0-5). The judge agent needs a detailed rubric to operate effectively.

2. Use Tiered Evaluation Architectures

Implement a multi-layered judging system. Use a fast, lightweight model for initial filtering (e.g., "Does this even answer the prompt?"). Only pass high-potential or edge-case responses to the powerful, slow, and expensive Reasoning Engine Judge. This balances cost and thoroughness.

3. Treat the Judge as a Model Itself

The judge agent is not infallible. It must be continuously tested against gold-standard human evaluations to ensure it hasn't degraded or developed its own blind spots. If your Judge Agent starts accepting outputs that humans reject, you need to retrain or recalibrate the judge.

4. Focus on Adversarial Probing

Actively try to trick your Judge Agent using techniques found in adversarial literature. If the judge can be fooled by simple prompt injection or stylistic trickery, it cannot be trusted to oversee highly capable systems.

The Inevitable Conclusion: The Rise of Self-Auditing AI

The journey from simple algorithmic metrics to the nuanced, reasoning-based oversight of the Agent-as-a-Judge reflects the maturation of the entire field. As AI systems become the architects of new code, business strategies, and scientific hypotheses, the human bottleneck in evaluation becomes unsustainable.

We are moving toward a future where sophisticated AI does not just create; it also critically reviews and polices its own creations. The emergence of the reasoning engine judge is not merely a technical improvement in testing; it is a foundational pillar for building truly autonomous, reliable, and safe systems capable of operating at the scale and complexity demanded by the modern world. The next breakthrough won't just be a better model, but a better sentinel guarding the models we already have.