The AI Release Cycle Revolution: Why Mid-Cycle Updates Are the New Norm for LLMs

For years in software development, major improvements came with major version numbers: Version 3.0, then 4.0, then 5.0. For Large Language Models (LLMs) such as the GPT models behind ChatGPT, this pattern held firm. Users expected a significant, cataloged leap in capability between major releases. However, recent announcements, such as the reported mid-cycle update to GPT-5.2 that tweaks response style and quality, signal a profound, quiet revolution in how AI is managed.

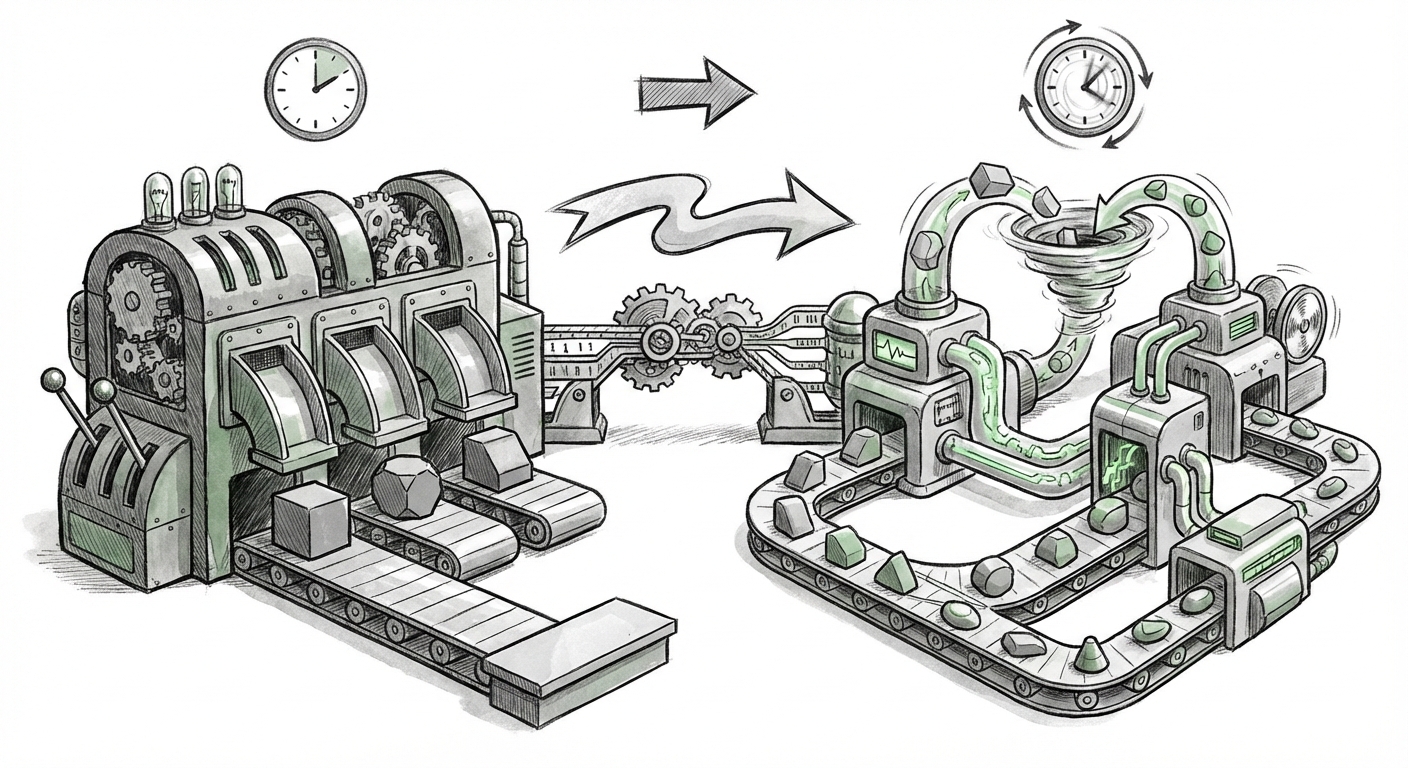

This shift from monolithic updates to constant, iterative refinement is not just a tweak to a software patch schedule; it is the formal adoption of Continuous Integration/Continuous Delivery (CI/CD) principles tailored for the scale and complexity of foundation models. This has massive implications for developers, enterprises, and the very definition of an "AI model."

From Stale Versions to Agile AI: The Operational Shift

When a new version of an LLM launches, it is generally frozen in time, representing the state of the art until the next scheduled major release. But the real world is dynamic. As developers use these models, they provide massive amounts of unstructured feedback: prompts that lead to verbose replies, instances where the tone is slightly off, or subtle knowledge gaps that need patching.

The traditional response was to log these issues and wait for the next major training run, often months later. The new approach, exemplified by quick "style and quality" updates within a single iteration (like GPT-5.2), changes the calculus:

- Increased Operational Maturity: Building the infrastructure to safely push performance tweaks *between* major versions without causing widespread outages or introducing catastrophic bugs is a monumental engineering feat. It implies that model providers have developed sophisticated monitoring, canary testing, and rollback capabilities specifically for neural network behavior. We are moving from treating AI like a physical product release to treating it like a dynamic, always-on cloud service.

- Competitive Necessity: In a hyper-competitive market, if OpenAI can improve response style in weeks instead of months, rivals like Anthropic and Google must match that velocity. This rapid iteration becomes table stakes for API providers seeking long-term enterprise contracts.

- Focus on the "Last Mile" Quality: The most significant friction points for enterprise adoption are often not raw intelligence, but usability: tone, conciseness, and adherence to brand voice. These "edge cases" are best solved by small, targeted corrections based on real-world usage data. Mid-cycle updates address this crucial "last mile" of usability.
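The canary testing and rollback capability mentioned above can be illustrated with a small routing sketch. This is only a guess at the general shape of such a system, not a description of how any provider actually does it: the function names, the salt, and the model identifiers are all hypothetical. It shows one common technique, deterministic hash bucketing, where a fixed share of users is routed to a candidate model and rollback amounts to setting that share to zero.

```python
import hashlib

def in_canary(user_id: str, rollout_percent: float, salt: str = "style-update-1") -> bool:
    """Deterministically decide whether a user belongs to the canary cohort.

    The salt is a hypothetical per-rollout label so that different rollouts
    pick different cohorts.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # bucket in 0..9999
    return bucket < rollout_percent * 100

def choose_model(user_id: str, stable: str, candidate: str, rollout_percent: float) -> str:
    """Route canary users to the candidate model and everyone else to the stable one."""
    return candidate if in_canary(user_id, rollout_percent) else stable

# Hypothetical identifiers; setting rollout_percent to 0 acts as an instant rollback.
model = choose_model("user-42", "model-stable", "model-candidate", rollout_percent=5)
print(model)
```

Because the assignment depends only on the user ID and the salt, a given user sees consistent behavior for the duration of a rollout, which makes before-and-after comparisons of monitoring metrics easier to interpret.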

To understand the engineering behind this agility, it helps to look at the technical underpinnings: the industry's work on LLM deployment, continuous integration, and mid-cycle updates, all aimed at building robust MLOps pipelines that can safely deploy updates without versioning chaos.

The Technical Hurdle: MLOps Meets the Neural Network

For traditional software, CI/CD is well understood. You write code, run automated tests, and deploy. LLMs complicate this because the "code" is the massive set of trained weights of the model itself, and the "tests" are often subjective human evaluations.

When OpenAI updates GPT-5.2 to improve "response style," they are likely implementing one of three high-level technical strategies:

1. Reinforcement Learning from Human Feedback (RLHF) Refinements

This involves feeding the model new, carefully curated preference data derived from recent user interactions or human labeler feedback. These targeted fine-tuning runs correct specific behavioral flaws—like reducing "hallucinations" in specific domains or ensuring outputs adhere to requested formats.

2. Prompt Template and System Instruction Updates

Sometimes, the change isn't in the core weights but in the hidden instructions provided to the model *before* the user prompt is processed. An update might subtly adjust the core system prompt to encourage less apologetic language or greater conciseness across the board. This is the fastest and least intrusive method.
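To make the idea concrete, here is a minimal sketch of how a versioned system prompt might be prepended to every request. It assumes the widely used chat convention of a list of messages with `role` and `content` fields; the prompt texts, the version labels, and the `build_messages` helper are invented for illustration and do not reflect any provider's real hidden instructions.

```python
# Hypothetical system prompt versions; real provider prompts are not public.
SYSTEM_PROMPTS = {
    "v1": "You are a helpful assistant.",
    "v2": "You are a helpful assistant. Be concise and avoid unnecessary apologies.",
}

def build_messages(user_prompt: str, prompt_version: str = "v2") -> list:
    """Prepend the selected system prompt to the user's message."""
    return [
        {"role": "system", "content": SYSTEM_PROMPTS[prompt_version]},
        {"role": "user", "content": user_prompt},
    ]

# The user's text is identical in both cases; only the hidden instruction differs.
before = build_messages("Summarize this contract.", "v1")
after = build_messages("Summarize this contract.", "v2")
print(before[0]["content"])
print(after[0]["content"])
```

In this picture the weights are untouched, which is why such a change can ship quickly, and also why it can alter output style for every caller at once.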

3. Model Pruning or Quantization Tweaks

Less common for style-and-quality updates, but still possible, are compression changes such as pruning weights or lowering numeric precision (quantization) that prioritize speed or reduce model size for specific API endpoints, potentially affecting output characteristics as a side effect.

The need for this kind of fine-tuning points toward the concept of model drift management. Even a well-trained model with frozen weights can appear to drift in behavior as the inputs it receives, or the world itself, change. Regular, iterative patching is essential to maintain peak performance, which is the motivation behind ongoing work on AI model drift management and post-release fine-tuning.

The Dual Edge: Benefits for Business and Developer Risk

For businesses building products on top of these models, this new reality presents both profound opportunity and significant new risk.

The Opportunity: Higher Quality, Faster

Enterprises rely on AI for customer-facing roles, legal summarization, or internal knowledge bases. In these fields, the difference between a slightly verbose answer and a perfectly concise one can be the difference between user delight and operational failure. The ability to benefit from these quality improvements almost instantly means:

- Faster Time-to-Reliability: Bugs in tone or logic reported yesterday can be fixed today, allowing products to mature much faster.

- Better Enterprise Fit: Updates targeting "style" directly address the need for AI systems to sound consistent, professional, and on-brand.

The Risk: Unstable APIs and the Testing Burden

If the foundational behavior of the model changes without a corresponding version bump (e.g., moving from GPT-5.2a to GPT-5.2b), applications depending on exact output formatting or latency can break silently. This is the core concern developers raise when they discuss the impact of frequent LLM updates on API stability.

Imagine a developer who wrote custom code to parse JSON output from the model. If a style update causes the model to prepend a sentence like, "Here is the data you requested:" before the JSON block, that parser will immediately fail, even though the developer is still technically using "GPT-5.2."
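The failure mode described above, and one defensive way around it, can be sketched in a few lines. The helper names `extract_json` and `validate` are hypothetical, and the required-field check is a deliberately small stand-in for a full schema validator. The sketch uses the standard library's `json.JSONDecoder.raw_decode`, which parses a JSON value starting at a given index and ignores whatever follows it.

```python
import json

def extract_json(text: str):
    """Return the first parseable JSON object or array found in the text."""
    decoder = json.JSONDecoder()
    for index, char in enumerate(text):
        if char in "{[":
            try:
                value, _end = decoder.raw_decode(text, index)
                return value
            except json.JSONDecodeError:
                continue  # not valid JSON from here; keep scanning
    raise ValueError("no JSON value found in model output")

def validate(record, required: dict) -> dict:
    """Check that the record is an object whose required keys have the expected types."""
    if not isinstance(record, dict):
        raise ValueError("expected a JSON object")
    bad = [key for key, kind in required.items() if not isinstance(record.get(key), kind)]
    if bad:
        raise ValueError(f"missing or mistyped fields: {bad}")
    return record

# A naive json.loads(reply) would raise on this reply because of the preamble.
reply = 'Here is the data you requested:\n{"id": 7, "tags": ["a", "b"]}'
record = validate(extract_json(reply), {"id": int, "tags": list})
print(record)
```

Tolerant extraction keeps the application running through a style change, while the validation step still fails loudly if the structure itself has changed.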

The implication for developers is clear: **Pinning is paramount.** Relying on a floating, generic tag like "latest" for a production system is now far riskier. Developers must demand granular versioning (e.g., `gpt-5.2.101`) or seek immutable endpoints to ensure that when they test a solution, they are testing the exact model version that will run in production.
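One lightweight way to enforce pinning is a startup check that refuses floating aliases. The sketch below is an assumption-heavy illustration: the alias list is hypothetical, and the accepted identifier shape simply mirrors the `gpt-5.2.101` example in the text rather than any provider's documented naming scheme.

```python
import re

# Hypothetical aliases that a provider might repoint to a new build without notice.
FLOATING_ALIASES = {"latest", "default", "stable"}

# Assumed identifier shape: family, minor version, build number (e.g. "gpt-5.2.101").
PINNED_ID = re.compile(r"[a-z][a-z0-9-]*(\.\d+){2}")

def require_pinned(model_id: str) -> str:
    """Return the model ID unchanged if it looks pinned; otherwise raise ValueError."""
    if model_id in FLOATING_ALIASES or not PINNED_ID.fullmatch(model_id):
        raise ValueError(f"model ID {model_id!r} is not pinned to a specific build")
    return model_id

# Fails fast at import time if someone configures a floating tag for production.
PRODUCTION_MODEL = require_pinned("gpt-5.2.101")
print(PRODUCTION_MODEL)
```

Running the check when the configuration is loaded means a floating tag is caught at deploy time instead of surfacing later as an unexplained change in output.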

The Future Implication: The End of the Model Generation Gap

The most exciting long-term implication of this trend is the death of the "stale model generation." In the future, we may stop talking about GPT-5 or Gemini 2.0 as fixed entities. Instead, the entire ecosystem will transition to a service model where the underlying capability is constantly evolving.

This acceleration suggests that the competitive battleground is moving away from who can train the biggest single model, and toward who can deploy the most sophisticated, safe, and rapidly adaptable *service layer* around that model.

We must also consider the competitive landscape. If market leaders enforce rapid iteration, other major players will be compelled to adopt similar speeds. Following how Anthropic, Google (with Gemini), and others describe their iterative model update policies helps track this institutionalization across the industry.

Actionable Insights for Stakeholders

For Technical Teams (Engineers & MLOps):

- Demand Granular Versioning: Insist that your AI provider offers immutable endpoints (specific hashes or build numbers) for production workloads. Never rely solely on broad version tags for critical systems.

- Build Robust Guardrails: Since underlying behavior can change slightly, invest heavily in post-processing validation. If you expect JSON, use strict schema validation on output, regardless of conversational preamble.

- Automate Regression Testing: Maintain a comprehensive suite of "golden prompts" that reflect your core business logic. Run this suite automatically against the API daily so that silent behavioral shifts surface within a day rather than through user complaints.
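The golden-prompt idea from the list above can be sketched as a tiny harness. Everything named here is hypothetical: `GoldenCase`, `run_suite`, and the two stub functions stand in for a real test suite and a real API client, and the stubs return canned strings so the example runs without network access.

```python
import json
from dataclasses import dataclass
from typing import Callable, List

@dataclass
class GoldenCase:
    name: str
    prompt: str
    check: Callable[[str], bool]  # returns True when the output is acceptable

def is_json_object(output: str) -> bool:
    """Accept only output that is a bare JSON object with no surrounding text."""
    try:
        return isinstance(json.loads(output), dict)
    except json.JSONDecodeError:
        return False

def run_suite(call_model: Callable[[str], str], cases: List[GoldenCase]) -> List[str]:
    """Run every golden prompt and return the names of the cases that failed."""
    return [case.name for case in cases if not case.check(call_model(case.prompt))]

# Stubs standing in for the API before and after a hypothetical style update.
def model_before(prompt: str) -> str:
    return '{"total": 3}'

def model_after(prompt: str) -> str:
    return 'Here is the data you requested: {"total": 3}'

CASES = [
    GoldenCase("invoice_json", "Return the invoice total as JSON.", is_json_object),
    GoldenCase("short_reply", "Return the invoice total as JSON.", lambda out: len(out) < 200),
]

print(run_suite(model_before, CASES))
print(run_suite(model_after, CASES))
```

In practice `call_model` would wrap the real API client, and a scheduled job would alert whenever the returned list of failures is non-empty.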

For Business Leaders (Executives & Product Managers):

- Factor in Continuous Operational Cost: Recognize that maintaining AI integrations is not a "set it and forget it" process. Budget time and resources for ongoing API testing, as quality improvements are now deployed continuously, not yearly.

- Leverage Niche Improvements: Use the improved "style" and "quality" to drive adoption in areas previously hindered by clunky AI behavior (e.g., high-stakes customer service scripting).

- Engage Feedback Loops: Actively participate in early access programs or targeted feedback channels. Your specific usage patterns are the direct fuel for the next mid-cycle update that will benefit your entire industry.

Conclusion: Agility is the New Benchmark

The move toward mid-cycle LLM updates marks the transition of generative AI from a scientific milestone to a fully mature, operational utility. The era of waiting months for critical bug fixes or desirable stylistic tweaks is ending. We are entering an age of agile AI, where performance is fluid, and improvement is the default setting.

While this promises better, more nuanced AI tools for everyone, it simultaneously raises the bar for technical governance. The companies that thrive will be those that not only build the most powerful models but also build the most resilient, well-tested pipelines to consume them. In the future of AI, speed of iteration defines market leadership, and invisibility of improvement defines user satisfaction.