The Agentic Leap: How OpenAI's API Upgrade Unlocks Hours-Long, Autonomous AI Workflows

For the past few years, our interactions with Large Language Models (LLMs) have largely been transactional: we send a prompt, we get a response. This "single-turn" dialogue has been revolutionary, but it hits a wall when tasks require planning, memory, external verification, and hours of continuous effort. OpenAI’s recent upgrade to its Responses API—specifically targeting features for long-running, autonomous AI agents—is not just an incremental update; it is a declaration of intent for the next era of AI deployment.

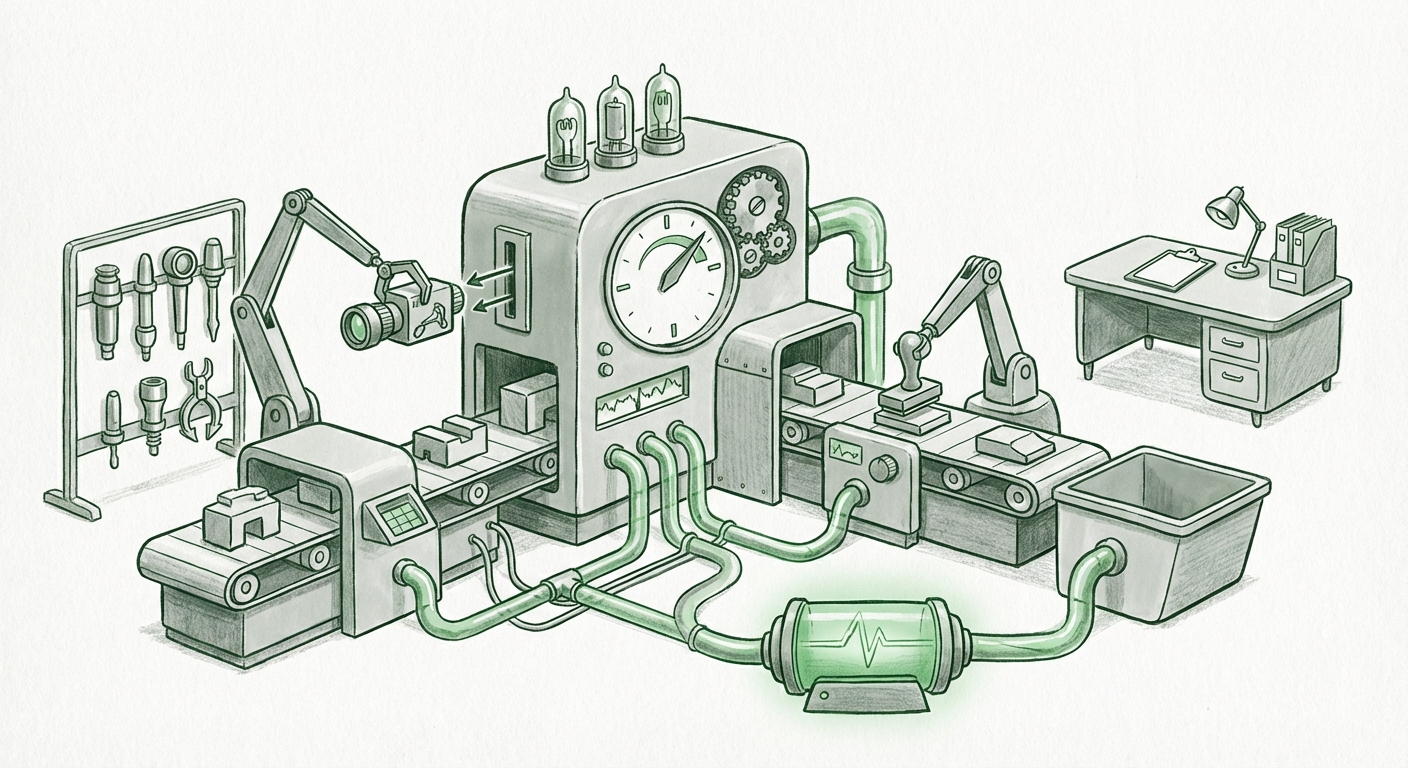

This enhancement signals a fundamental pivot from AI as an intelligent assistant to AI as an autonomous worker. By building features that allow agents to run for hours, access the internet, and dynamically load new capabilities ("skill packages"), OpenAI is laying the groundwork for truly persistent, goal-oriented systems. As technology analysts, we must examine what this means by looking at the convergence of four trends: the architecture of persistence, the mechanics of dynamic tool use, enterprise demand for multi-step automation, and the underlying economics of sustained computation.

The Shift: From Chatbot to Autonomous Agent

The concept of the "AI Agent" is simple, yet profound: an AI that can observe its environment (data, web, files), form a plan, execute steps, evaluate results, and repeat until a complex goal is achieved. Think of asking a chatbot to "Write a summary of the top 10 tech stocks today, find analyst projections for Q4, and draft an investment memo." A standard chatbot struggles because it loses context quickly and can't reliably execute all those steps sequentially.
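The observe, plan, execute, evaluate cycle described above can be sketched in a few lines. This is a minimal illustration, not OpenAI's implementation: `AgentState`, `run_agent`, and `fake_execute` are hypothetical names, and the "plan" is simply a pre-filled list of steps rather than anything a model produced.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentState:
    goal: str
    pending: list[str]                                   # steps still to run
    results: dict[str, str] = field(default_factory=dict)


def run_agent(state: AgentState,
              execute: Callable[[str], str],
              max_iterations: int = 20) -> AgentState:
    """Run steps until none remain or the iteration cap is reached."""
    for _ in range(max_iterations):
        if not state.pending:                 # evaluate: nothing left means the goal is met
            break
        step = state.pending.pop(0)           # plan: pick the next step
        state.results[step] = execute(step)   # execute and record the observation
    return state


def fake_execute(step: str) -> str:
    # Hypothetical stand-in for a model or tool call.
    return f"done: {step}"


state = run_agent(
    AgentState(
        goal="investment memo",
        pending=[
            "summarize top 10 tech stocks",
            "find Q4 analyst projections",
            "draft memo",
        ],
    ),
    fake_execute,
)
```

The iteration cap matters more than it looks: without it, an agent that keeps adding steps to its own plan has no natural stopping point.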

OpenAI's API upgrade directly attacks this fragmentation. By explicitly supporting long-running operations, they are addressing the core architectural challenge of state management and persistence. Previous agent frameworks (like early iterations of AutoGPT) often relied on developers building complex, brittle layers *outside* the core LLM API to manage memory and retry logic. Now, these critical functionalities appear to be baked directly into the API infrastructure.

This centralization of agent logic within the API has significant implications. Persistence is what moves AI out of the lab and into mission-critical enterprise processes, a point that recurs in industry discussions of state management in complex workflows. When an agent can run for hours, it moves from answering a query to completing a substantial project, such as market research that would occupy a human analyst for days or debugging large sections of legacy code. This is the transition from AI as a helpful tool to AI as a digital colleague.

Corroborating Trend 1: The Need for Persistence

The industry recognizes that true autonomy requires memory that lasts longer than a single API call. Technical deep dives into agent architectures frequently highlight the difficulty of maintaining long context windows across multiple planning cycles without exploding token costs or losing vital information. The need for robust, built-in state management suggests OpenAI is abstracting away the complexity of maintaining session continuity, making it easier for developers to build systems that don't "forget" their goals midway through a task.
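To make the persistence problem concrete, here is the kind of checkpointing developers have typically written themselves. It is a sketch under stated assumptions: the file-based store, the state layout, and the function names `save_checkpoint` and `load_checkpoint` are all hypothetical, and nothing here reflects how the Responses API stores state internally.

```python
import json
import os
import tempfile


def save_checkpoint(path: str, state: dict) -> None:
    """Write state atomically so a crash never leaves a half-written file."""
    tmp = path + ".tmp"
    with open(tmp, "w", encoding="utf-8") as f:
        json.dump(state, f)
    os.replace(tmp, path)  # atomic rename over the previous checkpoint


def load_checkpoint(path: str, default: dict) -> dict:
    """Return the saved state, or the default if no checkpoint exists yet."""
    if not os.path.exists(path):
        return default
    with open(path, encoding="utf-8") as f:
        return json.load(f)


checkpoint = os.path.join(tempfile.mkdtemp(), "agent_state.json")

state = load_checkpoint(checkpoint, {"goal": "market research", "completed": []})
state["completed"].append("collect sources")
save_checkpoint(checkpoint, state)

# A restarted process picks up where the previous one stopped.
resumed = load_checkpoint(checkpoint, {"goal": "market research", "completed": []})
```

Built-in state management would, in principle, remove the need for this layer, which is exactly the brittle glue code the earlier agent frameworks depended on.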

The Power of On-Demand Skills: Evolution of Tool Use

Perhaps the most exciting feature mentioned is the ability to load reusable skill packages on demand. This goes far beyond the initial concept of Function Calling, where developers listed a finite set of tools at the start of a conversation.

Imagine an agent tasked with optimizing a logistics network. Initially, it might only need a database lookup tool. Halfway through the process, the agent realizes it needs to run a complex simulation that requires a specialized Python interpreter environment and access to weather APIs. The "on-demand skill loading" capability implies the agent can dynamically request and integrate this new toolkit without restarting or being pre-programmed with every single possibility.
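One way to picture on-demand loading is a registry that builds a skill only when the agent first asks for it. The sketch below is illustrative: `SkillRegistry` and the two skill names are hypothetical, and the actual format of OpenAI's skill packages is not described in this article.

```python
from typing import Callable

Skill = Callable[[str], str]


class SkillRegistry:
    """Builds each skill lazily, the first time it is requested."""

    def __init__(self) -> None:
        self._factories: dict[str, Callable[[], Skill]] = {}
        self._loaded: dict[str, Skill] = {}

    def register(self, name: str, factory: Callable[[], Skill]) -> None:
        self._factories[name] = factory

    def get(self, name: str) -> Skill:
        if name not in self._loaded:
            if name not in self._factories:
                raise KeyError(f"unknown skill: {name}")
            self._loaded[name] = self._factories[name]()  # load on first use
        return self._loaded[name]

    def loaded(self) -> list[str]:
        return sorted(self._loaded)


registry = SkillRegistry()
registry.register("db_lookup", lambda: lambda q: f"rows for {q}")
registry.register("simulation", lambda: lambda q: f"simulated {q}")

first = registry.get("db_lookup")("warehouse stock")
loaded_before = registry.loaded()   # only the lookup skill is in memory so far

second = registry.get("simulation")("route plan")
loaded_after = registry.loaded()    # the simulation skill was added mid-run
```

The design choice is that registration is cheap and loading is deferred, so an agent can know about many skills while paying the setup cost only for the ones it actually uses.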

Corroborating Trend 2: The Expanding Frontier of Function Calling

This development mirrors the wider trend of sophisticated tool use across the AI landscape. As sources focused on the evolution of AI Function Calling suggest, the focus is rapidly shifting from simple input/output mapping to complex, conditional tool orchestration. The model must not only know *what* tools exist but *when* to acquire them and *how* to integrate them into its execution chain. For software architects, this means designing APIs that are modular, discoverable, and executable by an autonomous reasoning layer, rather than just for human programmers.

The Business Case: Why Enterprise Demands Agentic Workflows

Why is OpenAI prioritizing these complex, resource-intensive capabilities? The answer lies in the immense projected value of automating multi-step business processes. Suppose a task currently takes an experienced human analyst 10 hours: reading documents, searching the web, using internal tools, and synthesizing findings. An autonomous agent that reliably completes it in 4 hours (2 hours of agent runtime plus 2 hours of human review) cuts elapsed time by 60% and the analyst's hands-on time by 80%, from 10 hours to 2.

Corroborating Trend 3: Enterprise Adoption as the Driving Force

Research into the enterprise adoption of autonomous agents points to strong demand for systems capable of these high-value, multi-step workflows. Industries like finance, law, R&D, and large-scale operations are the first movers. They are not looking for better chatbots; they are looking for AI systems that can manage entire segments of their digital labor force. The ability to access the web and plan over long periods is what transforms AI from a productivity booster into a structural cost-saver.

The Unseen Challenge: Infrastructure Economics

While the new API capabilities are exciting, they introduce significant operational hurdles. Running an AI agent continuously for hours means making hundreds or thousands of successive API calls, each requiring context to be managed. This places immense pressure on computational resources and introduces a new economic model for AI usage.

If a single complex research task consumes as much compute as 50 simple queries once did, the cost structure changes fundamentally. The viability of these long-running agents rests not just on the model's intelligence, but on the efficiency of the underlying infrastructure.
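A rough calculation shows why the cost curve bends so sharply. The sketch below assumes the worst case, where every call resends the full accumulated history, and all of the numbers (200 calls, a 2,000-token starting context, 500 tokens added per call) are made up for illustration; they are not OpenAI figures.

```python
def total_input_tokens(calls: int, base_context: int, tokens_added_per_call: int) -> int:
    """Input tokens processed when every call resends the full history so far."""
    return sum(base_context + i * tokens_added_per_call for i in range(calls))


def cost_usd(tokens: int, price_per_million: float) -> float:
    """Convert a token count to dollars at a given (hypothetical) price."""
    return tokens / 1_000_000 * price_per_million


# Illustrative numbers only.
single_query = total_input_tokens(calls=1, base_context=2_000, tokens_added_per_call=500)
long_run = total_input_tokens(calls=200, base_context=2_000, tokens_added_per_call=500)

ratio = long_run / single_query
```

Under these assumptions the single query processes 2,000 input tokens while the 200-call run processes 10,350,000, because the resent history grows with every step. Input volume therefore scales roughly with the square of the number of calls, which is why context management, and not just per-token price, drives the economics of long runs.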

Corroborating Trend 4: The Cost of Continuous Inference

Discussions around LLM inference costs are becoming central to AI viability. Developers and infrastructure experts are actively researching how to serve these sustained workflows economically. This includes debates over whether highly capable agents should always rely on the largest, most expensive frontier models, or if they should employ a tiered system—using a small, fast model for simple planning steps and only engaging the massive models for the complex "reasoning hubs." OpenAI’s API upgrade forces developers to confront these economic realities head-on, as sustained execution translates directly into sustained expenditure.
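The tiered approach can be sketched as a simple router. Everything here is hypothetical: the tier names, the 20x cost ratio, and the idea that each step arrives pre-labeled with its kind are assumptions made for illustration, and a real system would need some way to classify steps.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    relative_cost: float  # cost per step, relative to the small tier


# Hypothetical tiers; the 20x ratio is an assumption, not a published price.
SMALL = ModelTier("small-fast", 1.0)
LARGE = ModelTier("large-frontier", 20.0)


def route(step_kind: str) -> ModelTier:
    """Send only steps tagged as hard reasoning to the expensive tier."""
    return LARGE if step_kind == "reasoning" else SMALL


steps = ["plan", "lookup", "reasoning", "lookup", "plan", "reasoning", "format"]

tiered_cost = sum(route(s).relative_cost for s in steps)
all_large_cost = LARGE.relative_cost * len(steps)
```

With these made-up numbers the tiered run costs 45 units against 140 for sending every step to the large model. The saving depends entirely on how few steps truly need the frontier model and on how reliably the router can tell them apart.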

Future Implications: Architecting the Agent Ecosystem

This API enhancement marks the beginning of a true separation between two classes of AI users:

- The Prompt Engineer: Those who optimize single-turn interactions for maximum output quality (still highly valuable).

- The Agent Architect: Those who design the mission, define the skill packages, manage the state handoffs, and monitor the long-running execution environment.

For developers, this means the focus shifts from mastering prompt syntax to mastering workflow design. Building these systems effectively will require deep knowledge not just of the LLM, but of security protocols for external tool access, robust error handling for long jobs, and strategies for efficient context recycling to manage costs.
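As one example of context recycling, an agent can replace older messages with a summary and keep only the most recent ones verbatim. This is a minimal sketch: `recycle_context` and `naive_summary` are hypothetical names, and the summary here is a placeholder string where a real system would likely ask a model to write one.

```python
from typing import Callable


def recycle_context(messages: list[str],
                    keep_recent: int,
                    summarize: Callable[[list[str]], str]) -> list[str]:
    """Replace all but the most recent messages with a single summary entry."""
    if keep_recent < 1:
        raise ValueError("keep_recent must be at least 1")
    if len(messages) <= keep_recent:
        return list(messages)
    older, recent = messages[:-keep_recent], messages[-keep_recent:]
    return [summarize(older)] + recent


def naive_summary(older: list[str]) -> str:
    # Hypothetical stand-in for a model-written summary.
    return f"[summary of {len(older)} earlier messages]"


history = [f"step {i}" for i in range(1, 11)]
compact = recycle_context(history, keep_recent=3, summarize=naive_summary)
```

The trade-off is between cost and fidelity: a smaller window is cheaper per call, but anything the summary drops is gone for the rest of the run.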

Actionable Insight for Businesses: Start Experimenting with Agent Sandboxes

Businesses should not wait until these agents are perfectly stable. The time to act is now: identify internal processes that are multi-step, data-intensive, and currently bottlenecked by human time. Then create "sandboxes," controlled environments where rudimentary agents can test a small part of a known complex workflow. This lets teams gain familiarity with the new operational paradigm by observing where the agents fail, where they hallucinate during long runs, and what specific "skill packages" (APIs or internal tools) need to be securely exposed.

Conclusion: The Dawn of Persistent Digital Labor

OpenAI’s focus on long-running, autonomous agents via its Responses API is a clear signal that the next major productivity wave will be powered by persistent, goal-driven AI. We are moving past the novelty phase of LLMs and entering the industrialization phase, where AI tools are expected to execute long, complicated tasks reliably, accessing necessary resources as they go. This evolution demands architectural sophistication, careful economic planning, and a strategic view of what tasks are ready to be handed over to persistent digital workers.

The challenge is no longer teaching the AI to talk; it is teaching it how to work, uninterrupted, for hours on end. The framework provided by this API upgrade is a powerful step toward making that vision a ubiquitous reality.