The End of Gibberish: How Alibaba's Qwen-Image-2.0 Redefines Text Fidelity in Multimodal AI

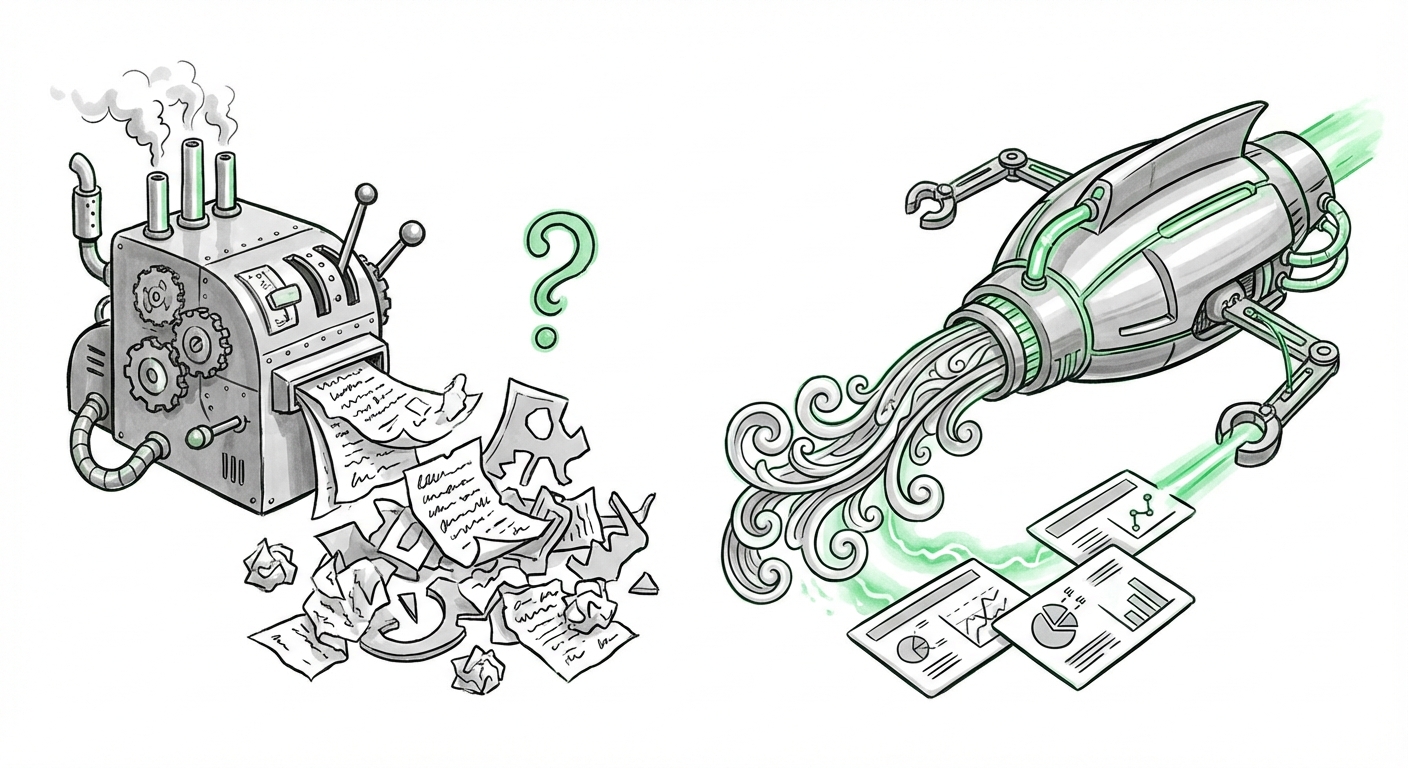

For years, text-to-image AI models—from early diffusion models to even recent high-profile iterations—shared a peculiar, almost comical flaw: they could paint breathtaking landscapes, photorealistic portraits, and surreal concepts, but ask them to write a simple word, and they produced beautiful, elaborate gibberish. This failure to master textual coherence was the final, glaring gap between powerful image generation and true utility.

The introduction of Alibaba’s Qwen-Image-2.0 marks a pivotal moment suggesting that gap is rapidly closing. By demonstrating "near-perfect text accuracy," not just in standardized English but critically in complex, high-context scripts like ancient Chinese calligraphy and structured digital formats like PowerPoint slides, Qwen-Image-2.0 is forcing us to recalibrate our expectations for multimodal AI.

The Fidelity Wall: Why Perfect Text Matters

To appreciate this development, we must understand why text rendering has been so difficult. An image model works in pixel space (or in a compressed latent representation of it); it thinks visually. Language, however, is symbolic, discrete, and governed by strict grammatical and orthographic rules. Generating the letter 'R' requires the model to get not just the shape right but also the context: is it a logo? A header? A historical document?

Historically, models struggled because they treated text as textures rather than symbols that needed to be correctly sequenced. If you asked DALL-E 2 for "A sign that says CAT," you might get a picture of a cat next to a shape that vaguely resembles C-A-T. This is often referred to as the "gibberish text problem."

Benchmarking Against the Elite

The race to solve this has been intense. Coverage of models like DALL-E 3 and Google's Gemini has frequently highlighted improved text handling as a key differentiator against their predecessors. The technical consensus suggests that reliable text requires tighter integration between the language model component and the image generation (diffusion) component. It’s no longer enough to describe the *style* of the text; the model must *know* the text.

Qwen-Image-2.0’s performance suggests it has not just met the industry standard set by Western competitors but potentially exceeded it in specific, challenging domains. By showcasing **ancient Chinese calligraphy**, the model indicates that it can handle scripts with highly variable stroke weights, complex structural rules, and deep cultural context, a feat that requires a robust grasp of symbolic representation within a visual canvas. Accuracy at this level, if it holds up under independent testing, would call for a re-evaluation of current multimodal benchmarks.

The Competitive Edge: Localized Mastery vs. Global Scale

Qwen’s success must be viewed through the lens of the global AI competition, where Chinese technology firms are rapidly closing the gap on foundational models. The focus on complex local scripts is a clear strategic differentiator.

When we look at the global landscape, models like Google's Gemini are praised for their broad, highly capable multimodal integration, often blending text understanding with video analysis. However, Qwen-Image-2.0’s specialization suggests a crucial strategy: achieving **hyper-fidelity** in niche, high-value areas where generalist models might fall short due to training data bias.

This localized mastery is not merely technical boasting; it’s a market signal. For businesses operating in East Asia, the ability to generate accurate, culturally resonant visuals containing complex characters (whether historical or modern) is non-negotiable. This specialization positions Qwen and Alibaba favorably in specific enterprise segments, validating the investment in domestic foundational models that deeply understand local linguistic nuances.

Further analysis into the competitive landscape often centers on how foundational models integrate across modalities. Reports on Google's Gemini 1.5 Pro, for instance, emphasize blending text and video, setting a high bar for general multimodal integration. Qwen’s success in visual text fidelity suggests a strategy to dominate the *precision* aspect of multimodal output, complementing generalized understanding.

The PowerPoint Revelation: Moving from Art to Enterprise

Perhaps the most commercially striking aspect of the Qwen-Image-2.0 announcement is its reported ability to render **PowerPoint slides** accurately. This moves generative AI from abstract art creation toward directly replacing structured business workflows.

Imagine the workflow today: A marketer needs a slide summarizing quarterly results. They write the text, export it, open a graphic design tool (like Photoshop or Canva), manually place the text, adjust fonts, and ensure alignment. This process is slow and requires specialized software knowledge.

With Qwen-Image-2.0’s demonstrated ability, the future workflow becomes:

- Input prompt: "Generate a quarterly review slide for Q3, using the Qwen corporate color palette, featuring these three bullet points exactly as written."

- Output: A pixel-perfect image of the slide, ready for immediate integration into the presentation deck.

This capability could eliminate the "copy-paste-and-fix" cycle that plagues current design automation attempts. It suggests that the model handles **layout metadata**: where the title should go, how bullet points structure hierarchy, and the requirement for absolute textual correctness.

The shift toward automated complex visual content is a major theme in enterprise tech. Analysts note that AI must move beyond simple image generation to handle structured outputs necessary for business workflows. Qwen’s reported success in layout generation, as seen in the PowerPoint example, is exactly this "next frontier," moving tools from fun novelties to essential productivity boosters.

Future Implications: The Death of Visual Incoherence

What does this evolution mean for the next five years of AI development?

1. Reliability Over Novelty

The focus will shift from "Can the AI make something weird?" to "Can the AI make exactly what I asked for, reliably?" Perfect text fidelity is a prerequisite for AI to be trusted with sensitive or mission-critical outputs—legal documents, technical diagrams, corporate branding assets, and educational materials. When AI stops producing visual nonsense, its utility in professional settings skyrockets.
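
One way to make "exactly what I asked for" measurable is a character error rate: the edit distance between the text requested in the prompt and the text actually read back from the generated image, divided by the length of the requested text. The sketch below is illustrative only. It assumes the rendered text has already been extracted by some OCR step that is not shown, and the function names are hypothetical rather than part of any Qwen tooling or published benchmark.

```python
def levenshtein(a: str, b: str) -> int:
    """Edit distance between two strings (insertions, deletions, substitutions)."""
    if len(a) < len(b):
        a, b = b, a  # keep the inner row as short as possible
    previous = list(range(len(b) + 1))
    for i, ch_a in enumerate(a, start=1):
        current = [i]
        for j, ch_b in enumerate(b, start=1):
            cost = 0 if ch_a == ch_b else 1
            current.append(min(
                previous[j] + 1,         # drop a character from a
                current[j - 1] + 1,      # add a character to a
                previous[j - 1] + cost,  # substitute (or match)
            ))
        previous = current
    return previous[-1]


def character_error_rate(requested: str, rendered: str) -> float:
    """Edit distance normalised by the length of the requested text.

    `rendered` is assumed to come from an OCR pass over the generated
    image; that step is outside the scope of this sketch.
    """
    if not requested:
        return 0.0 if not rendered else 1.0
    return levenshtein(requested, rendered) / len(requested)


if __name__ == "__main__":
    # A sign that should say CAT but came out with one wrong letter.
    print(character_error_rate("CAT", "CAF"))
```

A score of 0.0 means the rendered text matches the request character for character. Because the metric works on Unicode code points, the same function applies to Chinese characters as well as Latin letters.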

2. Deepening Multimodal Integration

Qwen-Image-2.0 suggests that tight coupling between language understanding and visual synthesis is achievable. We will likely see this pattern expand across other modalities. Expect models that can accurately render complex architectural blueprints, generate functioning code snippets within a mock-up UI, or translate and overlay subtitles onto video frames without distortion.

3. Reshaping the Creative Economy

For graphic designers, this is both a threat and an opportunity. Routine tasks involving text placement (like creating standardized advertisements, simple infographics, or internal communications slides) can now be automated. The actionable insight here is clear: creatives must pivot toward leveraging AI for rapid prototyping and focus their human expertise on conceptualization, emotional resonance, and custom aesthetics that still push the boundaries of what the machine can generate.

For specialized fields like historical archiving or cultural preservation, the calligraphy breakthrough is profound. Imagine being able to feed a system thousands of ancient, faded documents and have the AI not only attempt transcription (text modeling) but also generate a high-resolution, visually perfect digital restoration of the original script (image modeling). This opens new avenues for digital humanities research.

Actionable Insights for Tech Leaders and Implementers

For businesses looking to capitalize on these advancements, the time for passive observation is over. The move to reliable text generation changes the deployment calculus:

- Audit Content Pipelines: Identify bottlenecks where manual graphic design or text placement slows down output (e.g., localization, creating regional marketing variants, updating presentation decks). These are prime targets for Qwen-like models.

- Re-evaluate Text Training Data: If your business relies on specific brand fonts, complex product labeling, or proprietary symbols, understand whether your chosen multimodal model has been specifically trained or fine-tuned to respect those assets. Qwen’s success suggests specialized training yields superior results.

- Embrace Structured Prompts: The accuracy demonstrated implies that the models are responding well to highly structured, detailed prompts. Shift engineering efforts toward developing robust prompt engineering guidelines that treat the image generator like a database query requiring specific positional and textual parameters, not just abstract concepts.
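
To make the "database query" idea concrete, here is a minimal sketch of a prompt built from explicit fields instead of free-form prose. Everything in it is an assumption for illustration: the `TextElement` and `SlideSpec` classes, the field names, and the prompt wording are hypothetical and are not a documented Qwen-Image-2.0 input format.

```python
from dataclasses import dataclass, field


@dataclass
class TextElement:
    text: str      # exact string that must appear in the image
    role: str      # hypothetical label, e.g. "title" or "bullet"
    position: str  # hypothetical placement hint, e.g. "top-center"


@dataclass
class SlideSpec:
    palette: str
    elements: list[TextElement] = field(default_factory=list)

    def to_prompt(self) -> str:
        """Flatten the spec into a numbered, explicit text prompt."""
        lines = [
            f"Generate a presentation slide using the {self.palette} color palette.",
            "Render each text element exactly as written:",
        ]
        for index, element in enumerate(self.elements, start=1):
            lines.append(
                f'{index}. {element.role} at {element.position}: "{element.text}"'
            )
        return "\n".join(lines)


if __name__ == "__main__":
    spec = SlideSpec(
        palette="corporate",
        elements=[
            TextElement("Q3 Review", "title", "top-center"),
            TextElement("Revenue grew", "bullet", "left, row 1"),
        ],
    )
    print(spec.to_prompt())
```

Keeping the required strings in a structure like this also gives a team something to check the output against, since the exact text that was requested stays available after the image comes back.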

Conclusion: Precision as the New Frontier

Alibaba’s Qwen-Image-2.0 is more than just an iterative improvement; it represents a qualitative shift. By conquering the challenge of legible, contextually perfect text across wildly different visual domains—from the flowing art of calligraphy to the rigid structure of a business slide—it signals that multimodal AI is maturing past the novelty phase. Precision is the new frontier. As these models continue to weave text and image together seamlessly, the line between digital creation and digital reality blurs further, promising efficiency gains that were previously confined to science fiction.