The Great Trust Fracture: Why AI Monetization Choices Define the Future of Generative Models

The digital landscape is witnessing a profound moment of reckoning. The recent resignation of a prominent researcher from OpenAI, spurred by fundamental disagreements over the introduction of advertisements into ChatGPT, is far more than an internal personnel drama. It is a flashing warning sign about the deep, irreconcilable tension between the *promise* of advanced, safe Artificial Intelligence and the unrelenting *pressure* of commercial viability.

When a platform is positioned as a fundamental utility—a tool meant to augment human knowledge and creativity—integrating an advertising model fundamentally changes its purpose. This incident forces us to confront the central question shaping the next decade of AI development: Will generative AI serve humanity’s best interests, or will it become just another vehicle for capturing attention and exploiting personal data?

The Crossroads: Safety vs. Scale

The core concern, voiced by former researcher Zoe Hitzig, is a crisis of trust. For someone who has spent years building sophisticated models, the fear is that the temptation to exploit the rich, personal, and contextual data flowing through user prompts will become too great for a company under intense commercial pressure to resist. Simply put: if the product is free (or ad-supported), *you* are the product.

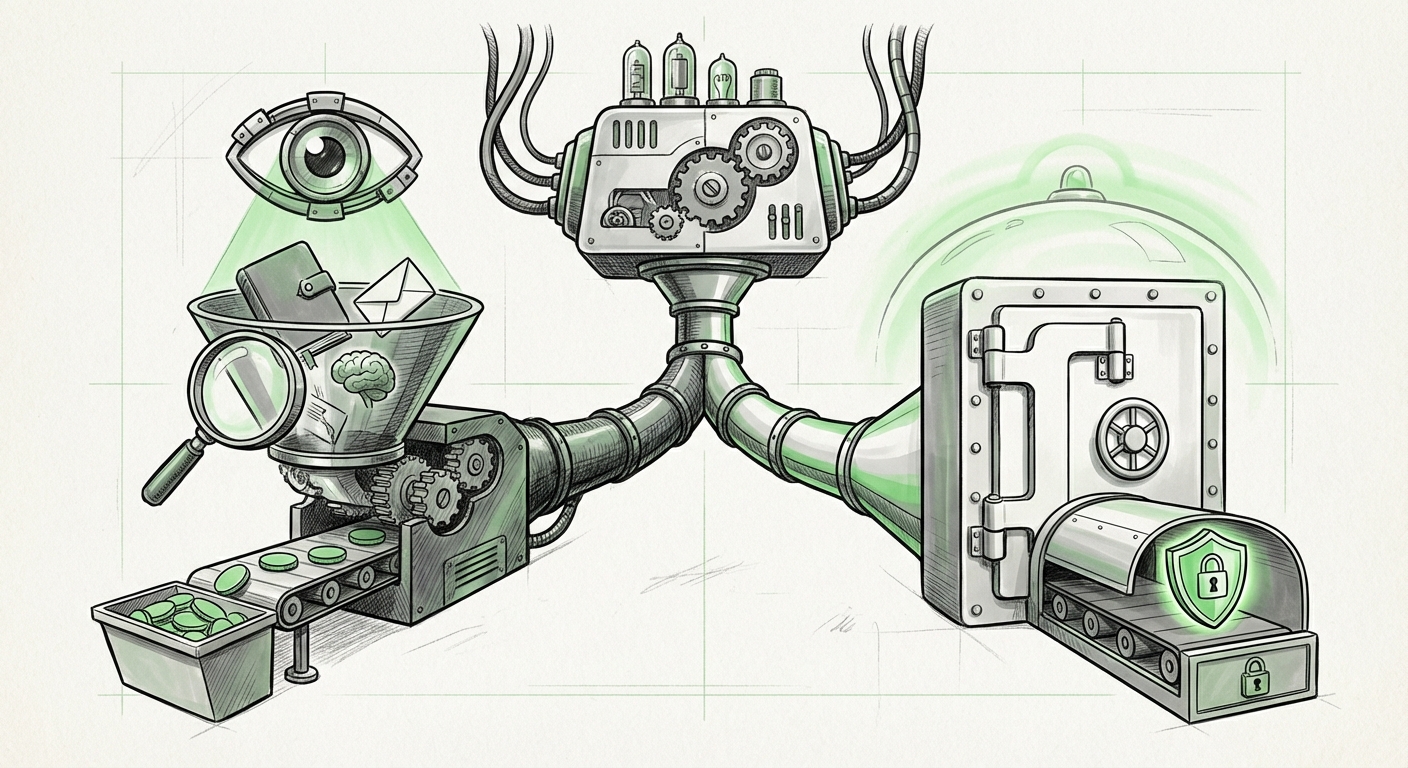

This situation highlights the distinct paths companies are choosing for **LLM monetization strategies and ethical concerns**. On one side stands the subscription/enterprise-focused model, which aligns incentives with user satisfaction and data privacy: the cleaner the service, the more the premium user is willing to pay. On the other is the ad-supported model, which rewards maximum engagement and deep data profiling to deliver targeted messaging.

The Allure of the Ad Model

For a company like OpenAI, which has burned billions in capital chasing AGI, the need for rapid, massive revenue streams is undeniable. Ads offer scale unmatched by subscriptions alone. However, integrating ads into the conversational interface of an LLM is uniquely risky. Users are not just clicking buttons; they are inputting highly personal queries, drafting sensitive emails, debugging proprietary code, or seeking emotional support. Turning that input stream into an advertising vector represents an unprecedented level of surveillance capability.

As we look at competitor strategies, we see a clear divergence. Companies like Anthropic, for instance, have often positioned themselves against this path, favoring enterprise trust and strict data boundaries, effectively carving out a “privacy-first” niche. This competition suggests that ethical constraints are not just an academic debate; they are becoming a competitive differentiator.

Learning from History: The Pattern of Platform Creep

Hitzig’s distrust is not born in a vacuum. It is rooted in decades of technological history. The transition from utility to exploitation is a well-worn path in Silicon Valley. This is why examining the **history of privacy breaches following freemium/ad integration in tech** is crucial context.

Consider the evolution of major platforms—from search engines that promised better results to platforms that cataloged every facet of user life for advertisers. Each platform began with a lofty, helpful mission, often funded by early investment capital. Once the revenue demands of public markets or large investors took hold, the initial promises regarding data stewardship often softened. The shift is subtle at first—a minor update to the Terms of Service, a new data-sharing agreement—but the effect is cumulative.

For the general consumer, this suggests a grim prediction: if the primary way a revolutionary tool like ChatGPT is funded is through advertising, users should assume their most intimate conversations will eventually be analyzed, categorized, and leveraged for commercial purposes, regardless of present reassurances.

The Privacy Paradox in AI

When using a traditional search engine, the user is aware of the transaction: searching for information often results in seeing ads for related products. With LLMs, the interaction feels more like a private consultation. If that consultation is monetized via surveillance, it creates a fundamental "privacy paradox" in the AI age. Users *need* the tool for productivity, but using it compromises the very privacy they seek to maintain.

The Role of Internal Dissent and Ethical Guardrails

The most compelling aspect of this story is that the warning is coming from the inside. This is not a competitor or a regulator speaking; it is someone who helped architect the technology. This brings us to the tension surrounding **internal whistleblowing and ethical conflicts in major AI labs**.

The departure of high-level researchers over mission conflict signals a systemic fracture between the "Builder Class" and the "Business Class" within leading AI organizations. The researchers are focused on alignment, safety, and long-term beneficial outcomes. The executives are focused on market capture and shareholder value.

When these two forces clash to the point of resignation, it suggests that internal governance structures designed to enforce safety protocols are buckling under commercial expectations. For AI researchers globally, this event serves as a powerful cautionary tale: documenting ethical reservations is vital, but sometimes, leaving the system entirely is the only way to broadcast the severity of the danger.

Future Implications: What This Means for AI Deployment

This single act of resignation has vast implications for the technological landscape:

- The Polarization of AI Products: We will likely see a clearer split in the market. One segment, funded by institutional capital and prioritizing safety (perhaps Anthropic or highly secure enterprise deployments), will market itself on trustworthiness. The other, fueled by the need for mass adoption and ad revenue (potentially OpenAI’s consumer tier), will prioritize ubiquity, accepting a lower standard of privacy.

- The Consumer Responsibility Shift: Users can no longer afford to passively accept terms. The implication is clear: if you use a "free" AI service for work or personal matters, you are tacitly agreeing to be advertised to via your data. Actionable insight: Enterprises must migrate sensitive workloads to private, clearly governed instances immediately.

- Regulatory Scrutiny Intensifies: Government bodies are watching these internal ethics battles closely. The researcher’s public critique provides regulators with a potent narrative—that the industry cannot effectively police itself when profit motives interfere with promised safety standards. This resignation accelerates the demand for hard-line regulatory intervention regarding data usage in LLMs.

Actionable Insights for Businesses and Developers

For businesses relying on generative AI, the lesson is simple: trust must be contractually guaranteed, not merely hoped for.

- Audit Your AI Vendors: Do not accept vague assurances about data usage. Demand clear contractual clauses stating that your prompts, outputs, and user context data will *not* be used for training new models or targeting advertising.

- Segment Your AI Use: Keep all proprietary, sensitive, or strategic development work on isolated, paid, or self-hosted models. Use the free or ad-supported tiers only for generalized tasks where data leakage is irrelevant (e.g., brainstorming general concepts).

- Value Internal Dissent: Organizations where researchers feel safe enough to raise ethical objections openly, and are heard before resignation becomes the only option, tend to have the strongest underlying safety cultures. Conversely, those where dissent is silenced face higher risks of catastrophic ethical failures down the line.
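
The vendor-audit and segmentation advice above can be turned into a simple routing rule that a team enforces before any prompt leaves the building. What follows is a minimal Python sketch under stated assumptions: `Sensitivity`, `Tier`, `Vendor`, and `is_allowed` are hypothetical names invented for illustration, not any provider's API, and the two boolean flags stand in for contract clauses that would need to be verified by legal review.

```python
from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    GENERAL = "general"      # generic brainstorming, nothing proprietary
    SENSITIVE = "sensitive"  # proprietary, strategic, or personal material


class Tier(Enum):
    FREE_OR_AD_SUPPORTED = "free_or_ad_supported"
    PAID = "paid"
    SELF_HOSTED = "self_hosted"


@dataclass(frozen=True)
class Vendor:
    """Hypothetical record of one AI service and what its contract guarantees."""
    name: str
    tier: Tier
    no_training_clause: bool      # contract forbids training on our data
    no_ad_targeting_clause: bool  # contract forbids ad targeting on our data


def is_allowed(vendor: Vendor, sensitivity: Sensitivity) -> bool:
    """Return True if a workload of this sensitivity may be sent to the vendor."""
    if sensitivity is Sensitivity.GENERAL:
        # General tasks may go anywhere, including free or ad-supported tiers.
        return True
    if vendor.tier is Tier.SELF_HOSTED:
        # Data never leaves our own infrastructure.
        return True
    if vendor.tier is Tier.PAID:
        # A paid plan alone is not enough: both clauses must be in the contract.
        return vendor.no_training_clause and vendor.no_ad_targeting_clause
    # Sensitive work never goes to a free or ad-supported tier.
    return False
```

In this sketch, a paid vendor whose contract lacks either clause is treated the same as a free tier for sensitive work, which mirrors the point that trust must be contractually guaranteed rather than assumed from the price.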

Conclusion: The Imperative of Trust

The transition of powerful foundational models from research curiosities to global commercial products is proving to be a messy, ethically charged process. The departure over the ads issue is a clear signal that the foundational promise of AI, to enhance human capability without compromising fundamental rights, is structurally vulnerable to short-term revenue demands.

The future of AI is not just about building smarter models; it is about building trustworthy institutions around those models. If the pioneers of the technology cannot agree on basic principles of user respect, how can the public be expected to trust the resulting systems? This moment demands that both developers and consumers hold the line: if the tool is too powerful to trust with your most private thoughts, then the business model supporting it is fundamentally broken.