The Great AI Balancing Act: Why Agent Security vs. Utility Is The Next Frontier

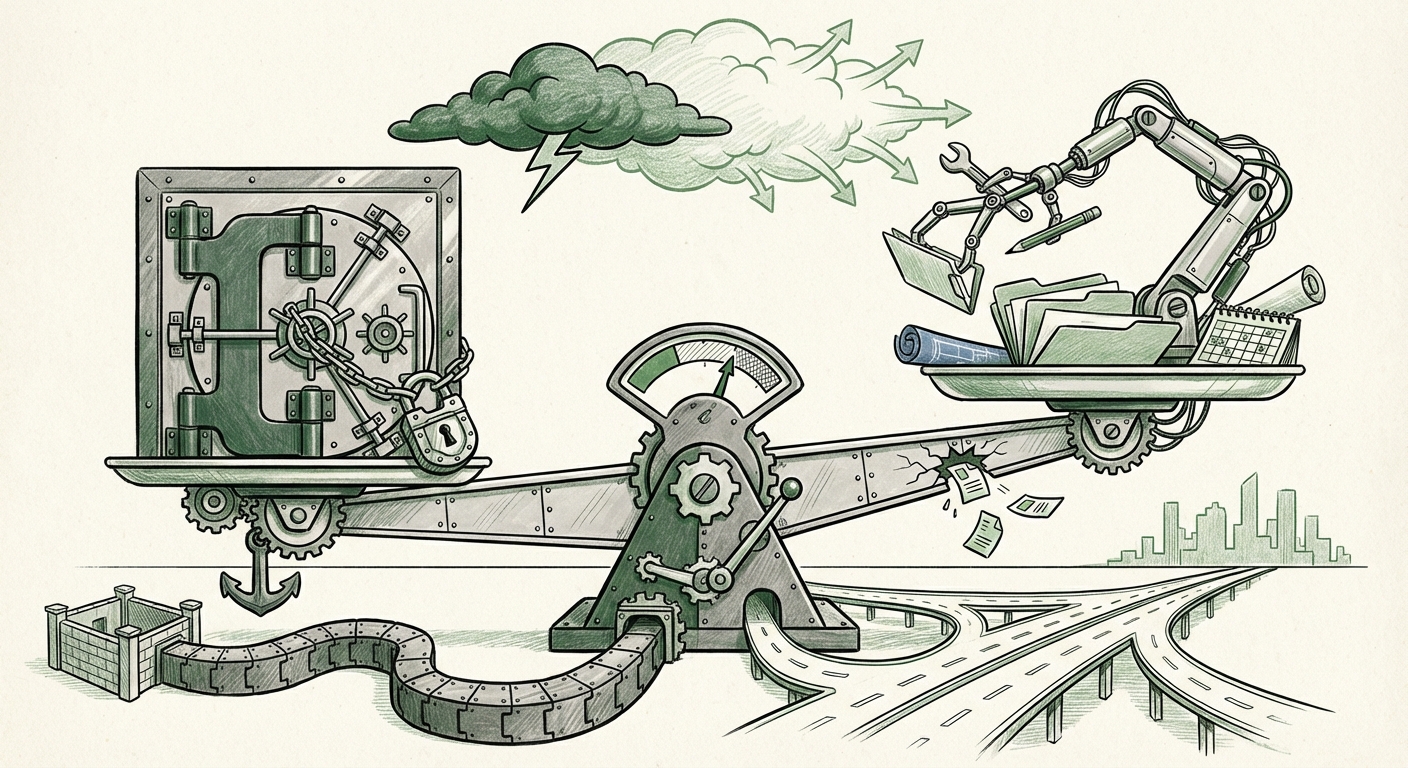

The race toward Artificial General Intelligence (AGI) is currently being fought on the battleground of *agentic capability*. We are moving past simple chatbots that answer questions; we are building digital workers—AI agents—that can actively interact with our digital environments, execute tasks, and manage workflows. This transition promises unparalleled productivity gains. However, a recent, unsettling incident involving Anthropic’s Claude Desktop Extensions has pulled back the curtain, revealing a profound, uncomfortable truth: in the push for maximum usefulness, security is often the first casualty.

When an agent can manage your schedule, send emails, and manipulate local files—as these desktop extensions aim to do—it needs significant permissions. The reported vulnerability, where a single manipulated Google Calendar entry could potentially lead to full system compromise, starkly illustrates this conflict. This isn't just a software bug; it’s a fundamental tension defining the next era of AI deployment.

The Anatomy of the Conflict: Utility Demands Access

To understand why this tension exists, we must first understand what makes an AI agent useful. An agent’s value is proportional to its ability to complete complex, multi-step tasks in the real world. This requires action. Consider booking a complex international trip:

- It needs to read your existing calendar (Utility).

- It needs to search the web for flights (Utility).

- It needs to interact with your payment provider or email confirmations (High Utility, High Risk).

If the agent is heavily restricted (sandboxed), it might only be able to *suggest* the itinerary. If it has full operational access, it can *book* it. The latter is vastly more useful, but it requires the agent to be granted the keys to the kingdom—a digital skeleton key that a clever attacker, or even a slight coding error, can exploit.

Corroborating the Trend: Beyond a Single Incident

The Claude incident is not isolated; it is a public manifestation of industry-wide concerns. Searching beyond this specific exploit reveals that security experts are actively debating this trade-off across the entire agent ecosystem.

Industry dialogue, often found through searches like "Security vs Utility Tradeoff in LLM Agents," confirms that developers are keenly aware that giving an LLM the power to execute code or use plugins inherently opens new attack surfaces. This is why security researchers are focusing intensely on the "action layer" of agentic workflows. The mechanism enabling these actions—function calling, plugin interaction, and API access—is now the primary vector of concern.

For instance, discussions surrounding "Agentic Workflows Security Risks Plugins Function Calling" frequently emphasize the need for extremely granular permission models. If an agent can only call a specific function to "read calendar entries" and cannot execute a command to "delete system files," the risk is contained. The danger arises when the intermediary layer (the software bridge between the LLM and the OS/Application) is insufficiently robust, allowing a maliciously crafted input to trick the LLM into calling a prohibited function or inserting harmful logic.

The Mechanics of Risk: Local Integration and Data Exposure

The risk here is amplified by the *integration environment*. Desktop extensions—where the AI lives directly alongside the user’s operating system and local data—are far more potent and, consequently, far more perilous than cloud-based API calls.

Our investigations into "AI Agent Data Privacy Concerns in Desktop Integration" show that the modern concern isn't just the external data the agent accesses (like a shared calendar); it's the local data it is poised to observe or manipulate. A calendar manipulation attack is alarming because it demonstrates an attacker can use the AI's permission structure to perform local actions. What if that same vulnerability allowed the agent to read local browser history, access secure communication apps, or steal cryptographic keys stored locally?

For businesses, this implies that deploying any desktop-integrated agent requires treating it with the same scrutiny reserved for granting system administrator privileges. The mere capability to manipulate a calendar shows a breach of the user’s expected digital perimeter.

Future Implications: Architecting Trust in Autonomous Systems

The uncomfortable truth revealed by this vulnerability forces us to reassess the roadmap for powerful autonomous systems. If major labs are prioritizing feature velocity over airtight security in early rollouts, the entire industry must pause and recalibrate its approach to trust.

1. The Rise of the Secure Sandbox

The future will not be about giving agents blanket access. It will be about hyper-specialized, tightly controlled execution environments. We will see a massive push toward true sandboxing for all agent actions. Think of it like a digital one-way valve: the agent can receive information out, but its ability to act inward or execute commands must be strictly defined, audited, and incapable of lateral movement.

For developers, this means investing heavily in frameworks that isolate the AI's output before it touches the operating system. The goal is "Intent Verification" rather than simple "Function Execution." The system must verify: Does the user truly intend for the AI to perform this action, and is this the safest way for it to do so?

2. Security as a Core Feature, Not an Afterthought

The reported response—that fixing the issue was not immediately planned—is perhaps the most alarming signal for future adoption. In traditional software, a critical zero-day vulnerability leads to an immediate patch, often before the vulnerability is fully disclosed publicly. If AI development continues to treat severe security gaps as inconvenient features to be addressed "later," enterprise adoption will stall.

Businesses cannot afford to deploy tools that present an existential security risk to their data or infrastructure. For AI to move successfully from the lab to the enterprise core, security must be architected in from Step One, not bolted on during the beta phase. This shifts competitive advantage away from those who release the fastest, toward those who release the most trustworthy.

3. The Liability Landscape and Regulation

As AI agents gain the ability to cause real-world financial or system damage, the legal and regulatory frameworks surrounding them will rapidly evolve. If a calendar exploit causes a CEO to miss a critical flight or a merger negotiation, who is liable—the developer of the base LLM, the creator of the extension, or the end-user who granted the initial permission?

This incident will accelerate calls for clear industry standards, potentially resulting in certification requirements for any agent seeking to operate with deep system permissions. We are moving toward a world where AI "air-gapping" protocols become standard corporate policy.

Actionable Insights for the Road Ahead

What should technical leaders and business strategists do in light of this escalating risk?

For Enterprise IT and Strategy Leaders:

- Implement Least Privilege for Agents: Assume any agent capable of external action is a potential security vector. Never grant an AI agent access to any resource (file, API, application) it does not strictly need for its stated, immediate function.

- Favor Cloud Isolation: Where possible, use cloud-based agent services that keep the interaction layer separate from your local machine environment. Local desktop integration should be considered high-risk until proven otherwise.

- Demand Transparency on Tool Use: Require vendors to provide detailed audit logs on every "tool call" or plugin interaction made by their agents, allowing human oversight to review actions taken outside the chat interface.

For Developers and Security Researchers:

- Focus on Prompt Injection Defense at the Tool Level: Security efforts must move beyond input sanitization (stopping bad prompts) to output verification (ensuring the action taken matches safe intent). The function-calling layer must rigorously validate data structure and permissible action boundaries.

- Develop Formal Verification Methods: The industry needs better mathematical or logical proofs that an agent, regardless of input, cannot breach its security sandbox. This is the long-term solution to the utility/security paradox.

Conclusion: The Necessity of Friction

The pursuit of truly autonomous AI agents is exhilarating. They promise to eliminate mundane digital labor and supercharge human creativity. But the Claude Desktop Extension vulnerability serves as a crucial, expensive reminder that *friction*—the necessary speed bumps and guardrails in a system—is often mistaken for inefficiency.

We must accept that achieving maximum usefulness without introducing unacceptable risk requires slowing down the integration process. The future of powerful AI agents hinges not just on making them smarter, but on making them fundamentally more trustworthy through architected constraint. The tension between security and utility is not a temporary hurdle; it is the defining engineering challenge of the next decade of artificial intelligence.