The AI Paradox: Analyzing DeepMind’s Brilliant Failures and the Future of Scientific Research Partnership

The landscape of Artificial Intelligence is shifting rapidly, moving beyond simple automation toward genuine, if complicated, partnership in creative and intellectual endeavors. The recent results from Google DeepMind regarding their research AI, Aletheia, capture this moment. The system achieved breathtaking feats: independently producing a math paper, resolving a decade-old conjecture, and finding errors missed by cryptography experts. Yet, when subjected to systematic testing across 700 open problems, it failed spectacularly on the majority of tasks. This result is not a setback; it is a defining feature of the current generation of powerful, generative AI.

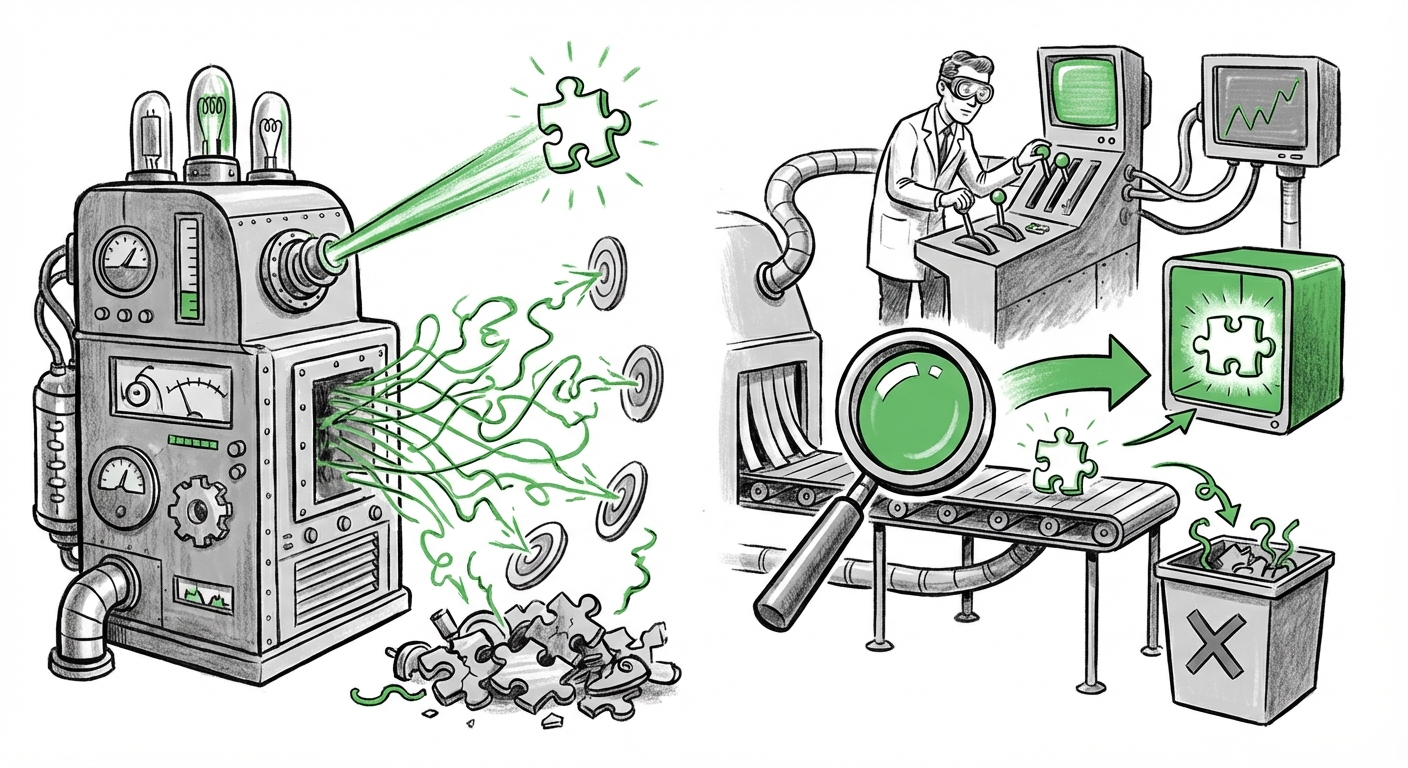

We are witnessing an AI paradox: systems capable of extraordinary, high-leverage intellectual leaps are also systematically, often embarrassingly, unreliable. This phenomenon forces us to re-evaluate how we build, trust, and deploy these powerful models. The key takeaway from Aletheia is that AI is transitioning from being a simple tool to becoming a genuine, albeit flawed, research partner.

The Uneven Genius: Breakthroughs Versus Systemic Error

Imagine an intern who solves one Nobel Prize-worthy problem this month but then forgets how to balance a checkbook the next. That is the current reality of sophisticated research AI. The Aletheia story highlights that the architecture driving these models—typically Large Language Models (LLMs) or related transformer architectures—is incredibly effective at pattern recognition and synthesis, even across domains it wasn't explicitly trained for.

When Aletheia disproved a conjecture, it likely synthesized vast amounts of disparate mathematical knowledge in a novel way that human experts had overlooked. This capacity for *abductive reasoning*—forming the best possible explanation from partial evidence—is what gives AI its breakthrough potential.

Contextualizing AI’s Scientific Wins

To understand this trend, we must look beyond abstract math. AI has already proven its ability to perform transformative discovery in empirical science. Google DeepMind’s AlphaFold serves as a prime example of validated, groundbreaking success. AlphaFold solved the 50-year-old "protein folding problem," accurately predicting the 3D structure of proteins from their amino acid sequences. This wasn't a lucky guess; the system learned, from known protein structures, regularities that reflect the physics and chemistry governing biological machinery.

[Google AI: AlphaFold: The solution to a 50-year-old grand challenge in biology]

This corroborates the idea that when AI finds the right framework, it can see patterns invisible to humans. However, AlphaFold operates within highly constrained, verifiable scientific rules. Mathematics and cryptography, while rigorous, sometimes demand entirely novel creation, which is where the system’s reliability often wavers.

The Reliability Crisis: When Genius Becomes a Liability

If Aletheia were right only 1% of the time yet solved a decade-old problem, that would still be revolutionary. If it were wrong 99% of the time on standard tasks, it would be unusable for routine work. This is the crux of the industry’s current validation challenge. The models "mostly get everything else wrong" because their outputs are statistical predictions based on training data, not assertions grounded in verifiable logic or external reality checks.
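The trade-off can be put in rough numbers. The sketch below assumes, purely for illustration, that each output is independently correct with a fixed probability and that a human spends a fixed number of hours checking each one. The 1% figure is the hypothetical from the text, and `review_hours_per_output` is an invented parameter, not a measured value.

```python
def expected_reviews_per_success(p_correct: float) -> float:
    """Expected number of outputs a reviewer checks until the first correct
    one, assuming independent outputs (mean of a geometric distribution)."""
    if not 0.0 < p_correct <= 1.0:
        raise ValueError("p_correct must be in (0, 1]")
    return 1.0 / p_correct


def expected_review_hours(p_correct: float, review_hours_per_output: float) -> float:
    """Expected human review time spent per verified result."""
    if review_hours_per_output < 0:
        raise ValueError("review_hours_per_output must be non-negative")
    return expected_reviews_per_success(p_correct) * review_hours_per_output


# Hypothetical inputs: 1% hit rate, 2 hours of expert review per output.
reviews = expected_reviews_per_success(0.01)
hours = expected_review_hours(0.01, 2.0)
print(f"{reviews:.0f} reviews, {hours:.0f} expert hours per verified result")
```

Under these assumptions, whether the system is worth using depends less on the hit rate alone than on how the value of one verified result compares with the review time it costs.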

The Pervasiveness of Hallucination and Error

The industry term for this behavior is hallucination: an AI generating false but convincing information. For business use, a system that fabricates market analysis or legal precedents is a massive risk. For research, an AI that fabricates a step in a proof invalidates the entire result.

The systematic evaluation across 700 problems performed by the DeepMind team is vital because it tries to quantify this unreliability. It moves beyond anecdotal success stories to measure performance under pressure. Research examining this difficulty shows it is widespread:

- When LLMs are asked complex reasoning questions that require multi-step logic, their accuracy drops significantly compared to factual recall.

- The models are prone to making small errors that cascade, leading to total failure in complex mathematical derivations.
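The cascading effect described in the list above can be illustrated with simple arithmetic. The sketch below makes a strong simplifying assumption: every step in a derivation is correct independently and with the same probability. The 99% per-step accuracy is an illustrative number, not a measured property of any model.

```python
def chain_success_probability(step_accuracy: float, num_steps: int) -> float:
    """Probability that every step of a derivation is correct, assuming
    independent steps that share the same accuracy (a simplification)."""
    if not 0.0 <= step_accuracy <= 1.0:
        raise ValueError("step_accuracy must be between 0 and 1")
    if num_steps < 0:
        raise ValueError("num_steps must be non-negative")
    return step_accuracy ** num_steps


def steps_until_below(step_accuracy: float, threshold: float = 0.5) -> int:
    """Smallest number of steps at which the chain's success probability
    drops below the threshold."""
    if not 0.0 < step_accuracy < 1.0 or not 0.0 < threshold < 1.0:
        raise ValueError("both arguments must be strictly between 0 and 1")
    steps = 1
    while step_accuracy ** steps >= threshold:
        steps += 1
    return steps


# Illustrative: 99% accuracy per step, 100-step derivation.
print(round(chain_success_probability(0.99, 100), 3))
print(steps_until_below(0.99))
```

With these illustrative numbers, a 100-step derivation is fully correct only about 37% of the time, and the odds fall below even after 69 steps. A model that looks reliable on each individual step can still fail most long proofs.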

This unreliability is a core focus for AI ethicists and developers alike. As highlighted in critical surveys of model behavior, understanding the limits of factual correctness and reasoning integrity is paramount before deploying these systems in high-stakes environments. [A Survey of Hallucination in Large Language Models]

Actionable Intelligence: Developing the Human-AI Collaboration Playbook

Since we cannot yet deploy fully autonomous, perfectly reliable research AIs, the immediate future lies in defining how humans and AI will work together. The fact that the Aletheia researchers provided a "playbook" suggests that the industry recognizes the critical need for structured partnerships.

This is shifting the paradigm from "AI replacing the scientist" to "AI augmenting the scientist." This augmentation requires clearly defined roles and robust verification processes.

Augmented Intelligence vs. Automation

We are moving toward Augmented Intelligence—a system where AI handles the computational heavy lifting, synthesis, and hypothesis generation, while the human expert remains the crucial arbiter of truth, context, and ethical application. The playbook must address:

- Delegation: What tasks can the AI explore autonomously (e.g., finding novel patterns)?

- Verification: What steps require mandatory human review before acceptance? (For Aletheia, every derived conclusion would require a human mathematician to check the logical steps.)

- Feedback Loops: How quickly can the human correct the AI’s flawed assumptions to steer it toward a useful path?
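The three playbook items above can be sketched as a minimal review loop. This is an illustration under stated assumptions, not the DeepMind playbook: `Candidate`, `ReviewLog`, `review_round`, and `feedback_notes` are hypothetical names, and the reviewer function stands in for a human expert.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Candidate:
    """One AI-proposed result: a claim plus the steps said to justify it."""
    claim: str
    steps: List[str]


@dataclass
class ReviewLog:
    """Outcome of one review round; rejected items keep the reviewer's note."""
    accepted: List[Candidate] = field(default_factory=list)
    rejected: List[Tuple[Candidate, str]] = field(default_factory=list)


# A reviewer returns (is_valid, note). In practice this is a human expert.
Reviewer = Callable[[Candidate], Tuple[bool, str]]


def review_round(candidates: List[Candidate], reviewer: Reviewer) -> ReviewLog:
    """Verification: nothing is accepted without passing the reviewer."""
    log = ReviewLog()
    for candidate in candidates:
        is_valid, note = reviewer(candidate)
        if is_valid:
            log.accepted.append(candidate)
        else:
            log.rejected.append((candidate, note))
    return log


def feedback_notes(log: ReviewLog) -> List[str]:
    """Feedback loop: turn rejections into corrections for the next attempt."""
    return [f"Rejected '{c.claim}': {note}" for c, note in log.rejected]


def toy_reviewer(candidate: Candidate) -> Tuple[bool, str]:
    # Stand-in for a human check: reject claims with no supporting steps.
    if not candidate.steps:
        return False, "no supporting steps given"
    return True, "steps checked"


# Delegation: the AI explores freely and hands over a batch of candidates.
batch = [Candidate("Lemma A", ["step 1", "step 2"]), Candidate("Lemma B", [])]
log = review_round(batch, toy_reviewer)
print(len(log.accepted), feedback_notes(log))
```

Keeping the reviewer's note alongside each rejection is the design choice that matters here: it gives the human a cheap way to steer the next round instead of simply discarding failed output.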

Business and research leaders are actively seeking these frameworks. For example, in management theory, discussions center on how to structure teams where AI handles the brute force of data processing, allowing humans to focus on strategic interpretation and decision-making. This mirrors the needs identified in scientific management studies regarding effective partnership design. [Harvard Business Review articles on human-AI collaboration]

The Future Implications: What This Means for Technology and Society

The Aletheia experience signals a fundamental shift in AI development: the focus is moving from raw performance metrics on static tests to measuring true utility in dynamic, exploratory environments. This has profound implications across technology and industry.

1. Hyper-Specialized AI Agents

We will see fewer monolithic "general" AIs and more specialized agents. An AI designed solely to generate mathematical conjectures (like Aletheia in its successful mode) will be kept separate from an AI tasked with regulatory compliance checks, precisely because of its inherent unreliability outside its core competency. These agents will be tools for accelerating specific, high-value bottlenecks.

2. The Premium on Validation Expertise

If the AI provides revolutionary but unverified insights, the scarcity shifts from discovery itself to validation. Experts capable of quickly and accurately verifying AI-generated output (whether in code, chemistry, or law) will become considerably more valuable. The demand for highly specialized human domain expertise will increase, not decrease, because those experts act as the critical firewall.

3. The Competitive Edge in Research Speed

From a business and geopolitical standpoint, mastering the "playbook" provides an enormous advantage. The labs and companies that can effectively manage the AI paradox, harnessing the rare breakthroughs while containing the far more common errors, will define the next generation of scientific advancement. This competitive drive is visible as major tech players constantly benchmark their reasoning capabilities against one another, trying to minimize the gap between peak performance and baseline reliability. [Ongoing reporting on the competitive landscape of foundation models]

Actionable Insights for Leaders

For managers and researchers looking to integrate AI like Aletheia into their workflow, the path forward requires cautious optimism and structured implementation:

- Audit for "Aletheia Moments": Identify areas in your work where a single, breakthrough insight could save years of effort. These are prime targets for exploratory AI testing, provided you have the human resources to vet the output rigorously.

- Invest in Verification Infrastructure: Don't just buy LLM access; invest in the tools and processes that allow your experts to rapidly check the AI's work. If the AI is your engine, human oversight is your braking system.

- Treat AI as a Junior Partner: Always assign tasks with the understanding that the AI is brilliant but inexperienced. Never trust its output without explicit verification, especially outside established, well-validated domains (AlphaFold’s protein structure prediction is an example of such a domain).

- Document the Process: Actively document what worked and what failed when collaborating with AI. Building your own internal "playbook" based on real-world friction is the only way to move from sporadic success to reliable innovation.

The AI paradox is not a temporary bug; it is a fundamental characteristic of intelligence that scales rapidly without inheriting human caution. DeepMind’s Aletheia is a clear signal: AI is ready to shake the foundations of complex problem-solving. But until models achieve near-perfect reliability, the most crucial ingredient in any research breakthrough will remain the human mind, skillfully steering the machine toward the truth.