The AI Judge Revolution: Why Reasoning Engines Are the Future of Model Evaluation

For years, the pace of Large Language Model (LLM) development has vastly outstripped our ability to rigorously evaluate them. We have been stuck in a cycle: build a better model, test it against an old yardstick (like MMLU or GLUE), and celebrate incremental progress. This reliance on static benchmarks is proving insufficient as models become genuinely capable of nuanced, multi-step reasoning.

The current bottleneck is quality control. How do you automatically verify that an AI’s complex answer is not just syntactically correct, but logically sound, contextually appropriate, and free of subtle errors? The answer, increasingly, is emerging from the same technology we are trying to test: the **Agent-as-a-Judge**.

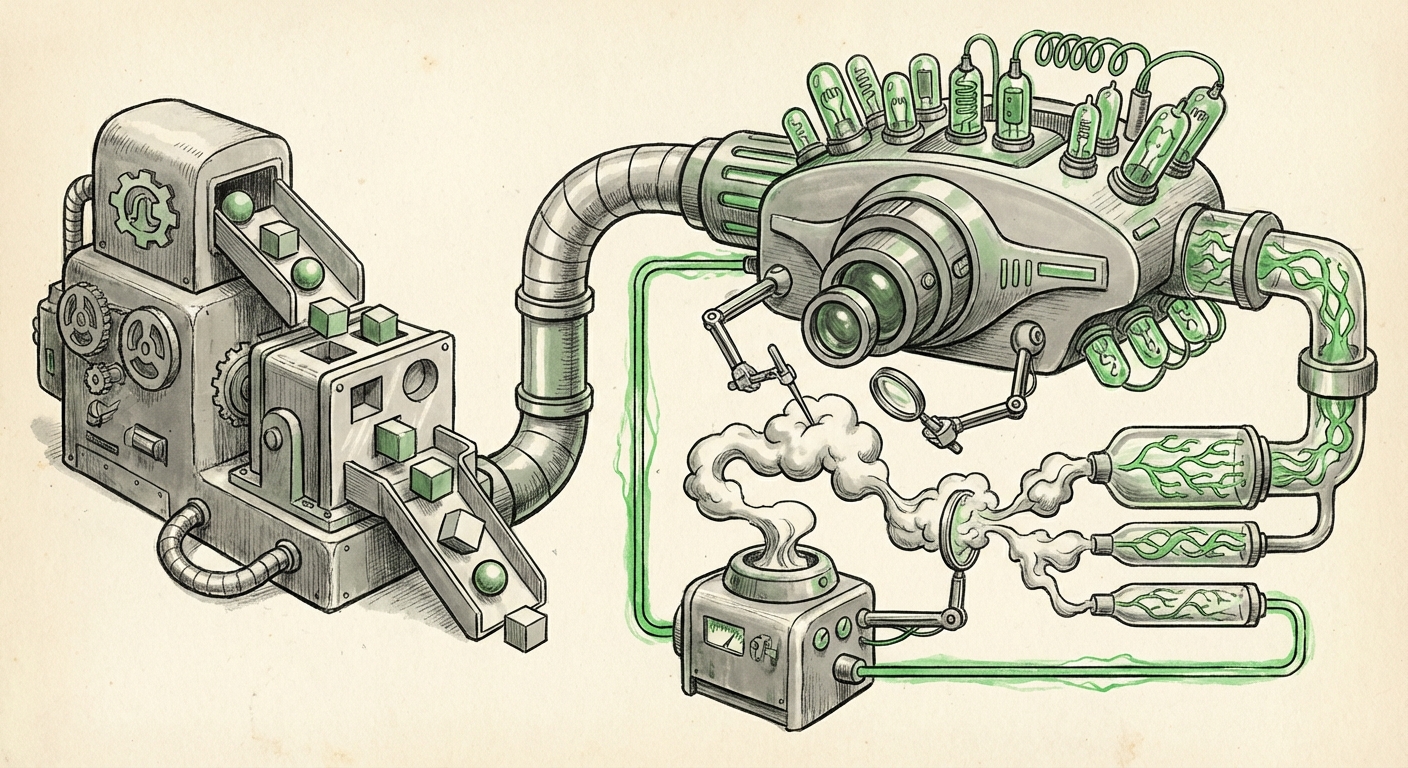

This shift—moving evaluation from passive testing to active, reasoning-based assessment—is perhaps the most critical underlying trend shaping the next generation of reliable AI. It means evaluation itself is becoming an intelligent system, requiring a dedicated reasoning engine body.

The Failure of Static Benchmarks

Imagine teaching a student complex physics. You wouldn't grade them solely on a multiple-choice test from 1995. You would ask them to solve a novel problem, explain their steps, and defend their methodology. Standardized benchmarks serve the multiple-choice function—they check for basic retention of known data.

However, modern frontier models can score near-perfectly on these static tests, leading to a phenomenon often called "benchmark saturation." This creates a false sense of security. When these models are deployed in the real world to handle customer service, code generation, or medical summarization, they fail in complex, unpredictable ways that simple scores never predicted.

We need evaluation that mirrors the complexity of the tasks themselves. This is where the **Agent-as-a-Judge** steps in, turning evaluation from a passive quiz into an active interrogation.

The Agent-as-a-Judge: A Reasoning Evaluator

Fundamentally, an Agent-as-a-Judge is a high-performing LLM (or an orchestrated group of agents) explicitly tasked with critiquing the output of another model based on a detailed set of criteria. Unlike a simple script checking keywords, this judge uses its inherent reasoning capacity.

This concept is powerfully supported by research into **Self-Refine and Iterative Improvement** (related to the search query on self-correction). If a model can critique its own rough draft based on the rules, it becomes a powerful meta-tool. For example, Judge-GPT might not just say "Answer is good," but rather:

- "Step 1 (Factual Check): The claim about quantum entanglement is accurate (Score: 5/5)."

- "Step 2 (Coherence): The transition between paragraph two and three is jarring, indicating a lack of logical flow in the generative agent's path (Score: 3/5)."

- "Step 3 (Completeness): The response failed to address the implied constraint regarding energy efficiency (Score: 2/5)."

This detailed, structured feedback loop is the "reasoning engine body" needed. It moves the needle from *what* the model said to *how* and *why* it said it, allowing developers to target specific failure modes.

Corroboration: Beyond the Hype

The move toward agent-based evaluation is not merely theoretical; it’s being actively built into modern MLOps pipelines. Our analysis of corroborating sources confirms this trend involves technical frameworks, recognized pitfalls, and integration with larger autonomous systems.

1. Building the Judge: Hierarchical Evaluation Frameworks

For an agent to judge effectively, it needs structure. Technical deep dives reveal that developers are moving toward **hierarchical evaluation criteria** (Search Query 3). This means the judge isn't given one vague prompt ("Is this good?"). Instead, it’s given nested instructions:

- Establish the ground truth parameters.

- Deconstruct the tested agent’s output into components (e.g., argument structure, tone, evidence usage).

- Score each component using defined sub-rubrics.

- Synthesize the sub-scores into a final assessment.

This structure ensures that the LLM judge leverages its reasoning power systematically, rather than relying on gut feeling.

2. The Essential Counterpoint: Recognizing Judge Bias

The enthusiasm for the Agent-as-a-Judge must be tempered by caution. As the query on **limitations of using LLMs as evaluators** highlights, the judge model often suffers from its own biases. If you use GPT-4 to evaluate a model built on GPT-3.5, GPT-4 might exhibit a structural preference for outputs resembling its own training style—a form of self-preference bias or "reward hacking" where the judge rewards outputs that simply look good to it.

Practical Implication: Businesses must treat the Judge Agent as another, highly critical model in their stack. It requires its own validation, adversarial testing, and version control. A robust system will likely use a **committee of judges** with diverse model architectures and explicitly balanced scoring rubrics to mitigate this inherent bias.

3. The Future is Collaborative: Multi-Agent Testing

The Judge Agent is rarely the end of the story. It is increasingly being integrated into **Multi-Agent Systems (MAS)** (Search Query 4). In this paradigm, evaluation becomes dynamic and adversarial:

- Agent A (The Challenger): Tries to trick the primary model into generating unsafe or inaccurate content.

- Agent B (The Defender): The LLM being tested.

- Agent C (The Judge): Assesses if Agent B successfully navigated Agent A’s attack, adhering to safety and quality guidelines.

This dynamic process means performance testing never truly stops; it becomes a continuous, automated war game that pushes the boundaries of the model’s capabilities far beyond what a static test set could achieve.

What This Means for the Future of AI Development

The emergence of the Agent-as-a-Judge is not just an engineering detail; it’s a philosophical shift that redefines the AI lifecycle.

1. Democratizing Sophisticated Testing

Historically, sophisticated red-teaming and expert evaluation were slow, expensive, and required specialized human labor. By automating this complexity into a reusable Agent-as-a-Judge framework, organizations can conduct higher-fidelity testing faster and cheaper. This dramatically lowers the barrier to entry for deploying truly sophisticated models.

2. The Race for "Reasoning Veracity"

Future competition will pivot away from simply training the largest models to training the most verifiable models. If Model X claims to have achieved a new reasoning capability, Model Y’s creator will deploy their Judge Agent to specifically test that claim across edge cases. Success won't just be about performance scores; it will be about **surviving rigorous, reasoning-based scrutiny.**

3. A New Era for AI Safety and Alignment

For AI safety researchers, the Agent-as-a-Judge is a critical tool. Safety alignment—ensuring the AI adheres to human values—is inherently difficult to measure with fixed rules. A reasoning judge can assess intent, tone, and ethical nuance in ways rigid filters cannot. If we can reliably train a judge to spot harmful reasoning patterns, we can use that judge to fine-tune and align the generative model itself, driving us closer to safer AGI.

Practical Implications for Business and Deployment

For businesses integrating LLMs, this trend mandates a change in MLOps strategy. The focus shifts from "training data hygiene" to "evaluation engine integrity."

For Developers and Engineers: Actionable Insights

Actionable Insight: Build the Test Harness First. Before finalizing a generative model’s prompt or fine-tuning set, dedicate resources to building the evaluation harness—the structure that houses your Agent-as-a-Judge. Focus on creating diverse, adversarial test cases that specifically target areas where your model is likely to fail (e.g., long-chain logic, ambiguity resolution).

Engineers should embrace frameworks that allow for **iterative judge refinement** (Source 1). If your judge consistently scores a perfectly good answer low, you need to update the judge’s internal rubric, not necessarily the tested model.

For Business Leaders: Risk Management

Actionable Insight: Diversify Your Evaluation Portfolio. Do not trust a single model, whether it is the generator or the judge, to validate your product. If you use a proprietary model (like GPT-4) as your primary evaluator, you must establish external validation tests using smaller, open-source models or human-in-the-loop checks to ensure the proprietary judge isn't simply rewarding what looks familiar.

The cost of a deployment error caused by poor evaluation will soon far outweigh the investment in building resilient, multi-faceted judging agents.

The Road Ahead: Moving Beyond the Human Baseline

The Agent-as-a-Judge represents the beginning of an exciting phase: **AI evaluating AI.** This is crucial because human review, while the gold standard for final validation, simply cannot scale to the terabytes of data and millions of inference requests generated daily by global AI systems.

We are not aiming to replace human expertise entirely, but to automate the heavy lifting of initial quality assurance and regression testing. By equipping our evaluations with genuine reasoning engines, we are ensuring that the next wave of AI innovation is built on a foundation of demonstrable, verifiable intelligence, rather than just impressive, surface-level fluency.