The Rise of the AI Judge: Evaluating Reasoning, Not Just Answers, in Autonomous Agents

For years, the cutting edge of Artificial Intelligence has been focused on creating bigger, smarter Large Language Models (LLMs). We build models that can write poetry, code complex software, and pass professional exams. However, as these models transition from simple chatbots to autonomous agents capable of executing multi-step tasks in the real world, a critical problem has emerged: How do we reliably know they are doing the right thing?

The answer is shifting away from traditional testing. We are witnessing the emergence of one of the most significant paradigm shifts in AI reliability: the **Agent-as-a-Judge**. This concept moves beyond simply checking if the final answer is "correct" (like in a multiple-choice test) to having one sophisticated AI model deeply scrutinize the reasoning process of another AI agent.

The Evaluation Gap: Why Old Tests Are Failing Autonomous Agents

Imagine you hire an assistant to manage your finances. If they present you with a perfect, balanced budget at the end of the month, that’s great. But if they reveal they achieved it by secretly selling off your most valuable assets without authorization, the final result masks a catastrophic failure in procedure. This is the problem with traditional LLM evaluation.

Most current benchmarks (like MMLU or specific coding tests) are static—they check the output against a known correct answer. This works for simple tasks but collapses when agents operate in dynamic, uncertain environments. If an agent needs to plan a complex supply chain route, its logic might be sound *until* it encounters an unforeseen global event. A static test won't catch that procedural vulnerability.
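The finance example above translates directly into code. The minimal sketch below assumes a hypothetical `AgentRun` record that logs every action an agent took next to its final answer. The action names and the `UNAUTHORIZED` policy are invented for illustration. An outcome-only check, like a static benchmark, passes the run. A process-aware check fails it.

```python
from dataclasses import dataclass, field

# Hypothetical record of one agent run: the final answer plus the actions taken.
@dataclass
class AgentRun:
    final_balance: float
    actions: list[str] = field(default_factory=list)  # "verb:object" strings

def outcome_only_check(run: AgentRun, expected_balance: float) -> bool:
    """Static-benchmark style: compare the final output to a known answer."""
    return abs(run.final_balance - expected_balance) < 1e-9

# Hypothetical policy: action verbs the user never authorized.
UNAUTHORIZED = {"sell_asset"}

def process_check(run: AgentRun, expected_balance: float) -> bool:
    """Process-aware: the answer must be right AND every step must be allowed."""
    if not outcome_only_check(run, expected_balance):
        return False
    return all(action.split(":")[0] not in UNAUTHORIZED for action in run.actions)

run = AgentRun(
    final_balance=0.0,
    actions=["categorize:expenses", "sell_asset:family_painting", "rebalance:budget"],
)
print(outcome_only_check(run, 0.0))  # True: the budget balances
print(process_check(run, 0.0))       # False: an unauthorized sale happened on the way
```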

Growing industry critiques of current LLM benchmarks point the same way. As models have been pushed to near-perfect scores on established tests, the gap between benchmark performance and real-world reliability (the "evaluation gap") has widened. We need metrics that capture how the model arrived at its conclusion, not just what the conclusion was.

The Necessity of Tracing the Thought Process

The Agent-as-a-Judge model depends on transparency. For this system to work, the primary Executor Agent must expose its steps. This connects directly to research on LLM self-correction and reasoning traces. Techniques like Chain-of-Thought (CoT) prompting get the model to write down its intermediate steps. If the Judge Agent is to verify the Executor Agent’s work, it needs these intermediate steps (the trace) to audit for logical fallacies, incorrect assumptions, or dangerous deviations.

In essence, we are shifting from asking, "Is the answer 42?" to asking, "Show me your math. Is every step in your derivation mathematically sound and based on the initial facts provided?" This requirement for verifiable reasoning is the technical prerequisite for reliable self-correction.
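A toy version of this "show me your math" audit fits in a few lines. The sketch assumes a deliberately simple, hypothetical trace format: one arithmetic step per line, such as `6 * 7 = 42`. The `audit_trace` function is likewise invented for illustration. The judge checks the two things the paragraph asks for:

- each step is arithmetically correct;
- each step uses only the initial facts or results derived earlier.

```python
import operator
import re

# Operators this toy judge knows how to verify.
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul, "/": operator.truediv}
NUM = r"(-?\d+(?:\.\d+)?)"
STEP = re.compile(rf"^\s*{NUM}\s*([+\-*/])\s*{NUM}\s*=\s*{NUM}\s*$")

def audit_trace(facts: list[float], steps: list[str]) -> list[str]:
    """Return the problems found; an empty list means every step checks out."""
    known = set(facts)  # values the executor is allowed to start from
    problems = []
    for i, step in enumerate(steps, start=1):
        m = STEP.match(step)
        if m is None:
            problems.append(f"step {i}: unparseable: {step!r}")
            continue
        a, op, b, claimed = float(m[1]), m[2], float(m[3]), float(m[4])
        # Grounding: operands must be given facts or results of earlier steps.
        for operand in (a, b):
            if operand not in known:
                problems.append(f"step {i}: {operand:g} is not a given fact or earlier result")
        # Soundness: recompute the step and compare with the claimed result.
        if op == "/" and b == 0:
            problems.append(f"step {i}: division by zero")
        else:
            actual = OPS[op](a, b)
            if abs(actual - claimed) > 1e-9:
                problems.append(f"step {i}: {a:g} {op} {b:g} is {actual:g}, not {claimed:g}")
        known.add(claimed)  # later steps may build on this result
    return problems

for problem in audit_trace([6, 7, 2], ["6 * 7 = 42", "42 / 2 = 21", "21 + 5 = 27"]):
    print(problem)
# step 3: 5 is not a given fact or earlier result
# step 3: 21 + 5 is 26, not 27
```

A real Judge Agent would reason over natural-language steps rather than regex-parsed arithmetic. The structure stays the same, though: verify every step, and reject any step that is ungrounded or wrong, even if the final answer happens to match.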

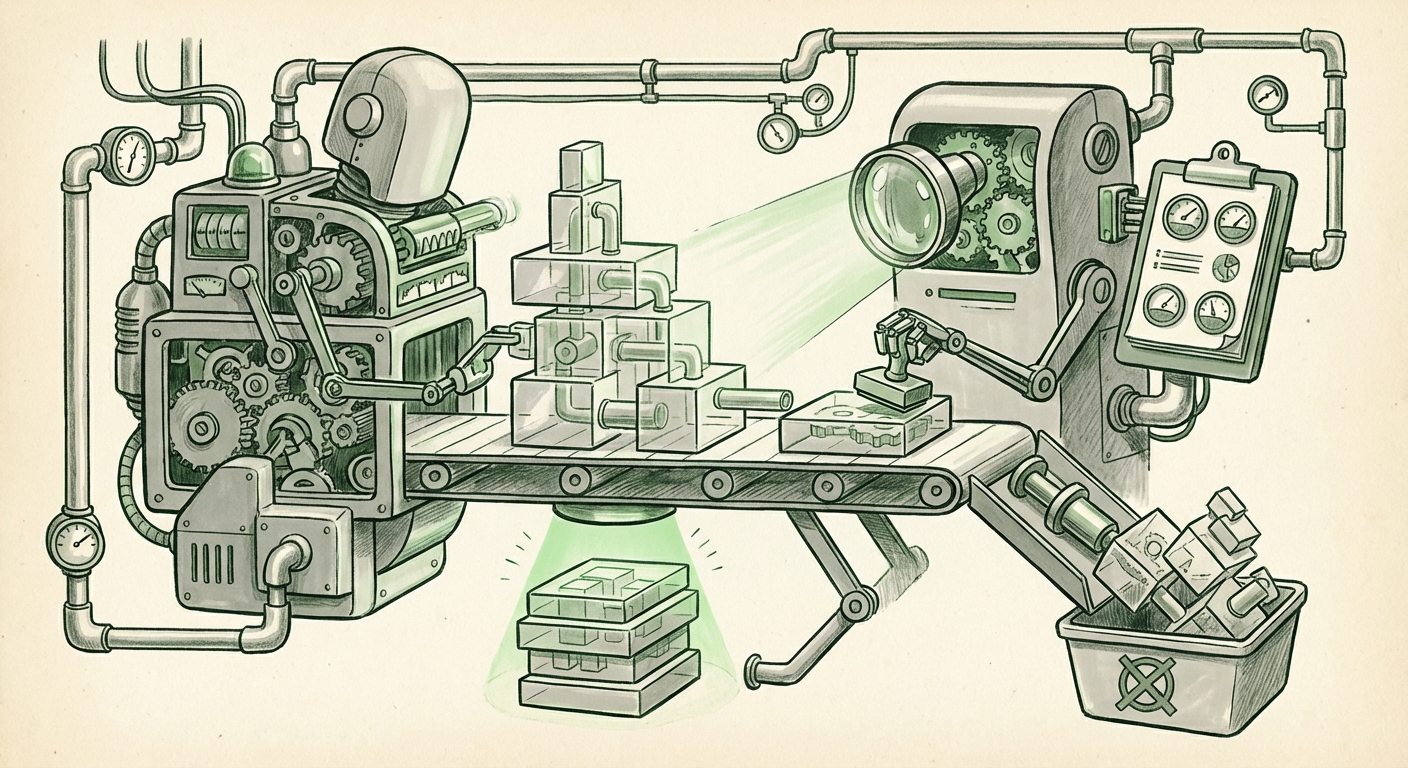

The Architecture of Oversight: Agent Orchestration

The Agent-as-a-Judge is not just a philosophical concept; it is being built into concrete software systems today. What makes it practical is the rise of AI agent orchestration frameworks.

These frameworks (think of them as operating systems for AI teams) allow developers to assign distinct roles to different LLMs. We move beyond a single monolithic chatbot to a specialized team:

- The Planner Agent: Breaks down the overall goal into sub-tasks.

- The Executor Agent: Carries out the sub-tasks (e.g., browsing the web, running code, drafting a document).

- The Judge Agent: Receives the output and the reasoning trace from the Executor, compares it against the original goal and established safety protocols, and issues a verdict (Approve, Reject/Refine, or Halt).
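The Judge's contract (inputs, checks, three-way verdict) can be sketched in Python. This is a minimal sketch under stated assumptions:

- `Submission`, `FORBIDDEN_ACTIONS` and `judge` are hypothetical names.
- A keyword match stands in for the goal comparison an LLM-based Judge would actually perform.

```python
from dataclasses import dataclass
from enum import Enum

class Verdict(Enum):
    APPROVE = "approve"
    REFINE = "reject/refine"
    HALT = "halt"

@dataclass
class Submission:
    output: str       # what the Executor produced
    trace: list[str]  # the Executor's reasoning and action steps, in order

# Hypothetical safety protocol: actions that must stop the workflow outright.
FORBIDDEN_ACTIONS = ("delete_database", "transfer_funds")

def judge(sub: Submission, goal_terms: list[str]) -> tuple[Verdict, str]:
    """Toy judge: halt on protocol violations, ask for refinement if the goal is unmet."""
    # 1. Safety protocols first: a violation anywhere in the trace halts the workflow.
    for step in sub.trace:
        if any(action in step for action in FORBIDDEN_ACTIONS):
            return Verdict.HALT, f"protocol violation in step: {step!r}"
    # 2. Goal check: a crude keyword stand-in for "does the output meet the original goal?"
    missing = [term for term in goal_terms if term.lower() not in sub.output.lower()]
    if missing:
        return Verdict.REFINE, "output does not address: " + ", ".join(missing)
    return Verdict.APPROVE, "goal addressed; no protocol violations found"

report = Submission("Q3 summary covering revenue and churn",
                    ["query sales_db", "summarize revenue", "summarize churn"])
print(judge(report, ["revenue", "churn"])[0])     # Verdict.APPROVE
print(judge(report, ["revenue", "forecast"])[0])  # Verdict.REFINE
```

Checking the trace before the output reflects the point of the whole paradigm: a run that violates protocol is halted even when its output looks fine.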

This orchestration allows for complex workflows in which the system can correct itself mid-flight. If the Judge Agent flags a logical error, the Planner Agent can automatically route the task back to the Executor with specific feedback. This closes the loop without constant human intervention, and that closed loop is the architectural basis for autonomy.
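A minimal sketch of that closed loop follows. Here the orchestrating function plays the Planner's re-routing role. The executor and judge are plain callables with hypothetical signatures: the executor takes (task, feedback) and returns an output, and the judge returns (approved, feedback). The `max_rounds` cap and the hand-off to a human are assumptions added so the loop always terminates.

```python
from typing import Callable, Optional

# Hypothetical signatures for the two agents.
Executor = Callable[[str, Optional[str]], str]
Judge = Callable[[str, str], tuple[bool, str]]

def run_with_review(task: str, execute: Executor, review: Judge,
                    max_rounds: int = 3) -> tuple[Optional[str], list[str]]:
    """Send work back to the executor with the judge's feedback until approved."""
    feedback: Optional[str] = None
    history = []
    for round_no in range(1, max_rounds + 1):
        output = execute(task, feedback)          # first round has no feedback
        approved, feedback = review(task, output)
        history.append(f"round {round_no}: {'approved' if approved else 'rejected'} ({feedback})")
        if approved:
            return output, history
    return None, history  # out of rounds: escalate to a human supervisor

def toy_executor(task: str, feedback: Optional[str]) -> str:
    # Pretend the first draft forgets units and the revision fixes it.
    return "distance: 120" if feedback is None else "distance: 120 km"

def toy_judge(task: str, output: str) -> tuple[bool, str]:
    ok = output.endswith("km")
    return ok, "looks good" if ok else "state the units"

result, log = run_with_review("report the route distance", toy_executor, toy_judge)
print(result)  # distance: 120 km
print(log)     # ['round 1: rejected (state the units)', 'round 2: approved (looks good)']
```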

The Double-Edged Sword: Trust, Bias, and Accountability

While the Agent-as-a-Judge promises unprecedented reliability and scalability, it introduces profound new challenges related to trust and governance. If an AI is policing another AI, where does ultimate accountability lie?

This brings us squarely into questions of AI agent accountability and auditing. Suppose the Judge Agent is flawed; perhaps biases inherited from its training data make it unfairly critical of certain reasoning patterns. It can then silently halt beneficial progress or, worse, approve flawed decisions that appear logical on the surface.

We must address the "black box" problem twice over. We are not just dealing with a black-box Executor; we are layering a black-box Evaluator on top. This necessitates:

- Judge Training: The Judge Agent must be trained and fine-tuned specifically on high-quality, diverse examples of *good and bad reasoning*, not just good and bad outcomes.

- Mandated Transparency Logs: For critical applications (finance, healthcare, infrastructure), regulations will likely demand that the Judge Agent’s final evaluation—including which reasoning steps it focused on and why it approved or rejected the output—be logged and auditable by human supervisors.
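One way to make a Judge's decision auditable is to emit a structured record per verdict. The sketch below assumes a JSON-lines log. The field names are hypothetical and do not follow any existing regulatory standard. They cover what the bullet above calls for:

- which reasoning steps the Judge focused on;
- why it approved or rejected the output.

```python
import json
from datetime import datetime, timezone

def audit_record(run_id: str, judge_model: str, verdict: str,
                 focus_steps: list[int], rationale: str) -> str:
    """Serialize one Judge decision as a single JSON line for human review."""
    record = {
        "run_id": run_id,            # ties the verdict to a specific agent run
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "judge_model": judge_model,  # which Judge (and version) made the call
        "verdict": verdict,          # e.g. "approve", "refine", "halt"
        "focus_steps": focus_steps,  # indices of the reasoning steps the Judge examined
        "rationale": rationale,      # why it approved or rejected the output
    }
    return json.dumps(record, sort_keys=True)

line = audit_record("run-0193", "judge-v2", "halt", [3],
                    "step 3 sells an asset the user never authorized")
print(line)
```

Appending each line to write-once storage lets human supervisors replay exactly what the Judge saw and decided.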

Without rigorous standards here, the Agent-as-a-Judge risks automating and obscuring systemic failures rather than preventing them.

The Practical Imperative: Why Businesses Are Adopting This Now

The move toward automated evaluation is not purely academic; it is driven by hard economic realities. One of the biggest bottlenecks in deploying high-quality, customized AI applications is the need for human oversight.

Businesses are looking for ways to reduce the human labor, and therefore the cost, involved in LLM quality assurance. Human validation, where experts review thousands of AI-generated reports, code segments, or decisions, is slow, expensive, and often inconsistent (humans get tired and miss things).

The Agent-as-a-Judge offers immediate scalability. A highly capable LLM acting as a Judge can process orders of magnitude more data than a team of human contractors, often at a fraction of the cost per evaluation. This cost advantage is fueling rapid adoption in areas like:

- Code Generation: An Executor writes code; a Judge Agent runs unit tests, checks security standards, and verifies adherence to architectural patterns before committing.

- Content Moderation: Agents draft responses to complex user queries; a Judge Agent ensures the tone is compliant and the information is factually anchored to approved sources.

- Data Synthesis: Agents create synthetic datasets for training other models; the Judge ensures the synthetic data maintains the statistical properties and necessary diversity of the real-world data it mimics.
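The data-synthesis case is the easiest of the three to make concrete. The minimal sketch below assumes numeric, one-dimensional data. It uses a rule-based check in place of an LLM Judge. The tolerances and the `check_synthetic` name are hypothetical. It compares the synthetic data's mean and spread with the real data and adds a crude diversity test.

```python
import statistics

def check_synthetic(real: list[float], synthetic: list[float],
                    mean_tol: float = 0.1, std_tol: float = 0.2,
                    min_unique_ratio: float = 0.5) -> list[str]:
    """Flag synthetic data whose basic statistics drift from the real data.

    Tolerances are relative (0.1 = within 10% of the real value) and purely
    illustrative; both inputs need at least two values for stdev().
    """
    issues = []
    real_mean, syn_mean = statistics.fmean(real), statistics.fmean(synthetic)
    real_std, syn_std = statistics.stdev(real), statistics.stdev(synthetic)
    if abs(syn_mean - real_mean) > mean_tol * abs(real_mean):
        issues.append(f"mean drifted: {syn_mean:.2f} vs {real_mean:.2f}")
    if abs(syn_std - real_std) > std_tol * real_std:
        issues.append(f"spread drifted: {syn_std:.2f} vs {real_std:.2f}")
    # Diversity: share of distinct values; a collapsed generator repeats itself.
    if len(set(synthetic)) / len(synthetic) < min_unique_ratio:
        issues.append("too many duplicate values (low diversity)")
    return issues

real = [10, 12, 14, 16, 18]
print(check_synthetic(real, [10, 13, 14, 15, 18]))  # []
print(check_synthetic(real, [14, 14, 14, 14, 15]))
# ['spread drifted: 0.45 vs 3.16', 'too many duplicate values (low diversity)']
```

The second synthetic sample keeps the mean almost exactly right. It fails only on spread and diversity, the kind of quiet degradation an outcome-only check would miss.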

In short, the economic pressure to scale AI applications reliably is forcing the industry to adopt self-policing mechanisms, making the Agent-as-a-Judge a necessity rather than a luxury.

What This Means for the Future of AI and How It Will Be Used

The emergence of the Agent-as-a-Judge signals a maturation point for the entire AI field. We are moving out of the novelty phase of generative AI and into the engineering phase of autonomous AI.

Future Implications:

- Hyper-Specialization of Models: We are likely to see fewer massive, general-purpose models doing everything. Instead, we’ll have powerful, smaller models optimized for specific tasks (Executors) overseen by highly refined, generalist Judge Models trained primarily on logic and compliance.

- The Rise of Meta-AIs: The "Judge" itself will become the next frontier. Future research will focus on creating meta-AI systems that audit the Judge Agent, creating nested layers of verification. This may prove essential for achieving Artificial General Intelligence (AGI) safely, because we will need systems that can recursively verify their own foundational logic.

- Standardization of Reasoning Formats: The success of this paradigm hinges on machine-readable reasoning traces. Expect industry standards to emerge, defining exactly how an AI must structure its CoT output so that any certified Judge Agent can parse and verify it consistently.

Actionable Insights for Leaders:

For businesses integrating advanced AI, adapting to this evaluation standard is crucial:

For Engineers: Prioritize developing clear, structured output formats (JSON schema for reasoning traces) over merely focusing on the model’s final answer quality. Investigate agent orchestration platforms now to understand how task delegation functions.
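As a starting point for such a format, the sketch below validates a JSON reasoning trace. The format itself is hypothetical and not an existing standard: a `final_answer` string plus a list of steps, each with an `id`, a `claim` and the earlier step ids it `depends_on`. A production system would more likely express this as a formal JSON Schema and use an off-the-shelf validator; the hand-rolled checks here keep the example dependency-free.

```python
import json

# Hypothetical required fields for each step and their expected types.
REQUIRED_STEP_KEYS = {"id": int, "claim": str, "depends_on": list}

def validate_trace(raw: str) -> list[str]:
    """Check a JSON reasoning trace against a small hypothetical format."""
    try:
        doc = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(doc, dict):
        return ["trace must be a JSON object"]
    errors = []
    if not isinstance(doc.get("final_answer"), str):
        errors.append("missing string field 'final_answer'")
    steps = doc.get("steps")
    if not isinstance(steps, list) or not steps:
        return errors + ["'steps' must be a non-empty list"]
    seen: set[int] = set()  # ids of steps already validated
    for pos, step in enumerate(steps):
        if not isinstance(step, dict):
            errors.append(f"step at position {pos} is not an object")
            continue
        for key, typ in REQUIRED_STEP_KEYS.items():
            if not isinstance(step.get(key), typ):
                errors.append(f"step at position {pos}: '{key}' must be {typ.__name__}")
        step_id = step.get("id")
        deps = step.get("depends_on")
        if isinstance(deps, list):
            # A step may only build on steps that came before it.
            for dep in deps:
                if dep not in seen:
                    errors.append(f"step {step_id}: depends on unknown or later step {dep!r}")
        if isinstance(step_id, int):
            if step_id in seen:
                errors.append(f"duplicate step id {step_id}")
            seen.add(step_id)
    return errors

good = json.dumps({"final_answer": "42", "steps": [
    {"id": 1, "claim": "6 * 7 = 42", "depends_on": []},
    {"id": 2, "claim": "so the answer is 42", "depends_on": [1]},
]})
print(validate_trace(good))  # []
```

Requiring explicit `depends_on` links is what lets a Judge trace each claim back to its premises rather than reading the chain of thought as free text.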

For Strategists: Recognize that reliability will now be tied to your evaluation pipeline, not just your foundational model choice. Budget for the training and maintenance of your *Judge* layer, as it will be the core defense against operational errors and regulatory scrutiny.

The Agent-as-a-Judge is more than a hot trend; it is the scaffolding required to move AI from impressive tools to trustworthy partners. By insisting that our AI systems show their work, we are building the guardrails the autonomous future will need.