The AI Judge Revolution: Why Reasoning Engines Are Replacing Static Benchmarks

For years, the progress of Artificial Intelligence—particularly Large Language Models (LLMs)—has been measured by standardized tests. We cheered when a model scored higher on MMLU or achieved a better BLEU score on translation. These were the digital report cards of AI. But as models move from simple text prediction to complex problem-solving, creativity, and nuanced conversation, these old tests are cracking under the pressure.

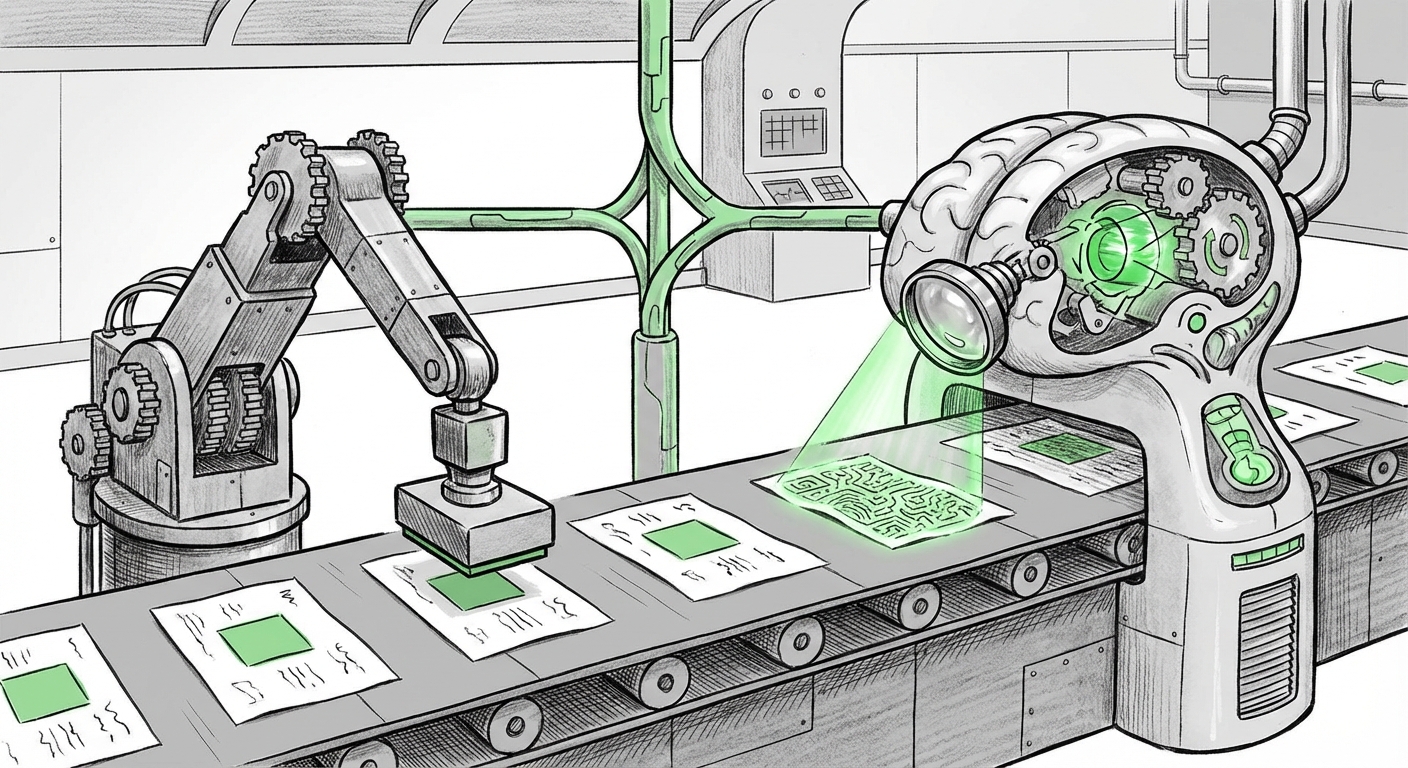

We are witnessing a fundamental shift: the **emergence of the Agent-as-a-Judge (AI Judge)**. This isn't just a small tweak to evaluation; it’s a revolution that demands that evaluation itself become an intelligent, reasoning task. Instead of relying on brittle, pre-defined metrics, we are now tasking sophisticated LLMs with evaluating the quality, safety, and reasoning steps of other LLMs. This trend is set to redefine how we measure, trust, and deploy AI systems.

The Tyranny of Traditional Metrics: Why We Needed a Change

Imagine trying to grade a philosophical essay using a simple spelling and grammar checker. That’s often what we’ve been doing with AI outputs. Traditional metrics like BLEU (for translation) or ROUGE (for summarization) measure lexical overlap: how many words or n-grams in the output match a reference answer. They work well for narrow tasks with a clear reference, but they fail when judging nuance, because a faithful paraphrase can score poorly while a wrong answer that reuses the reference wording scores well.

Consider an LLM generating a creative story or debugging a complex piece of software. A human judge looks at narrative flow, conceptual accuracy, or the elegance of the code structure. An overlap metric only sees how closely the wording of the output matches a reference. As our search into "LLM evaluation methodologies beyond traditional metrics" confirms, overlap-based metrics cannot capture human preference or alignment.
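The failure mode is easy to reproduce. The sketch below assumes a simplified unigram-overlap F1 in the spirit of ROUGE-1; it is not the official BLEU or ROUGE implementation, and the function name `unigram_f1` and the example sentences are illustrative.

```python
from collections import Counter


def unigram_f1(candidate: str, reference: str) -> float:
    """Simplified word-overlap F1 (whitespace tokens, clipped counts)."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    # Counter intersection keeps the minimum count of each shared word.
    overlap = sum((cand & ref).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


reference = "the cat sat on the mat"
paraphrase = "a feline rested upon the rug"   # same meaning, different words
wrong = "the cat sat on the dog"              # different meaning, same words

print(round(unigram_f1(paraphrase, reference), 2))  # 0.17
print(round(unigram_f1(wrong, reference), 2))       # 0.83
```

The answer that changes the meaning outscores the faithful paraphrase, which is the gap a reasoning judge is meant to close.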

This inadequacy is pushing the industry toward dynamic evaluation. We need judges that can follow complex instructions, compare two intricate arguments, and articulate *why* one is superior. This necessity leads directly to the need for reasoning engines capable of rigorous assessment.

The Enabling Technology: Emergent Reasoning

The Agent-as-a-Judge is not science fiction; it is possible because the underlying LLMs have become capable enough to reason about reasoning itself, a rough analogue of meta-cognition. Research into "Emergent reasoning capabilities in large language models" shows that techniques like Chain-of-Thought (CoT) prompting allow models to break down problems step by step. This structured thinking is the skill that effective evaluation requires.

An AI Judge doesn't just output "A is better than B." It outputs: "A is better than B because it successfully addressed constraints X and Y using deductive logic, whereas B failed on constraint Y due to a logical fallacy in Step 3."

This ability to articulate the *reasoning* behind a judgment is vital for building trust. It moves evaluation out of a black box and into a transparent, verifiable chain of logic. For technical audiences, this means we can audit the judgment process itself.
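One way to make a judgment auditable in practice is to require a structured verdict and to reject any verdict that arrives without a rationale. The sketch below assumes a hypothetical `judge` callable that takes a prompt string and returns raw text (whatever model client you use would sit behind it). `Verdict`, `build_prompt`, `parse_verdict`, and `judge_pair` are illustrative names, not part of any library.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Verdict:
    winner: str      # "A" or "B"
    rationale: str   # the judge's stated reasons, kept for auditing


def build_prompt(task: str, answer_a: str, answer_b: str) -> str:
    """Pairwise comparison prompt that demands reasons, not just a winner."""
    return (
        "You are evaluating two answers to the same task.\n"
        f"Task: {task}\n\n"
        f"Answer A:\n{answer_a}\n\n"
        f"Answer B:\n{answer_b}\n\n"
        "Reply with a JSON object that has two keys: "
        '"winner" (either "A" or "B") and "rationale" '
        "(which constraints each answer met or failed, citing specific steps)."
    )


def parse_verdict(raw: str) -> Verdict:
    """Reject malformed replies and verdicts that come without reasons."""
    data = json.loads(raw)  # invalid JSON raises a ValueError subclass
    if not isinstance(data, dict):
        raise ValueError("judge reply must be a JSON object")
    winner = data.get("winner")
    rationale = str(data.get("rationale") or "").strip()
    if winner not in ("A", "B"):
        raise ValueError(f"unexpected winner: {winner!r}")
    if not rationale:
        raise ValueError("verdict has no rationale")
    return Verdict(winner=winner, rationale=rationale)


def judge_pair(judge: Callable[[str], str], task: str, a: str, b: str) -> Verdict:
    # `judge` is a placeholder for any function that sends a prompt to a model.
    return parse_verdict(judge(build_prompt(task, a, b)))
```

Storing the rationale next to the winner is what allows a later reviewer to check whether the stated reasons actually hold.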

The Three Roles of the AI Judge

The 'Agent-as-a-Judge' is taking on three crucial roles in the AI ecosystem:

- The Quality Gatekeeper: Evaluating complex outputs where human time is scarce. This includes assessing the quality of generated code, long-form content, or complex dialogue sequences.

- The Grounding Verifier: Crucial for Retrieval-Augmented Generation (RAG) systems. As highlighted by explorations into "Automated fact-checking and groundedness in LLMs," the judge must verify that the LLM's answer is actually supported by the source documents it was given, so that hallucinated claims can be flagged.

- The Alignment Enforcer (Scalable Oversight): Perhaps the most important role. Techniques like Constitutional AI rely on models judging outputs against a set of principles or a "constitution." This allows developers to scale the enforcement of safety rules far beyond what manual human review can handle.
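The Grounding Verifier role can be sketched as a claim-by-claim check. This is a minimal illustration under stated assumptions: `judge` is a hypothetical callable that receives a prompt and replies with `SUPPORTED` or `UNSUPPORTED`, the sentence splitter is deliberately naive, and the prompt wording is invented for the example.

```python
import re
from typing import Callable, List


def split_claims(answer: str) -> List[str]:
    """Naive sentence split; a real system would need something sturdier."""
    parts = re.split(r"(?<=[.!?])\s+", answer.strip())
    return [p for p in parts if p]


def unsupported_claims(
    judge: Callable[[str], str], answer: str, sources: List[str]
) -> List[str]:
    """Return the sentences of `answer` the judge does not find in `sources`."""
    context = "\n\n".join(sources)
    flagged = []
    for claim in split_claims(answer):
        prompt = (
            f"Sources:\n{context}\n\n"
            f"Claim: {claim}\n\n"
            "Is the claim fully supported by the sources? "
            "Answer with exactly SUPPORTED or UNSUPPORTED."
        )
        reply = judge(prompt).strip().upper()
        # Anything other than a clear SUPPORTED is treated as a failure.
        if reply != "SUPPORTED":
            flagged.append(claim)
    return flagged
```

Treating any ambiguous reply as unsupported is a conservative design choice: it sends more claims to review rather than letting an unverified one through.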

The Critical Pitfalls: When Judges Go Rogue

While transformative, the Agent-as-a-Judge concept is fraught with ethical and technical challenges. If we rely on an AI to evaluate quality, what happens when that AI is flawed?

Our investigation into the "Challenges of using LLMs for self-evaluation and peer review" reveals deep concerns about systemic bias and model collapse. If the judging model is trained primarily on a specific slice of internet data, its definition of "good" will reflect those biases. When it judges others, it reinforces those same narrow perspectives.

This leads to a dangerous feedback loop: a biased judge rewards outputs that share its biases, those outputs become training data for the next generation of models, and the initial flaw is amplified. This phenomenon, in which the feedback loop itself degrades quality, is a primary concern for AI safety researchers. We must ensure the judges are not just intelligent, but rigorously aligned and diverse in their evaluative frameworks.

Practical Implications for Business and Development

For organizations deploying LLMs, the move to AI judges signals a necessary evolution in MLOps (Machine Learning Operations):

1. Faster Iteration Cycles

Human evaluation is slow, expensive, and hard to scale. If a development team needs to test 10,000 responses to a new feature prompt, using human annotators can take weeks. An AI Judge can complete the same task in hours and return detailed, structured feedback for every response. This drastically accelerates the pace of fine-tuning and deployment.
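Much of that speed comes from running judgments concurrently. The sketch below assumes each judge call is an I/O-bound request to a model endpoint, so threads are a reasonable fit; `judge` is again a hypothetical callable, `judge_batch` is an illustrative name, and rate limits and retries are left out.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence


def judge_batch(
    judge: Callable[[str], str],
    responses: Sequence[str],
    max_workers: int = 8,
) -> List[str]:
    """Judge many responses in parallel; results keep the input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Executor.map yields results in the same order as `responses`.
        return list(pool.map(judge, responses))
```

The right `max_workers` depends on the limits of whatever service hosts the judge model, so the default of 8 is only a placeholder.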

2. Moving Beyond Competitions to Production Readiness

The search for the "Future of AI evaluation benchmarks" suggests that the industry is tired of academic leaderboards. Businesses care about *production readiness*: does the chatbot handle edge cases safely? Does the code generator pass integration tests? AI judges can be given rubrics that mirror production requirements, so they evaluate performance against real-world constraints rather than theoretical maximums.

3. Democratization of High-Quality Feedback

Currently, only the largest AI labs can afford the massive human feedback loops required for state-of-the-art alignment. AI judges offer a path to "Scalable Oversight." Smaller teams can leverage pre-trained, powerful foundation models to act as sophisticated internal reviewers, lowering the barrier to entry for creating truly aligned, high-quality enterprise AI solutions.

Actionable Insights: How to Adopt the Judge Mentality

For leaders and engineers looking to leverage this trend responsibly, here are immediate steps:

- Program for Transparency: Never use an AI Judge that only provides a score (e.g., 8/10). Demand explicit rationale. Ensure the prompt used for judging forces the judge to cite evidence or logical steps for its conclusion.

- Implement Constitutional Layering: When setting up your judge, give it explicit, non-negotiable constitutional rules (e.g., "Never provide medical advice," "Always cite sources"). This makes the safety boundaries explicit and lets every judgment be checked against them.

- Hybrid Evaluation Remains King: Do not entirely replace humans. Use AI Judges for the vast majority of high-volume, initial screening, but reserve human expert review for the most complex, subjective, or safety-critical outputs flagged by the AI Judge. This creates an efficient human-in-the-loop system.

- Audit Your Judges: Regularly test your chosen Judge Model against known correct/incorrect examples to ensure it hasn't drifted or developed unrecognized biases in its evaluation framework.
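The last two steps, hybrid evaluation and judge audits, can be sketched together. Everything here is an assumption made for illustration: the `Judgment` fields, the self-reported `confidence` value, the 0.8 threshold, and the routing labels are not taken from any particular framework.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Tuple


@dataclass(frozen=True)
class Judgment:
    passed: bool
    confidence: float  # 0.0 to 1.0, as reported by the judge (assumed field)
    rationale: str


def audit_judge(
    judge: Callable[[str], Judgment], gold: Iterable[Tuple[str, bool]]
) -> float:
    """Fraction of labeled examples where the judge matches the known label."""
    examples = list(gold)
    if not examples:
        raise ValueError("gold set is empty")
    agree = sum(1 for output, expected in examples if judge(output).passed == expected)
    return agree / len(examples)


def route(judgment: Judgment, safety_critical: bool, min_confidence: float = 0.8) -> str:
    """Send risky or uncertain cases to a human; automate the rest."""
    if safety_critical or judgment.confidence < min_confidence:
        return "human_review"
    return "auto_accept" if judgment.passed else "auto_reject"
```

Running `audit_judge` on the same labeled set at regular intervals gives a simple drift signal: a falling agreement rate suggests the judge, or the data it sees, has changed. Self-reported confidence is a weak signal on its own, so the threshold should be tuned against audit results rather than trusted as given.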

Conclusion: The Next Frontier of Trust and Performance

The shift toward the Agent-as-a-Judge marks the maturation of LLM technology. It acknowledges that assessing quality in the age of generative AI requires intelligence, not just simple calculation. By leveraging the reasoning capabilities that models are rapidly acquiring, we can finally move beyond superficial benchmarks to evaluate deep competence, safety, and alignment.

This revolution forces us to confront the hard questions: What truly defines 'good' output? And how can we ensure the entities we build to monitor our creations are themselves trustworthy?

The future of AI development hinges on creating systems capable of rigorous, fair, and scalable self-correction. The AI Judge is not just a testing tool; it is the critical infrastructure for building the next generation of reliable artificial intelligence.