The Trust Imperative: Why Agentic AI Observability is the New Foundation for Enterprise AI

For years, the conversation in enterprise AI centered on model accuracy, latency, and precision. We perfected the art of building predictive systems that could tell us *what* was likely to happen—predicting customer churn, flagging fraudulent transactions, or estimating inventory needs. These were complex, but fundamentally, they were **single-step decision-makers**.

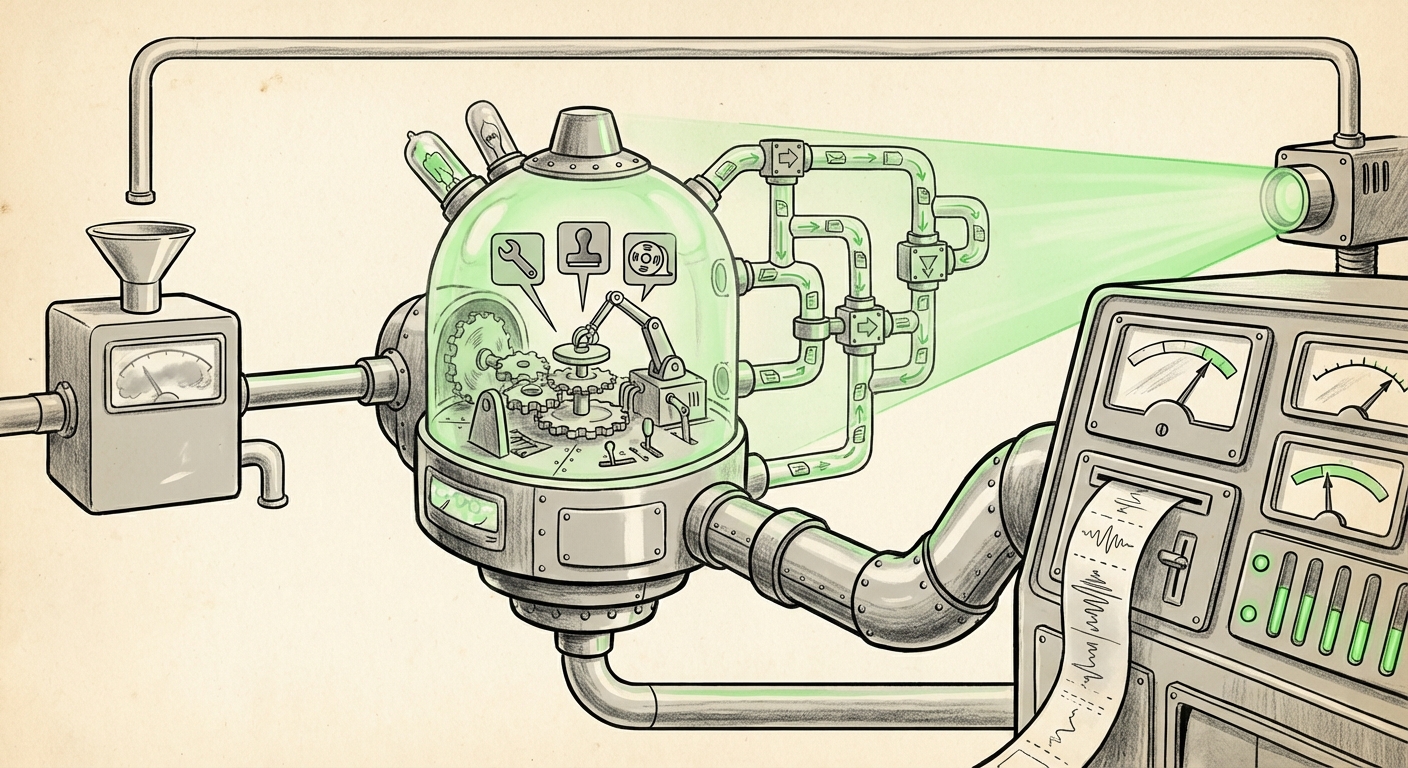

Today, the technology has vaulted forward. We are no longer just building predictors; we are deploying **Agentic AI systems**. These systems don't just predict; they *act*. They plan, they use tools (like accessing databases or sending emails), they iterate on failed attempts, and they execute entire workflows autonomously. An agent might approve a complex supply chain order, diagnose a network failure, or manage a customer service escalation path, making thousands of micro-decisions every hour.

This dramatic shift in capability introduces an equally dramatic challenge: trust. If an agent incorrectly approves a multi-million-dollar transaction, or chooses a suboptimal path in a critical operation, how do we know *why*? As recent industry analysis has highlighted, the core assertion is becoming undeniable: **Unmonitored autonomy is corporate recklessness.** Without robust Agentic AI Observability, we are running high-speed autonomy blind in production.

The Death of Traditional Model Monitoring

Traditional Machine Learning Operations (MLOps) focused on monitoring the inputs and outputs of static models. We looked for data drift in features, monitored model performance decay, and perhaps checked for bias in the final prediction score. This approach is utterly inadequate for agentic systems.

An agent’s decision is not a single event; it is a **synthesis of a chain of events**. Consider an agent tasked with optimizing a marketing campaign:

1. It reads the initial request (Input).

2. It decides which tool to use first (e.g., a database query tool).

3. It interprets the query result (Intermediate Output).

4. It forms a new hypothesis based on that result.

5. It decides to use a second tool (e.g., a budgeting API).

6. It executes the final recommendation (Final Output).

If the final campaign is poor, was the failure in step 2 (choosing the wrong tool), step 3 (misinterpreting the data), or step 6 (a flaw in the final execution prompt)? Traditional monitoring, which sees only the initial request and the final output, cannot tell us.

This complexity underscores the necessity of specialized observability. We need systems that can trace the entire **multi-step reasoning pathway**. This means capturing not just the final token or output, but every internal thought, every tool selection, and every retrieved piece of context.
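To make the marketing-campaign chain concrete, here is a minimal Python sketch of a per-step trace. It assumes each step can be marked pass or fail by some check (a validator or a human reviewer; that check is hypothetical here), and the tool names `sales_db.query` and `budget_api.allocate` are illustrative, not from any real system. Because every step is recorded in order, finding where the chain went wrong becomes a lookup rather than guesswork.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Step:
    index: int   # 1-based position in the reasoning chain
    kind: str    # e.g. "input", "tool_choice", "interpretation", "hypothesis", "output"
    detail: str  # what the agent did or produced at this step
    ok: bool = True  # outcome of a per-step check (hypothetical validator or reviewer)


@dataclass
class Trace:
    task: str
    steps: List[Step] = field(default_factory=list)

    def record(self, kind: str, detail: str, ok: bool = True) -> Step:
        """Append the next step; indices follow the order the agent acted in."""
        step = Step(index=len(self.steps) + 1, kind=kind, detail=detail, ok=ok)
        self.steps.append(step)
        return step

    def first_failure(self) -> Optional[Step]:
        """Return the earliest step whose check failed, or None if all passed."""
        return next((s for s in self.steps if not s.ok), None)


trace = Trace(task="optimize marketing campaign")
trace.record("input", "Raise Q3 campaign conversion")
trace.record("tool_choice", "sales_db.query")
trace.record("interpretation", "Read the 'returns' column as sales", ok=False)
trace.record("hypothesis", "Shift spend to the region with the most 'sales'")
trace.record("tool_choice", "budget_api.allocate")
trace.record("output", "Recommend moving 40% of budget to that region")

failed = trace.first_failure()
print(failed.index, failed.kind)  # 3 interpretation
```

Here the final output looks plausible on its own; only the step-level record shows that the error entered at the interpretation step.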

The Technical Mandate: Tracing Multi-Step Reasoning

For engineers, this means adopting the traceability practices now being formalized in LLMOps. At a minimum, we must capture:

- Tool Invocation Logs: Which functions or APIs did the agent decide to call, with what arguments, and what did they return or how did they fail?

- Contextual Retrieval: If using Retrieval-Augmented Generation (RAG), which specific documents or data chunks were retrieved, with what relevance scores, and which of them actually reached the model's context?

- Intermediate Reasoning States: The actual 'scratchpad' or internal monologue the model generates before acting.
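A common way to capture the first two items is to wrap every tool, including the retriever, so each call is logged as it happens. The sketch below assumes an in-process list (`TRACE_LOG`) as a stand-in for a real trace backend; `traced_tool`, `retrieve`, and `send_email` are hypothetical names, and the chunk scores are made up for illustration.

```python
import functools
import json
import time
from typing import Any, Callable, Dict, List

TRACE_LOG: List[Dict[str, Any]] = []  # stand-in for a real trace backend


def traced_tool(func: Callable) -> Callable:
    """Wrap a tool so every call records its name, arguments, result or error, and duration."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        record: Dict[str, Any] = {
            "type": "tool_invocation",
            "tool": func.__name__,
            "args": list(args),
            "kwargs": kwargs,
        }
        start = time.perf_counter()
        try:
            result = func(*args, **kwargs)
            record["result"] = result
            return result
        except Exception as exc:
            record["error"] = repr(exc)
            raise
        finally:
            # Runs on success and failure alike, so failed calls are logged too.
            record["duration_s"] = round(time.perf_counter() - start, 6)
            TRACE_LOG.append(record)
    return wrapper


@traced_tool
def retrieve(query: str, k: int = 2) -> List[Dict[str, Any]]:
    # Hypothetical retriever: returns chunk ids with relevance scores so the
    # trace shows exactly which context the model was given.
    corpus = {"doc-7#2": 0.91, "doc-3#5": 0.84, "doc-9#1": 0.40}
    ranked = sorted(corpus.items(), key=lambda kv: kv[1], reverse=True)[:k]
    return [{"chunk_id": cid, "score": score} for cid, score in ranked]


@traced_tool
def send_email(to: str, body: str) -> str:
    # Hypothetical tool that fails, to show that errors are captured as well.
    raise PermissionError("outbound email disabled in sandbox")


retrieve("Q3 churn drivers")
try:
    send_email("ops@example.com", "summary")
except PermissionError:
    pass

print(json.dumps(TRACE_LOG, indent=2))
```

Logging in the `finally` clause matters: a failed tool call is often the most useful piece of evidence in a trace.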

When we cannot reproduce the agent’s journey from prompt to action, we lose the ability to debug it, secure it, or even prove due diligence. This technical requirement transforms observability from a "nice-to-have" feature into the fundamental plumbing of agentic deployment.

The Governance Gap: From Debugging to Auditing

The leap in capability demands a proportional leap in governance. When AI is operating autonomously in regulated industries—finance, healthcare, legal services—the question of "why" moves from debugging a system error to fulfilling a legal obligation.

This necessity is already drawing the attention of policymakers worldwide. Regulatory frameworks, most prominently the EU AI Act, classify AI systems by risk. High-risk systems must meet requirements for technical documentation, automatic record-keeping (logging), transparency, and human oversight. An agentic system operating unchecked in a high-risk area cannot satisfy those requirements, and is therefore operating outside compliance.

Observability becomes the evidence locker for enterprise accountability:

- Risk Management: If an agent approves a faulty insurance claim, the ability to present a time-stamped, complete transcript of the agent’s reasoning—including every piece of data it referenced—is crucial for liability assessment.

- Bias Detection: Unintentional bias can creep in via tool choice or prompt interpretation. Comprehensive logs allow auditors to replay scenarios and pinpoint exactly where a faulty assumption or biased data sample influenced the autonomous decision.
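A time-stamped transcript can start as an append-only JSON Lines log keyed by a decision identifier. This sketch assumes an in-memory `io.StringIO` sink in place of durable, access-controlled storage; `log_event`, `transcript`, and the insurance-claim data are hypothetical.

```python
import io
import json
from datetime import datetime, timezone
from typing import Any, Dict, List


def log_event(sink: io.TextIOBase, decision_id: str, kind: str, payload: Dict[str, Any]) -> None:
    """Append one UTC-time-stamped event as a JSON line; earlier lines are never rewritten."""
    event = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "decision_id": decision_id,
        "kind": kind,
        "payload": payload,
    }
    sink.write(json.dumps(event, sort_keys=True) + "\n")


def transcript(sink: io.StringIO, decision_id: str) -> List[Dict[str, Any]]:
    """Rebuild the ordered transcript for one decision from the log."""
    events = [json.loads(line) for line in sink.getvalue().splitlines() if line]
    return [e for e in events if e["decision_id"] == decision_id]


log = io.StringIO()
log_event(log, "claim-1042", "data_referenced",
          {"source": "policy_db", "policy_id": "P-88", "coverage": "water"})
log_event(log, "claim-1042", "reasoning", {"text": "Damage type matches a covered peril"})
log_event(log, "claim-2001", "reasoning", {"text": "Unrelated claim"})
log_event(log, "claim-1042", "action", {"decision": "approve", "amount": 12500})

for event in transcript(log, "claim-1042"):
    print(event["ts"], event["kind"], event["payload"])
```

Recording the referenced data as its own event, rather than only the final decision, is what lets an auditor later ask which inputs the agent actually relied on.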

For the C-suite, the message is clear: adopting agentic AI without enforceable, detailed observability pipelines is akin to signing contracts written in a language you cannot read. You will still be held responsible for terms you never understood.

The Future Loop: Observability Fuels Self-Correction

While governance addresses the present risks, observability holds the key to future performance. The most advanced AI architectures aim for **self-healing and self-improving systems**. This cannot happen in a vacuum.

Today, when an agent fails, a human must intervene, diagnose the failure, and manually adjust the system (often by re-engineering the system prompt or retraining a smaller component). The aim is for this manual loop to be replaced, over time, by an automated feedback mechanism.

Robust observability data feeds directly into this future:

- Failure Captured: Detailed logs show the exact inputs, tool failures, and reasoning that led to the suboptimal outcome.

- Automated Review: A meta-agent or specialized monitoring system analyzes this trace data against predefined success metrics.

- Correction Applied: Based on the analysis, the system automatically generates new constraints, adjusts weights in its tool selection model, or generates a refined instruction set for the next iteration.
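The automated review step can begin far simpler than a meta-agent. The sketch below is a crude heuristic, not a causal analysis: it flags tools that appear disproportionately in runs that missed the success metric and turns them into candidate instructions. The trace fields (`tools`, `success`), the tool names, and both thresholds are assumptions for illustration.

```python
from collections import Counter
from typing import Any, Dict, List

# Each trace lists the tools a run called and whether it met the predefined
# success metric. Field names, tool names, and values are hypothetical.
traces: List[Dict[str, Any]] = [
    {"tools": ["crm_lookup", "legacy_pricing_api"], "success": False},
    {"tools": ["crm_lookup", "legacy_pricing_api"], "success": False},
    {"tools": ["crm_lookup", "pricing_api"], "success": True},
    {"tools": ["legacy_pricing_api"], "success": False},
    {"tools": ["crm_lookup", "pricing_api"], "success": True},
]


def review(traces: List[Dict[str, Any]], min_runs: int = 3,
           max_failure_rate: float = 0.5) -> List[str]:
    """Propose constraints for tools whose runs fail more often than allowed."""
    used: Counter = Counter()    # runs in which each tool appeared at least once
    failed: Counter = Counter()  # of those, runs that missed the success metric
    for t in traces:
        for tool in set(t["tools"]):
            used[tool] += 1
            if not t["success"]:
                failed[tool] += 1
    constraints = []
    for tool in sorted(used):
        n = used[tool]
        # Require a minimum sample size before drawing any conclusion.
        if n >= min_runs and failed[tool] / n > max_failure_rate:
            constraints.append(
                f"Avoid {tool} where an alternative exists: "
                f"runs that used it failed {failed[tool]} of {n} times."
            )
    return constraints


# The proposals become part of a refined instruction set for the next iteration.
print("\n".join(review(traces)))
```

Because co-occurrence with failure is not proof of cause, constraints generated this way are best treated as proposals to validate rather than rules to apply blindly.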

This closes the loop, moving AI evolution from sporadic human intervention to continuous, data-driven self-optimization. Without the fine-grained tracing that observability provides, feedback becomes guesswork, severely limiting the speed and resilience of autonomous agents.

Navigating the Ecosystem: The LLMOps Tooling Explosion

Recognition of this observability challenge is driving a rapid evolution in the tooling landscape. The market is quickly splitting between traditional MLOps platforms adapting to LLMs and newly emerging LLMOps specialists.

For platform architects and developers, understanding this ecosystem is vital. The debate is shifting from whether we need traceability to which platform offers the best fidelity for agentic workflows (often involving frameworks like LangChain or AutoGen).

Key differentiators in this new tooling space include:

- Trace Depth: How completely can the tool reconstruct the agent's reasoning process, including nested tool calls and multi-step iterations?

- Cost Attribution: Given that agents often iterate rapidly, precise logging is needed to attribute token costs to specific successful or failed steps.

- Integration: How seamlessly does the tracing data integrate with existing enterprise governance and auditing systems?
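Once each step's token counts are in the trace, cost attribution is largely bookkeeping. This sketch uses `Decimal` to avoid floating-point drift and assumes made-up per-1,000-token prices and step names; substitute your provider's actual rates and the token counts your tracing tool records.

```python
from decimal import Decimal
from typing import Dict, List

# Hypothetical prices in USD per 1,000 tokens; substitute your provider's rates.
PRICE_PER_1K = {"input": Decimal("0.003"), "output": Decimal("0.015")}

# Per-step token counts as a trace might record them (illustrative numbers).
steps: List[Dict] = [
    {"step": "plan", "input_tokens": 1200, "output_tokens": 300, "succeeded": True},
    {"step": "query_db", "input_tokens": 2000, "output_tokens": 150, "succeeded": False},
    {"step": "query_db_retry", "input_tokens": 2200, "output_tokens": 150, "succeeded": True},
    {"step": "final_answer", "input_tokens": 3000, "output_tokens": 500, "succeeded": True},
]


def step_cost(step: Dict) -> Decimal:
    """Cost of one step: input and output tokens priced separately, per 1,000 tokens."""
    return (
        step["input_tokens"] * PRICE_PER_1K["input"]
        + step["output_tokens"] * PRICE_PER_1K["output"]
    ) / 1000


def attribute(steps: List[Dict]) -> Dict[str, Decimal]:
    """Split total spend between steps that succeeded and steps that failed."""
    totals = {"succeeded": Decimal(0), "failed": Decimal(0)}
    for step in steps:
        totals["succeeded" if step["succeeded"] else "failed"] += step_cost(step)
    return totals


totals = attribute(steps)
wasted_share = totals["failed"] / (totals["succeeded"] + totals["failed"])
print(f"succeeded: ${totals['succeeded']}, failed: ${totals['failed']}, "
      f"wasted share: {wasted_share:.1%}")
```

Attributing cost per step, rather than per request, is what makes the price of retries and dead ends visible.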

This competitive environment is positive, as it forces observability features—which were once specialized add-ons—to become baseline requirements for any platform serious about deploying autonomous agents.

Practical Implications: Actionable Insights for Today

How do businesses move from recognizing the danger of unmonitored autonomy to building a foundation of trust?

1. Mandate Traceability by Design (For Developers and Engineers)

Do not treat observability as an afterthought bolted onto the end of development. When designing an agent pipeline, make the logging mechanism the first design requirement. Use open standards such as OpenTelemetry, or established tracing tools (which often require specific wrappers around tool calls), to ensure that every interaction with an external service and every internal reasoning step is logged immediately. If the LLM cannot generate a clear 'reason why' for its action, the action should not be allowed to proceed in production.
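One way to enforce the 'reason why' rule is a gate in front of the tool dispatcher. In this sketch, `ProposedAction`, `execute_with_rationale`, and the `refund` tool are hypothetical, and a minimum character count stands in, deliberately crudely, for a real check that the rationale is meaningful. The audit record is written before the tool runs, so blocked attempts are logged as well.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass
class ProposedAction:
    tool: str
    args: Dict[str, Any]
    rationale: str  # the model's stated reason for taking this action


class MissingRationale(Exception):
    """Raised when an action arrives without a usable explanation."""


AUDIT: List[Dict[str, Any]] = []  # stand-in for a durable audit log


def execute_with_rationale(
    action: ProposedAction,
    tools: Dict[str, Callable[..., Any]],
    min_chars: int = 20,  # crude proxy for a "clear reason"; an assumption
) -> Any:
    reason = action.rationale.strip()
    allowed = len(reason) >= min_chars
    # Log before executing so that blocked attempts are recorded too.
    AUDIT.append({"tool": action.tool, "args": action.args,
                  "rationale": reason, "allowed": allowed})
    if not allowed:
        raise MissingRationale(f"blocked {action.tool}: no usable rationale")
    return tools[action.tool](**action.args)


tools = {"refund": lambda order_id, amount: f"refunded {amount} on {order_id}"}

ok = ProposedAction("refund", {"order_id": "A-17", "amount": 40},
                    "Customer photo in the ticket shows the item arrived broken")
print(execute_with_rationale(ok, tools))  # refunded 40 on A-17

try:
    execute_with_rationale(ProposedAction("refund", {"order_id": "A-18", "amount": 900}, ""), tools)
except MissingRationale as exc:
    print(exc)  # blocked refund: no usable rationale
```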

2. Establish Clear Accountability Tiers (For Leadership)

Define clear levels of autonomy based on the risk profile of the task. A Level 1 Agent (purely informative) requires less scrutiny than a Level 4 Agent (transactional, irreversible action). Correspondingly, Level 4 agents must be provisioned with the most rigorous, auditable observability stacks, tying resource allocation directly to regulatory risk.
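Tying observability requirements to autonomy levels can be expressed as configuration that a deployment check enforces. Only Levels 1 and 4 are described above; the Level 2 and Level 3 tiers below, and every threshold value, are hypothetical placeholders rather than recommendations.

```python
from dataclasses import dataclass, fields
from enum import IntEnum
from typing import Dict, List


class AutonomyLevel(IntEnum):
    INFORMATIVE = 1          # purely informative (as described in the text)
    RECOMMENDING = 2         # hypothetical intermediate tier
    REVERSIBLE_ACTION = 3    # hypothetical intermediate tier
    IRREVERSIBLE_ACTION = 4  # transactional, irreversible (as described in the text)


@dataclass(frozen=True)
class ObservabilityPolicy:
    trace_sample_rate: float  # fraction of runs traced end to end
    retention_days: int       # how long traces are kept
    capture_reasoning: bool   # store intermediate reasoning states
    replay_snapshots: bool    # store tool outputs needed for replay


# All values are illustrative placeholders, not regulatory guidance.
REQUIRED: Dict[AutonomyLevel, ObservabilityPolicy] = {
    AutonomyLevel.INFORMATIVE: ObservabilityPolicy(0.05, 30, False, False),
    AutonomyLevel.RECOMMENDING: ObservabilityPolicy(0.25, 90, True, False),
    AutonomyLevel.REVERSIBLE_ACTION: ObservabilityPolicy(1.0, 365, True, False),
    AutonomyLevel.IRREVERSIBLE_ACTION: ObservabilityPolicy(1.0, 2555, True, True),
}


def gaps(level: AutonomyLevel, configured: ObservabilityPolicy) -> List[str]:
    """List every setting where a deployment falls short of its tier's minimum."""
    required = REQUIRED[level]
    # Works uniformly because floats, ints, and bools all order (False < True).
    return [
        f.name
        for f in fields(ObservabilityPolicy)
        if getattr(configured, f.name) < getattr(required, f.name)
    ]


deployed = ObservabilityPolicy(trace_sample_rate=0.5, retention_days=365,
                               capture_reasoning=True, replay_snapshots=False)
print(gaps(AutonomyLevel.IRREVERSIBLE_ACTION, deployed))
# ['trace_sample_rate', 'retention_days', 'replay_snapshots']
print(gaps(AutonomyLevel.INFORMATIVE, deployed))  # []
```

The same deployment passes as an informative agent but fails as a transactional one, which is the point of tying the stack to the tier.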

3. Invest in Human-in-the-Loop Review Tools (For Operations)

Observability data is useless if it requires a data scientist to manually sift through gigabytes of raw JSON logs. Businesses must invest in visualization tools that translate complex traces into human-readable narratives, allowing operations staff or compliance officers to quickly grasp the 'story' of a complex agent decision.
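A first step toward human-readable narratives is rendering each trace event as a numbered plain-English sentence. The event schema (`type`, `data`), the tool name `claims_db.lookup`, and the claim details below are hypothetical.

```python
from typing import Any, Dict, List


def describe(event: Dict[str, Any]) -> str:
    """Turn one trace event into a plain-English clause."""
    kind, d = event["type"], event["data"]
    if kind == "tool_call":
        args = ", ".join(f"{k}={v}" for k, v in d["args"].items())
        return f"called {d['tool']}({args})"
    if kind == "tool_result":
        return f"got {d['summary']} from {d['tool']}"
    if kind == "reasoning":
        return f'concluded: "{d["text"]}"'
    if kind == "action":
        return f"took action: {d['decision']}"
    # Unknown events are surfaced rather than silently dropped.
    return f"recorded an unrecognized '{kind}' event"


def narrate(events: List[Dict[str, Any]]) -> str:
    """Render a trace as numbered sentences a compliance officer can read."""
    return "\n".join(f"{i}. The agent {describe(e)}." for i, e in enumerate(events, start=1))


events = [
    {"type": "tool_call", "data": {"tool": "claims_db.lookup", "args": {"claim_id": "C-1042"}}},
    {"type": "tool_result", "data": {"tool": "claims_db.lookup",
                                     "summary": "a water-damage claim for 12,500 USD"}},
    {"type": "reasoning", "data": {"text": "Water damage is a covered peril under policy P-88"}},
    {"type": "action", "data": {"decision": "approve claim C-1042"}},
]
print(narrate(events))
```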

4. Prepare for Regulatory Replay (For Compliance)

Assume that regulators, insurers, or internal legal teams will, at some point, demand a full replay of an agent’s critical decision. Your observability system must therefore store more than logs: it must capture enough context to reproduce the decision as it was made, matching the state of the world at that moment (the data the agent looked up and the time of execution).
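The core idea behind replay is recording what each tool returned at decision time, so a later replay serves those recorded outputs instead of querying a world that has since changed. This is a minimal sketch: `ToolRecorder` and `get_price` are hypothetical, and a production system would likely also need to snapshot model versions, prompts, and configuration.

```python
import json
from datetime import datetime, timezone
from typing import Any, Callable, Dict, Optional


class ToolRecorder:
    """Record tool outputs during a live run; serve the same outputs on replay."""

    def __init__(self, snapshot: Optional[Dict[str, Any]] = None) -> None:
        self.replaying = snapshot is not None
        if snapshot is None:
            snapshot = {"started_at": datetime.now(timezone.utc).isoformat(), "calls": []}
        self.snapshot = snapshot
        self._cursor = 0  # next recorded call to serve during replay

    def call(self, name: str, func: Callable[..., Any], **kwargs: Any) -> Any:
        if self.replaying:
            calls = self.snapshot["calls"]
            if self._cursor >= len(calls):
                raise RuntimeError("replay requested more tool calls than were recorded")
            recorded = calls[self._cursor]
            self._cursor += 1
            # A mismatch means the replayed agent took a different path than the original.
            if recorded["tool"] != name or recorded["kwargs"] != kwargs:
                raise RuntimeError(f"replay diverged at call {self._cursor}: expected {recorded['tool']}")
            return recorded["output"]
        output = func(**kwargs)
        self.snapshot["calls"].append({
            "tool": name,
            "kwargs": kwargs,
            "output": output,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        return output


live_prices = {"SKU-1": 19.99}


def get_price(sku: str) -> float:
    # Hypothetical tool reading mutable, real-world state.
    return live_prices[sku]


recorder = ToolRecorder()
price_at_decision = recorder.call("get_price", get_price, sku="SKU-1")
saved = json.dumps(recorder.snapshot)  # persist alongside the decision record

live_prices["SKU-1"] = 24.99  # the world changes after the decision

replay = ToolRecorder(json.loads(saved))
print(replay.call("get_price", get_price, sku="SKU-1"))  # 19.99, the value the agent saw
```

Failing loudly on divergence is a deliberate choice: a replay that silently falls back to live data would no longer show what the agent actually saw.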

Conclusion: Observability is the Bridge to Reliable Autonomy

The transition to Agentic AI is not merely an upgrade in performance; it is a fundamental restructuring of how software interacts with the business world. It promises efficiency gains far beyond what static models could offer, but it demands a level of responsibility previously reserved for human decision-makers.

The central theme emerging across the technology sector is clear: Trust is built on transparency, and transparency in autonomous systems is defined by observability. For enterprises ready to move beyond pilot projects and deploy powerful, decision-making agents at scale, mastering the art and science of agentic observability is no longer optional—it is the prerequisite for safe, reliable, and successful transformation.