The Great Power Pivot: Anthropic’s Energy Pledge Reveals the True Cost of Advanced AI

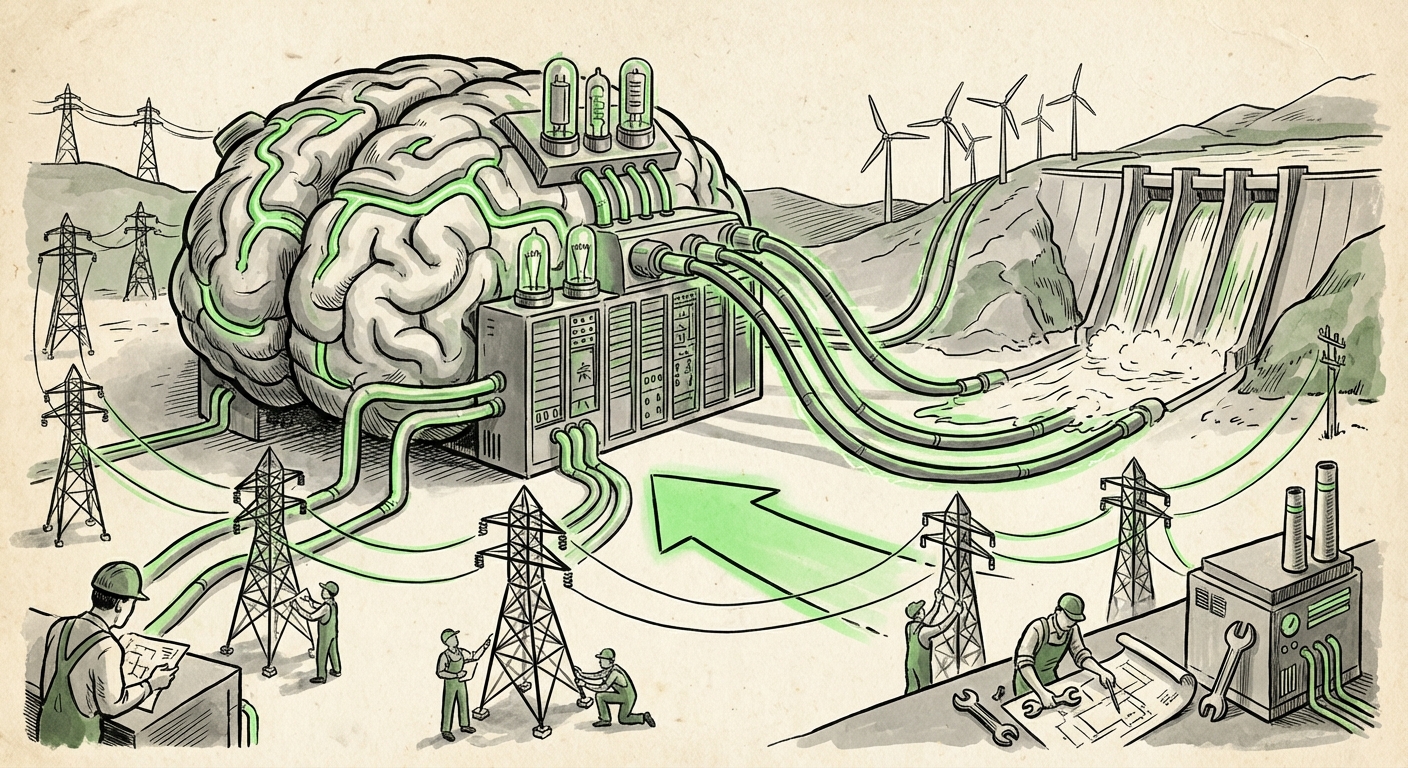

The rapid advancement of Artificial Intelligence—from cutting-edge Large Language Models (LLMs) to sophisticated multimodal systems—is often discussed in terms of parameters, speed, and intelligence benchmarks. However, behind the scenes, the industry is facing a far more fundamental constraint: power. Electricity is the hidden input that determines the pace of AI progress, and the associated infrastructure costs are becoming astronomical.

Anthropic’s recent public commitment to fully absorb the grid upgrade costs stemming from its new data center construction, invest directly in new power generation, and cap energy consumption during peak utility hours is not just a nice gesture; it is a clear signal that AI growth is about to collide with physical reality. For analysts, this move is a reason to look beyond the code and examine the iron, concrete, and kilowatts underwriting the AI revolution.

The Unseen Engine: Contextualizing AI’s Energy Footprint

To understand the gravity of Anthropic’s financial promise, we must first grasp the scale of the energy demand. Training the largest, most powerful LLMs requires massive, sustained computational power. This is not like running a typical office server; it involves thousands of high-powered accelerators running continuously for months.

Forecasts of AI data center energy consumption for 2024 and beyond are sobering. Many analyses suggest that AI’s energy demand could rival the total consumption of entire medium-sized countries within the next decade. This growth stems from two main factors:

- Model Scaling: Each new generation of models is substantially larger than the last and requires more energy for the initial, intensive training phase.

- Inference Overload: As these models are deployed across billions of user queries (inference), the cumulative, constant draw starts to dwarf the initial training costs.
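The second factor can be made concrete with simple arithmetic. The sketch below estimates how many days of serving it takes for cumulative inference energy to pass a one-time training cost. The function name and every input value are hypothetical, chosen only to show the shape of the calculation; they are not measurements of any real model.

```python
def days_until_inference_exceeds_training(training_mwh: float,
                                          wh_per_query: float,
                                          queries_per_day: float) -> float:
    """Days of serving after which cumulative inference energy passes training energy."""
    # Convert the daily inference draw from watt-hours to megawatt-hours.
    daily_inference_mwh = wh_per_query * queries_per_day / 1_000_000
    if daily_inference_mwh <= 0:
        raise ValueError("daily inference energy must be positive")
    return training_mwh / daily_inference_mwh


# Purely illustrative inputs (assumptions, not real figures).
days = days_until_inference_exceeds_training(
    training_mwh=10_000,          # one-time training energy
    wh_per_query=1.0,             # energy per served query
    queries_per_day=100_000_000,  # daily query volume
)
print(f"Inference overtakes training after about {days:.0f} days")
```

With these made-up inputs the crossover arrives after 100 days; the point is only that a fixed training cost is eventually overtaken by a steady daily draw.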

When a company like Anthropic commits to covering grid upgrades, it is acknowledging that its next-generation hardware deployment will create a localized, sudden spike in demand that existing local utilities cannot immediately handle. This is the cost of pushing the frontier: it requires preemptive infrastructure build-out.

Hyperscalers and the New Power Procurement Game

Anthropic is not operating in a vacuum. Its action responds to, and may set a precedent for, industry-wide trends among hyperscalers like Google, Microsoft, and Amazon. Their data center power procurement strategies show that securing stable, clean power is now the primary bottleneck for expansion, overshadowing even the supply of specialized AI chips.

These large cloud providers are increasingly bypassing standard utility procurement channels. Instead, they are signing massive Power Purchase Agreements (PPAs) to fund the construction of solar farms, wind projects, or even small nuclear facilities dedicated to fueling their AI operations. Why? Reliability and sustainability branding.

Anthropic’s pledge to invest in "new power generation" directly mirrors this high-level strategy. However, by explicitly promising to cover *consumer* electricity costs and grid upgrades, it is effectively de-risking the transition for the immediate community. This strategy has two immediate benefits:

- Regulatory Acceptance: It smooths the path for zoning and permitting by demonstrating proactive concern for local grid stability.

- Talent and Investor Appeal: It signals a long-term commitment to sustainable, reliable operations, appealing to both environmentally conscious investors and top-tier engineers.

The implication for other AI developers is clear: Capital expenditure must now include significant, long-term energy infrastructure guarantees. Simply leasing rack space is no longer sufficient when the primary limiting factor is the local substation's capacity.

The Technical Tightrope: Efficiency vs. Scale

While capital investment secures the power supply, the technological race is on to ensure that power is used wisely. The commitment to "cap its data centers' energy consumption during peak hours" is a powerful hint that efficiency optimizations are paramount.

The push for AI hardware efficiency is running up against ever-growing model sizes, and the industry is caught in a trade-off. While new chip architectures (like custom ASICs or highly optimized GPUs) offer better performance-per-watt than previous generations, the sheer size of the models being deployed tends to eat up those efficiency gains.

For Anthropic, peak-hour capping suggests the company is employing sophisticated dynamic load-balancing systems. This involves intelligent software that shifts heavy, non-time-critical training jobs to off-peak hours (often late at night) when electricity is cheaper and grid demand is lower. This technical maneuvering allows it to maintain high utilization rates without triggering brownouts or incurring exorbitant peak-demand pricing penalties.
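To make the idea of shifting load concrete, here is a minimal sketch of a peak-aware scheduler. It is not Anthropic’s system, whose details are not public. The peak window, the `Job` fields, and the `plan_hour` function are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Assumed utility peak window: 16:00 through 20:59 local time.
PEAK_HOURS = range(16, 21)


@dataclass
class Job:
    name: str
    power_mw: float
    deferrable: bool  # True for training/batch work, False for live inference


def plan_hour(hour: int, jobs: List[Job], peak_cap_mw: float) -> Tuple[List[Job], List[Job]]:
    """Return (run_now, deferred) for one hour of the day.

    Off-peak, every job runs. During peak hours, non-deferrable jobs always
    run (even if they alone exceed the cap), and deferrable jobs are admitted
    largest-first only while the total draw stays at or under the cap.
    """
    if hour not in PEAK_HOURS:
        return list(jobs), []

    run_now = [j for j in jobs if not j.deferrable]
    deferred: List[Job] = []
    load = sum(j.power_mw for j in run_now)

    candidates = sorted((j for j in jobs if j.deferrable),
                        key=lambda j: j.power_mw, reverse=True)
    for job in candidates:
        if load + job.power_mw <= peak_cap_mw:
            run_now.append(job)
            load += job.power_mw
        else:
            deferred.append(job)  # wait for an off-peak hour
    return run_now, deferred


# Hypothetical workload, for illustration only.
jobs = [
    Job("chat-inference", 40, deferrable=False),
    Job("pretraining-run", 50, deferrable=True),
    Job("eval-batch", 15, deferrable=True),
]
run_now, deferred = plan_hour(18, jobs, peak_cap_mw=60)
print([j.name for j in run_now], [j.name for j in deferred])
```

In this toy run at 18:00, live inference and the small evaluation batch fit under the 60 MW cap, while the large training run is pushed to an off-peak hour. A production orchestrator would also need checkpointing, price signals, and utility demand-response events, none of which are modeled here.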

The future of competitive AI development will hinge not just on who has the largest model, but who has the most efficient orchestration layer managing that model’s power draw. This convergence of data science and electrical engineering expertise is a critical, emerging skill set.

Societal Friction: The Zoning Wars and Social License to Operate

The physical manifestation of AI, the massive, perpetually humming data center, is increasingly becoming a public concern. Local government reactions to data center energy draw reveal mounting political friction.

Cities and counties, often seeking tax revenue, are suddenly finding their municipal power grid capacity severely tested by proposals for AI data centers that require power equivalent to tens of thousands of homes. This has led to moratoriums, public pushback, and intense regulatory scrutiny: the "Zoning Wars" of this section’s title.

Anthropic’s promise to cover consumer electricity costs is a strategic move to secure its social license to operate. By directly offsetting the costs or stress its facilities place on the local grid that serves residents, the company transforms from a disruptive external entity into a responsible infrastructure partner. This sets a high bar for future expansions.

Future Implications: The New Calculus of AI Deployment

What does this entire development—the massive capital commitment, the focus on procurement, and the necessary political outreach—mean for the future trajectory of Artificial Intelligence?

1. Energy Becomes the Primary Barrier to Entry

In the early days of LLMs, the barrier was talent and access to specialized chips (like those from Nvidia). Now, the barrier is increasingly energy security and capital liquidity. Only companies with billions in funding, the ability to secure long-term, massive energy contracts, and the technical expertise to manage complex utility relationships will be able to build and sustain frontier models.

This concentrates market power further. Smaller startups will become increasingly reliant on leasing compute time from the very hyperscalers who are busy securing their own energy empires.

2. Geographical Shifts in AI Hubs

AI development will no longer follow fiber optic cables alone; it will follow cheap, abundant, and reliable power sources. We will see increased investment in regions that offer:

- Access to cheap renewable energy zones (e.g., wind corridors or geothermal sites).

- Favorable regulatory environments that fast-track utility interconnections.

- Proximity to transmission infrastructure, even if it requires private capital investment to upgrade it.

This could lead to a decentralization away from traditional tech hubs toward resource-rich, but potentially remote, areas, provided the company is willing to finance the necessary transmission upgrades.

3. Mandatory Green Tech Integration

The concept of "sustainability" is moving from a marketing bullet point to a core architectural requirement. Future AI platforms will be judged not just on their accuracy (e.g., Claude vs. GPT) but on their PUE (Power Usage Effectiveness) and their reliance on 24/7 carbon-free energy (CFE).
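Both metrics reduce to simple ratios. PUE is total facility energy divided by the energy delivered to IT equipment, so 1.0 is the theoretical ideal. The hourly-matching score below is a simplified take on 24/7 carbon-free energy accounting, in which surplus clean supply in one hour cannot offset a shortfall in another. The function names and sample figures are illustrative assumptions.

```python
from typing import Sequence


def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def hourly_cfe_score(load_kwh: Sequence[float], cfe_supply_kwh: Sequence[float]) -> float:
    """Share of load matched, hour by hour, with carbon-free supply."""
    total_load = sum(load_kwh)
    if total_load <= 0:
        raise ValueError("total load must be positive")
    # In each hour, only supply up to that hour's load counts as matched.
    matched = sum(min(load, supply) for load, supply in zip(load_kwh, cfe_supply_kwh))
    return matched / total_load


# Hypothetical figures, for illustration only.
facility_pue = pue(total_facility_kwh=1200, it_equipment_kwh=1000)
score = hourly_cfe_score([100, 100, 100, 100], [150, 50, 100, 0])
print(facility_pue, score)
```

In the sample, total clean supply over the four hours is 300 kWh against a 400 kWh load, yet the hourly score is 0.625 rather than 0.75 because the first hour’s surplus does not carry over.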

Anthropic’s move effectively codifies the expectation that AI leaders must be infrastructure builders, not just software developers.

Actionable Insights for Stakeholders

This energy pivot provides clear directives for different segments of the technology ecosystem:

For Technology Leaders (CEOs and CTOs):

Actionable Insight: Treat power supply as a mission-critical asset, equal to compute supply. Integrate energy planning into the earliest stages of model development. If your roadmap requires a 10x increase in training compute, you must have a verified, funded plan for securing the matching power capacity (up to 10x, less whatever performance-per-watt gains your new hardware delivers), including contingency plans for grid instability.
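As a back-of-the-envelope check on that planning rule, the sketch below scales a current power budget by a compute multiplier and divides by any hardware efficiency gain. It assumes the training run takes the same wall-clock time as before. The function name and all numbers are hypothetical.

```python
def required_power_mw(current_power_mw: float,
                      compute_multiplier: float,
                      perf_per_watt_gain: float = 1.0) -> float:
    """Power needed to deliver `compute_multiplier` times the compute.

    Assumes the same training duration, so power scales with compute and
    shrinks with any performance-per-watt improvement in the new hardware.
    """
    if perf_per_watt_gain <= 0:
        raise ValueError("perf_per_watt_gain must be positive")
    return current_power_mw * compute_multiplier / perf_per_watt_gain


# Illustrative only: a 50 MW site planning for 10x the training compute.
same_hardware = required_power_mw(50, 10)                            # no efficiency gain
newer_hardware = required_power_mw(50, 10, perf_per_watt_gain=2.5)   # assumed 2.5x gain
print(same_hardware, newer_hardware)
```

Under these assumptions the requirement falls from 500 MW to 200 MW with the more efficient hardware, which is still four times the original budget.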

For Investors and Financial Analysts:

Actionable Insight: Scrutinize CapEx disclosures for line items related to energy infrastructure (PPAs, direct generation investments, grid upgrade surety bonds). A company *not* making significant proactive energy investments may face throttling or higher operational costs down the line due to reliance on volatile peak pricing or aging infrastructure.

For Policymakers and Regulators:

Actionable Insight: Recognize that AI infrastructure build-out requires massive lead time for utility upgrades. Policymakers must streamline interconnection processes while establishing performance mandates (like peak-hour usage caps or CFE requirements) to ensure that the AI boom benefits society broadly, rather than stressing residential power systems.

Anthropic’s announcement serves as a crucial inflection point. The age of easily scalable, virtually unlimited computational power is ending, replaced by an era where physical constraints—thermodynamics, land use, and electricity generation—dictate the speed of innovation. The successful AI companies of the next decade will be those that master not just the algorithms, but the entire energy stack required to run them.