The $380 Billion AI Bet: Analyzing Anthropic's Valuation and the New Frontier of AI Power

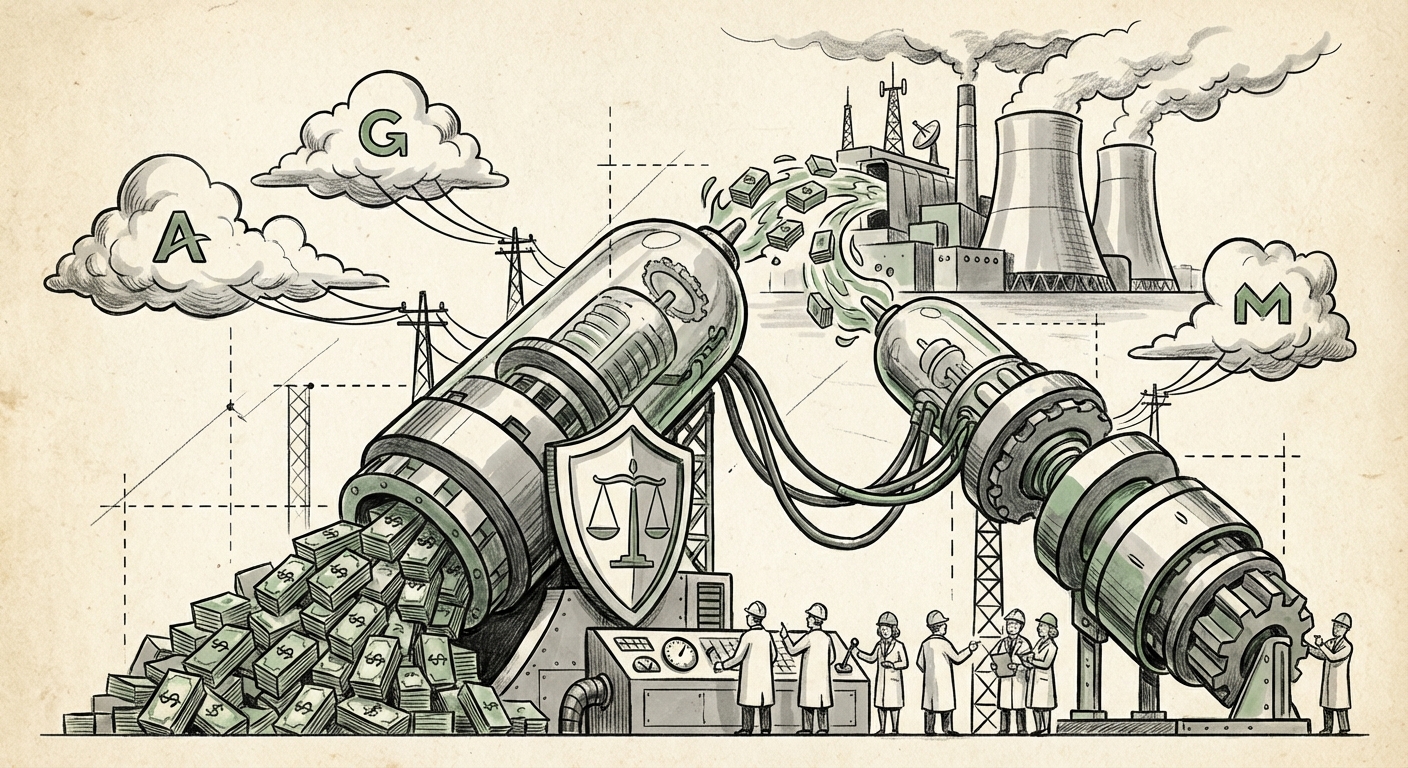

The world of Artificial Intelligence just witnessed a financial earthquake. Anthropic, the creator of the Claude family of large language models (LLMs), has closed a staggering Series G funding round reportedly topping $30 billion, catapulting its post-money valuation to an eye-watering $380 billion. To put this in perspective, this single funding event makes Anthropic one of the most valuable private companies globally, placing it squarely in the stratosphere alongside established tech giants.

For those of us tracking the exponential growth of generative AI, this isn't just a headline; it’s a critical indicator of market dynamics, competitive strategy, and the future direction of foundational technology development. This analysis dives deep into what this valuation means, who is underwriting this monumental risk, and the practical implications for businesses betting their futures on AI.

The Shockwave: Deconstructing the $380 Billion Figure

Why is Anthropic worth so much? Traditional valuation metrics based on current revenue are nearly meaningless in the current AI frontier landscape. This valuation is fundamentally a bet on future utility, strategic access, and moat building.
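To ground the headline numbers, here is a minimal sketch of the arithmetic that links a raise to a post-money valuation. It rounds the reported figure to $30 billion and assumes the whole amount is new primary capital. That is a simplification: secondary sales, option pools, and other deal terms are ignored, and `round_terms` is an illustrative helper, not a standard function.

```python
def round_terms(post_money_usd: float, raised_usd: float) -> dict:
    """Derive the pre-money valuation and the stake sold in a priced round."""
    if raised_usd <= 0 or raised_usd >= post_money_usd:
        raise ValueError("raised amount must be positive and below post-money")
    # Post-money = pre-money + new capital, so pre-money is the difference.
    pre_money = post_money_usd - raised_usd
    # New investors own their cheque divided by the post-money valuation.
    new_investor_stake = raised_usd / post_money_usd
    return {"pre_money_usd": pre_money, "new_investor_stake": new_investor_stake}


# Assumption: the round is treated as exactly $30B at a $380B post-money.
terms = round_terms(post_money_usd=380e9, raised_usd=30e9)
print(f"pre-money: ${terms['pre_money_usd'] / 1e9:.0f}B")    # pre-money: $350B
print(f"stake sold: {terms['new_investor_stake']:.1%}")      # stake sold: 7.9%
```

Under these assumptions, the round implies a pre-money valuation of about $350 billion, with new money buying a little under 8% of the company.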

1. The Competitive Landscape: Challenging the Incumbent

Anthropic exists primarily in direct competition with OpenAI (backed by Microsoft). For Anthropic to command a valuation near or exceeding its main rival suggests that the market perceives the competition as a genuine duopoly, rather than a simple leader-follower dynamic. This signals a belief that model diversity—having two leading, highly capable, and differently governed foundational models—is essential for the market's stability and innovation speed.

When we examine this against OpenAI's recent valuation milestones, we see a furious escalation in price tags across the board. This isn't just about feature parity; it’s about securing the intellectual property, talent, and compute required to define the next era of AGI. The investment is essentially a pre-purchase of market dominance.

2. The Hyperscaler Cloud Wars: Securing Compute Access

To understand the mechanics of this raise, we must look at who provided the capital. Rounds of this size are rarely pure equity plays; they tend to double as strategic infrastructure pacts.

Major cloud providers like Amazon (AWS) and Google (GCP) are investing billions. For them, owning a stake in Anthropic means securing preferential access to run Claude models on their infrastructure, which in turn helps defend their cloud market share. If you are a major enterprise deciding between Azure, AWS, or GCP, the availability and integration of a top-tier model like Claude or GPT becomes a deciding factor. The investment effectively turns Anthropic into a strategic asset that its backers cannot easily replace.

3. The Cost of Being Cutting-Edge: The Compute Imperative

The economics of frontier AI development are brutal. Training models capable of surpassing Claude 3 Opus or GPT-4 requires infrastructure costing hundreds of millions, possibly billions, of dollars in specialized GPU clusters (such as those built on Nvidia’s Blackwell architecture). The sheer magnitude of the $30 billion raise reflects the operational runway needed to:

- Secure scarce, cutting-edge hardware years in advance.

- Fund iterative training runs that refine safety and capability.

- Maintain a top-tier research team capable of theoretical breakthroughs.

For a technical audience, this valuation is a necessary premium paid to maintain footing in the compute arms race. If you cannot afford the GPUs, you cannot compete.
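A back-of-the-envelope sketch shows how quickly these costs compound. It prices a single training run as GPUs times hours times an hourly rate. Every input below is a hypothetical placeholder chosen to show the order of magnitude, not a reported figure for any real model, and `training_run_cost` is an illustrative helper.

```python
def training_run_cost(gpu_count: int, days: float, usd_per_gpu_hour: float,
                      utilization: float = 1.0) -> float:
    """Rental-style cost of one training run: GPUs x hours x hourly price.

    `days` is the run length at perfect utilization. A utilization below 1.0
    models time lost to failures and restarts, which stretches the billed
    GPU-hours needed for the same amount of useful work.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    gpu_hours = gpu_count * days * 24 / utilization
    return gpu_hours * usd_per_gpu_hour


# HYPOTHETICAL inputs: cluster size, duration, price, and utilization are
# all assumptions made for illustration.
cost = training_run_cost(gpu_count=20_000, days=90,
                         usd_per_gpu_hour=2.50, utilization=0.8)
print(f"${cost / 1e6:.0f}M")
```

With these placeholder inputs a single run lands at roughly $135 million, before counting failed experiments, inference serving, salaries, or the capital cost of owning the hardware outright.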

The Safety Premium: Differentiating Through Governance

Anthropic was founded by researchers concerned about the rapid, potentially unchecked commercialization of powerful AI. Their core innovation, *Constitutional AI*, guides model behavior using a set of principles (a "constitution") rather than solely relying on human feedback for every decision. This focus is a major differentiator.

Investors appear to be placing a "Safety Premium" on Anthropic. As governments (especially in the EU and US) begin to draft serious regulation for frontier models, companies that can demonstrate built-in safety mechanisms, such as Constitutional AI, are viewed as less risky investments because they are seen as more likely to clear future compliance hurdles smoothly. For businesses, this translates to adopting a partner whose core philosophy aligns better with long-term governance and liability management.

Implications for the Future of AI Development

This massive capitalization event shifts the landscape in several profound ways:

The Bifurcation of AI Development

We are moving rapidly toward a world where frontier AI development is dominated by two or three heavily financed entities (OpenAI/Microsoft, Anthropic/Cloud Allies, and potentially a well-funded Google/DeepMind axis). The barrier to entry for building a general-purpose foundation model has become effectively insurmountable for startups lacking billion-dollar backing. This consolidation concentrates immense power and expertise.

The Commoditization of "Good Enough" AI

While the frontier models—Claude 3.5 and its successors—will remain incredibly expensive to build, the massive investment drives down the cost of *using* them. The competition between hyperscalers ensures that integration, fine-tuning, and deployment services become cheaper and more accessible to smaller businesses. If you don't need absolute state-of-the-art reasoning for your core product, you will soon be able to leverage highly capable, specialized models for a fraction of the cost.

The Rise of Model-Centric Services (Not Just APIs)

The investment isn't just for training; it's for deployment and vertical integration. Businesses must stop viewing Claude or GPT as merely a replaceable API endpoint. Instead, they must see them as integrated technology stacks. Anthropic’s backers are ensuring that the cloud platforms offer superior, deeply optimized environments for running Claude, turning the relationship into an exclusive ecosystem play rather than a simple transactional one.

Actionable Insights for Businesses and Strategists

How should organizations react to this AI valuation arms race?

1. Evaluate Model Portfolios Strategically, Not Emotionally

Do not get locked into one vendor based on hype. The battle between Claude and GPT means that capabilities shift rapidly. Businesses should adopt a *multi-model strategy*. Test your core tasks—summarization, coding, creative generation—on both leading platforms. Your enterprise success will rely on agility in switching between providers based on performance benchmarks, not long-term brand loyalty.
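One way to make a multi-model strategy concrete is a small evaluation harness that treats every provider as an interchangeable function. The sketch below is a minimal illustration: the two providers are local stubs standing in for real API clients, and the names `Task`, `score_providers`, and `pick_provider` are hypothetical, not part of any vendor SDK.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# A provider is any function mapping a prompt to a completion. In practice
# each one would wrap a vendor SDK call; here they are stubs.
Provider = Callable[[str], str]


@dataclass
class Task:
    name: str
    prompt: str
    passes: Callable[[str], bool]  # task-specific acceptance check


def score_providers(providers: Dict[str, Provider],
                    tasks: List[Task]) -> Dict[str, float]:
    """Return the fraction of tasks each provider passes."""
    if not tasks:
        raise ValueError("at least one task is required")
    scores = {}
    for label, call in providers.items():
        passed = sum(1 for task in tasks if task.passes(call(task.prompt)))
        scores[label] = passed / len(tasks)
    return scores


def pick_provider(scores: Dict[str, float]) -> str:
    """Choose the highest-scoring provider; ties go to the first one listed."""
    return max(scores, key=scores.get)


# Stub providers with made-up behavior (assumptions for illustration only).
def provider_a(prompt: str) -> str:
    return prompt.upper()


def provider_b(prompt: str) -> str:
    return prompt[:10]


tasks = [
    Task("shout", "hello", lambda out: out == "HELLO"),
    Task("short", "a very long sentence indeed", lambda out: len(out) <= 10),
    Task("keeps_length", "abcdefghijkl", lambda out: len(out) == 12),
]
scores = score_providers({"provider_a": provider_a, "provider_b": provider_b}, tasks)
best = pick_provider(scores)
print(scores, best)
```

Because the harness only depends on the `Provider` signature, swapping vendors means changing one dictionary entry rather than rewriting application code. Rerunning the same task set on each model release keeps the choice tied to measured performance.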

2. Prioritize Compute Strategy Over Model Choice (Initially)

If your company relies on proprietary data for fine-tuning or developing RAG (Retrieval-Augmented Generation) systems, your compute availability is paramount. Understand which cloud provider offers the best long-term commitment, latency, and security guarantees for the models you need. The investment structure shows that access to compute is currently as valuable as the intelligence of the models themselves.

3. Embrace Safety and Governance Early

If you are building customer-facing AI applications, Anthropic’s emphasis on safety should serve as a warning: regulatory scrutiny is coming. Tools that let you define and audit the "constitution" or guardrails governing your AI outputs will soon move from a nice-to-have feature to a critical compliance requirement. Choosing vendors who prioritize this reduces your future legal and reputational risk.
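At the application level, auditable guardrails can start as a list of named rules applied to every model output. The sketch below is a simple output filter, not Constitutional AI itself (which shapes model behavior during training). The rule names, the email pattern, and the 500-character limit are illustrative assumptions.

```python
import re
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Rule:
    name: str
    violates: Callable[[str], bool]  # True when the output breaks the rule


# Hypothetical rule set; a real deployment would derive these from policy.
RULES = [
    Rule("no_email_addresses",
         lambda text: re.search(r"[\w.+-]+@[\w-]+\.[\w.]+", text) is not None),
    Rule("max_length_500", lambda text: len(text) > 500),
]


def audit(output: str, rules: List[Rule] = RULES) -> List[str]:
    """Return the names of every rule the output violates (empty = clean)."""
    return [rule.name for rule in rules if rule.violates(output)]


print(audit("Contact me at jane@example.com"))  # ['no_email_addresses']
print(audit("The forecast is sunny."))          # []
```

Keeping rules as named data rather than scattered conditionals means each blocked output can be logged with the rule that triggered it, which is the kind of trail a compliance review would ask for.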

4. Prepare for Escalating Talent Costs

The intense capital flowing into AI research suggests that the talent driving this innovation, including the engineers who manage these multibillion-dollar compute budgets, will command top-tier compensation for the foreseeable future. Businesses must budget not only for model consumption but also for the specialized AI personnel required to integrate and steward these powerful tools effectively.

Conclusion: The Age of Stratospheric Capital

Anthropic's $380 billion valuation is a watershed moment. It suggests that frontier AI development is no longer something a typical startup can bootstrap; it is an industrial-scale project requiring capital reserves akin to building next-generation semiconductor fabs or launching space programs. This level of funding signals immense confidence from global financial and technological power brokers in the trajectory of generative intelligence.

The future of AI will be defined by this competition—a high-stakes race fought over hardware access, theoretical breakthroughs, and governance frameworks. For the rest of the industry, the takeaway is clear: the foundation layer is solidifying rapidly, and success will belong to those agile enough to leverage this intense competition while strategically managing the inherent risks of these rapidly evolving, enormously powerful systems.