The Great Energy Reckoning: How AI Giants Are Rewiring Power Grids to Secure Compute

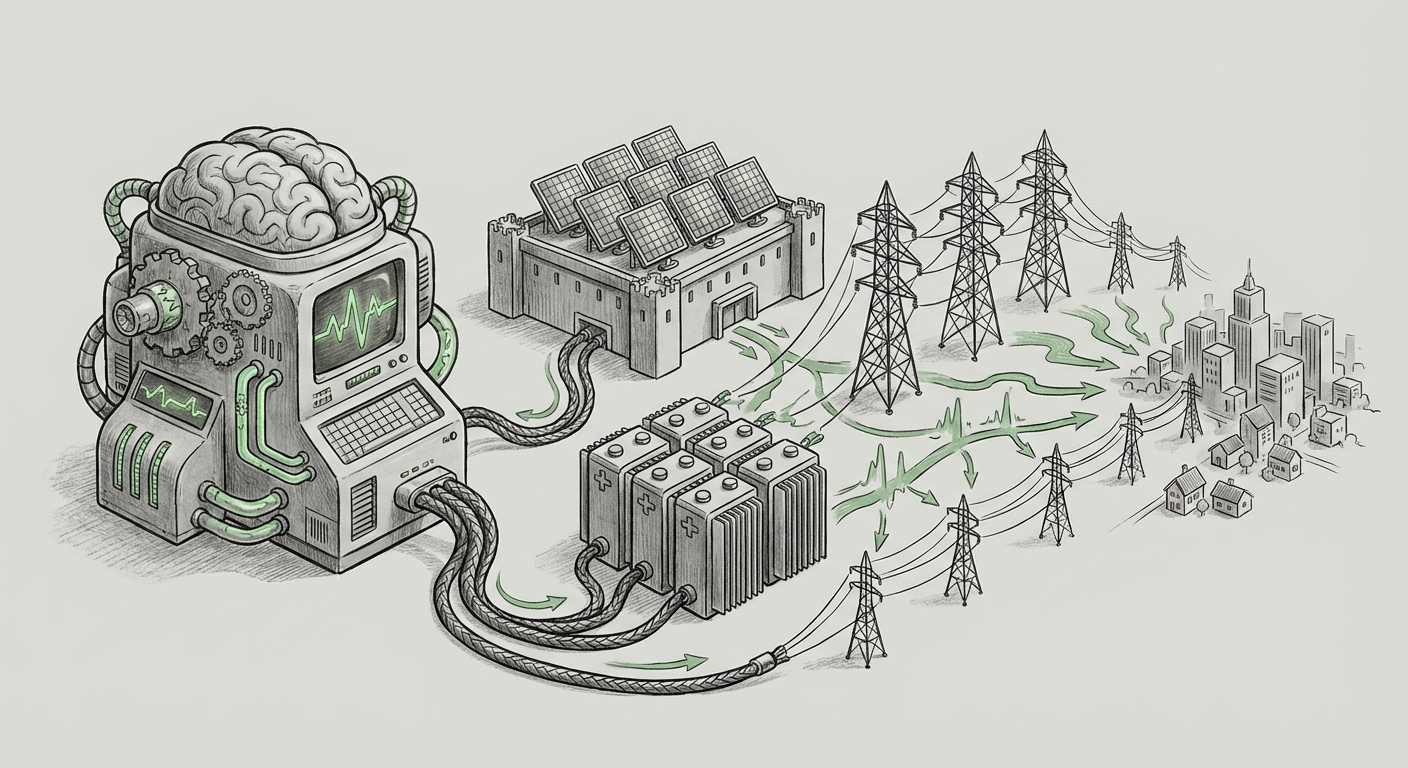

Artificial Intelligence is not just changing how we work; it is fundamentally changing how the world consumes electricity. For years, the immense energy needs of training and running Large Language Models (LLMs) were discussed in abstract technical terms. Now, we are witnessing a powerful shift: AI infrastructure expansion is colliding head-on with municipal power grids, forcing tech giants to take unprecedented responsibility for the physical realities of energy supply.

Anthropic’s recent promise—to fully absorb the costs associated with grid upgrades for their new data centers and even cover consumer electricity costs in the vicinity—is not merely a generous public relations gesture. It is a pragmatic, high-stakes maneuver that signals the beginning of a new era of infrastructure negotiation between Big Tech and the utility sector.

The Context: Why AI Power Demands Are a Crisis, Not a Prediction

To understand why Anthropic is making such a bold financial commitment, we must first grasp the sheer scale of the energy AI requires. Training a single state-of-the-art LLM can consume as much electricity as hundreds of homes use in a year. As models grow larger and inference (the act of running the model) is served to millions of users, total demand climbs steeply.

Projections of AI energy consumption show a curve that alarms grid operators. Data centers have historically accounted for a modest percentage of regional power use, but projections for 2025 and beyond suggest that AI compute demand alone could match the entire annual electricity use of mid-sized nations. This rapid, concentrated demand places immediate, localized stress on electrical infrastructure that was not designed for such fast load growth.

This leads to the first crucial implication: The bottleneck for AI expansion is no longer just GPU availability; it is stable, scalable, and affordable power.

The Financial Reality of Grid Lock

When a hyperscaler decides to build a massive new facility in a promising location, the local utility often must undertake multi-year, multi-billion-dollar upgrades (building new substations, upgrading transmission lines, or even constructing new power plants) to serve the new load. Traditionally, these costs are passed on to ratepayers over time or the upgrades are delayed indefinitely, creating uncertainty for both the utility and the surrounding community. Anthropic’s move, by absorbing these costs upfront and directly, removes a primary source of community friction and regulatory delay.

Three Pillars of the New AI Infrastructure Deal

Anthropic’s announcement rests on three interconnected pillars, each representing a major trend shaping the future of tech infrastructure. We can read each one against the current landscape of AI growth, including competitor actions and energy reports:

Pillar 1: Direct Cost Absorption (The Consumer Shield)

By promising to "fully absorb grid upgrade costs" and "cover consumer electricity costs," Anthropic is essentially purchasing social license and certainty. In highly competitive areas already grappling with power shortages (like the U.S. Mid-Atlantic or parts of the EU), the ability to turn on a multi-megawatt data center without causing rolling blackouts or local rate hikes is a major advantage. The move may also preempt regulatory bodies from imposing punitive taxes or usage caps on large AI consumers.

For Businesses: This sets a new, high bar. If smaller competitors cannot afford this commitment, they may find themselves locked out of prime real estate zones where power capacity is constrained.

Pillar 2: Investing in Generation (From Consumer to Producer)

The promise to "invest in new power generation" is the most profound strategic shift. AI companies are transitioning from being passive *consumers* of grid power to active *developers* of energy assets. They are bypassing the slow pace of traditional utility development by directly financing solar farms and battery storage facilities, or even exploring next-generation solutions like Small Modular Reactors (SMRs). This improves the security of their energy supply and helps insulate them from fluctuating energy markets and political uncertainty. It reflects a trend in which major tech firms are becoming utility-scale power players in their own right.

Pillar 3: Operational Flexibility (Becoming a 'Flexible Grid Asset')

Capping consumption during peak hours is a strategy known as Demand Response (DR). During periods of extreme heat or cold, when the grid is most stressed and electricity prices spike, AI data centers throttle non-essential training tasks. They do not send power back to the grid; by drawing less, they free up capacity that the local grid can use to keep hospitals and homes online. This transforms the massive, always-on data center into a programmable, responsive part of the energy infrastructure.

This practice demonstrates a maturity in operational technology (OT) management, recognizing that AI workloads can often be shifted (or paused) without major immediate impact, unlike critical legacy industrial loads.
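The throttling logic described above can be illustrated with a minimal sketch. The `Job` class, the job names, the megawatt figures, and the `apply_demand_response` helper are all hypothetical, chosen only to show the idea of pausing deferrable workloads until the site fits under a peak-hour cap; a real DR program would be driven by utility signals and contract terms.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    power_mw: float
    deferrable: bool  # True for workloads that can be paused, e.g. training


def apply_demand_response(jobs, cap_mw):
    """Pause deferrable jobs, largest first, until total draw fits under cap_mw.

    Returns (running, paused). Non-deferrable jobs are never paused, so the
    final draw can still exceed the cap if critical load alone is above it.
    """
    running = list(jobs)
    paused = []
    total = sum(j.power_mw for j in running)
    # Visit the biggest deferrable consumers first so fewer jobs are interrupted.
    deferrable = sorted(
        (j for j in jobs if j.deferrable),
        key=lambda j: j.power_mw,
        reverse=True,
    )
    for job in deferrable:
        if total <= cap_mw:
            break
        running.remove(job)
        paused.append(job)
        total -= job.power_mw
    return running, paused


# Hypothetical workloads and a hypothetical 40 MW peak-hour cap.
jobs = [
    Job("inference-serving", 20.0, deferrable=False),
    Job("pretraining-run", 50.0, deferrable=True),
    Job("eval-sweep", 10.0, deferrable=True),
]
running, paused = apply_demand_response(jobs, cap_mw=40.0)
print([j.name for j in running], [j.name for j in paused])
```

In this example only the large training run is paused; user-facing inference keeps running, which mirrors the distinction the article draws between shiftable and critical loads.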

Implications for the Future of AI Development

This energy reckoning fundamentally reshapes the economics and geography of AI deployment. The future of large-scale AI will be dictated by energy availability, not just chip availability.

The Geography of Compute Shifts

We will see a bifurcation in where AI infrastructure is built:

- Energy-Rich Zones: Areas with abundant, often cheaper, renewable energy (e.g., regions with high geothermal, hydro, or vast, underutilized solar/wind potential) will become the new hubs for foundational model training.

- Energy-Constrained Zones: Dense urban areas and established tech corridors will mostly host inference services (the running of the models), because user-facing inference draws constant but comparatively modest power. To do so, they will rely heavily on localized, high-cost solutions like massive battery storage or sophisticated DR programs.

The days of simply placing a data center where fiber optics are cheapest are over. Energy cost and availability now dictate location strategy.

A New Benchmark for Corporate Responsibility

Anthropic has created a new standard for engaging with host communities. When a corporation’s need for computational power threatens local power reliability or drives up household bills, the social contract is broken. By absorbing these costs, Anthropic signals that future AI expansion must be inherently tied to community benefit or, at minimum, neutrality regarding baseline utility stability. This will inevitably lead to increased pressure on competitors to adopt similar transparent and cost-absorbing models, especially when faced with local permitting boards.

The Rise of the Energy-Aware AI Engineer

Operationally, this demands closer alignment between data scientists and energy engineers. Developers will need tools that allow them to schedule large training runs based not just on GPU utilization, but also on grid carbon intensity and peak load times. Efficiency will become a core feature, not an afterthought. Models that require 10% less compute will become considerably more valuable because they require roughly 10% less infrastructure investment and carry correspondingly less risk.
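As a rough illustration of what such a scheduling tool might do, the sketch below picks the start hour for a training run whose window has the lowest average carbon intensity while avoiding peak-load hours. The `best_start_hour` function, the forecast values, and the peak hours are all made up for the example; a real tool would pull forecasts from a grid operator or a carbon-intensity data provider.

```python
def best_start_hour(carbon_intensity, duration_h, peak_hours=frozenset()):
    """Return the start hour whose window has the lowest mean carbon intensity.

    carbon_intensity: hourly forecast values (gCO2/kWh); index = hour offset.
    Windows that overlap any hour in peak_hours are skipped.
    Returns None if no window qualifies.
    """
    best_hour, best_mean = None, float("inf")
    for start in range(len(carbon_intensity) - duration_h + 1):
        window = range(start, start + duration_h)
        if any(h in peak_hours for h in window):
            continue  # never schedule across a peak-load hour
        mean = sum(carbon_intensity[h] for h in window) / duration_h
        if mean < best_mean:
            best_hour, best_mean = start, mean
    return best_hour


# Hypothetical 12-hour forecast; hours 8-10 are assumed to be the grid peak.
forecast = [420, 400, 380, 300, 250, 240, 260, 350, 450, 500, 520, 480]
start = best_start_hour(forecast, duration_h=3, peak_hours={8, 9, 10})
print(start)
```

The exhaustive scan is deliberately simple; it is fine for a day or a week of hourly data, and it keeps the peak-hour constraint explicit rather than folding it into a cost function.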

Actionable Insights for Stakeholders

What should businesses, policymakers, and investors take away from this evolving energy landscape?

For Technology Leaders and CTOs:

Audit Your Power Portfolio: If your AI roadmap requires scaling up compute by 5x in the next three years, you must stress-test your planned data center locations against local grid capacity reports. Negotiate power contracts that include Demand Response incentives, or begin modeling the feasibility of self-generation (microgrids or dedicated renewable PPAs).
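A first-pass version of that stress test is simple arithmetic, sketched below. The site names, load figures, capacity figures, and the default PUE (power usage effectiveness) of 1.25 are illustrative assumptions, not data from any real facility or grid report.

```python
def projected_shortfall_mw(current_it_load_mw, scale_factor, grid_capacity_mw, pue=1.25):
    """Return by how many MW the scaled-up site would exceed available grid capacity.

    pue (power usage effectiveness) converts IT load into total facility load;
    the 1.25 default is an illustrative assumption.
    A result of 0.0 means the projected load fits.
    """
    projected_mw = current_it_load_mw * scale_factor * pue
    return max(0.0, projected_mw - grid_capacity_mw)


# Hypothetical sites: (current IT load in MW, available grid capacity in MW).
sites = {"site-a": (20.0, 150.0), "site-b": (20.0, 90.0)}
shortfalls = {
    name: projected_shortfall_mw(load, 5, capacity)
    for name, (load, capacity) in sites.items()
}
print(shortfalls)
```

With a 5x scale-up, the first hypothetical site still fits within its capacity while the second falls 35 MW short, which is the kind of gap that would need a new power contract, on-site generation, or a different location.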

For Investors and Infrastructure Analysts:

Follow the Electrons, Not Just the Chips: Investment opportunities are shifting upstream. Look closely at companies specializing in grid stabilization technology, long-duration battery storage, advanced transmission hardware, and regulatory navigation for utility interconnection. The companies that solve the energy puzzle will command massive premiums.

For Policymakers and Regulators:

Create Clear Frameworks: Current utility regulations are ill-equipped to handle private entities building dedicated power generation next to public grids. Policymakers must rapidly develop clear guidelines for interconnection, shared infrastructure ownership, and data transparency regarding AI energy consumption to prevent regional energy crises and ensure equitable access to power.

Conclusion: Compute Must Pay Its Way

The age of "hyperscale at any cost" for AI compute is drawing to a close. Anthropic’s promise is a watershed moment, confirming that the physical constraints of our power grids will now dictate the pace and geography of artificial intelligence advancement. The next decade of AI innovation will not just be measured in parameters and performance benchmarks, but in megawatts and infrastructure investment.

The future requires AI developers to mature from simply being the greatest *users* of electricity to being proactive *stewards* and *investors* in energy infrastructure. By acknowledging and paying the true cost of reliable power—including the cost to their neighbors—companies like Anthropic are paving the path for sustainable, large-scale AI deployment. The race is on to build not just smarter models, but more resilient power systems to run them.