The Energy Trade-Off: How AI Giants Are Paying for the Power That Fuels Tomorrow

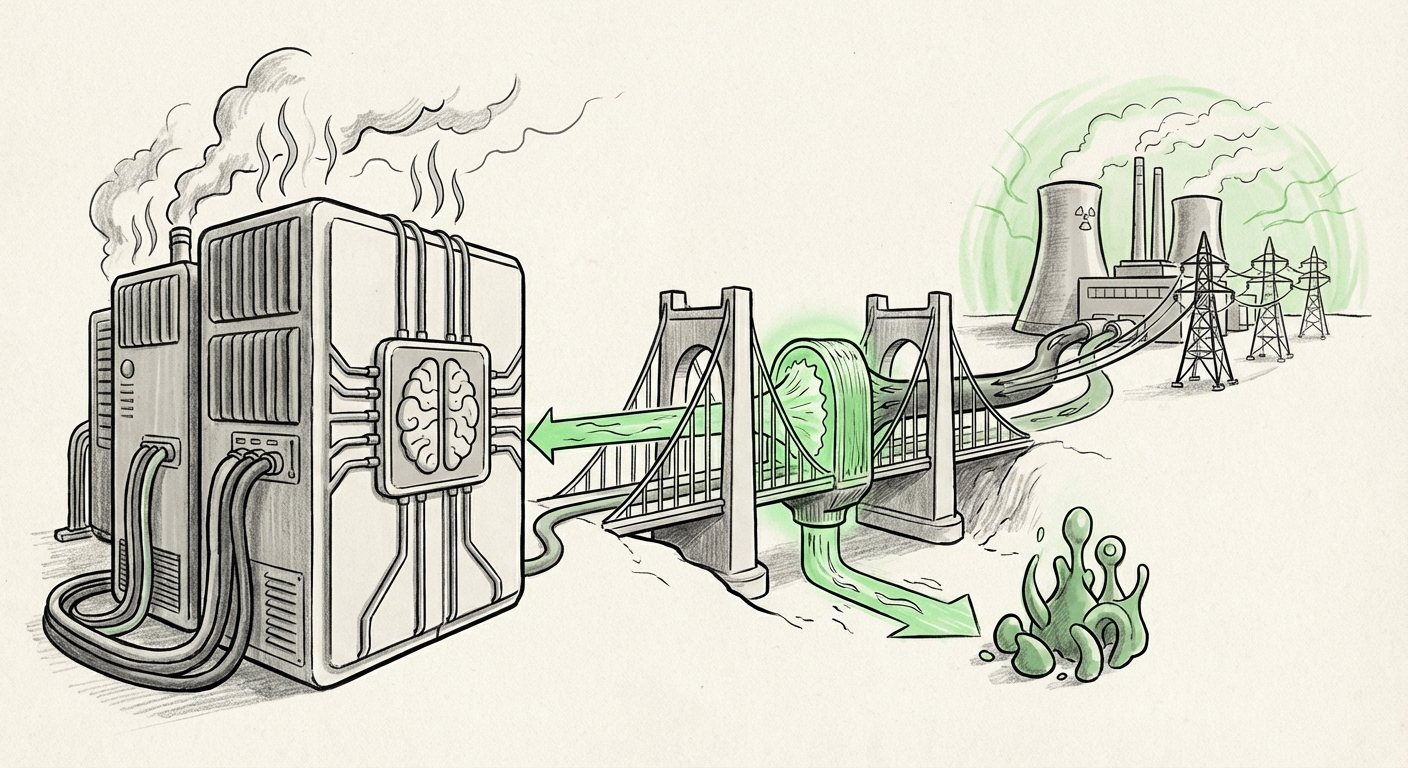

The recent announcement from Anthropic—that it will fully absorb consumer electricity costs and invest directly in new power generation to offset the demands of its expanding data centers—is far more than a simple corporate public relations move. It is a monumental signal defining the next era of Artificial Intelligence development. This move publicly acknowledges the staggering, unseen physical cost of building intelligence: massive, continuous energy consumption.

We are currently witnessing an explosion in AI capability, driven by the deployment of larger and more complex models. However, this computational hunger is translating directly into an unprecedented drain on global energy grids. Anthropic's decisive commitment forces us to confront the real-world logistics and financial burdens underpinning the AI revolution, shifting the focus from software innovation to infrastructure sustainability.

The Unseen Bill: AI Compute and the Energy Crisis

To appreciate the gravity of Anthropic’s pledge, we must first understand the scale of the problem. Training and running frontier AI models is incredibly power-intensive. While the public interacts with the polished interfaces, behind the screens are warehouses filled with specialized chips (GPUs and TPUs) running 24/7. This requires enormous amounts of electricity, both for computation and for cooling those chips.

Analyses tracking AI data center energy consumption projections for 2024 consistently point toward steep growth curves. These reports often estimate that, within the next few years, data centers globally could add demand equivalent to the electricity consumption of several mid-sized nations. This rapid escalation creates two clear bottlenecks:

- Grid Stability: Local grids, designed for predictable commercial and residential loads, cannot instantly handle the sudden, massive power demands of a new hyperscale AI facility.

- Cost Escalation: Energy costs are not static. As AI demand outstrips supply in certain regions, utility prices rise, directly impacting the cost of running these services.

When Anthropic promises to cover consumer electricity costs, it is essentially internalizing a liability that would otherwise be absorbed by the local utility or, worse, passed on to residents and small businesses. This is an admission that the cost of operating at the cutting edge now includes a mandatory "social license fee" paid directly back to the community.

The Cost of Locality: Why Grid Upgrades Matter

Anthropic specifically mentioned absorbing "grid upgrade costs." This is a critical detail. When a large data center proposes to locate near a city, it often requires utilities to spend millions—sometimes hundreds of millions—upgrading substations, laying new high-capacity transmission lines, or securing new power contracts. Traditionally, these costs are shared across the utility’s rate base, meaning every customer eventually helps pay for the infrastructure that benefits one large entity.
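To make the cost-sharing mechanics concrete, here is a minimal sketch of the arithmetic. It assumes straight-line recovery with no financing cost and an equal split across customers, which real rate design does not follow exactly. The function name, the `developer_share` parameter, and every dollar figure are hypothetical illustrations, not numbers from Anthropic or any utility.

```python
def annual_cost_per_customer(upgrade_cost_usd: float,
                             customers: int,
                             recovery_years: int,
                             developer_share: float = 0.0) -> float:
    """Annual cost each ratepayer carries for a grid upgrade.

    Simplifying assumptions: straight-line recovery, no financing cost,
    and an equal split across all customers. developer_share is the
    fraction (0.0 to 1.0) paid directly by the data center operator.
    """
    if customers <= 0 or recovery_years <= 0:
        raise ValueError("customers and recovery_years must be positive")
    if not 0.0 <= developer_share <= 1.0:
        raise ValueError("developer_share must be between 0 and 1")
    # Only the portion the developer does not pay lands on the rate base.
    socialized_cost = upgrade_cost_usd * (1.0 - developer_share)
    return socialized_cost / customers / recovery_years


# Hypothetical figures, chosen only to make the arithmetic easy to follow.
shared = annual_cost_per_customer(200_000_000, 500_000, 20)
absorbed = annual_cost_per_customer(200_000_000, 500_000, 20, developer_share=1.0)
print(f"Socialized across the rate base: ${shared:.2f} per customer per year")
print(f"Fully absorbed by the developer: ${absorbed:.2f} per customer per year")
```

Under these assumed numbers, a $200 million upgrade spread over 500,000 customers and 20 years works out to $20 per customer per year, which drops to zero when the developer covers the full amount.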

By proactively covering the costs its data center buildout imposes on local power grids, Anthropic is doing two things:

- Streamlining Development: They eliminate a major source of community friction and regulatory delays that plague many new data center projects globally.

- Brand Positioning: They position themselves as responsible builders, differentiating from peers who might rely on traditional cost-shifting methods that create public resentment.

We see this tension playing out globally. Reports detailing strain from new AI facilities in regions like Ireland show the intense political and infrastructural pressure these developments create. Anthropic’s approach is a calculated financial and public relations investment designed to secure necessary real estate and regulatory approvals faster.

Context from Competitors: The Race for ESG Leadership

This move by Anthropic must be viewed within the competitive landscape. The biggest players—Google, Microsoft, and Amazon—have long been making high-profile sustainability pledges, such as investing heavily in Power Purchase Agreements (PPAs) for renewable energy or setting aggressive carbon-negative goals. Microsoft, for example, has detailed commitments to be carbon negative and water positive by 2030, an effort heavily focused on managing its massive data center footprint [https://news.microsoft.com/sustainability/].

For a company like Anthropic, which competes fiercely for top talent and institutional trust, demonstrating a proactive, tangible commitment to infrastructure health is vital. If the AI future requires immense energy, the companies that can guarantee that energy access *sustainably and without burdening the public* will win the long-term race for capital and regulatory goodwill.

The Technological Arms Race: Efficiency as the New Frontier

The second part of Anthropic’s promise—capping energy consumption during peak hours and investing in new power generation—forces a technological pivot. Simply paying the bill isn't enough; they must also use less power when the grid is stressed. This leads directly to hardware and operational innovations.

The future efficiency of AI depends on moving beyond conventional cooling methods. The industry is rapidly exploring and adopting advanced cooling techniques, most notably liquid cooling. Traditional air cooling struggles to handle the intense, localized heat generated by the newest, densest AI accelerators. Liquid cooling, which can dramatically improve thermal management, is becoming essential for maximizing computation density while minimizing runaway energy use.

By setting internal consumption caps, Anthropic incentivizes its engineers to prioritize efficiency innovations—whether through smarter workload scheduling that avoids peak grid times or by deploying cutting-edge server hardware capable of higher computation per watt.
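The idea of workload scheduling that avoids peak grid times can be sketched in a few lines. This is an illustrative toy, not a description of how Anthropic or any operator actually schedules jobs. The peak window of 17:00 to 20:59, the `Job` type, and the function names are all assumptions made for the example.

```python
from dataclasses import dataclass

# Assumed peak window (17:00 through 20:59); real windows vary by grid and season.
PEAK_HOURS = frozenset(range(17, 21))


@dataclass(frozen=True)
class Job:
    name: str
    duration_h: int   # whole hours the job occupies
    deferrable: bool  # batch training can wait; live inference usually cannot


def overlaps_peak(start_h: int, duration_h: int, peak=PEAK_HOURS) -> bool:
    """True if any hour the job occupies falls inside the peak window."""
    return any((start_h + i) % 24 in peak for i in range(duration_h))


def off_peak_start(job: Job, requested_h: int, peak=PEAK_HOURS) -> int:
    """Return the earliest start hour at or after requested_h that avoids the peak.

    Non-deferrable jobs keep their requested hour. If no slot in the next
    24 hours avoids the peak, the requested hour is returned unchanged.
    """
    if not job.deferrable:
        return requested_h
    for delay in range(24):
        start = (requested_h + delay) % 24
        if not overlaps_peak(start, job.duration_h, peak):
            return start
    return requested_h


training = Job("batch-training", duration_h=3, deferrable=True)
serving = Job("live-inference", duration_h=1, deferrable=False)
print(off_peak_start(training, requested_h=16))  # deferred until after the peak
print(off_peak_start(serving, requested_h=18))   # runs as requested
```

A production scheduler would also weigh deadlines, electricity prices, and hardware availability. The point of the sketch is only that deferrable work, such as batch training, is the natural lever for staying under a peak-hour cap.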

Future Implications: Operationalizing the AI Economy

What does Anthropic’s energy strategy mean for the broader technological landscape?

1. The Shift from CAPEX to OPEX Integration

For years, the primary concern for AI companies was the Capital Expenditure (CAPEX) of buying the chips. Now, the Operational Expenditure (OPEX) of running those chips—primarily energy—is becoming the dominant long-term financial constraint. Anthropic is signaling that OPEX management now requires direct investment in energy infrastructure, blurring the lines between tech company and utility provider.

2. Regulation by Preemption

Governments and municipalities are growing wary of unchecked data center expansion. Anthropic's voluntary cost absorption is a form of regulatory preemption. By solving the consumer cost issue upfront, they reduce the likelihood of heavy-handed regulatory intervention later that could slow down their deployment timelines.

3. A New Barrier to Entry

This move sets a new, expensive baseline for what it means to deploy *frontier* AI. Smaller startups or organizations without access to billions in funding might struggle to meet these environmental and financial requirements. The capital required to run the most powerful models just got significantly higher, potentially consolidating power among the well-capitalized foundational model builders.

Actionable Insights for the AI Ecosystem

For stakeholders across the ecosystem, Anthropic’s commitment translates into clear directives:

For Infrastructure Investors: Look beyond the PPA deals. The real value is in companies that control the physical supply chain for energy—be it advanced geothermal, modular nuclear, or novel grid stabilization technology. Infrastructure supporting AI compute is the new high-growth sector.

For AI Developers: Energy efficiency is no longer just a sustainability goal; it is a core engineering metric directly tied to profitability and deployment speed. Optimization teams focused on reducing inference costs (the energy needed to answer a single user query) will become invaluable.

For Policymakers: The voluntary actions taken by AI leaders highlight the need for streamlined permitting processes for renewable energy projects specifically earmarked for AI load growth. If AI companies are willing to pay for the infrastructure, bureaucracy should not be the next bottleneck.
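The per-query inference cost mentioned for AI developers above can be estimated with simple arithmetic: average power draw, times the time spent on a batch, divided by the number of queries in that batch. The sketch below is a back-of-the-envelope model only. The wattage, timing, batch size, and electricity price are hypothetical, and cooling or other facility overhead is deliberately left out.

```python
def energy_per_query_wh(avg_power_w: float,
                        seconds_per_batch: float,
                        queries_per_batch: int) -> float:
    """Energy in watt-hours attributed to one query served in a batch."""
    if queries_per_batch <= 0:
        raise ValueError("queries_per_batch must be positive")
    # watts * seconds = joules; 3600 joules = 1 watt-hour
    return avg_power_w * seconds_per_batch / 3600.0 / queries_per_batch


def cost_per_million_queries_usd(energy_wh: float, price_per_kwh_usd: float) -> float:
    """Electricity cost of one million queries at a flat price per kWh."""
    return energy_wh / 1000.0 * price_per_kwh_usd * 1_000_000


# Hypothetical server: 720 W average draw, 5 seconds per batch.
unbatched = energy_per_query_wh(720, 5, queries_per_batch=1)
batched = energy_per_query_wh(720, 5, queries_per_batch=8)
for label, wh in (("1 query per batch", unbatched), ("8 queries per batch", batched)):
    cost = cost_per_million_queries_usd(wh, price_per_kwh_usd=0.10)
    print(f"{label}: {wh:.3f} Wh per query, ${cost:.2f} per million queries")
```

Even in this toy model, serving eight queries per batch instead of one cuts the energy attributed to each query by a factor of eight, which is why batching and similar optimizations translate so directly into operating cost.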

The age of cheap, limitless compute is definitively over. The physical limitations of energy are now shaping the strategic roadmap of Artificial Intelligence. Anthropic’s financial pledge is the market’s clearest indicator yet that the next breakthrough in AI might not be a better algorithm, but a cheaper kilowatt-hour.