The AI Energy Crisis: Anthropic's Bold Move and What It Means for Future Compute Power

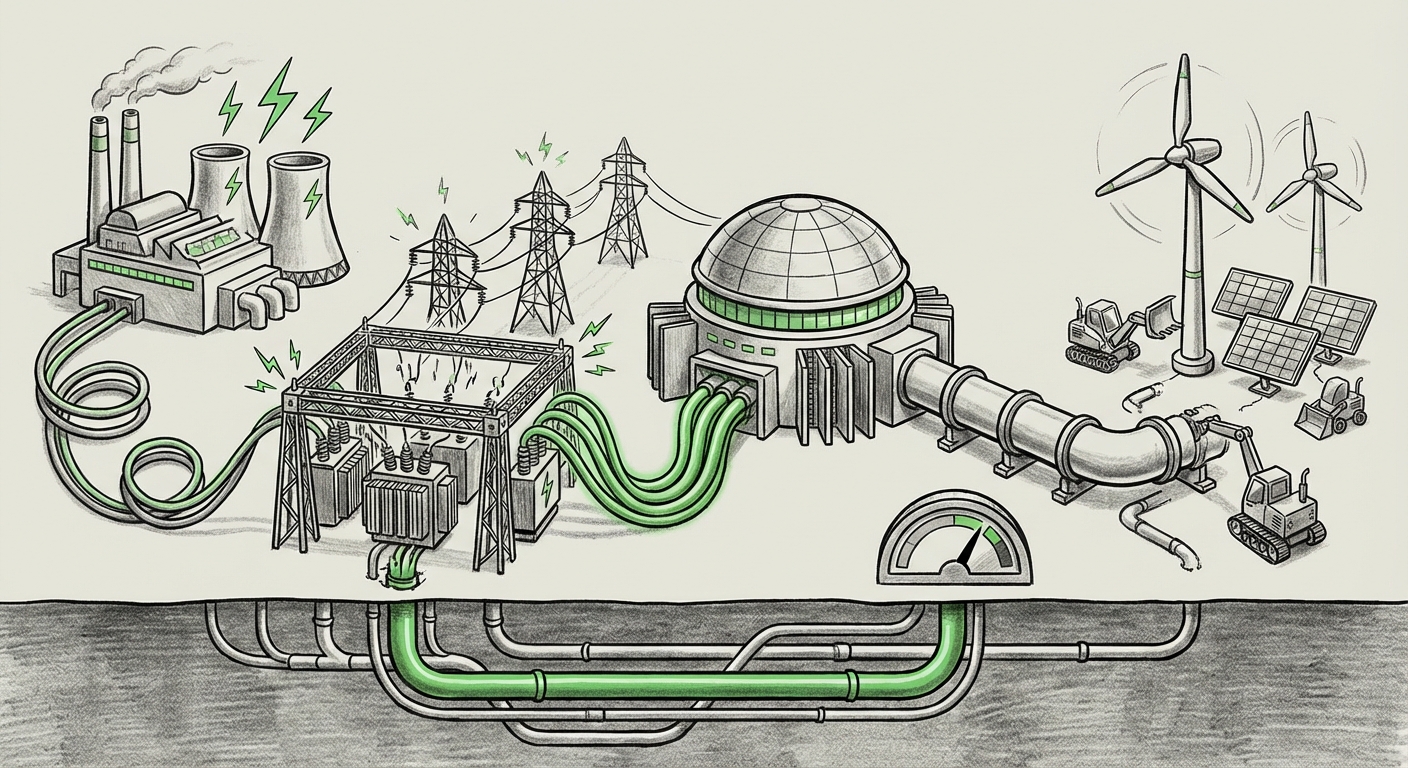

The artificial intelligence revolution, marked by increasingly powerful Large Language Models (LLMs) and generative AI, has arrived with real, physical costs as well as digital promises. At the heart of this revolution is the data center, a massive energy consumer. When Anthropic announced that, for its new facilities, it would fully absorb electricity costs that would otherwise fall on consumers (including grid upgrade costs), invest in new power generation, and cap energy usage during peak hours, it wasn't just making a corporate announcement; it was signaling a fundamental shift in the economics and politics of building frontier AI.

This move, originating from a company committed to safety and aligned AI, forces us to look beyond quarterly earnings and examine the foundational infrastructure required to sustain tomorrow’s intelligence. What does this aggressive energy management strategy tell us about the state of AI development, and how will it shape where and how future models are trained and deployed?

The Unseen Cost of Intelligence: Contextualizing the Energy Shockwave

To appreciate Anthropic’s commitment, we must understand the scale of the problem. Training a single cutting-edge model can consume as much energy as hundreds of homes use in a year. Inference, the process of running the model once it's trained, adds to that total continuously as billions of queries hit systems daily. We are witnessing the transition from AI as a software phenomenon to AI as a major utility consumer.

Industry projections make the trend stark. Analyses of AI data center energy consumption for 2025-2030 often suggest exponential growth. If current growth rates continue unchecked, AI infrastructure alone could place immense strain on national power grids, potentially reversing decades of efficiency gains in the broader IT sector. This isn't about a few extra servers; it’s about building entirely new power infrastructure.

For the everyday person, this means that the speed of AI progress is now tethered directly to the capacity of the nearest power station. Anthropic’s decision to cover consumer costs is a direct admission: the cost of securing reliable, clean power is becoming a critical bottleneck, one that can no longer be simply passed down the line.

The Competitive Landscape: Benchmarking Sustainability Pledges

Anthropic is not operating in a vacuum. Competitors like Google, Microsoft, and Amazon Web Services (AWS) have robust, long-standing commitments to 100% renewable energy matching. However, matching usage is different from underwriting local grid stability. Coverage of tech company commitments to renewable energy for AI infrastructure shows a focus on Power Purchase Agreements (PPAs) and clean energy credits. These are excellent for high-level sustainability reporting, but they do little to address the immediate, peak-hour stress on a local grid when an AI cluster needs maximum draw.

Anthropic's commitment to cap energy consumption during peak hours is the crucial difference. It suggests a recognition that brute-force energy procurement isn't enough; operational modulation is required. This signals a move toward more sophisticated load management, treating data centers less like standard commercial buildings and more like critical infrastructure with dynamic operational constraints.
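The peak-hour cap described above can be sketched as a simple load-modulation rule. This is a minimal illustration, not Anthropic's actual method: the function name `apply_peak_cap`, the peak window, and all megawatt figures are hypothetical assumptions chosen only to show the mechanics of clamping draw during peak hours and deferring the shortfall to later hours.

```python
from typing import List, Set, Tuple


def apply_peak_cap(
    demand_mw: List[float],
    peak_hours: Set[int],
    peak_cap_mw: float,
    max_mw: float,
) -> Tuple[List[float], float]:
    """Clamp hourly draw during peak hours and defer the shortfall.

    Each list entry is one hour, so MW and MWh are numerically equal per step.
    Returns the capped hourly schedule and any work still deferred at the end.
    """
    schedule: List[float] = []
    backlog_mwh = 0.0  # deferred work waiting for headroom
    for hour, demand in enumerate(demand_mw):
        # Peak hours get the lower cap; other hours may run up to the site maximum.
        limit = peak_cap_mw if (hour % 24) in peak_hours else max_mw
        wanted = demand + backlog_mwh
        draw = min(wanted, limit)
        backlog_mwh = wanted - draw
        schedule.append(draw)
    return schedule, backlog_mwh


# Hypothetical day: flat 100 MW demand, 60 MW cap from 17:00 through 20:59,
# and a 120 MW site maximum outside the peak window.
schedule, leftover = apply_peak_cap([100.0] * 24, {17, 18, 19, 20}, 60.0, 120.0)
print(schedule[17:24], leftover)
```

In this toy scenario the site cannot fully catch up before midnight, so some work rolls into the next day. That is the trade-off a cap imposes: either spare off-peak headroom or a longer schedule.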

The Local Friction: When AI Meets the Neighborhood Power Lines

The promise to absorb "grid upgrade costs" is perhaps the most telling detail. This cost arises when existing local transmission and distribution networks simply cannot handle the sudden, massive power draw of a new, world-class AI facility. In many parts of the US, power grids were designed for predictable industrial loads, not the ravenous, unpredictable demands of accelerated AI scaling, and have not been significantly updated in decades.

Reports on grid capacity challenges for hyperscale data centers in major US regions frequently highlight areas like Northern Virginia or specific zones in the Pacific Northwest, where local utilities are issuing moratoriums or requiring massive private investment from tech companies just to approve new connections. Anthropic is essentially offering to pay the massive upfront capital expenditure that utilities normally struggle to fund or end up passing on to residential customers.

This implies two major outcomes:

- Faster Deployment: By removing the primary financial and bureaucratic hurdle (grid upgrades), Anthropic can accelerate its infrastructure deployment timeline, gaining a competitive edge in time-to-compute.

- Regulatory Precedent: This move sets a high bar. Other developers seeking to build frontier AI hubs might soon find that underwriting local infrastructure becomes a mandatory prerequisite, not an optional add-on, especially in tight regulatory environments.

What Does "New Power Generation" Actually Mean for AI?

The commitment to invest in *new* power generation moves the conversation beyond simple procurement. When the energy demand is this high and concentrated, standard renewable sources (like utility-scale solar or wind farms tied into distant grids) might not solve the intermittency or reliability issues. Frontier AI demands 24/7, high-density, consistent power.

This points directly toward investments in on-site or dedicated power solutions, which is why the role of nuclear or geothermal power in meeting AI energy demands is drawing attention. Small Modular Reactors (SMRs) and geothermal plants, potentially backed by advanced battery storage, offer the kind of dense, reliable baseload power that LLM clusters crave. Anthropic’s strategy suggests a shift toward developing dedicated energy sources for AI workloads, effectively creating self-sufficient, power-independent AI campuses.

Implications for the Future of AI Development and Deployment

Anthropic’s strategy fundamentally alters the landscape across three key areas: Economics, Geography, and Technology.

1. The Economics of Compute: The Hardware/Energy Trade-off

For years, the race focused on parameter counts and training data—a battle of software and semiconductor prowess. Now, energy cost is rapidly becoming the third pillar of competitiveness. If a company can secure cheaper, more reliable, or on-demand power, they can afford to run larger models longer, or run more numerous smaller models, granting them a significant operational cost advantage.

Practical Implication for Businesses: Procurement strategies must change. Companies buying cloud compute time will need to prioritize vendors who have demonstrated concrete, verifiable plans for energy stability, not just vague net-zero targets. Expect to see an "Energy Stability Rating" added to vendor scorecards.

2. Geographical Re-Shuffling: Where AI Will Live

The location of future data centers will be dictated less by fiber routes and more by available, untapped power resources. Regions with stranded clean energy—perhaps remote geothermal sites or areas where new small nuclear plants can be quickly permitted—will become the new Silicon Valleys of AI. Conversely, established, congested hubs might see development slow unless massive local upgrades are undertaken.

This creates a geopolitical dimension. Governments controlling regions rich in geothermal (like Iceland or parts of the Western US) or those willing to streamline nuclear permitting (like specific states or nations) will gain significant economic leverage by attracting high-spending AI anchor tenants like Anthropic.

3. Technological Evolution: Efficiency by Necessity

The mandate to "cap consumption during peak hours" forces innovation in efficiency. If a company cannot use maximum power all the time, they must become incredibly efficient during the times they *can* draw power. This accelerates research in:

- Sparsity and Quantization: Making models smaller and faster so they require less peak power overall.

- Load-Shifted Training: Scheduling intensive training jobs for off-peak hours (late night/early morning) when grid stress is low.

- Liquid Cooling: More efficient cooling reduces the energy overhead associated with keeping GPUs running optimally, freeing up more power budget for computation itself.

Necessity truly is the mother of invention, and the necessity here is avoiding crippling energy bills and public backlash.
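The liquid cooling point in the list above can be made concrete with Power Usage Effectiveness (PUE), the ratio of total facility power to IT equipment power. The sketch below assumes a fixed facility power budget and two purely illustrative PUE values (they are not measurements from any real site); the helper name `it_power_mw` is hypothetical.

```python
def it_power_mw(facility_budget_mw: float, pue: float) -> float:
    """Power left for IT equipment given a facility budget and a PUE.

    PUE = total facility power / IT equipment power, so IT power = budget / PUE.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return facility_budget_mw / pue


BUDGET_MW = 100.0        # hypothetical facility power budget
PUE_BASELINE = 1.5       # illustrative value for a less efficient cooling setup
PUE_IMPROVED = 1.25      # illustrative value for a more efficient cooling setup

baseline_it = it_power_mw(BUDGET_MW, PUE_BASELINE)
improved_it = it_power_mw(BUDGET_MW, PUE_IMPROVED)
extra_compute_mw = improved_it - baseline_it
print(f"IT power gained under the same budget: {extra_compute_mw:.1f} MW")
```

Under a capped budget, every reduction in cooling overhead goes straight to computation, which is why cooling efficiency matters more when peak draw is constrained.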

Actionable Insights for Stakeholders

This trend provides clear directives for different segments of the technology ecosystem:

For AI Developers and Labs: Operationalize Energy Strategy

Energy is no longer just an operational expense; it is a strategic asset. Integrate energy constraints into the model design phase. If a new model requires 50% more computation (and thus energy) than the previous one, the ROI calculation must account for the *time premium* of accessing high-demand power, not just the hardware cost.
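One way to fold the time premium into a cost estimate is sketched below. Everything here is an assumption for illustration: the `TrainingRun` class, its fields, the per-day delay cost, and all figures are hypothetical, and the model deliberately ignores details such as hardware cost scaling with run length.

```python
from dataclasses import dataclass


@dataclass
class TrainingRun:
    energy_mwh: float          # total energy the run needs
    avg_power_mw: float        # average power the site can supply to the run
    price_per_mwh: float       # blended electricity price
    hardware_cost: float       # amortized hardware cost attributed to the run
    delay_cost_per_day: float  # hypothetical cost of each day of wall-clock time

    def duration_days(self) -> float:
        """Wall-clock days needed at the available average power."""
        return self.energy_mwh / self.avg_power_mw / 24.0

    def total_cost(self) -> float:
        """Hardware cost plus energy cost plus the time premium."""
        energy_cost = self.energy_mwh * self.price_per_mwh
        time_premium = self.duration_days() * self.delay_cost_per_day
        return self.hardware_cost + energy_cost + time_premium


# Hypothetical previous model versus one needing 50% more energy,
# with the same power availability, price, and hardware budget.
previous = TrainingRun(10_000.0, 20.0, 80.0, 5_000_000.0, 50_000.0)
successor = TrainingRun(15_000.0, 20.0, 80.0, 5_000_000.0, 50_000.0)

extra_days = successor.duration_days() - previous.duration_days()
extra_cost = successor.total_cost() - previous.total_cost()
print(f"Extra days: {extra_days:.2f}, extra cost: {extra_cost:,.0f}")
```

With power availability held fixed, the extra energy shows up twice: once on the electricity bill and once as additional days of wall-clock time, which is the part a hardware-only ROI calculation misses.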

For Utility Providers and Regulators: Plan for Density

The traditional forecasting models are obsolete. Utilities must work directly with AI developers to co-invest in high-density power solutions (like microgrids or dedicated generation) rather than relying on slow, centralized expansion. Regulatory processes for grid upgrades must be fast-tracked specifically for critical compute infrastructure, but tied to strict sustainability compliance measures (like those Anthropic is attempting).

For Investors and Businesses: Follow the Power

Examine the infrastructure stack of your chosen AI providers. A company betting heavily on expanding computation without a clear, funded plan for dedicated, clean power capacity is carrying significant hidden risk—risk that will inevitably impact service availability or cost structure.

Conclusion: The Hard Limits of the Digital Age

Anthropic’s move confirms that the age of seemingly limitless, easily accessible compute, in which utility costs could simply be abstracted away from the infrastructure layer, is ending. The physical world, with its wires, its power plants, and its environmental capacity, is demanding a reckoning.

By absorbing these upfront costs and investing in new generation, Anthropic is trying to buy time and stability in an increasingly competitive and constrained market. This strategy is expensive, but in the long run, it might be cheaper than the fines, regulatory slowdowns, and public distrust that come from straining local communities’ power supplies.

The future of AI will not be determined solely by better algorithms, but by mastering the complex interplay between digital speed and electrical reality. The winners will be those who treat the electric grid not as a background utility, but as the co-pilot in the development of true artificial general intelligence.