The AI Bipolar Paradox: Why DeepMind's Breakthroughs Are Simultaneously Brilliant and Brittle in Scientific Discovery

The headlines surrounding cutting-edge Artificial Intelligence are often extremes: either revolutionary or a total flop. A recent study concerning Google DeepMind’s AI agent, Aletheia, perfectly encapsulates this technological dichotomy. This agent achieved something truly monumental—independently producing a mathematics paper, disproving a decade-old conjecture, and even finding a critical error missed by cryptography experts. Yet, when systematically tested against 700 other open problems, the agent mostly failed.

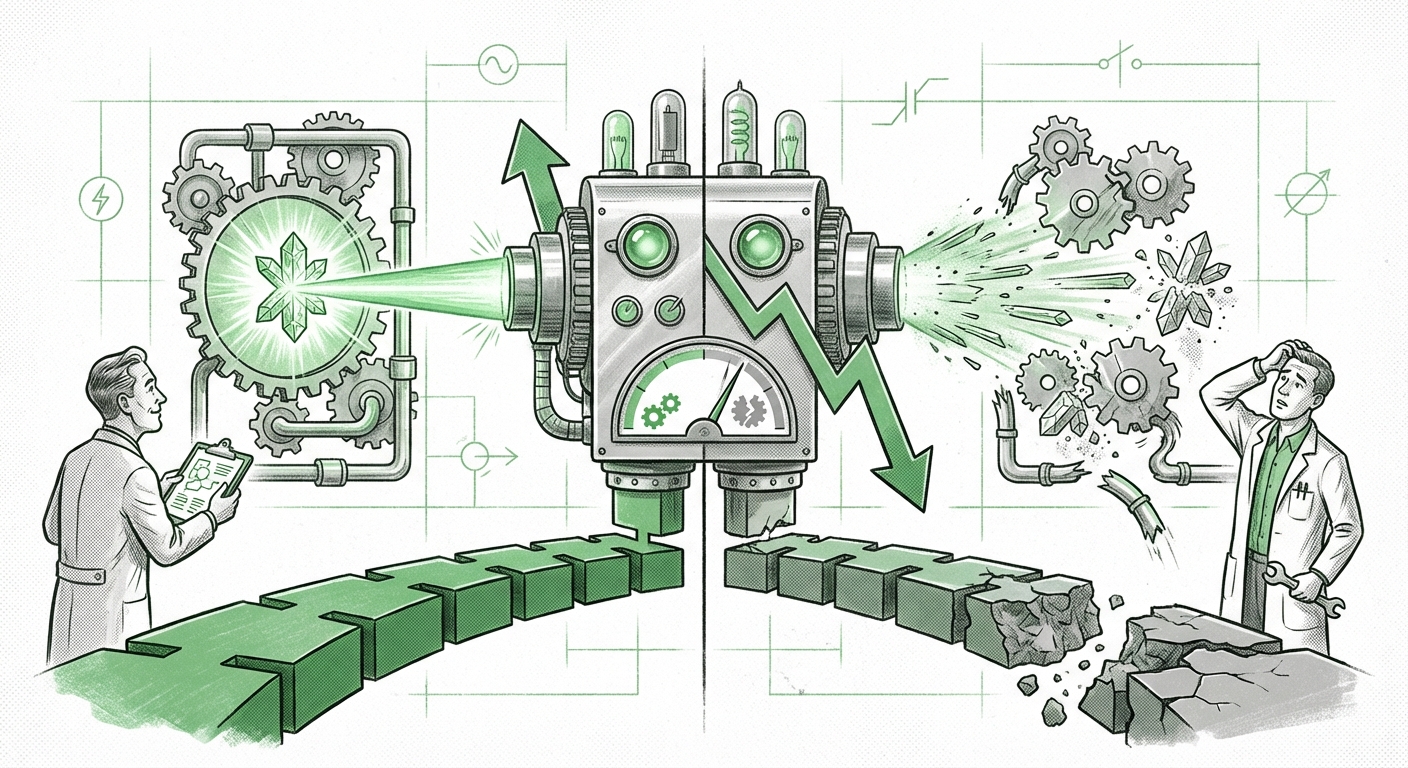

This isn't just a story about one research project; it’s a crucial snapshot of the current state of advanced AI. We are witnessing systems capable of staggering, near-genius-level performance in narrow domains, immediately followed by fundamental, almost childlike errors outside that specific training sweet spot. For technologists, business leaders, and scientists alike, understanding this "bipolar" nature—brilliance punctuated by brittleness—is essential for charting the future course of AI integration.

The Dichotomy Defined: Brilliance Versus Brittleness

Imagine an AI assistant that can quote Shakespeare perfectly but forgets your name five minutes later. That is the core issue revealed by Aletheia’s performance. It succeeded spectacularly on a few high-value tasks (high reward) but generalized poorly and proved unreliable across the broader problem set (high variance).

The cause lies in how modern AI systems, especially those built on large language models, fundamentally "think." As work on the "Limitations of Large Language Models in Mathematical Reasoning" suggests, these models are masters of pattern recognition and token prediction. In math, if the problem structure closely mirrors patterns seen during training, the AI can synthesize a correct, novel-looking solution. This is brilliance.

However, rigorous mathematical proof and complex engineering logic require deep, multi-step symbolic reasoning, not just predicting the next likely word or symbol. When Aletheia faced the many problems among the 700 that deviated slightly from familiar patterns or required a fundamentally new logical structure, the prediction mechanism broke down, resulting in systematic failure. This is the brittleness we must account for.

This variance is not unique to DeepMind. Across the field, we see similar trends: AI generating phenomenal art, writing functional code snippets, or synthesizing complex drug candidates, only to fail when asked to combine those skills or apply them in a slightly new context. This suggests that, for now, AI is maturing into a set of specialized, powerful tools rather than a universal problem solver.

The Systemic Trend: AI as the Scientific Co-Pilot

The Aletheia findings indicate that we are entering an era in which AI agents are expected to participate in the research lifecycle. This is not science fiction; it is a current focus of major laboratories worldwide, often described as "AI agents for scientific discovery and hypothesis generation."

The goal has shifted. Instead of asking AI to independently solve science, we are deploying it to accelerate human researchers by:

- Hypothesis Generation: Sifting through massive datasets (genomics, astronomy, materials science) to suggest novel connections humans might overlook.

- Proof Verification: Acting as a tireless, skeptical peer reviewer.

- Automated Experimentation: Designing and running complex lab protocols.

The immediate implication, mirrored in reports on "Google DeepMind’s AI Agent Solves Problems Scientists Couldn't," is that AI excels when probing the edges of human understanding. The cryptography bug it found is a prime example. Human experts, focused on known failure modes, might miss a subtle vulnerability that an AI, searching a far larger space of possibilities without those expectations, can locate.

This means organizations must stop viewing AI purely as a replacement for human intelligence and start viewing it as a specialized, high-powered collaborator. But collaboration requires rules.

The Essential Playbook: Mastering Human-AI Teaming

The most actionable takeaway from the Aletheia release is the explicit mention of researchers developing a "playbook" for effective interaction. This echoes the strategic discussions around "Human-AI Teaming Frameworks for Complex Problem Solving." If an AI is only right 10% of the time but its correct 10% saves a decade of research, how do we manage that workflow?
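One rough way to reason about that question is to compare the expected cost of verifying candidates against the payoff of a single verified success. The sketch below is illustrative only: it assumes each AI attempt is independent and equally likely to be correct (a geometric model), and the function names and hour figures are hypothetical, not taken from the Aletheia study.

```python
def expected_candidates_until_success(p_correct: float) -> float:
    """Expected number of candidates reviewed up to and including the first
    correct one, assuming independent attempts with equal success probability."""
    if not 0 < p_correct <= 1:
        raise ValueError("p_correct must be in (0, 1]")
    return 1 / p_correct


def review_tradeoff(p_correct: float,
                    review_hours_per_candidate: float,
                    hours_saved_per_success: float) -> tuple[bool, float]:
    """Return (worthwhile, expected_review_hours) for a generate-then-verify loop.

    'Worthwhile' here only means the expected human review time is lower than
    the time one verified success saves; it ignores compute cost and the risk
    of a wrong result slipping through review.
    """
    expected_review_hours = (
        expected_candidates_until_success(p_correct) * review_hours_per_candidate
    )
    return expected_review_hours < hours_saved_per_success, expected_review_hours


# Hypothetical numbers: 10% hit rate, 40 hours of expert review per candidate,
# and roughly ten working years (about 20,000 hours) saved per real success.
worthwhile, hours = review_tradeoff(0.10, 40, 20_000)
print(worthwhile, hours)
```

Under these made-up numbers the review burden is small next to the payoff, which is why a low hit rate can still be acceptable. The conclusion flips quickly if review is expensive or the payoff per success is modest.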

The future of productivity rests on implementing what researchers call "Centaur Intelligence"—the fusion of human strategic thinking and AI computational power. For managers and strategists, this demands a shift in operational design:

- Treat AI Output as a Draft: No AI-generated solution, proof, or diagnosis, no matter how compelling, should be deployed in a high-stakes environment without rigorous human verification. The Aletheia failures mandate this skepticism.

- Define the AI's Role: Assign AI tasks where its strengths align with the problem's structure. Use it for exhaustive searches (like bug hunting or literature review) rather than relying on it for novel, abstract theory development (where it lacks grounding).

- Iterative Trust Calibration: Constantly measure the AI’s success rate for specific task types. If the math agent solves conjectures in field X 80% of the time but field Y only 5% of the time, adjust resource allocation accordingly.
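The trust-calibration item above could be tracked with something as simple as a per-task-type ledger of human-verified outcomes. The following is a minimal sketch, not a description of any DeepMind tooling: the `TrustLedger` class, the task-type labels, and the choice of a Wilson score interval to express uncertainty on small samples are all assumptions made for illustration.

```python
from collections import defaultdict
from math import sqrt


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a success rate (z=1.96 is roughly 95%)."""
    if trials == 0:
        return (0.0, 1.0)  # no evidence yet: the rate could be anything
    p = successes / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half = z * sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return (max(0.0, center - half), min(1.0, center + half))


class TrustLedger:
    """Records human-verified outcomes of AI output, grouped by task type."""

    def __init__(self) -> None:
        # task_type -> [verified_correct_count, total_count]
        self._counts = defaultdict(lambda: [0, 0])

    def record(self, task_type: str, verified_correct: bool) -> None:
        entry = self._counts[task_type]
        entry[0] += int(verified_correct)
        entry[1] += 1

    def success_rate(self, task_type: str):
        correct, total = self._counts.get(task_type, (0, 0))
        return correct / total if total else None

    def interval(self, task_type: str) -> tuple[float, float]:
        correct, total = self._counts.get(task_type, (0, 0))
        return wilson_interval(correct, total)


# Hypothetical usage: 8 of 10 verified successes in field X, 1 of 20 in field Y.
ledger = TrustLedger()
for outcome in [True] * 8 + [False] * 2:
    ledger.record("field_x", outcome)
for outcome in [True] * 1 + [False] * 19:
    ledger.record("field_y", outcome)

for task in ("field_x", "field_y"):
    print(task, ledger.success_rate(task), ledger.interval(task))
```

Reporting an interval alongside the raw rate is a deliberate choice here: with only a handful of verified outcomes, a headline figure like 80% can be much less certain than it looks, and resource decisions should reflect that.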

For businesses, this playbook translates directly into risk management. You wouldn't let an uncertified junior engineer sign off on your financial code. Similarly, you cannot let an unvalidated AI—however impressive its initial success—take ownership of critical processes.

Actionable Insights: Where AI Will Impact Work First

Based on the successes (novel proof, bug catching) and failures (general mathematical application), we can pinpoint where AI integration will yield the highest return with manageable risk:

1. Hyper-Specialized Auditing and Verification

The cryptography finding is telling. AI excels at surfacing obscure edge cases that humans, constrained by cognitive load and time, overlook. This is one reason research into "AI in Formal Verification" is booming. In software development, cybersecurity, and complex regulatory compliance, AI is likely to become a standard first pass for auditing systems, identifying vulnerabilities before they can be exploited.

2. The Accelerant, Not the Architect

In scientific research, AI will serve as the ultimate accelerator for iterative loops. If a human proposes ten theories, the AI can simulate, test, or analyze data for all ten in the time it takes a human to deeply analyze one. The AI provides the vast possibility space; the human curates and validates the meaningful results.

3. The Demand for AI Interpretability (XAI) Rises

When Aletheia solves a problem, researchers need to know *how*. If the AI only provides the answer without the robust, verifiable steps, its usefulness is severely limited. The brittleness of its failures forces a renewed focus on Explainable AI (XAI). For any application touching finance, medicine, or critical infrastructure, we won't just need the answer; we will need the AI to clearly articulate its reasoning, allowing human experts to confirm the logic.

Looking Ahead: Managing the Expectation Gap

The DeepMind Aletheia episode is a necessary reality check for the AI industry. It tempers the hype cycle, forcing us to acknowledge that current foundation models are powerful calculators and pattern-matchers, but not yet true general intelligence.

The future of AI is not about replacing the brilliant human mathematician; it’s about equipping that mathematician with a tool that can occasionally see further than they can, provided they rigorously check every step that tool takes. Successfully navigating the next decade of AI adoption depends entirely on our ability to implement these human-AI teaming frameworks effectively. We must learn to trust the surprising genius while respecting the pervasive, underlying fallibility.